Contents Overview

- Introduction: Emergence of Intelligent GUI Agents

- Core Design and Functionalities

- Training Methodology and Data Generation

- Performance Evaluation and Benchmarking

- Practical Applications and Deployment

- Final Thoughts: The Future of GUI Automation

Introduction: Emergence of Intelligent GUI Agents

Graphical user interfaces (GUIs) have become the cornerstone of modern computing, spanning smartphones, desktops, and web platforms. Historically, automating interactions within these environments relied heavily on fragile scripts or rigid rule-based systems, which often failed to adapt to the complexity and variability of real-world interfaces. The advent of vision-language models has opened new avenues, enabling agents to interpret screen content, reason about user tasks, and perform actions with human-like understanding. Despite this progress, many existing solutions depend on proprietary, opaque models or face challenges in generalizing across platforms and maintaining reasoning accuracy.

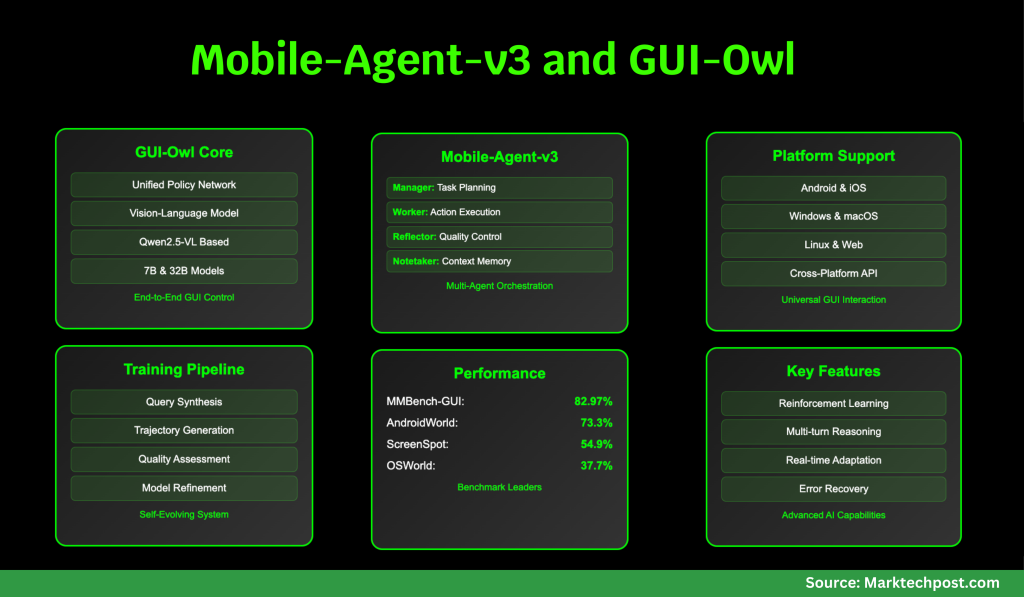

To address these limitations, researchers at Alibaba Qwen developed GUI-Owl and Mobile-Agent-v3, two frameworks for GUI automation. GUI-Owl is an end-to-end multimodal agent built on the Qwen2.5-VL vision-language model and further trained on a large, varied dataset of GUI interactions. It integrates perception, grounding, reasoning, planning, and execution into a single policy network, enabling robust multi-turn interaction across platforms. Complementing this, Mobile-Agent-v3 orchestrates four specialized agents (Manager, Worker, Reflector, and Notetaker) to handle complex, long-horizon tasks with dynamic planning, reflection, and memory retention.

Core Design and Functionalities

GUI-Owl: The Backbone Model

GUI-Owl is designed to handle the complexity and variability of real-world GUIs. Starting from the Qwen2.5-VL vision-language model, it receives additional training on specialized GUI datasets. This training covers:

- Element Grounding: Accurately identifying UI components based on natural language instructions.

- Task Decomposition: Breaking down intricate commands into manageable, actionable steps.

- Action Semantics: Understanding the impact of user actions on the GUI state.

The model is fine-tuned through a combination of supervised learning and reinforcement learning, emphasizing alignment with real-world task success metrics.

Innovative Features of GUI-Owl

- Integrated Policy Network: Unlike traditional approaches that separate perception, planning, and execution, GUI-Owl consolidates these functions into a single neural network. This integration facilitates smooth multi-turn decision-making and explicit intermediate reasoning, essential for handling ambiguous and dynamic GUI scenarios.

- Cloud-Based Scalable Training: The team developed a virtual environment spanning Android, Ubuntu, macOS, and Windows platforms. This “Self-Evolving GUI Trajectory Production” system enables the generation of high-quality interaction data by having GUI-Owl and Mobile-Agent-v3 engage with virtual devices, followed by rigorous validation of action sequences. Successful interactions feed back into training, fostering continuous improvement.

- Diverse Data Augmentation: To enhance grounding and reasoning capabilities, the researchers employed multiple data synthesis techniques, including generating UI element grounding tasks from accessibility trees and crawled screenshots, extracting task planning knowledge from historical trajectories and large language models, and creating action semantics datasets by predicting GUI state changes from before-and-after screenshots.

- Advanced Reinforcement Learning: GUI-Owl benefits from a scalable reinforcement learning framework featuring asynchronous training and a novel “Trajectory-aware Relative Policy Optimization” (TRPO) method. Note that the acronym is shared with the older Trust Region Policy Optimization algorithm; here it refers only to the trajectory-aware method. TRPO assigns credit across long, variable-length action sequences, addressing the sparse rewards typical of GUI automation tasks.
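The credit-assignment idea behind TRPO can be sketched in a few lines. The article does not give the formula, so this is a minimal sketch under stated assumptions: each rollout receives a single trajectory-level reward, rewards are normalized across a group of rollouts for the same task, and every step of a trajectory shares that trajectory's normalized advantage. The function names and the `eps` constant are hypothetical.

```python
from statistics import mean, pstdev


def trajectory_advantages(rewards, eps=1e-6):
    """Normalize trajectory-level rewards across a group of rollouts.

    Assumption: advantage = (reward - group mean) / (group std + eps).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def per_step_advantages(trajectory_lengths, rewards):
    """Give every step of a trajectory that trajectory's normalized advantage."""
    advantages = trajectory_advantages(rewards)
    return [[adv] * n for adv, n in zip(advantages, trajectory_lengths)]


# Three rollouts of the same task: lengths differ, only the outcome is rewarded.
lengths = [3, 5, 2]
rewards = [1.0, 0.0, 1.0]
step_advs = per_step_advantages(lengths, rewards)

for n, adv in zip(lengths, step_advs):
    print(f"{n} steps, per-step advantage {adv[0]:+.3f}")
```

Sharing one advantage across all steps sidesteps the need for a per-step reward signal, which is usually unavailable when only final task success can be checked.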

Mobile-Agent-v3: Coordinated Multi-Agent System

Mobile-Agent-v3 is a versatile agent framework engineered to manage complex, multi-step workflows that span multiple applications. It decomposes tasks into subgoals, adapts plans dynamically based on execution feedback, and maintains persistent contextual memory. The framework coordinates four specialized agents:

- Manager Agent: Translates high-level instructions into subgoals and updates plans responsively based on ongoing results.

- Worker Agent: Executes the most pertinent subgoal by considering the current GUI state, prior feedback, and accumulated notes.

- Reflector Agent: Assesses the outcomes of actions by comparing expected and actual GUI states, providing diagnostic feedback.

- Notetaker Agent: Stores essential information such as codes and credentials across application boundaries, supporting extended task sequences.
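The division of labor among the four agents can be illustrated with a small control loop. This is a hedged sketch, not the framework's actual interface: `TaskState`, `run_task`, and the toy agent functions are hypothetical, and each agent is reduced to a plain Python callable where the real system would call the model.

```python
from dataclasses import dataclass, field


@dataclass
class TaskState:
    instruction: str
    subgoals: list = field(default_factory=list)
    done: list = field(default_factory=list)
    notes: list = field(default_factory=list)
    feedback: str = ""


def run_task(state, manager, worker, reflector, notetaker, max_steps=10):
    """Manager plans, Worker acts, Reflector checks, Notetaker remembers."""
    for _ in range(max_steps):
        state.subgoals = manager(state)          # (re)plan from current results
        if not state.subgoals:
            break                                # nothing left to do
        subgoal = state.subgoals[0]
        expected, actual = worker(subgoal, state)
        ok, state.feedback = reflector(expected, actual)
        if ok:
            state.done.append(subgoal)
            note = notetaker(actual)             # keep codes, credentials, etc.
            if note is not None:
                state.notes.append(note)
    return state


# Toy stand-ins for the four agents (hypothetical behavior).
PLAN = ["open mail app", "read verification code", "enter code in shop app"]


def manager(state):
    return [g for g in PLAN if g not in state.done]


def worker(subgoal, state):
    expected = f"screen after: {subgoal}"
    actual = expected + (" code=4821" if "read" in subgoal else "")
    return expected, actual


def reflector(expected, actual):
    ok = actual.startswith(expected)
    return ok, ("ok" if ok else "unexpected screen")


def notetaker(actual):
    return actual.split("code=")[1] if "code=" in actual else None


final = run_task(TaskState("buy item with emailed code"),
                 manager, worker, reflector, notetaker)
print(final.done, final.notes)
```

Because the Manager is called on every iteration, a failed step reported by the Reflector leaves the subgoal in the plan, which is one simple way to model replanning and retry.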

Training Methodology and Data Generation

One of the primary challenges in developing GUI agents is the scarcity of scalable, high-quality training data. Manual annotation is costly and insufficient to capture the diversity of real-world GUIs. To overcome this, the GUI-Owl team implemented a self-evolving data generation pipeline:

- Instruction Synthesis: For mobile applications, human-curated directed acyclic graphs (DAGs) model realistic navigation flows and input parameters. Large language models then generate natural language instructions from these paths, which are refined and validated against actual app interfaces.

- Interaction Trajectory Creation: Given a user query, GUI-Owl or Mobile-Agent-v3 interacts with virtual environments to produce sequences of actions and state transitions, known as trajectories.

- Trajectory Validation: A two-tiered critic system evaluates each action’s correctness and the overall success of the trajectory using both textual and multimodal reasoning, culminating in consensus-based judgments.

- Guidance Generation: For complex queries, the system synthesizes step-by-step guidance from successful trajectories, enabling the agent to learn from positive examples.

- Iterative Model Refinement: Newly validated trajectories are incorporated into the training dataset, and the model is retrained, creating a continuous feedback loop for self-improvement.
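The validate-then-retrain loop described above can be sketched as a filter over rollouts. This is a minimal sketch with several assumptions: "consensus" is modeled as unanimous agreement among trajectory-level critics, and the `Trajectory` class, `validate`, `evolve`, and the toy critics are all hypothetical stand-ins for model-based judges.

```python
from dataclasses import dataclass


@dataclass
class Trajectory:
    query: str
    steps: list


def validate(traj, step_critic, trajectory_critics):
    """Two tiers: every step must pass, then all trajectory critics must agree."""
    steps_ok = all(step_critic(traj.query, s) for s in traj.steps)
    consensus = all(critic(traj) for critic in trajectory_critics)
    return steps_ok and consensus


def evolve(dataset, rollouts, step_critic, trajectory_critics):
    """Return the dataset extended with the rollouts that pass validation."""
    accepted = [t for t in rollouts
                if validate(t, step_critic, trajectory_critics)]
    return dataset + accepted


# Toy critics (hypothetical rules in place of textual and multimodal judges).
def step_critic(query, step):
    return "error" not in step


def text_critic(traj):
    return traj.steps[-1] == "done"


def visual_critic(traj):
    return len(traj.steps) <= 5


rollouts = [
    Trajectory("set alarm", ["open clock", "tap alarm tab", "done"]),
    Trajectory("set alarm", ["open clock", "error: wrong app", "done"]),
    Trajectory("set alarm", ["open clock", "tap alarm tab"]),
]
dataset = evolve([], rollouts, step_critic, [text_critic, visual_critic])
print(len(dataset), "of", len(rollouts), "rollouts kept for retraining")
```

In a full loop, the model would be retrained on `dataset` and then used to produce the next batch of rollouts.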

Performance Evaluation and Benchmarking

UI Element Grounding and Comprehension

GUI-Owl performs strongly on grounding tasks, where the goal is to locate UI elements from natural language queries. The 7-billion-parameter version of GUI-Owl outperforms all open-source counterparts of similar size, while the 32-billion-parameter model surpasses proprietary models such as GPT-4o and Claude 3.7. For instance, on the MMBench-GUI L2 benchmark (which spans Windows, macOS, Linux, iOS, Android, and web platforms), GUI-Owl-7B achieves a score of 80.49 and GUI-Owl-32B reaches 82.97, both leading the field. On the ScreenSpot Pro benchmark, which tests high-resolution and complex interfaces, GUI-Owl-7B scores 54.9, significantly outperforming models such as UI-TARS-72B and Qwen2.5-VL-72B. These results highlight GUI-Owl’s comprehensive and precise grounding capabilities, from simple button identification to detailed text localization.
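To make grounding scores concrete, here is a small sketch of one common way such accuracy is computed. The article does not state the scoring rule, so this assumes a prediction counts as correct when the predicted click point falls inside the target element's bounding box; the function names and sample coordinates are hypothetical.

```python
def point_in_box(point, box):
    """True if (x, y) lies inside (left, top, right, bottom), edges included."""
    x, y = point
    left, top, right, bottom = box
    return left <= x <= right and top <= y <= bottom


def grounding_accuracy(predictions, boxes):
    """Percentage of predicted points that land inside their target box."""
    hits = sum(point_in_box(p, b) for p, b in zip(predictions, boxes))
    return 100.0 * hits / len(boxes)


# Three queries: two predicted points hit their target element, one misses.
predicted_points = [(50, 20), (300, 400), (10, 10)]
target_boxes = [(40, 10, 120, 40), (0, 0, 100, 100), (0, 0, 20, 20)]

accuracy = grounding_accuracy(predicted_points, target_boxes)
print(f"grounding accuracy: {accuracy:.2f}")
```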

Advanced GUI Understanding and Decision-Making

The MMBench-GUI L1 benchmark assesses UI comprehension and single-step decision-making through question-answering tasks. GUI-Owl-7B scores 84.5 on easy, 86.9 on medium, and an impressive 90.9 on hard difficulty levels, outperforming all existing models. This reflects not only accurate perception but also sophisticated reasoning about interface states and potential actions. On the Android Control benchmark, which focuses on single-step decisions within pre-annotated contexts, GUI-Owl-7B attains 72.8, the highest among 7B models, while GUI-Owl-32B reaches 76.6, exceeding even the largest open and proprietary models.

End-to-End Task Execution and Multi-Agent Synergy

The real test of a GUI agent is whether it can autonomously complete multi-step tasks in interactive environments. The AndroidWorld and OSWorld benchmarks challenge agents to navigate apps and operating systems to fulfill user instructions. GUI-Owl-7B scores 66.4 on AndroidWorld and 34.9 on OSWorld, whereas Mobile-Agent-v3, using GUI-Owl as its core, achieves state-of-the-art results of 73.3 and 37.7, respectively (gains of 6.9 and 2.8 points). The multi-agent architecture excels at long-horizon, error-prone tasks, with the Reflector and Manager agents enabling dynamic replanning and error recovery.

Integration in Real-World Agent Frameworks

GUI-Owl’s versatility is further demonstrated by its integration as the central reasoning module within established agentic systems such as Mobile-Agent-E (Android) and Agent-S2 (desktop). In these settings, GUI-Owl-32B achieves a 62.1% success rate on AndroidWorld and 48.4% on a challenging OSWorld subset, outperforming all baseline models. This confirms GUI-Owl’s practical utility as a modular, plug-and-play component for diverse automation frameworks.

Practical Applications and Deployment

GUI-Owl supports a comprehensive, platform-specific action repertoire. On mobile devices, this includes taps, long presses, swipes, text input, system navigation buttons (e.g., back, home), and app launching. On desktop platforms, actions extend to mouse movements, clicks, drags, scrolls, keyboard inputs, and application-specific commands. These high-level actions are translated into low-level device instructions using tools like ADB for Android and pyautogui for desktops, facilitating seamless deployment in real-world environments.
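The translation from high-level mobile actions to device instructions might look like the sketch below. The action dictionary schema and the `to_adb_args` function are hypothetical, not GUI-Owl's actual format; the generated commands use the standard `adb shell input` subcommands (`tap`, `swipe`, `text`, `keyevent`). The code only builds argument lists and does not contact a device.

```python
def to_adb_args(action):
    """Map a high-level action dict (hypothetical schema) to adb arguments."""
    base = ["adb", "shell", "input"]
    kind = action["type"]
    if kind == "tap":
        return base + ["tap", str(action["x"]), str(action["y"])]
    if kind == "long_press":
        # A swipe with identical endpoints and a duration acts as a long press.
        x, y = str(action["x"]), str(action["y"])
        return base + ["swipe", x, y, x, y, str(action.get("duration_ms", 1000))]
    if kind == "swipe":
        coords = [str(action[k]) for k in ("x1", "y1", "x2", "y2")]
        return base + ["swipe"] + coords + [str(action.get("duration_ms", 300))]
    if kind == "type":
        # `input text` expects spaces to be written as %s.
        return base + ["text", action["text"].replace(" ", "%s")]
    if kind == "back":
        return base + ["keyevent", "KEYCODE_BACK"]
    if kind == "home":
        return base + ["keyevent", "KEYCODE_HOME"]
    raise ValueError(f"unsupported action type: {kind}")


plan = [
    {"type": "tap", "x": 540, "y": 1200},
    {"type": "type", "text": "hello world"},
    {"type": "back"},
]
for step in plan:
    print(" ".join(to_adb_args(step)))
```

Each argument list could be passed to `subprocess.run` to execute it against a connected device or emulator; a desktop counterpart would map the same kind of schema onto pyautogui calls instead.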

The agent’s decision-making process is transparent and interpretable: at each step, it analyzes the current screen, references compressed historical context, reasons about the next action, articulates its intent, and executes the command. This explicit intermediate reasoning enhances robustness and supports integration into larger multi-agent ecosystems, where distinct roles such as planner, executor, and critic collaborate effectively.

Final Thoughts: The Future of GUI Automation

GUI-Owl and Mobile-Agent-v3 mark a significant advancement toward versatile, autonomous GUI agents capable of operating across platforms. By merging perception, grounding, reasoning, and action within a unified model and establishing a scalable, self-improving training pipeline, these frameworks achieve state-of-the-art results on both mobile and desktop benchmarks, outperforming even the largest proprietary models in critical tasks. This progress paves the way for more intelligent, adaptable automation tools that can transform user interaction with digital interfaces.