Alibaba’s Qwen Team Unveils FP8-Quantized Checkpoints for Qwen3-Next-80B-A3B Models

Alibaba’s Qwen research group has released FP8-quantized checkpoints for its latest Qwen3-Next-80B-A3B models in two post-training variants, Instruct and Thinking, aimed at high-throughput inference with ultra-long context and efficient Mixture-of-Experts (MoE) utilization. The FP8 releases mirror the previously launched BF16 versions but use “fine-grained FP8” quantization with a block size of 128, and they ship with deployment instructions for the latest sglang and vLLM nightly builds. The published benchmark figures are still those of the original BF16 models: FP8 is provided for performance and convenience, not as a separately evaluated baseline.

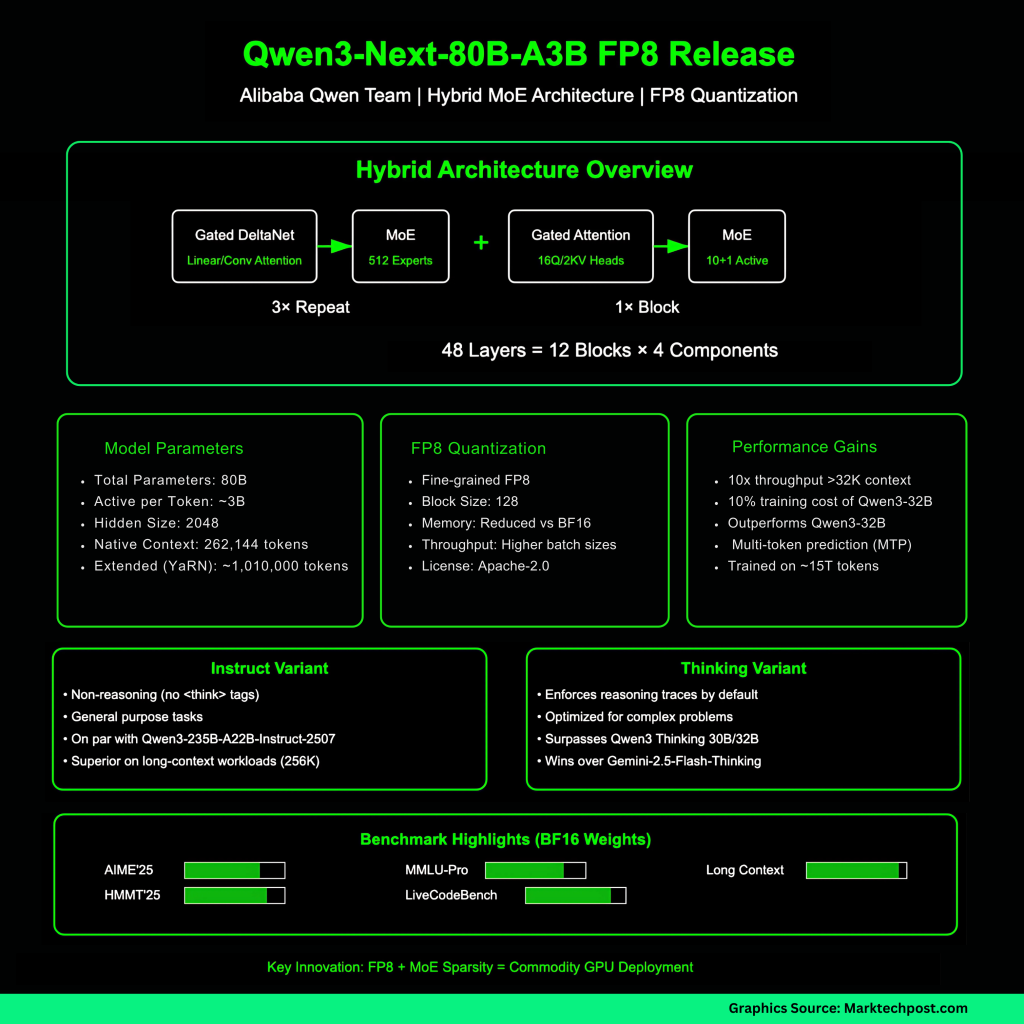

Innovative Architecture of the Qwen3-Next-80B-A3B Model

The Qwen3-Next-80B-A3B model employs a hybrid design that combines Gated DeltaNet (a linear, convolution-inspired attention approximation) with Gated Attention, interleaved with ultra-sparse Mixture-of-Experts (MoE) layers. Of the 80 billion total parameters, approximately 3 billion are activated per token: the router draws on 512 experts and selects 10 routed experts plus 1 shared expert. The architecture has 48 layers grouped into 12 blocks. Each block consists of three Gated DeltaNet layers, each followed by an MoE layer, and then one Gated Attention layer followed by an MoE layer. The model supports a native context length of 262,144 tokens and has been validated for contexts of up to roughly 1,010,000 tokens using Rotary Positional Embedding (RoPE) scaling via the YaRN method. Key specifications include a hidden size of 2048; 16 query heads and 2 key-value heads at a head dimension of 256 for Gated Attention; and 32 value heads and 16 query-key heads at a head dimension of 128 for Gated DeltaNet.
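The layer arithmetic above can be checked with a short sketch. This is only an illustration of the described layout, not the model's actual implementation; the layer names (`gated_deltanet`, `gated_attention`) are hypothetical labels, and the parameter counts are the rounded figures quoted in the text.

```python
# Layout as described in the text: 12 blocks, each holding three
# (Gated DeltaNet -> MoE) layers followed by one (Gated Attention -> MoE) layer.
NUM_BLOCKS = 12
DELTANET_PER_BLOCK = 3


def build_layout():
    """Return the token-mixer type of every layer, in order."""
    layout = []
    for _ in range(NUM_BLOCKS):
        layout.extend(["gated_deltanet"] * DELTANET_PER_BLOCK)
        layout.append("gated_attention")
    return layout


layout = build_layout()
num_layers = len(layout)
num_deltanet = layout.count("gated_deltanet")
num_attention = layout.count("gated_attention")

# Rounded parameter counts quoted in the article.
TOTAL_PARAMS = 80e9
ACTIVE_PARAMS = 3e9
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Experts consulted per token: 10 routed plus 1 shared.
experts_per_token = 10 + 1

print(f"layers: {num_layers} ({num_deltanet} DeltaNet, {num_attention} attention)")
print(f"active parameters per token: {active_fraction:.2%}")
print(f"experts used per token: {experts_per_token}")
```

Under these figures only one layer in four uses full attention, and fewer than 4% of the parameters participate in any single token's forward pass.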

Performance Highlights and Variants

According to the Qwen team, the 80B-A3B base model surpasses the Qwen3-32B in downstream task performance while requiring only about 10% of the training cost. It also achieves roughly a tenfold increase in inference throughput for contexts exceeding 32,000 tokens, driven by the low activation rate in MoE layers and multi-token prediction (MTP) techniques. The two post-training variants serve distinct purposes: the Instruct variant excludes reasoning tags (e.g., <think>), focusing on straightforward instruction following, whereas the Thinking variant incorporates reasoning traces by default, making it better suited for tackling complex problem-solving tasks.
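In practice, the difference between the two variants matters to any client code that has to separate reasoning from the final answer. The sketch below assumes the reasoning is terminated by a closing `</think>` tag, which is an assumption about the output format rather than something stated here; `split_reasoning` is a hypothetical helper, not part of any inference engine.

```python
def split_reasoning(text, open_tag="<think>", close_tag="</think>"):
    """Split a completion into (reasoning, answer).

    Assumes the reasoning trace, if any, ends with `close_tag`.
    When no closing tag is found, the whole text is treated as the answer,
    which is the expected case for the Instruct variant.
    """
    head, sep, tail = text.partition(close_tag)
    if not sep:
        return "", text.strip()
    # The opening tag may be missing if the chat template already emitted it.
    reasoning = head.replace(open_tag, "", 1).strip()
    return reasoning, tail.strip()


thinking_output = "<think>2 + 2 equals 4.</think>The answer is 4."
instruct_output = "The answer is 4."

print(split_reasoning(thinking_output))
print(split_reasoning(instruct_output))
```

Serving frameworks can perform this split on the server side when a reasoning parser is enabled, so a helper like this is mainly useful when working with raw completions.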

Details on FP8 Quantization and Deployment

The FP8 quantization applied in these models is described as “fine-grained” with a block size of 128, differing slightly from BF16 in deployment requirements. Both sglang and vLLM frameworks necessitate the latest main or nightly builds to support these FP8 models. Example commands are provided for running inference with a 256K token context and optional multi-token prediction. For the Thinking variant, it is recommended to enable a reasoning parser flag (such as --reasoning-parser deepseek-r1 in sglang or deepseek_r1 in vLLM) to fully leverage its reasoning capabilities. These FP8 checkpoints continue to be distributed under the Apache-2.0 license.
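To illustrate what a block size of 128 means, here is a minimal sketch of block-wise scaling on a flat list of weights. It makes several assumptions that are not confirmed by the release notes: it assumes the E4M3 FP8 format (largest finite value 448), it works on 1-D blocks whereas real kernels usually operate on 2-D tiles of a weight matrix, and it omits the final rounding to 8 bits. All function names are hypothetical.

```python
FP8_E4M3_MAX = 448.0  # largest finite E4M3 value; the FP8 format is an assumption
BLOCK_SIZE = 128      # block size quoted in the article


def block_scales(weights, block_size=BLOCK_SIZE):
    """Compute one scale per block so the block's largest value maps to 448."""
    scales = []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        amax = max(abs(w) for w in block)
        scales.append(amax / FP8_E4M3_MAX if amax > 0 else 1.0)
    return scales


def scale_blocks(weights, scales, block_size=BLOCK_SIZE):
    """Divide each weight by its block's scale (rounding to FP8 is omitted)."""
    return [w / scales[i // block_size] for i, w in enumerate(weights)]


def dequantize(scaled, scales, block_size=BLOCK_SIZE):
    """Multiply each scaled value by its block's scale to recover the weight."""
    return [q * scales[i // block_size] for i, q in enumerate(scaled)]


# Two blocks: one with uniformly small weights, one containing an outlier.
weights = [0.01] * 128 + [0.02] * 127 + [8.0]
scales = block_scales(weights)
scaled = scale_blocks(weights, scales)
restored = dequantize(scaled, scales)

print(len(scales), scales)
print(max(abs(q) for q in scaled))
```

The outlier of 8.0 only inflates the scale of its own block, so the small weights in the first block still use the full FP8 range. That isolation is the motivation for fine-grained scaling over a single per-tensor scale.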

Benchmark Comparisons Using BF16 Weights

Benchmark data, based on BF16 weights, show that the Instruct variant of Qwen3-Next-80B-A3B matches the performance of the much larger Qwen3-235B-A22B-Instruct-2507 model across various knowledge, reasoning, and coding benchmarks, while excelling in long-context tasks up to 256K tokens. The Thinking variant posts stronger results on challenging assessments such as AIME 2025, HMMT 2025, MMLU-Pro/Redux, and LiveCodeBench v6, outperforming earlier Qwen3 Thinking models (including the 30B-A3B-2507 and 32B versions) and surpassing Gemini-2.5-Flash-Thinking on multiple benchmarks.

Training Regimen and Post-Training Enhancements

The Qwen3-Next-80B-A3B models were pretrained on approximately 15 trillion tokens before undergoing post-training. Stability improvements such as zero-centered and weight-decayed layer normalization were incorporated. For the Thinking variant, reinforcement learning with GSPO (Group Sequence Policy Optimization) was applied to cope with the hybrid attention and high-sparsity MoE architecture. Multi-token prediction (MTP) is used both to accelerate inference and to strengthen the pretraining signal.
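One common way a multi-token prediction head speeds up inference is by proposing draft tokens for speculative decoding. The sketch below uses the standard closed-form estimate of tokens produced per verification step, under the simplifying assumption that each draft token is accepted independently with the same probability. The acceptance rate and draft length shown are illustrative values, not measurements for this model.

```python
def expected_tokens_per_step(accept_rate, num_draft_tokens):
    """Expected tokens emitted per verification step.

    Assumes each draft token is accepted independently with probability
    `accept_rate`, and that the target model always contributes one token
    (either a correction or a bonus token after a fully accepted draft).
    """
    if not 0.0 <= accept_rate <= 1.0:
        raise ValueError("accept_rate must be in [0, 1]")
    if num_draft_tokens < 0:
        raise ValueError("num_draft_tokens must be non-negative")
    if accept_rate == 1.0:
        return float(num_draft_tokens + 1)
    # Geometric series: 1 + a + a^2 + ... + a^k
    return (1.0 - accept_rate ** (num_draft_tokens + 1)) / (1.0 - accept_rate)


# Illustrative values only.
for rate in (0.6, 0.8, 0.9):
    print(rate, round(expected_tokens_per_step(rate, num_draft_tokens=3), 3))
```

Because the estimate is sensitive to the acceptance rate, anything that shifts the draft or target distributions, including quantization, can change the realized speedup.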

Significance of FP8 Quantization in Modern AI Workloads

FP8 quantization offers substantial benefits on contemporary AI accelerators by reducing memory bandwidth demands and shrinking the memory footprint relative to BF16. That headroom allows larger batch sizes or longer input sequences at comparable latency. Because the A3B model activates only about 3 billion parameters per token, combining FP8 quantization with MoE sparsity compounds the throughput gains, especially in long-context scenarios, and pairing it with the speculative decoding enabled by MTP amplifies them further. However, quantization can perturb routing and attention, so speculative decoding acceptance rates and final task accuracy may vary with the inference engine and kernel implementation. Qwen therefore recommends using the latest sglang or vLLM versions and tuning speculative decoding parameters for the target workload.
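A back-of-the-envelope calculation makes the footprint difference concrete. This is a rough sketch that assumes every parameter is stored at the stated precision (2 bytes for BF16, 1 byte for FP8) and ignores per-block scale factors, any layers kept in higher precision, the KV cache, and activations. The parameter counts are the rounded figures from the article.

```python
TOTAL_PARAMS = 80e9   # rounded total parameter count from the article
ACTIVE_PARAMS = 3e9   # rounded per-token active parameter count

BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def weight_gb(num_params, dtype):
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("bf16", "fp8"):
    total = weight_gb(TOTAL_PARAMS, dtype)
    active = weight_gb(ACTIVE_PARAMS, dtype)
    print(f"{dtype}: ~{total:.0f} GB resident, ~{active:.0f} GB read per token")
```

Under these assumptions the resident weights drop from about 160 GB to about 80 GB, and the weights read per decoded token drop from about 6 GB to about 3 GB. Real checkpoints will differ somewhat because of scale factors and any unquantized layers.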

Conclusion: Practical Implications for Long-Context AI Applications

The FP8-quantized checkpoints make the 80B-total, 3B-active architecture practical to deploy on mainstream inference engines at ultra-long contexts of up to 256K tokens. The release preserves the hybrid MoE design and the multi-token prediction pathway, with the aim of delivering high throughput without sacrificing model fidelity. Because the published benchmarks are those of the BF16 versions, users should validate FP8 accuracy and latency in their own environments, especially when using reasoning parsers and speculative decoding. Overall, lower memory bandwidth requirements and higher concurrency position the Qwen3-Next-80B-A3B models as strong candidates for production workloads that demand extensive context handling.