Building a Sophisticated Graph-Based AI Agent with Gemini 1.5 Flash and GraphAgent

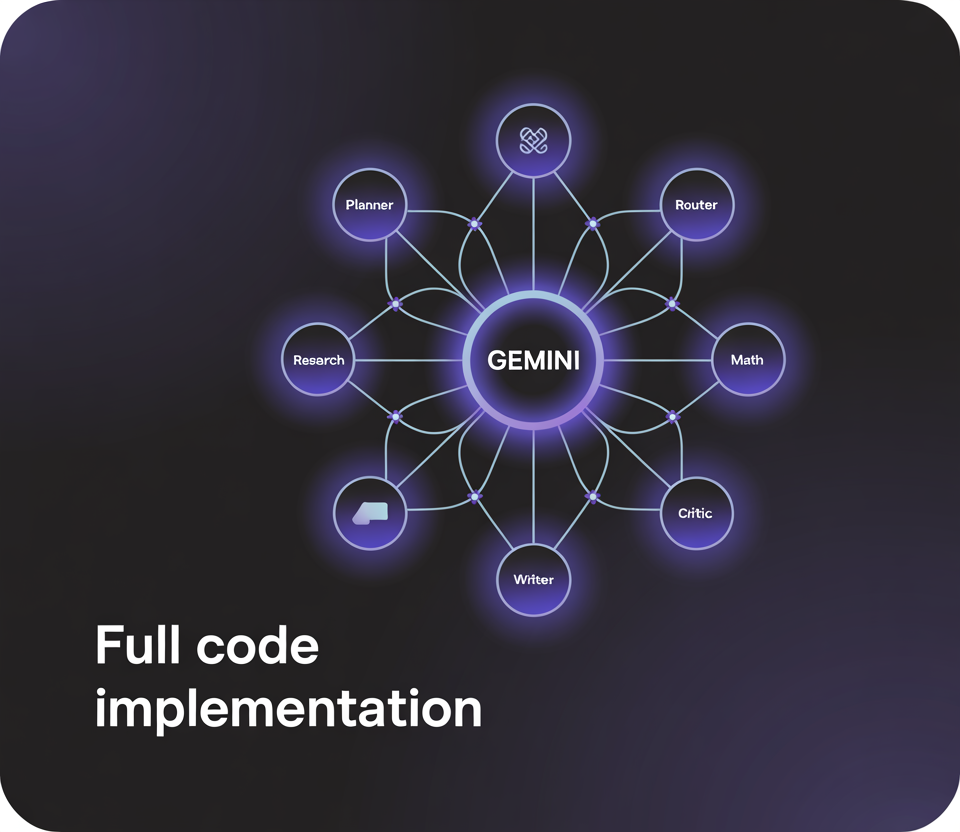

This guide walks you through building an AI agent structured as a directed graph and powered by the Gemini 1.5 Flash model. The graph runner itself is plain Python; GraphAgent is the name the agent is given in its system prompt. Each node has a distinct role: a planner decomposes the task, a router decides which node runs next, research and math nodes retrieve documents and perform calculations, a writer drafts the answer, and a critic reviews the draft and revises it if needed. Gemini is called through a thin wrapper, the nodes ask it for JSON output that they then parse, and local Python functions serve as safe tools for arithmetic evaluation and document retrieval. Running the pipeline end to end shows how planning, retrieval, computation, and review can be orchestrated within a single system.

Essential Libraries and Setup

We start by importing standard Python modules for JSON handling, timing, key entry, and safe expression evaluation (ast). The dataclasses and typing modules organize the agent's internal state. The google.generativeai client (the google-generativeai package on PyPI) is used to call Gemini, and NetworkX is imported optionally for graph visualization; nothing below requires it.

```python
import os, json, time, ast, getpass
from dataclasses import dataclass, field
from typing import Dict, List, Callable, Any

import google.generativeai as genai

# NetworkX is optional; it is only useful for visualizing the graph.
try:
    import networkx as nx
except ImportError:
    nx = None
```
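The article imports NetworkX but never uses it. As a sketch of what that optional import could be used for, the block below writes the workflow's edges (read off the labels the node functions return) as a plain list and, only when NetworkX is installed, loads them into a `DiGraph`. `WORKFLOW_EDGES`, `adjacency`, and `build_graph` are hypothetical names, not part of the original code.

```python
from typing import Dict, List, Tuple

try:
    import networkx as nx
except ImportError:
    nx = None

# Hypothetical edge list, read off the labels each node function returns.
WORKFLOW_EDGES: List[Tuple[str, str]] = [
    ("plan", "route"),
    ("route", "research"), ("research", "route"),
    ("route", "math"), ("math", "route"),
    ("route", "write"),
    ("write", "critic"),
    ("critic", "end"),
]

def adjacency(edges: List[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Plain-dict view of the graph; works without NetworkX."""
    adj: Dict[str, List[str]] = {}
    for src, dst in edges:
        adj.setdefault(src, []).append(dst)
    return adj

def build_graph(edges: List[Tuple[str, str]]):
    """Return a networkx.DiGraph if NetworkX is installed, otherwise None."""
    if nx is None:
        return None
    graph = nx.DiGraph()
    graph.add_edges_from(edges)
    return graph

print(adjacency(WORKFLOW_EDGES))
g = build_graph(WORKFLOW_EDGES)
if g is not None:
    # The route <-> research and route <-> math cycles are why this is a loop, not a DAG.
    print(list(nx.simple_cycles(g)))
```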

Configuring the Gemini Model and LLM Interaction

We define a helper that configures the Gemini client and creates the model with a system prompt asking for structured, concise responses. A second helper sends a prompt to the model and controls output randomness through the temperature parameter; the low default of 0.2 keeps replies fairly consistent across runs, which helps the nodes that parse JSON out of them.

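```python
def initialize_model(api_key: str, model_name: str = "gemini-1.5-flash"):
    """Configure the Gemini client and return a model with the agent's system prompt."""
    genai.configure(api_key=api_key)
    return genai.GenerativeModel(model_name, system_instruction=(
        "You are GraphAgent, a principled planner-executor. "
        "Provide structured, succinct outputs and utilize tools when requested."
    ))


def query_llm(model, prompt: str, temperature=0.2) -> str:
    """Send one prompt and return the stripped text reply ("" if no text came back)."""
    response = model.generate_content(prompt, generation_config={"temperature": temperature})
    try:
        return (response.text or "").strip()
    except ValueError:
        # response.text raises ValueError when the reply has no text parts (e.g. it was blocked).
        return ""
```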
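Because `query_llm` only touches `model.generate_content(...)` and the reply's `.text`, any object with that shape can stand in for Gemini. The sketch below uses a hypothetical `ScriptedModel` that returns canned replies, which makes it possible to exercise the nodes offline and to inspect which temperature each call used. It mimics only the `.text` attribute of the real response object, nothing else.

```python
from types import SimpleNamespace
from typing import List

class ScriptedModel:
    """Hypothetical stand-in for genai.GenerativeModel: returns canned replies in order."""

    def __init__(self, replies: List[str]):
        self.replies = list(replies)
        self.calls = []  # (prompt, generation_config) pairs, kept for inspection

    def generate_content(self, prompt, generation_config=None):
        self.calls.append((prompt, generation_config))
        text = self.replies.pop(0) if self.replies else ""
        # Only the .text attribute of the real response is imitated here.
        return SimpleNamespace(text=text)

def query_llm(model, prompt: str, temperature=0.2) -> str:
    # Copy of the article's wrapper so this block runs on its own.
    response = model.generate_content(prompt, generation_config={"temperature": temperature})
    return (response.text or "").strip()

model = ScriptedModel(["  research \n"])
print(query_llm(model, "Pick the next node", temperature=0.0))  # research
print(model.calls[0][1])  # {'temperature': 0.0}
```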

Implementing Safe Math Evaluation and Document Retrieval Tools

To give the agent reliable computation and information access, we implement two utilities. The safe_eval_math function parses an arithmetic expression with Python's ast module and evaluates it only if every node in the tree is on a whitelist of numbers and arithmetic operators, so function calls, names, and attribute access are rejected. The search_docs function ranks a small in-memory list of knowledge snippets by how many query words appear in each one, a dependency-free stand-in for the retrieval step of retrieval-augmented generation (RAG).

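```python
def safe_eval_math(expression: str) -> str:
    """Evaluate a plain arithmetic expression without executing arbitrary code."""
    node = ast.parse(expression, mode="eval")
    # Whitelist: numeric constants, binary/unary arithmetic operators.
    # Do NOT add ast.AST here: it is the base class of every node and would allow anything.
    allowed_nodes = (
        ast.Expression, ast.BinOp, ast.UnaryOp, ast.Constant,
        ast.Add, ast.Sub, ast.Mult, ast.Div, ast.Pow, ast.Mod,
        ast.USub, ast.UAdd, ast.FloorDiv,
    )

    def validate(n):
        if not isinstance(n, allowed_nodes):
            raise ValueError("Expression contains unsafe elements")
        if isinstance(n, ast.Constant) and not isinstance(n.value, (int, float)):
            raise ValueError("Only numeric constants are allowed")
        for child in ast.iter_child_nodes(n):
            validate(child)

    validate(node)
    return str(eval(compile(node, "<expr>", "eval"), {"__builtins__": {}}, {}))
```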
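The validator walks the parsed tree recursively with `ast.iter_child_nodes`. To see exactly which node types it has to accept, the sketch below lists them with `ast.walk`, which visits the same nodes in breadth-first order. It also shows why a whitelist must not contain `ast.AST`: a call like `__import__('os')` is an `ast.AST` instance like every other node. `node_types` is a hypothetical helper for illustration.

```python
import ast

def node_types(expression: str) -> list:
    """List the AST node class names of an expression in ast.walk (breadth-first) order."""
    return [type(n).__name__ for n in ast.walk(ast.parse(expression, mode="eval"))]

print(node_types("2 + 3 * 4"))
# ['Expression', 'BinOp', 'Constant', 'Add', 'BinOp', 'Constant', 'Mult', 'Constant']

print(node_types("__import__('os')"))
# ['Expression', 'Call', 'Name', 'Constant', 'Load']

# Every node subclasses ast.AST, so a whitelist containing ast.AST accepts this Call too.
call = ast.parse("__import__('os')", mode="eval").body
print(isinstance(call, ast.AST))  # True
```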

```python
# A tiny in-memory corpus standing in for a real document store.
DOCUMENTS = [
    "Solar panels convert sunlight into electricity with an average capacity factor of about 20%.",
    "Onshore wind turbines capture kinetic energy with a capacity factor near 35%.",
    "Retrieval-augmented generation (RAG) combines search with language model prompting for enhanced responses.",
    "LangGraph supports cyclic agent graphs, ideal for orchestrating complex toolchains."
]


def search_docs(query: str, top_k: int = 3) -> List[str]:
    """Rank documents by how many query words occur in them and return the top_k."""
    query_lower = query.lower()
    ranked = sorted(DOCUMENTS, key=lambda doc: -sum(word in doc.lower() for word in query_lower.split()))
    return ranked[:top_k]
```
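Because the score uses Python's `in` on the raw document text, a query word also matches inside longer words. With the query "on wind", "on" matches "convert" and "generation", so the RAG snippet ties with the solar one. The sketch below compares that rule with a whole-word overlap count; `token_score` is a swapped-in alternative for illustration, not the article's method.

```python
import re

# The four snippets from DOCUMENTS, copied so this block runs on its own.
DOCUMENTS = [
    "Solar panels convert sunlight into electricity with an average capacity factor of about 20%.",
    "Onshore wind turbines capture kinetic energy with a capacity factor near 35%.",
    "Retrieval-augmented generation (RAG) combines search with language model prompting for enhanced responses.",
    "LangGraph supports cyclic agent graphs, ideal for orchestrating complex toolchains.",
]

def substring_score(query: str, doc: str) -> int:
    # search_docs's rule: count query words that appear anywhere in the document text.
    return sum(word in doc.lower() for word in query.lower().split())

def token_score(query: str, doc: str) -> int:
    # Alternative rule: count distinct query words that match whole words in the document.
    doc_tokens = set(re.findall(r"[a-z0-9]+", doc.lower()))
    return len(set(re.findall(r"[a-z0-9]+", query.lower())) & doc_tokens)

query = "on wind"
print([substring_score(query, d) for d in DOCUMENTS])  # [1, 2, 1, 0]
print([token_score(query, d) for d in DOCUMENTS])      # [0, 1, 0, 0]
```

Whole-word matching trades recall for precision; for a real corpus a proper retriever (BM25 or embeddings) would replace both.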

State Management and Node Functionality

We define a State dataclass to maintain the task description, planning details, collected evidence, scratchpad notes, results, and control flags throughout the graph execution. Each node function manipulates this state and returns the label of the next node to execute, enabling a dynamic and modular workflow.

```python
@dataclass
class State:
    task: str
    plan: str = ""
    scratch: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)
    result: str = ""
    step: int = 0
    done: bool = False
```
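The list fields use `field(default_factory=list)` so that every `State` gets its own scratchpad and evidence list. The sketch below shows the two instances staying independent, and that `dataclasses` refuses a bare `[]` default at class-definition time. `BadState` is a hypothetical counter-example.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class State:
    # Same fields as the article's State.
    task: str
    plan: str = ""
    scratch: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)
    result: str = ""
    step: int = 0
    done: bool = False

a, b = State(task="A"), State(task="B")
a.scratch.append("note")
print(a.scratch, b.scratch)  # ['note'] []

# A bare mutable default is rejected with ValueError when the class is defined.
try:
    @dataclass
    class BadState:
        task: str
        scratch: List[str] = []
    rejected = False
except ValueError:
    rejected = True
print(rejected)  # True
```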

```python
def node_plan(state: State, model) -> str:
    """Ask the model for a JSON plan; fall back to a default plan if parsing fails."""
    prompt = f"""Decompose the task into actionable steps.
Task: {state.task}
Respond with JSON: {{"subtasks": ["..."], "tools": {{"search": true/false, "math": true/false}}, "success_criteria": ["..."]}}"""
    response = query_llm(model, prompt)
    try:
        # Keep only the span from the first "{" to the last "}" (drops any prose around the JSON).
        plan = json.loads(response[response.find("{"): response.rfind("}") + 1])
    except Exception:
        plan = {"subtasks": ["Research", "Synthesize"], "tools": {"search": True, "math": False}, "success_criteria": ["clear answer"]}
    state.plan = json.dumps(plan, indent=2)
    state.scratch.append("PLAN:\n" + state.plan)
    return "route"
```
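The planner's parsing trick (slice from the first `{` to the last `}`) tolerates chatty replies that wrap the JSON in prose. The sketch below isolates it in a hypothetical `extract_json_object` helper so it can be tried on sample replies; catching only `json.JSONDecodeError` is a narrower choice than the article's `except Exception`.

```python
import json

def extract_json_object(text: str, fallback: dict) -> dict:
    """node_plan's trick: parse the span from the first '{' to the last '}', else return fallback."""
    start, end = text.find("{"), text.rfind("}")
    try:
        return json.loads(text[start:end + 1])
    except json.JSONDecodeError:
        # With no braces the slice is text[-1:0] == "", which fails to parse and lands here.
        return fallback

reply = 'Here is the plan:\n{"subtasks": ["Research"], "tools": {"search": true, "math": false}}\nDone.'
print(extract_json_object(reply, {})["tools"])                 # {'search': True, 'math': False}
print(extract_json_object("No JSON here.", {"subtasks": []}))  # {'subtasks': []}
```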

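```python
def node_route(state: State, model) -> str:
    """Pick the next node from the LLM's suggestion plus a few hard-coded guards."""
    notes = "\n".join(state.scratch[-5:])
    prompt = f"""You are a router deciding the next node.
Recent notes:
{notes}
If math is required, return 'math'; if research is needed, return 'research'; if ready to write, return 'write'.
Task: {state.task}"""
    choice = query_llm(model, prompt).lower()
    math_done = any(note.startswith("MATH") for note in state.scratch)
    research_rounds = sum(note.startswith("EVIDENCE") for note in state.scratch)
    # Run math at most once, and only if the task contains digits.
    if "math" in choice and not math_done and any(char.isdigit() for char in state.task):
        return "math"
    # Always research at least once; allow one extra round if the router asks for it.
    if not state.evidence or ("research" in choice and research_rounds < 2):
        return "research"
    return "write"
```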
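The router combines the LLM's free-text reply with hard-coded checks, and those checks can be tested without calling Gemini once they are pulled into a pure function. In the sketch below, `decide_route` is a hypothetical name; with its default `math_done=False` it reproduces the article's original rule, which has no memory of whether math already ran and so keeps returning "math" for as long as the model says so. The `math_done` flag is our addition.

```python
def decide_route(choice: str, task: str, has_evidence: bool, math_done: bool = False) -> str:
    """Routing rule as a pure function; math_done=False matches the original node_route."""
    choice = choice.lower()
    if "math" in choice and not math_done and any(ch.isdigit() for ch in task):
        return "math"
    if "research" in choice or not has_evidence:
        return "research"
    return "write"

task = "Compare solar and wind energy reliability; calculate 5*7."
print(decide_route("math", task, has_evidence=False))                      # math
print(decide_route("math", task, has_evidence=True, math_done=True))       # write
print(decide_route("write", task, has_evidence=False))                     # research
print(decide_route("math", "Compare solar and wind", has_evidence=True))   # write
```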

```python
def node_research(state: State, model) -> str:
    """Generate search queries with the LLM, run them against DOCUMENTS, and store the hits."""
    prompt = f"""Generate three targeted search queries for the task:
Task: {state.task}
Return a JSON list of strings."""
    queries_json = query_llm(model, prompt)
    try:
        queries = json.loads(queries_json[queries_json.find("["): queries_json.rfind("]") + 1])[:3]
    except Exception:
        queries = [state.task, "background " + state.task, "pros and cons " + state.task]
    hits = []
    for q in queries:
        hits.extend(search_docs(str(q), top_k=2))
    # dict.fromkeys drops duplicates but keeps first-seen order; skip evidence we already have.
    unique_hits = list(dict.fromkeys(hits))
    state.evidence.extend([h for h in unique_hits if h not in state.evidence])
    state.scratch.append("EVIDENCE:\n- " + "\n- ".join(unique_hits))
    return "route"
```
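`dict.fromkeys` removes duplicates within one batch of hits while preserving order, but on its own it does not stop a second research round from re-adding snippets already collected. The sketch below shows both steps; `merge_evidence` is a hypothetical helper.

```python
from typing import List

def merge_evidence(evidence: List[str], hits: List[str]) -> List[str]:
    """Append new hits in first-seen order, skipping any already collected."""
    seen = set(evidence)
    for hit in hits:
        if hit not in seen:
            evidence.append(hit)
            seen.add(hit)
    return evidence

round_one = ["solar", "wind", "solar"]
print(list(dict.fromkeys(round_one)))  # ['solar', 'wind']

evidence = merge_evidence([], round_one)
evidence = merge_evidence(evidence, ["wind", "rag"])
print(evidence)  # ['solar', 'wind', 'rag']
```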

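```python
def node_math(state: State, model) -> str:
    """Have the LLM extract an arithmetic expression, then evaluate it with safe_eval_math."""
    prompt = "Extract a single arithmetic expression from this task. Return only the expression.\n" + state.task
    expr = query_llm(model, prompt)
    # Keep only arithmetic characters, trim surrounding whitespace (leading spaces would make
    # ast.parse fail), and read '^' as exponentiation rather than Python's XOR.
    expr = "".join(ch for ch in expr if ch in "0123456789+-*/().%^ ").strip().replace("^", "**")
    try:
        value = safe_eval_math(expr)
        state.scratch.append(f"MATH: {expr} = {value}")
    except Exception as e:
        state.scratch.append(f"MATH ERROR: {expr} ({e})")
    return "route"
```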
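The character filter is what turns a chatty model reply into something `ast.parse` can read, and it is worth seeing where it helps and where it does not. The sketch below wraps the filter in a hypothetical `sanitize_expression` function. Mapping `^` to `**` is an assumption about what the model means: in Python `^` is bitwise XOR, which the math whitelist rejects anyway. Digits from surrounding prose survive the filter, so a reply like "Step 1: 5*7" still yields an unparseable string.

```python
ALLOWED_CHARS = set("0123456789+-*/().%^ ")

def sanitize_expression(reply: str) -> str:
    """Keep only arithmetic characters, trim the ends, and treat '^' as a power operator."""
    kept = "".join(ch for ch in reply if ch in ALLOWED_CHARS).strip()
    return kept.replace("^", "**")

print(sanitize_expression("Expression: `5*7`"))  # 5*7
print(sanitize_expression("2^10"))               # 2**10
print(sanitize_expression("Step 1: 5*7"))        # 1 5*7  (not a valid expression)
```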

```python
def node_write(state: State, model) -> str:
    """Draft the answer from the numbered evidence and the latest scratchpad notes."""
    evidence = "\n".join(f"[{i + 1}] {e}" for i, e in enumerate(state.evidence))
    notes = "\n".join(state.scratch[-5:])
    prompt = f"""Compose the final answer.
Task: {state.task}
Use the evidence and math results below, citing inline as [1], [2], etc.
Evidence:
{evidence}
Notes:
{notes}
Provide a concise, well-structured response."""
    draft = query_llm(model, prompt, temperature=0.3)
    state.result = draft
    state.scratch.append("DRAFT:\n" + draft)
    return "critic"
```
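The writer numbers the evidence so that the model can cite it inline. Because nothing checks those citations afterwards, a cheap post-check is to look for numbers that point past the end of the evidence list. In the sketch below, `format_evidence` reproduces the prompt's numbering, while `invalid_citations` is a hypothetical addition that the article does not include.

```python
import re
from typing import List

def format_evidence(evidence: List[str]) -> str:
    """Number the evidence the way node_write's prompt does: [1], [2], and so on."""
    return "\n".join(f"[{i + 1}] {e}" for i, e in enumerate(evidence))

def invalid_citations(draft: str, n_evidence: int) -> List[int]:
    """Citation numbers in the draft that have no matching evidence line."""
    cited = {int(num) for num in re.findall(r"\[(\d+)\]", draft)}
    return sorted(i for i in cited if not 1 <= i <= n_evidence)

print(format_evidence(["solar fact", "wind fact"]))
# [1] solar fact
# [2] wind fact
print(invalid_citations("Solar is about 20% [1]; wind is near 35% [2][3].", 2))  # [3]
```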

```python
def node_critic(state: State, model) -> str:
    """Review the draft once; adopt the critic's rewrite unless it approves with 'OK'."""
    prompt = f"""Review and enhance the answer for accuracy, completeness, and clarity.
If improvements are needed, return the revised answer; otherwise, reply with 'OK'.
Answer:
{state.result}
Criteria:
{state.plan}"""
    critique = query_llm(model, prompt)
    # Very short replies (e.g. "OK." or a brief approval) are treated as approval, not as a new answer.
    if critique.strip().upper() != "OK" and len(critique) > 30:
        state.result = critique.strip()
        state.scratch.append("REVISED ANSWER")
    state.done = True
    return "end"
```
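The critic's acceptance rule is easy to misread, so the sketch below restates it as a pure function (the name `accept_revision` is hypothetical) and runs it on a few replies. The 30-character threshold is what keeps a short approval such as "OK, the answer looks good." from overwriting the draft; the trade-off is that a very short genuine correction is discarded too.

```python
def accept_revision(critique: str, min_length: int = 30) -> bool:
    """node_critic's rule: use the critic's text unless it is exactly 'OK' or too short."""
    return critique.strip().upper() != "OK" and len(critique) > min_length

print(accept_revision(" ok \n"))                        # False
print(accept_revision("OK, the answer looks good."))    # False (26 characters)
print(accept_revision("Answer: 35 [1]."))              # False (a short real fix is dropped too)
print(accept_revision("Solar averages about a 20% capacity factor [1]; onshore wind about 35% [2]."))  # True
```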

Graph Execution and Workflow Control

All node functions are registered in a dictionary for easy lookup. The run_graph function orchestrates the execution, cycling through nodes based on their returned labels until the task is complete or a maximum step count is reached. An ASCII diagram illustrates the flow, showing how the agent loops between research and math nodes before finalizing the output with writing and critique.

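```python
NODES: Dict[str, Callable[[State, Any], str]] = {
    "plan": node_plan,
    "route": node_route,
    "research": node_research,
    "math": node_math,
    "write": node_write,
    "critic": node_critic,
}


def run_graph(task: str, api_key: str) -> State:
    """Start at 'plan' and follow the label each node returns until done, 'end', or max_steps."""
    model = initialize_model(api_key)
    state = State(task=task)
    current_node = "plan"
    max_steps = 12
    while not state.done and state.step < max_steps:
        state.step += 1
        next_node = NODES[current_node](state, model)
        if next_node == "end":
            break
        current_node = next_node
    return state


def ascii_workflow():
    return """
START -> plan -> route -> (research <-> route) & (math <-> route) -> write -> critic -> END
"""
```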
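The control loop in `run_graph` does not depend on Gemini at all: it only dispatches on the string each node returns. The sketch below runs the same loop with scripted nodes in place of LLM calls, which makes the visiting order and the `max_steps` cutoff easy to check. `ToyState`, `visit`, `toy_route`, `toy_critic`, `TOY_NODES`, and `run` are hypothetical names for this illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class ToyState:
    # Cut-down State: just enough for the control loop.
    task: str
    scratch: List[str] = field(default_factory=list)
    step: int = 0
    done: bool = False

def visit(name: str, next_label: str) -> Callable[[ToyState], str]:
    """Make a node that logs its name and returns a fixed next label."""
    def node(state: ToyState) -> str:
        state.scratch.append(name)
        return next_label
    return node

def toy_route(state: ToyState) -> str:
    # Scripted router: research once, then math once, then write.
    state.scratch.append("route")
    if "research" not in state.scratch:
        return "research"
    if "math" not in state.scratch:
        return "math"
    return "write"

def toy_critic(state: ToyState) -> str:
    state.scratch.append("critic")
    state.done = True
    return "end"

TOY_NODES: Dict[str, Callable[[ToyState], str]] = {
    "plan": visit("plan", "route"),
    "route": toy_route,
    "research": visit("research", "route"),
    "math": visit("math", "route"),
    "write": visit("write", "critic"),
    "critic": toy_critic,
}

def run(nodes: Dict[str, Callable[[ToyState], str]], state: ToyState,
        start: str = "plan", max_steps: int = 12) -> ToyState:
    """Same dispatch loop as run_graph, minus the model argument."""
    current = start
    while not state.done and state.step < max_steps:
        state.step += 1
        next_node = nodes[current](state)
        if next_node == "end":
            break
        current = next_node
    return state

final = run(TOY_NODES, ToyState(task="demo"))
print(" -> ".join(final.scratch))
# plan -> route -> research -> route -> math -> route -> write -> critic
```

A node that always returns its own label stops after `max_steps` iterations with `done` still `False`, which is the safety net the step budget provides.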

Running the Agent: Entry Point

The main program reads the Gemini API key from the GEMINI_API_KEY environment variable, falling back to a getpass prompt so the key is not echoed, asks the user for a task (with a default if the input is empty), and runs the graph. It times the run, prints the workflow diagram and the final answer, and then prints the supporting evidence and the last five scratchpad notes for transparency and traceability.

```python
if __name__ == "__main__":
    api_key = os.getenv("GEMINI_API_KEY") or getpass.getpass("🔐 Enter your GEMINI_API_KEY: ")
    user_task = input("📝 Please enter your task: ").strip() or "Compare solar and wind energy reliability; calculate 5*7."

    start_time = time.time()
    final_state = run_graph(user_task, api_key)
    duration = time.time() - start_time

    print("\n=== WORKFLOW ===")
    print(ascii_workflow())
    print(f"\n✅ Completed in {duration:.2f} seconds:\n{final_state.result}\n")
    print("---- Supporting Evidence ----")
    print("\n".join(final_state.evidence))
    print("\n---- Recent Scratchpad Notes ----")
    print("\n".join(final_state.scratch[-5:]))
```

Summary: Harnessing Graph-Oriented AI for Structured Reasoning

This example shows how a graph-based design puts explicit control flow around an inherently probabilistic language model. The planner breaks down the task, the router chooses between research and computation, and the critic makes a single review pass over the draft for accuracy and clarity. Gemini provides the reasoning, while the graph structure supplies modularity, safe tool use, and transparent state management. The same architecture can be extended with custom tool integrations, persistent multi-turn memory, or parallel node execution.