Zhipu AI has launched ComputerRL, a framework for training AI agents to operate and interact with complex desktop environments. It targets a fundamental obstacle in agent design: the mismatch between automated agents and the human-centric graphical user interfaces (GUIs) they must navigate. By combining programmatic API calls with direct GUI manipulation, ComputerRL aims to make desktop automation more efficient and adaptable, a step toward autonomous computer agents.

Integrating APIs and GUIs: A New Approach to Human-Computer Interaction

Conventional AI agents that rely solely on GUI interactions are limited by interfaces designed for human users: every task has to be carried out through mouse clicks, scrolling, and keystrokes, which makes execution slow and inefficient. ComputerRL introduces an API-GUI hybrid model that combines the accuracy and speed of API-driven commands with the versatility of GUI-based controls. Agents use APIs for tasks that benefit from direct programmatic access and fall back to GUI operations when APIs are unavailable or insufficient.
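The routing idea can be illustrated with a small sketch. This is not ComputerRL's actual interface: the `HybridExecutor` class, the `set_cell` API, and the GUI action strings are hypothetical names chosen for illustration. The sketch only shows the dispatch rule described above, where a step goes through an API when one is registered and otherwise falls back to a sequence of GUI actions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class HybridExecutor:
    """Hypothetical dispatcher: prefer a registered API, else replay GUI actions."""
    apis: Dict[str, Callable[..., str]] = field(default_factory=dict)
    gui_log: List[str] = field(default_factory=list)

    def register_api(self, name: str, fn: Callable[..., str]) -> None:
        self.apis[name] = fn

    def run(self, step: str, gui_fallback: List[str], **kwargs) -> str:
        # API path: a single programmatic call completes the step.
        if step in self.apis:
            return self.apis[step](**kwargs)
        # GUI path: the step costs one low-level action per click or keystroke.
        for action in gui_fallback:
            self.gui_log.append(action)
        return f"gui:{len(gui_fallback)} actions"


executor = HybridExecutor()
executor.register_api("set_cell", lambda cell, value: f"api:set {cell}={value}")

# An API exists for this step, so the GUI fallback is never used.
api_result = executor.run(
    "set_cell",
    gui_fallback=["click(120, 80)", "type('42')", "press('Enter')"],
    cell="A1",
    value=42,
)

# No API is registered for this step, so the GUI actions are replayed.
gui_result = executor.run("open_menu", gui_fallback=["click(10, 10)", "click(10, 40)"])
```

In this toy setup the API path finishes in one call, while the GUI path needs one action per interaction, which is the efficiency gap the hybrid model is meant to close.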

One of the framework’s standout features is automated API generation powered by large language models (LLMs). Users supply example tasks, and the system analyzes the requirements, implements APIs with appropriate Python libraries, and produces test cases for them. Automating this work lets the API set cover a broad range of application functionality, which shortens agent workflows and improves performance. For example, APIs for popular Ubuntu applications such as GIMP and LibreOffice let agents carry out complex operations like advanced image editing or document formatting in significantly fewer steps than GUI-only methods.

Robust and Scalable Infrastructure for Reinforcement Learning

Training AI agents to operate desktops at scale has traditionally been hampered by the inefficiency of virtualized environments. ComputerRL addresses this challenge through a distributed reinforcement learning (RL) architecture built on Docker containers and gRPC communication, enabling thousands of Ubuntu virtual machines to run concurrently. This infrastructure supports benchmarks like AgentBench and overcomes previous limitations related to resource consumption and network latency.

Key innovations include lightweight virtual machine deployment using qemu-in-docker, multi-node clustering for horizontal scalability, and a user-friendly web interface for real-time monitoring. Integrated with the AgentRL framework, this setup facilitates fully asynchronous training by decoupling data collection from model updates, thereby maximizing throughput. Features such as dynamic batch sizing and mechanisms to reduce off-policy bias allow for prolonged training sessions without performance degradation.
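The decoupling of data collection from model updates can be sketched with standard-library threads and a queue. This is a toy stand-in, not the AgentRL implementation: the real system runs environments in Docker containers and communicates over gRPC, and the function names, the fake trajectories, and the batch cap below are all assumptions made for illustration.

```python
import queue
import threading


def collect_rollouts(worker_id, num_episodes, buffer):
    """Stand-in for an environment worker: pushes trajectories as they finish."""
    for episode in range(num_episodes):
        trajectory = {"worker": worker_id, "episode": episode, "reward": float(episode % 2)}
        buffer.put(trajectory)


def train(buffer, total, max_batch_size):
    """Consume trajectories as they arrive instead of waiting for all workers.

    Each batch takes whatever is ready, up to max_batch_size, which is a
    simple form of dynamic batch sizing. Returns the batch sizes used.
    """
    batch_sizes = []
    consumed = 0
    while consumed < total:
        batch = [buffer.get()]  # block until at least one trajectory is ready
        while len(batch) < max_batch_size:
            try:
                batch.append(buffer.get_nowait())
            except queue.Empty:
                break
        consumed += len(batch)
        batch_sizes.append(len(batch))  # a real trainer would update the model here
    return batch_sizes


buffer = queue.Queue()
workers = [threading.Thread(target=collect_rollouts, args=(i, 5, buffer)) for i in range(4)]
for worker in workers:
    worker.start()

batch_sizes = train(buffer, total=20, max_batch_size=8)

for worker in workers:
    worker.join()
```

Because the trainer never waits for a full synchronized round of rollouts, slow environments do not stall model updates. The cost is that some trajectories come from slightly older policies, which is why the article mentions mechanisms to reduce off-policy bias.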

Entropulse: Sustaining Exploration Through Alternating Training Cycles

One of the persistent challenges in reinforcement learning is entropy collapse, where agents gradually lose their exploratory behavior during extended training. ComputerRL counters this with Entropulse, a technique that alternates between reinforcement learning phases and supervised fine-tuning (SFT) on successful rollout trajectories. This cyclical approach rejuvenates entropy levels, maintaining agent adaptability and driving continuous improvement.
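To make entropy collapse concrete, the sketch below computes the Shannon entropy of a policy's action distribution and filters rollouts down to successful trajectories for an SFT phase. The probability values, the rollout records, and the function names are illustrative assumptions; the article does not specify how ComputerRL measures entropy or selects data beyond using successful rollouts.

```python
import math


def policy_entropy(probs):
    """Shannon entropy (in nats) of a discrete action distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_sft_data(rollouts):
    """Keep only the trajectories of rollouts that completed the task."""
    return [r["trajectory"] for r in rollouts if r["success"]]


# Early in training the policy spreads probability across actions.
early = policy_entropy([0.25, 0.25, 0.25, 0.25])

# After long RL training it may put almost all mass on one action.
late = policy_entropy([0.97, 0.01, 0.01, 0.01])

# Hypothetical rollout records gathered during an RL phase.
rollouts = [
    {"trajectory": ["open_calc", "set_cell"], "success": True},
    {"trajectory": ["click", "click", "click"], "success": False},
    {"trajectory": ["run_terminal", "save_report"], "success": True},
]
sft_data = select_sft_data(rollouts)
```

A uniform distribution over four actions has entropy ln 4, while the peaked distribution is far lower; a drop of this kind is what signals lost exploration. The filtered trajectories are the sort of data an SFT phase would train on before the next RL phase begins.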

Training begins with behavior cloning (BC) on diverse trajectories generated by multiple LLMs, which gives the agent a broad starting point. It then applies step-level Group Relative Policy Optimization (GRPO) guided by rule-based rewards that assign positive feedback only to correct, contributory actions within successful task executions. Entropulse builds on this by fine-tuning the agent on a curated set of high-quality, varied data from previous rollouts, preventing premature convergence and allowing training to continue productively over many more iterations.
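The reward rule and the group-relative normalization can be sketched as follows. This uses the commonly described GRPO advantage, each reward minus the group mean divided by the group standard deviation, applied to individual steps. Whether ComputerRL normalizes in exactly this way is an assumption, and the function names and sample data are hypothetical.

```python
from statistics import mean, pstdev


def step_rewards(trajectory_success, step_valid):
    """Rule-based reward: 1 for a valid step in a successful trajectory, else 0."""
    return [1.0 if trajectory_success and valid else 0.0 for valid in step_valid]


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and spread of its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Two sampled trajectories for the same task: (task succeeded?, per-step validity).
group = [
    (True, [True, True, False]),
    (False, [True, True]),
]

# Flatten to step level so that every step becomes its own training sample.
rewards = [r for success, valid in group for r in step_rewards(success, valid)]
advantages = group_relative_advantages(rewards)
```

Steps that contributed to a successful run receive positive advantages, while invalid steps and all steps from the failed run receive negative ones, so the policy update favors actions that appear in successful executions.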

Performance Evaluation on the OSWorld Benchmark

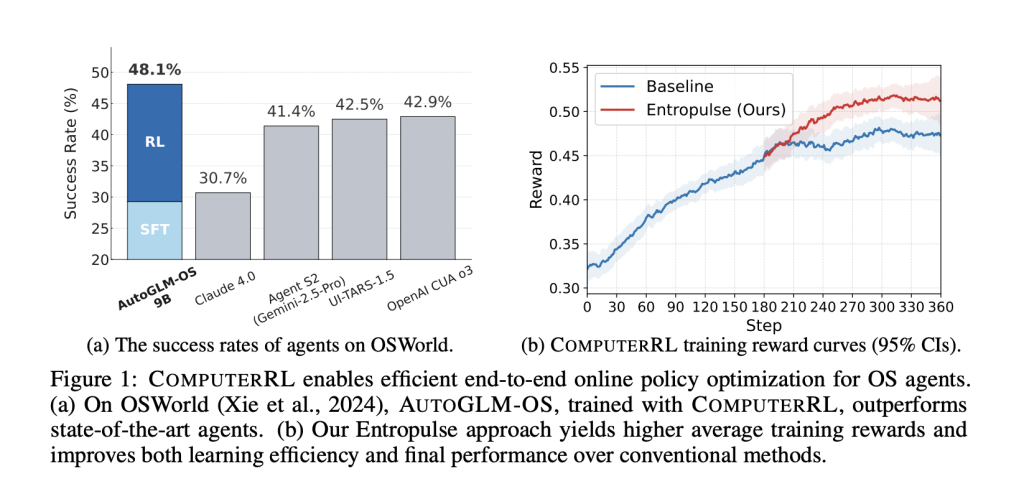

ComputerRL was tested using open-source models such as GLM-4-9B-0414 and Qwen2.5-14B, which were adapted into AutoGLM-OS variants. On the OSWorld benchmark, a comprehensive test suite assessing agent performance in interactive Ubuntu environments, AutoGLM-OS-9B achieved a success rate of 48.1%. This outperformed leading proprietary models including OpenAI’s CUA o3 at 42.9% and Claude 4.0 at 30.7%. The framework also demonstrated strong results on the OSWorld-Verified subset, scoring 47.3%.

Detailed ablation studies underscored the effectiveness of the API-GUI hybrid approach, which boosted success rates by 134% compared to GUI-only baselines, especially in office productivity and professional application scenarios. Training phase analyses revealed that behavior cloning established a 31.9% baseline, with reinforcement learning phases enhanced by Entropulse pushing performance to 45.8%. Entropy monitoring confirmed Entropulse’s critical role in sustaining learning momentum.

Practical case studies highlighted the framework’s real-world utility, such as automating the creation of sales summary tables in LibreOffice Calc and generating comprehensive system diagnostics through Terminal commands. Nonetheless, error analysis identified challenges including visual perception inaccuracies (accounting for 25.8% of failures) and difficulties coordinating actions across multiple applications (34.4%), indicating areas for future refinement.

Advancing Toward Fully Autonomous Desktop Agents

Looking forward, ComputerRL lays the groundwork for more sophisticated agents capable of managing dynamic, multi-step tasks over extended periods. Future enhancements may involve broadening the diversity of training data, incorporating multimodal sensory inputs such as voice and vision, and developing hierarchical planning mechanisms to improve decision-making complexity. Ensuring safety and reliability will be paramount, with anticipated features including permission controls and action validation protocols to guarantee responsible and aligned automation in real-world deployments.

By combining scalable reinforcement learning with an API-GUI interaction model, ComputerRL marks a significant step in the development of AI-driven desktop agents. As open-source models like AutoGLM-OS continue to improve, the framework points toward versatile, general-purpose agents that can work within everyday computing environments.