Zhipu AI has unveiled GLM-4.6, the latest advancement in its GLM model lineup, designed to enhance agent-driven workflows, support extensive context understanding, and improve performance on practical coding challenges. This iteration significantly expands the input capacity to 200,000 tokens and allows for a maximum output length of 128,000 tokens. Additionally, it optimizes token efficiency for real-world applications and offers open-source weights for users who prefer local deployment.

Key Innovations in GLM-4.6

- Expanded Context and Output Capacity: The model now processes up to 200K tokens in input and generates outputs up to 128K tokens, enabling more complex and lengthy interactions.

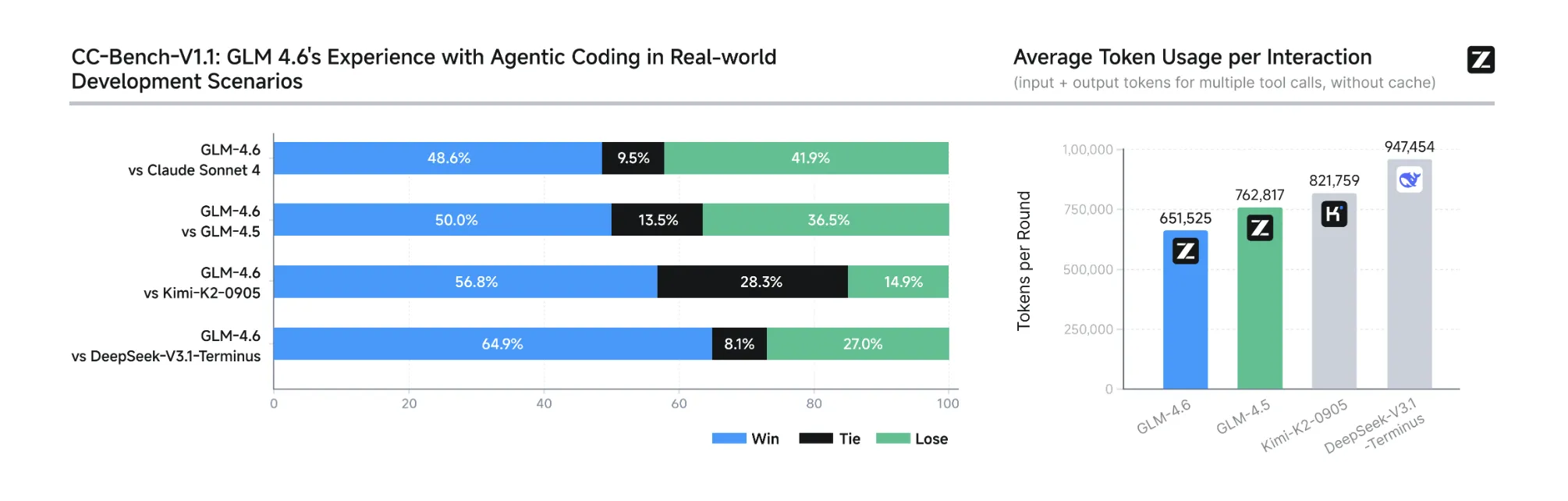

- Enhanced Coding Performance: On the extended CC-Bench, a benchmark of multi-turn coding tasks evaluated by humans in isolated Docker environments, GLM-4.6 achieves a 48.6% win rate against Claude Sonnet 4, which is close to parity. It also completes tasks using approximately 15% fewer tokens than its predecessor, GLM-4.5. The task prompts and agent trajectories are publicly available for inspection.

- Benchmark Improvements: Zhipu AI reports consistent performance gains over GLM-4.5 across eight public benchmarks. While GLM-4.6 matches Claude Sonnet 4 on several of them, it still trails Claude Sonnet 4.5 on coding-specific tasks, an important consideration for developers selecting models.

- Integration and Accessibility: GLM-4.6 is accessible through the Z.ai API and OpenRouter. It seamlessly integrates with popular coding assistants such as Claude Code, Cline, Roo Code, and Kilo Code. Existing users of Coding Plan can upgrade simply by switching the model identifier to `glm-4.6`.

- Open-Source Licensing and Model Details: The model’s weights are openly available under the MIT license on Hugging Face, featuring a 355 billion parameter Mixture of Experts (MoE) architecture with BF16/F32 precision tensors. Note that the total parameter count does not equate to active parameters per token, and specific active parameter figures for GLM-4.6 are not disclosed.

- Support for Local Deployment: GLM-4.6 supports local inference through frameworks like vLLM and SGLang. The weights can be downloaded from Hugging Face and ModelScope, with community-driven quantization efforts enabling efficient operation on workstation-grade hardware.
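
As a rough illustration of the API access described in the list above, the sketch below builds an OpenAI-style chat-completions request for GLM-4.6. The endpoint URL and the `z-ai/glm-4.6` model slug are assumptions based on OpenRouter's usual conventions, not details from the announcement, so check the provider's documentation before relying on them. The helper name `build_chat_request` is hypothetical, and the request is only constructed, never sent.

```python
import json
import urllib.request

# Assumed values for illustration; neither appears in the announcement.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "z-ai/glm-4.6"  # assumed OpenRouter slug; verify before use


def build_chat_request(prompt, api_key, max_tokens=1024):
    """Build (but do not send) a chat-completions HTTP request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("Write a binary search in Python.", api_key="YOUR_KEY")
print(req.get_method(), req.full_url)
print(json.loads(req.data)["model"])
```

Passing the result to `urllib.request.urlopen(req)` would send it, which requires a valid API key.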

Overview of GLM-4.6’s Impact

GLM-4.6 represents a meaningful evolution in large language model capabilities, particularly with its 200K-token context window and improved token efficiency, which reduces consumption by roughly 15% on CC-Bench tasks compared to GLM-4.5. Its competitive performance against Claude Sonnet 4, combined with open weights and local deployment options, makes it a versatile choice for developers and researchers working on coding and reasoning applications.

Frequently Asked Questions

1. What are the maximum input and output token limits for GLM-4.6?

GLM-4.6 supports an input context length of up to 200,000 tokens and can generate outputs as long as 128,000 tokens.
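
A minimal sketch of how a client might check a request against these two limits before sending it. It assumes the limits apply independently, since the article does not say whether generated tokens count against the 200K window, and it expects the caller to supply token counts from a tokenizer. The function name `check_budget` is hypothetical.

```python
MAX_INPUT_TOKENS = 200_000   # input context limit stated in the article
MAX_OUTPUT_TOKENS = 128_000  # maximum output length stated in the article


def check_budget(input_tokens, requested_output_tokens):
    """Return a list of limit violations; an empty list means the request fits."""
    problems = []
    if input_tokens > MAX_INPUT_TOKENS:
        over = input_tokens - MAX_INPUT_TOKENS
        problems.append(f"input exceeds limit by {over} tokens")
    if requested_output_tokens > MAX_OUTPUT_TOKENS:
        over = requested_output_tokens - MAX_OUTPUT_TOKENS
        problems.append(f"requested output exceeds limit by {over} tokens")
    return problems


print(check_budget(150_000, 32_000))   # fits both limits
print(check_budget(210_000, 130_000))  # violates both limits
```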

2. Are the model weights publicly available, and under what terms?

Yes, the model weights are openly accessible under the MIT license on Hugging Face. The model features a 355 billion parameter Mixture of Experts (MoE) architecture with BF16/F32 tensor precision.
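
To give a sense of scale, the sketch below estimates the storage needed for the weights alone at different precisions. Only the 355 billion total and the BF16/F32 formats come from the article; the INT8 and INT4 rows are hypothetical quantization levels added for comparison. The estimate ignores the KV cache, activations, and quantization metadata, so real memory use will be higher. Because this is an MoE model, all experts must be stored even though only a subset is active per token.

```python
TOTAL_PARAMS = 355e9  # total parameter count from the article

# Bytes per parameter. INT8 and INT4 are hypothetical quantization
# levels for comparison; the article only mentions BF16/F32.
BYTES_PER_PARAM = {"F32": 4.0, "BF16": 2.0, "INT8": 1.0, "INT4": 0.5}


def weight_size_gb(precision, params=TOTAL_PARAMS):
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[precision] / 1e9


for name in BYTES_PER_PARAM:
    print(f"{name}: {weight_size_gb(name):.1f} GB")
```

At BF16 this comes to roughly 710 GB for the weights alone, which is why quantization matters for workstation-class hardware.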

3. How does GLM-4.6 perform compared to GLM-4.5 and Claude Sonnet 4 in practical tasks?

On the extended CC-Bench, GLM-4.6 uses about 15% fewer tokens than GLM-4.5 and achieves a near-equal win rate of 48.6% against Claude Sonnet 4.
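
As a back-of-the-envelope illustration of the token saving, the sketch below applies the reported 15% reduction to a made-up GLM-4.5 baseline. The figure is an aggregate over CC-Bench tasks, so treating it as a fixed ratio for any given workload is an assumption, and the baseline token count is hypothetical.

```python
TOKEN_REDUCTION = 0.15  # "about 15% fewer tokens" than GLM-4.5, per the article


def estimated_glm46_tokens(glm45_tokens):
    """Scale a GLM-4.5 token count by the reported average reduction."""
    return round(glm45_tokens * (1 - TOKEN_REDUCTION))


baseline = 1_000_000  # hypothetical GLM-4.5 usage for some workload
estimate = estimated_glm46_tokens(baseline)
print(f"Estimated GLM-4.6 usage: {estimate} tokens")
print(f"Estimated saving: {baseline - estimate} tokens")
```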

4. Is it possible to run GLM-4.6 on local machines?

Absolutely. The model weights are available for download on Hugging Face and ModelScope, with support for local inference through vLLM and SGLang. Community efforts are underway to optimize the model for workstation-level hardware via quantization.
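
One possible starting point for local serving with vLLM is sketched below. It only assembles a `vllm serve` command line and does not launch anything. The repository ID `zai-org/GLM-4.6`, the GPU count, and the context length are assumptions for illustration and should be checked against the model card and your hardware. The helper name `vllm_serve_command` is hypothetical.

```python
import shlex

MODEL_REPO = "zai-org/GLM-4.6"  # assumed Hugging Face repo ID; verify before use


def vllm_serve_command(model=MODEL_REPO, tensor_parallel_size=8,
                       max_model_len=200_000):
    """Assemble (but do not run) a vLLM server command as an argument list."""
    return [
        "vllm", "serve", model,
        # Number of GPUs to shard the model across (placeholder value).
        "--tensor-parallel-size", str(tensor_parallel_size),
        # Maximum context length the server will accept.
        "--max-model-len", str(max_model_len),
    ]


print(shlex.join(vllm_serve_command()))
```

Lowering `max_model_len` reduces the memory reserved for the KV cache, which may help on smaller setups.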