Despite advancements in AI models designed for extended context understanding, even the most robust systems struggle to accurately track objects and maintain counts throughout lengthy, complex video streams. The forthcoming breakthrough in this domain will likely stem from models that anticipate future events and selectively retain only the most unexpected and significant occurrences, rather than simply increasing computational power or expanding context windows. Researchers from New York University and Stanford have introduced Cambrian-S, a spatially grounded multimodal large language model tailored for video analysis, alongside the VSI Super benchmark and the extensive VSI 590K dataset, both crafted to evaluate and enhance spatial supersensing capabilities in prolonged video sequences.

Advancing Beyond Language: The Evolution to Spatial Supersensing

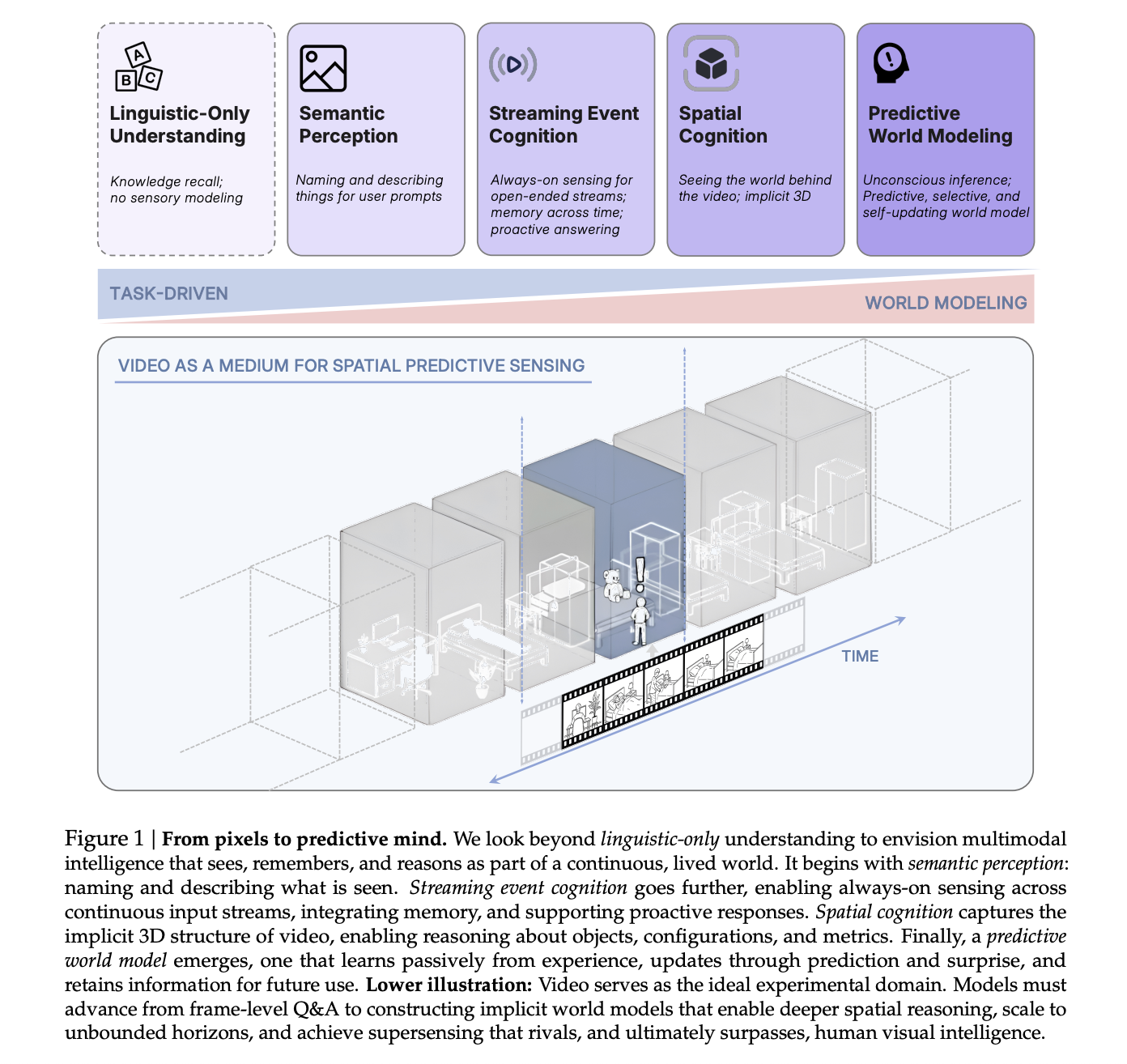

The team conceptualizes spatial supersensing as an advanced stage of AI cognition that transcends pure linguistic reasoning. This progression encompasses four key levels: semantic perception, continuous event understanding, implicit three-dimensional spatial awareness, and predictive modeling of the environment.

Current video multimodal large language models (MLLMs) typically sample sparse frames and lean on language priors. They often answer benchmark queries from captions or isolated frames rather than from continuous visual streams. Diagnostic evaluations show that many popular video benchmarks can be solved with only a few frames or even text-only inputs, so they do not rigorously test genuine spatial sensing.

Cambrian-S is designed to operate at the upper echelons of this hierarchy, requiring the model to retain spatial configurations over time, reason about object positions and quantities, and forecast transformations within a three-dimensional space.

Introducing VSI Super: A Rigorous Benchmark for Continuous Spatial Awareness

To highlight the limitations of existing systems in spatial supersensing, the researchers developed VSI Super, a comprehensive two-part benchmark that operates on arbitrarily long indoor video sequences.

VSI Super Recall (VSR) tests long-horizon spatial observation and memory. Human annotators edit indoor walkthrough videos from datasets such as ScanNet, ScanNet++, and ARKitScenes, inserting an unusual object, such as a miniature robot toy, into four frames at different spatial locations. These edited clips are concatenated into streams lasting up to four hours. The model must recall, in order, the locations where the object appeared: a demanding “needle in a haystack” task that requires sequential memory.

VSI Super Count (VSC) assesses the model’s ability to maintain continuous object counting despite changes in viewpoint and environment. This benchmark stitches together room tour videos from VSI Bench and asks the model to tally the total number of a specified object across multiple rooms. The model must navigate viewpoint shifts, revisit scenes, and handle transitions while keeping an accurate cumulative count. Performance is measured using mean relative accuracy over durations ranging from 10 to 120 minutes.
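Mean relative accuracy rewards numeric answers that land close to the ground truth across a range of tolerances instead of demanding an exact match. The sketch below assumes the definition used in the earlier VSI Bench work: the fraction of ten thresholds θ, from 0.50 to 0.95 in steps of 0.05, for which the relative error falls below 1 − θ. The function name is hypothetical.

```python
def mean_relative_accuracy(pred: float, target: float) -> float:
    """Score a numeric answer, assuming the VSI Bench style MRA definition.

    For each threshold theta in {0.50, 0.55, ..., 0.95}, the answer counts as
    correct if |pred - target| / |target| < 1 - theta. MRA is the fraction of
    thresholds passed, so it lies in [0, 1].
    """
    if target == 0:
        raise ValueError("MRA is undefined for a zero ground truth")
    rel_err = abs(pred - target) / abs(target)
    # Integer percentages avoid floating-point drift in the threshold grid.
    tolerances = [(100 - k) / 100 for k in range(50, 100, 5)]  # 0.50 down to 0.05
    hits = sum(1 for tol in tolerances if rel_err < tol)
    return hits / len(tolerances)


# A count of 12 against a true count of 10 is a 20% relative error.
print(mean_relative_accuracy(12, 10))  # 0.6
```

Averaging over thresholds gives partial credit: an answer that is off by 20% still passes the six loosest tolerances, while an exact count passes all ten.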

When evaluated in a streaming setup at one frame per second, Cambrian-S 7B’s accuracy on VSR declines sharply, from 38.3% at 10 minutes to 6.0% at 60 minutes, and drops to zero beyond 60 minutes. VSC accuracy stays near zero at every duration. Gemini 2.5 Flash also degrades on VSI Super despite its long context window, which shows that enlarging the context alone is not enough for sustained spatial sensing.
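A back-of-the-envelope calculation shows why a longer context window alone scales poorly: at one frame per second, the number of frames, and therefore the number of visual tokens a model must hold, grows linearly with stream length. The per-frame token count below is a hypothetical placeholder, not a figure reported for Cambrian-S or Gemini.

```python
FPS = 1                # streaming rate used in the VSI Super evaluation
TOKENS_PER_FRAME = 64  # hypothetical number of visual tokens kept per frame


def frames_in_stream(minutes: int, fps: int = FPS) -> int:
    """Number of frames sampled from a stream of the given length."""
    return minutes * 60 * fps


def visual_tokens(minutes: int, tokens_per_frame: int = TOKENS_PER_FRAME) -> int:
    """Visual tokens needed to keep every sampled frame in context."""
    return frames_in_stream(minutes) * tokens_per_frame


for minutes in (10, 60, 120, 240):
    print(f"{minutes:>3} min: {frames_in_stream(minutes):>6} frames, "
          f"{visual_tokens(minutes):>7} visual tokens")
```

Even at a modest 64 tokens per frame, a four-hour stream needs 921,600 visual tokens before any text is added.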

VSI 590K: A Comprehensive Dataset for Spatially Grounded Instruction

To explore whether expanding training data could enhance spatial reasoning, the researchers compiled VSI 590K, a vast spatial instruction dataset comprising 5,963 videos, 44,858 images, and 590,667 question-answer pairs sourced from ten diverse datasets.

These sources include richly annotated 3D indoor scans such as ScanNet, ScanNet++ V2, ARKitScenes, S3DIS, and Aria Digital Twin; synthetic environments from ProcTHOR and Hypersim; and pseudo-annotated web data like YouTube RoomTour and robotics datasets including Open X Embodiment and AgiBot World.

The dataset categorizes questions into 12 spatial types, including object counting, absolute and relative distances, object and room dimensions, and order of appearance. Crucially, these questions are generated from 3D annotations or reconstructions, ensuring that spatial relationships are grounded in geometric reality rather than textual inference. Ablation studies reveal that real annotated videos contribute the most significant improvements on VSI Bench, followed by simulated data, with pseudo-annotated images providing additional but smaller gains. Training on the full dataset mixture yields the best spatial reasoning performance.
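Generating questions from 3D annotations means the answer is computed from geometry rather than inferred from text. The sketch below shows the idea for two of the twelve question types, object counting and absolute distance, under a hypothetical annotation format (a label plus a 3D center in meters). The actual VSI 590K pipeline, question templates, and distance definition may differ, for example by measuring between object surfaces rather than centers.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class Object3D:
    label: str
    center: tuple[float, float, float]  # hypothetical: 3D center in meters, scene frame


def counting_questions(objects: list[Object3D]) -> list[tuple[str, int]]:
    """One counting question per object category, answered from the annotations."""
    counts = Counter(obj.label for obj in objects)
    return [(f"How many {label}(s) are in this room?", n)
            for label, n in sorted(counts.items())]


def distance_question(a: Object3D, b: Object3D) -> tuple[str, float]:
    """Absolute-distance question answered with the Euclidean distance between centers."""
    dist = math.dist(a.center, b.center)
    return (f"How far apart are the {a.label} and the {b.label}, in meters?",
            round(dist, 2))


scene = [
    Object3D("chair", (0.0, 0.0, 0.0)),
    Object3D("chair", (1.0, 0.0, 0.0)),
    Object3D("table", (3.0, 4.0, 0.0)),
]
for question, answer in counting_questions(scene):
    print(question, answer)
print(*distance_question(scene[0], scene[2]))
```

Because the answers come straight from the annotations, a model cannot reach them from language priors alone; it has to recover the scene geometry from the video.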

The Cambrian-S Model Family: Architecture and Training Strategy

Building upon the Cambrian-1 framework, Cambrian-S employs Qwen2.5 language backbones available in 0.5B, 1.5B, 3B, and 7B parameter configurations, paired with a SigLIP2 SO400M vision encoder and a two-layer multilayer perceptron (MLP) connector.
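The data path here runs from vision encoder to MLP connector to language model: each frame's patch features are projected into the LLM's embedding space before being combined with text tokens. Below is a minimal NumPy sketch of a two-layer MLP connector. The widths (1,152 for SigLIP2 SO400M features and 3,584 for the Qwen2.5 7B hidden size), the GELU activation, the random initialization, and the 729 tokens per frame are assumptions for illustration, not details taken from the Cambrian-S release.

```python
import numpy as np

VISION_DIM = 1152  # assumed width of SigLIP2 SO400M patch features
LLM_DIM = 3584     # assumed hidden size of the Qwen2.5 7B backbone


def gelu(x: np.ndarray) -> np.ndarray:
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


class MLPConnector:
    """Two-layer MLP that maps vision tokens into the LLM embedding space."""

    def __init__(self, in_dim: int, out_dim: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        # Small random weights stand in for trained parameters.
        self.w1 = rng.normal(0.0, 0.02, (in_dim, out_dim)).astype(np.float32)
        self.b1 = np.zeros(out_dim, dtype=np.float32)
        self.w2 = rng.normal(0.0, 0.02, (out_dim, out_dim)).astype(np.float32)
        self.b2 = np.zeros(out_dim, dtype=np.float32)

    def __call__(self, vision_tokens: np.ndarray) -> np.ndarray:
        # (num_tokens, in_dim) -> (num_tokens, out_dim)
        hidden = gelu(vision_tokens @ self.w1 + self.b1)
        return hidden @ self.w2 + self.b2


connector = MLPConnector(VISION_DIM, LLM_DIM)
frame_tokens = np.random.default_rng(1).normal(size=(729, VISION_DIM)).astype(np.float32)
print(connector(frame_tokens).shape)  # (729, 3584)
```

Because the connector acts on each token independently, the per-frame token count passes through unchanged; only the feature width changes.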

The training process unfolds in four distinct phases:

- Phase 1: Vision-language alignment using image-text pairs.

- Phase 2: Image instruction tuning, enhancing the model’s ability to follow visual instructions, similar to the improved Cambrian-1 setup.

- Phase 3: Extension to video through general video instruction tuning on a 3 million sample dataset named Cambrian-S 3M.

- Phase 4: Specialized spatial video instruction tuning using a combination of VSI 590K and a subset of Phase 3 data.

On VSI Bench, Cambrian-S 7B reaches 67.5% accuracy, outperforming open-source baselines such as InternVL3.5 8B and Qwen2.5-VL 7B, and beating the proprietary Gemini 2.5 Pro by more than 16 percentage points. This spatial focus does not come at the expense of general video understanding: the model still performs strongly on benchmarks such as Perception Test and EgoSchema.

Enhancing Predictive Capabilities with Latent Frame Prediction and Surprise-Based Memory

To move beyond static context expansion, the researchers introduce a predictive sensing mechanism. They add a Latent Frame Prediction (LFP) head, a two-layer MLP that forecasts the latent representation of the next video frame in parallel with standard next-token prediction.

During Phase 4 training, the model optimizes a weighted sum of the language modeling loss and an LFP loss that combines mean squared error and cosine distance between the predicted and actual latent features. A subset of 290,000 video samples from VSI 590K, sampled at one frame per second, is reserved for this objective. In this phase, the connector, the language model, and both output heads are trained jointly, while the SigLIP vision encoder stays frozen.
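The Phase 4 objective can be written as a language modeling loss plus a weighted latent-prediction term. The NumPy sketch below treats the cosine term as a distance (1 minus cosine similarity) so that lower is better, and it assumes a ReLU activation inside the two-layer LFP head, an unweighted sum of MSE and cosine distance inside the LFP loss, and a hypothetical weight `lfp_weight=0.1`. The paper's exact activation, reduction, and weighting may differ.

```python
import numpy as np


def lfp_head(hidden, w1, b1, w2, b2):
    """Two-layer MLP: LLM hidden state -> predicted latent of the next frame."""
    return np.maximum(hidden @ w1 + b1, 0.0) @ w2 + b2  # ReLU is an assumption


def cosine_distance(a, b, eps=1e-8):
    """Row-wise 1 - cos(a, b)."""
    dot = np.sum(a * b, axis=-1)
    norms = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1)
    return 1.0 - dot / (norms + eps)


def lfp_loss(pred, target):
    """MSE plus mean cosine distance between predicted and actual frame latents."""
    mse = np.mean((pred - target) ** 2)
    return mse + np.mean(cosine_distance(pred, target))


def phase4_loss(lm_loss, pred, target, lfp_weight=0.1):
    """Language modeling loss plus a weighted latent frame prediction loss."""
    return lm_loss + lfp_weight * lfp_loss(pred, target)


rng = np.random.default_rng(0)
d_model, d_latent = 16, 8  # toy sizes for illustration
w1, b1 = rng.normal(size=(d_model, d_model)), np.zeros(d_model)
w2, b2 = rng.normal(size=(d_model, d_latent)), np.zeros(d_latent)
hidden = rng.normal(size=(4, d_model))         # hidden states at 4 frame positions
next_latents = rng.normal(size=(4, d_latent))  # encoder features of the next frames
pred = lfp_head(hidden, w1, b1, w2, b2)
print(phase4_loss(lm_loss=2.3, pred=pred, target=next_latents))
```

Combining MSE with cosine distance penalizes both the magnitude and the direction of the prediction error; the cosine part is what the inference-time surprise score reuses.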

At inference, the cosine distance between predicted and observed features serves as a “surprise” metric. Frames exhibiting low surprise are compressed before storage in long-term memory, whereas high-surprise frames are preserved in greater detail. A fixed-size memory buffer leverages this surprise score to determine which frames to consolidate or discard, ensuring that queries retrieve the most relevant visual information.
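The sketch below illustrates surprise-gated memory under several hypothetical choices: surprise is the cosine distance between the flattened predicted and observed frame latents, frames below a fixed threshold are compressed by average-pooling groups of tokens, and when the buffer exceeds its capacity the least surprising entry is evicted. The class and parameter names are illustrative; the actual Cambrian-S compression and consolidation rules may differ.

```python
from dataclasses import dataclass

import numpy as np


def frame_surprise(predicted: np.ndarray, observed: np.ndarray, eps: float = 1e-8) -> float:
    """Cosine distance between flattened predicted and observed frame latents."""
    p, o = predicted.ravel(), observed.ravel()
    return 1.0 - float(p @ o / (np.linalg.norm(p) * np.linalg.norm(o) + eps))


@dataclass
class MemoryEntry:
    t: int              # frame index in the stream
    tokens: np.ndarray  # (num_tokens, dim), possibly compressed
    surprise: float


class SurpriseMemory:
    """Fixed-capacity frame memory gated by prediction error (hypothetical design)."""

    def __init__(self, capacity: int, threshold: float, pool: int = 4):
        self.capacity = capacity    # maximum number of stored frames
        self.threshold = threshold  # surprise at or above this keeps full detail
        self.pool = pool            # tokens averaged together when compressing
        self.entries: list[MemoryEntry] = []

    def _compress(self, tokens: np.ndarray) -> np.ndarray:
        # Average-pool consecutive groups of `pool` tokens; leftover tokens are dropped.
        n = (tokens.shape[0] // self.pool) * self.pool
        return tokens[:n].reshape(-1, self.pool, tokens.shape[1]).mean(axis=1)

    def add(self, t: int, predicted: np.ndarray, observed: np.ndarray) -> float:
        s = frame_surprise(predicted, observed)
        tokens = observed if s >= self.threshold else self._compress(observed)
        self.entries.append(MemoryEntry(t, tokens, s))
        if len(self.entries) > self.capacity:
            # Evict the least surprising frame so memory size stays bounded.
            victim = min(range(len(self.entries)), key=lambda i: self.entries[i].surprise)
            self.entries.pop(victim)
        return s

    def context(self) -> np.ndarray:
        """Stored tokens in temporal order, ready to pass to the language model."""
        return np.concatenate([e.tokens for e in self.entries], axis=0)


obs = np.ones((8, 4))
mem = SurpriseMemory(capacity=2, threshold=0.5)
mem.add(0, predicted=obs, observed=obs)    # predictable: stored compressed
mem.add(1, predicted=-obs, observed=obs)   # very surprising: stored in full
orth = np.tile([1.0, -1.0], 16).reshape(8, 4)
mem.add(2, predicted=orth, observed=obs)   # surprising: stored in full, frame 0 evicted
print([e.t for e in mem.entries], mem.context().shape)  # [1, 2] (16, 4)
```

Capping the number of entries keeps the stored tokens, and therefore memory use, bounded no matter how long the stream runs.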

This surprise-driven memory system enables Cambrian-S to sustain accuracy on VSR as video length increases, all while maintaining stable GPU memory consumption. It consistently outperforms Gemini 1.5 Flash and Gemini 2.5 Flash across all tested durations, avoiding the steep performance drops seen in models relying solely on extended context windows.

For VSC, the team developed a surprise-based event segmentation approach. The model accumulates frame features in an event buffer; when a high-surprise frame signals a scene change, it summarizes the buffer into a segment-level count and resets the buffer. Summing these segment counts gives the final tally. In streaming evaluations, Gemini Live and GPT Realtime stay below 15% mean relative accuracy and fall to near zero on 120-minute streams. Cambrian-S with surprise segmentation reaches about 38% at 10 minutes and retains about 28% at 120 minutes.
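The segmentation logic can be sketched as a single pass over the stream. In this hypothetical version, a frame whose surprise meets the threshold closes the current event and starts a new one, and `count_segment` stands in for the model's per-segment counting answer; here it simply counts distinct object IDs, which a real model would have to infer from pixels.

```python
from typing import Callable, Sequence, TypeVar

Frame = TypeVar("Frame")


def segment_counts(
    frames: Sequence[Frame],
    surprises: Sequence[float],
    threshold: float,
    count_segment: Callable[[list[Frame]], int],
) -> list[int]:
    """Split the stream at high-surprise frames and count objects per segment."""
    counts: list[int] = []
    buffer: list[Frame] = []
    for frame, s in zip(frames, surprises):
        if s >= threshold and buffer:
            # A surprising frame marks a scene change: summarize, then reset.
            counts.append(count_segment(buffer))
            buffer = []
        buffer.append(frame)
    if buffer:
        counts.append(count_segment(buffer))
    return counts


# Toy stream: each frame lists the chair IDs visible in it (hypothetical data).
frames = [{"a1"}, {"a1", "a2"}, {"b1"}, {"b1"}]
surprises = [0.1, 0.2, 0.9, 0.1]  # spike when the camera enters a new room


def distinct_chairs(segment: list[set[str]]) -> int:
    return len(set().union(*segment))


per_segment = segment_counts(frames, surprises, threshold=0.5, count_segment=distinct_chairs)
print(per_segment, sum(per_segment))  # [2, 1] 3
```

Within a segment, repeated views of the same object are counted once; the final answer is the sum across segments, so the model never has to hold the whole stream in context at once.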

Summary of Insights

- The Cambrian-S model and VSI 590K dataset demonstrate that meticulously curated spatial data combined with powerful video MLLMs can markedly enhance spatial cognition on VSI Bench, yet challenges remain on VSI Super, indicating that scaling alone cannot resolve spatial supersensing.

- VSI Super’s design, featuring arbitrarily long indoor videos, rigorously tests continuous spatial observation, recall, and counting, making it resistant to naive context window enlargement and sparse frame sampling strategies.

- Benchmark results reveal that leading models, including Gemini 2.5 Flash and Cambrian-S, experience significant performance degradation on VSI Super even when video lengths fall within their nominal context limits, exposing fundamental architectural weaknesses in current long-context multimodal systems.

- The Latent Frame Prediction-based predictive sensing module leverages next-frame prediction errors (surprise) to guide memory compression and event segmentation, delivering substantial improvements on VSI Super compared to models relying solely on extended context, while keeping GPU memory usage efficient.

- This research frames spatial supersensing as a hierarchical process from semantic perception to predictive world modeling, and argues that future video MLLMs must integrate explicit predictive objectives and surprise-driven memory mechanisms, not just larger models and datasets, to process unbounded streaming video in real-world applications.

Concluding Thoughts

Cambrian-S serves as a useful stress test for current video MLLMs: it shows that VSI Super is more than a harder benchmark, because it exposes a structural flaw in long-context architectures that still rely on reactive perception. The predictive sensing module, grounded in Latent Frame Prediction and surprise-driven memory, is a significant step because it links spatial sensing to internal world modeling instead of relying solely on scaling data and parameters. This work signals a shift from passive video interpretation toward active, predictive spatial supersensing as the next frontier in multimodal AI system design.