Can a speech enhancement system trained only on real noisy recordings separate speech from background noise without paired clean-noisy data? Researchers from Brno University of Technology and Johns Hopkins University introduce Unsupervised Speech Enhancement using Data-defined Priors (USE-DDP), a dual-stream encoder-decoder framework that decomposes any noisy audio input into two waveforms: an estimated clean speech signal and a residual noise signal. The model learns entirely from unpaired data (a clean speech corpus and, optionally, a noise dataset) and never requires aligned clean-noisy pairs. Training enforces that the two output signals sum to the original noisy input, which rules out trivial solutions and follows the reconstruction objectives used in neural audio codec designs.

Significance of Unsupervised Speech Enhancement

Traditional speech enhancement methods typically depend on paired datasets containing clean and noisy versions of the same audio, which are costly and often impractical to collect in real-world environments. Some unsupervised approaches, such as MetricGAN-U, eliminate the need for clean references, but they depend heavily on external evaluation metrics during training, which can limit generalization. USE-DDP avoids both requirements: its only supervision comes from data-defined priors, imposed by adversarial discriminators trained on separate, unpaired clean speech and noise datasets. A reconstruction consistency constraint then ensures that the separated outputs combine to recreate the original noisy input, so the speech estimate stays tied to the input rather than merely sounding clean.
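To illustrate why reconstruction consistency matters, here is a minimal sketch in plain Python. The sine tones, the Gaussian noise, and the helper names (`mean_abs_error`, `reconstruction_error`) are toy assumptions for illustration, not the authors' code or data. It shows that a clean-sounding output unrelated to the input, which might satisfy a clean-speech prior on its own, is penalized once the two streams must sum back to the noisy input.

```python
import math
import random


def mean_abs_error(a, b):
    """Mean absolute difference between two equal-length waveforms."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def reconstruction_error(noisy, speech_est, noise_est):
    """Error between the noisy input and the sum of the two estimated streams."""
    recon = [s + n for s, n in zip(speech_est, noise_est)]
    return mean_abs_error(noisy, recon)


random.seed(0)
num_samples = 1600  # 0.1 s at an assumed 16 kHz sample rate

# Toy stand-ins: a 220 Hz tone plays the role of speech, Gaussian noise the noise.
speech = [math.sin(2 * math.pi * 220 * t / 16000) for t in range(num_samples)]
noise = [random.gauss(0.0, 0.1) for _ in range(num_samples)]
noisy = [s + n for s, n in zip(speech, noise)]

# A faithful decomposition reconstructs the input.
good = reconstruction_error(noisy, speech, noise)

# A clean-sounding but unrelated "speech" output (a different tone) paired with
# silent noise does not reconstruct the input, so it is penalized.
unrelated = [math.sin(2 * math.pi * 330 * t / 16000) for t in range(num_samples)]
bad = reconstruction_error(noisy, unrelated, [0.0] * num_samples)

print(f"faithful split: {good:.4f}, unrelated speech: {bad:.4f}")
```

In this toy setting the faithful split has zero error while the unrelated output does not, which is the sense in which the constraint blocks trivial solutions.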

Architecture and Methodology

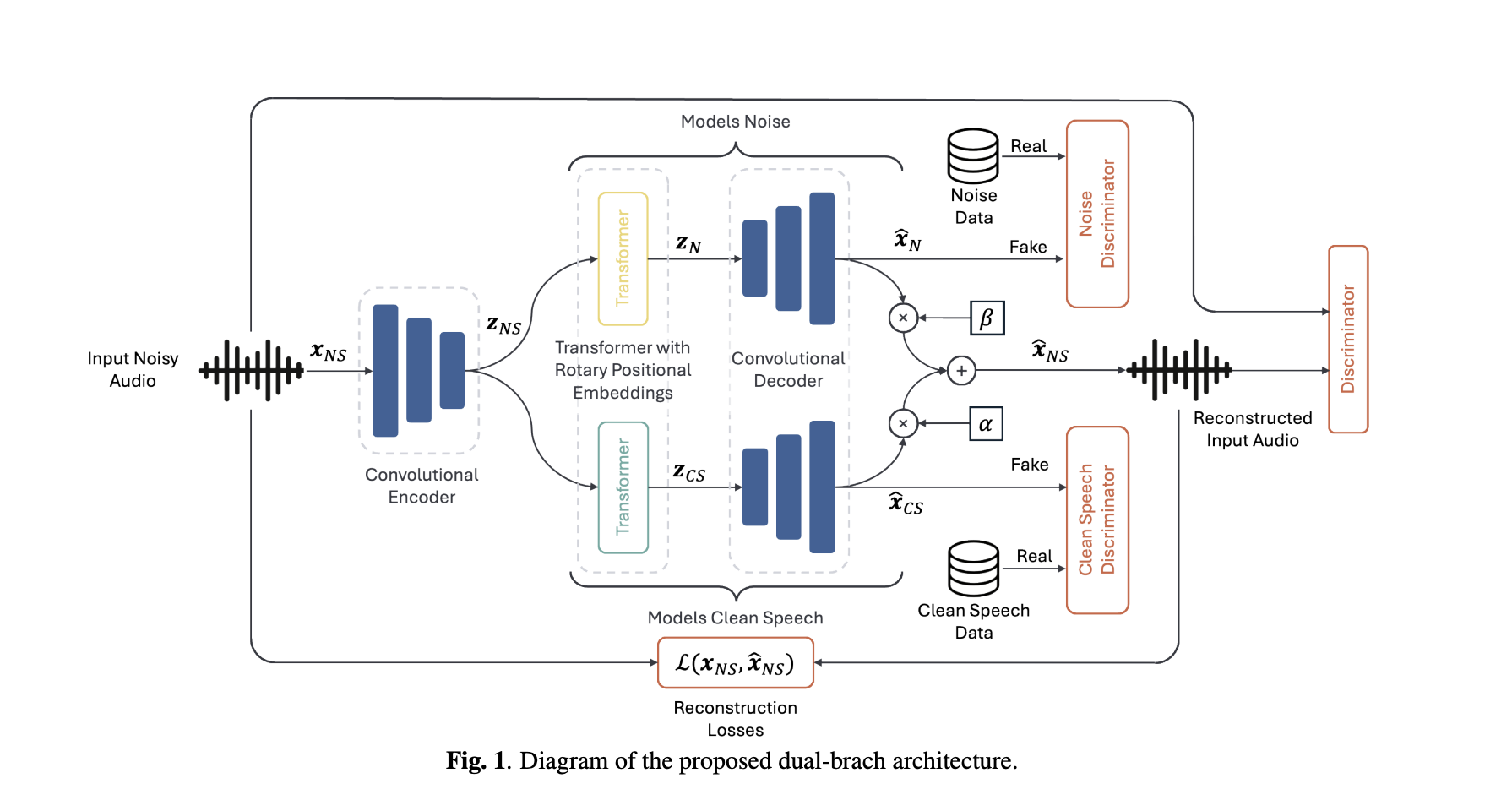

- Dual-Branch Generator: A codec-inspired encoder compresses the input audio into a latent representation. Two parallel transformer branches (based on the RoFormer architecture) then process this latent code, one extracting the clean speech component and the other the noise component. A shared decoder converts each latent stream back into a waveform. The noisy input is reconstructed as a weighted sum of the two output signals, with learnable scalar coefficients compensating for amplitude discrepancies. The reconstruction is trained with multi-scale mel-spectrogram, short-time Fourier transform (STFT), and scale-invariant signal-to-distortion ratio (SI-SDR) losses, mirroring neural audio codec training.

- Adversarial Priors: Three sets of discriminators, one each for clean speech, noise, and the noisy mixture, enforce that every output matches the statistical properties of its own domain. The clean speech branch is pushed to resemble the clean corpus, the noise branch to resemble the noise dataset, and the reconstructed mixture to sound natural. Least Squares GAN (LS-GAN) and feature matching losses are used to stabilize adversarial training.

- Pretrained Initialization: Initializing the encoder and decoder with weights from a pretrained Descript Audio Codec significantly accelerates convergence and improves the quality of the enhanced speech compared to training from scratch.
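Some of the loss terms named in the bullets above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the toy waveforms, and the choice of scalar values are hypothetical, the LS-GAN terms follow the common textbook form (some implementations add a factor of 1/2), and the multi-scale mel/STFT and feature matching losses are omitted for brevity.

```python
import math


def weighted_sum(speech_est, noise_est, alpha, beta):
    """Reconstruct the mixture as alpha * speech + beta * noise."""
    return [alpha * s + beta * n for s, n in zip(speech_est, noise_est)]


def si_sdr(estimate, reference, eps=1e-8):
    """Scale-invariant SDR in dB; higher is better, so -si_sdr can act as a loss."""
    dot = sum(e * r for e, r in zip(estimate, reference))
    ref_energy = sum(r * r for r in reference) + eps
    scale = dot / ref_energy
    target = [scale * r for r in reference]  # projection of estimate onto reference
    residual = [e - t for e, t in zip(estimate, target)]
    target_energy = sum(t * t for t in target)
    residual_energy = sum(x * x for x in residual) + eps
    return 10.0 * math.log10(target_energy / residual_energy + eps)


def lsgan_discriminator_loss(real_scores, fake_scores):
    """LS-GAN: push scores on real data toward 1 and on generated data toward 0."""
    real_term = sum((d - 1.0) ** 2 for d in real_scores) / len(real_scores)
    fake_term = sum(d ** 2 for d in fake_scores) / len(fake_scores)
    return real_term + fake_term


def lsgan_generator_loss(fake_scores):
    """LS-GAN: the generator wants its outputs scored as real (toward 1)."""
    return sum((d - 1.0) ** 2 for d in fake_scores) / len(fake_scores)


# Toy waveforms (hypothetical values) standing in for the two decoded streams.
speech_est = [0.5, -0.25, 0.75, -0.5]
noise_est = [0.1, 0.05, -0.1, 0.0]
noisy = [0.62, -0.18, 0.6, -0.55]

recon = weighted_sum(speech_est, noise_est, alpha=1.0, beta=1.0)
print(f"SI-SDR of reconstruction: {si_sdr(recon, noisy):.2f} dB")

# Rescaling the estimate leaves SI-SDR essentially unchanged.
print(f"SI-SDR after halving gain: {si_sdr([0.5 * x for x in recon], noisy):.2f} dB")
```

Because SI-SDR ignores overall gain, it cannot by itself correct amplitude errors, which is one plausible reading of why the scalar coefficients are useful in the reconstruction. In a real system the discriminator scores would come from neural networks applied to each output stream rather than from hand-written lists.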

Performance Evaluation and Benchmarking

Evaluated on the widely used VCTK+DEMAND simulated dataset, USE-DDP achieves performance on par with leading unsupervised methods such as unSE and unSE+ (which utilize optimal transport techniques). It also delivers competitive DNSMOS scores compared to MetricGAN-U, despite not directly optimizing for this metric. For instance, the DNSMOS score improves from a baseline of 2.54 (noisy input) to approximately 3.03 with USE-DDP, while PESQ scores increase from 1.97 to around 2.47. The model’s noise attenuation is more aggressive in non-speech segments, which slightly lowers the CBAK metric but aligns with the explicit noise prior enforcement.

Impact of Dataset Selection on Results

A pivotal insight from this research is that the choice of clean speech corpus used to define the prior profoundly influences enhancement outcomes and can lead to overly optimistic results in simulated environments:

- In-domain Clean Prior (e.g., VCTK clean on VCTK+DEMAND): Yields the highest scores (DNSMOS ~3.03) but benefits from an unrealistic overlap with the test data distribution, effectively “peeking” at the target domain.

- Out-of-domain Clean Prior: Results in noticeably lower performance metrics (e.g., PESQ around 2.04), reflecting domain mismatch and some noise leakage into the speech estimate.

- Real-world Dataset (CHiME-3): Using a “close-talk” channel as an in-domain clean prior can degrade performance because the reference contains environmental noise bleed-through. Conversely, employing a truly clean, out-of-domain corpus improves DNSMOS and UTMOS scores on both development and test sets, though it may slightly reduce intelligibility under stronger noise suppression.

This finding underscores the necessity for transparent and careful selection of priors when reporting state-of-the-art results on simulated benchmarks.

Expert Analysis

The USE-DDP framework formulates speech enhancement as a two-source separation problem guided by data-defined priors rather than by metric-based optimization. The reconstruction constraint, which requires that the speech and noise estimates together recreate the original input, combined with adversarial priors learned from independent datasets, provides a strong inductive bias. Initializing from pretrained neural audio codec weights further speeds up training and improves output quality. The approach is competitive with existing unsupervised methods, but the choice of clean speech prior significantly affects the reported metrics, so future work should state clearly which datasets define its priors.