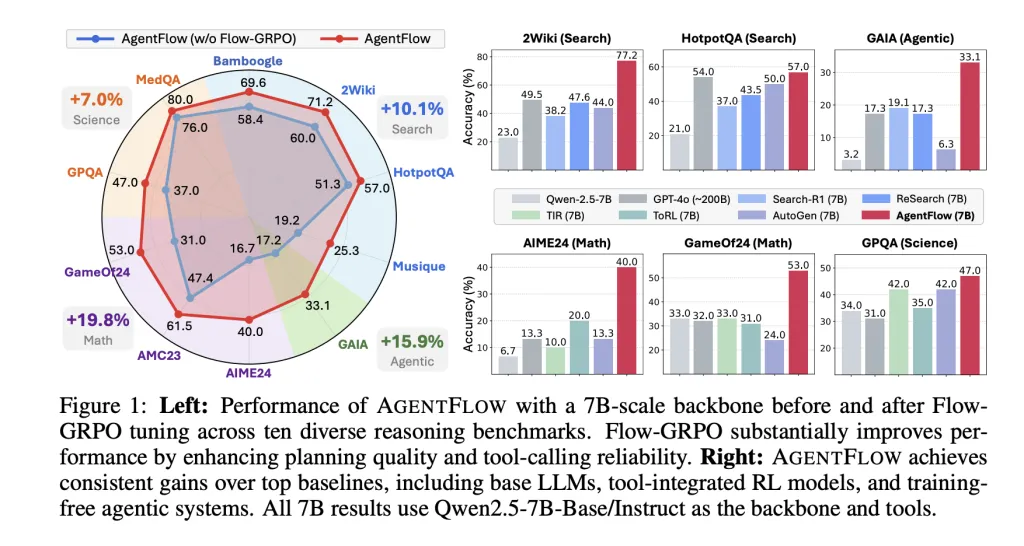

Summary: AgentFlow is a trainable, modular agent framework built from four components (Planner, Executor, Verifier, and Generator) that are coordinated through a shared memory and an integrated toolset. Only the Planner is optimized, inside the multi-turn loop, using an on-policy reinforcement learning algorithm called Flow-GRPO. The method broadcasts a trajectory-level success reward to every decision step and applies token-level PPO-style clipped updates with KL-divergence regularization and group-normalized advantages. Across ten benchmarks, a 7B-parameter backbone tuned with Flow-GRPO reports average gains over strong baselines of +14.9% on search, +14.0% on agentic reasoning, +14.5% on mathematics, and +4.1% on science.

Introducing AgentFlow: A Modular Framework for Tool-Enhanced Reasoning

AgentFlow formalizes multi-turn, tool-integrated reasoning as a Markov Decision Process (MDP). At each turn, the Planner proposes a sub-goal and selects a tool along with the context to pass to it; the Executor calls the chosen tool; the Verifier decides whether the process should continue; and, once it stops, the Generator produces the final answer. A structured, evolving memory records states, tool calls, and verification signals, which bounds context growth and makes each trajectory auditable. Only the Planner is trained; the other modules can remain fixed, off-the-shelf engines.
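The control flow described above can be sketched as a short loop. This is an illustrative sketch only, not the released implementation: the `Memory` class, the `run_agent` function, and all `toy_*` callables are hypothetical names introduced here, and the real modules are LLM-backed rather than simple functions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Memory:
    """Hypothetical structured log: one record per turn."""
    records: List[dict] = field(default_factory=list)

    def add(self, **record) -> None:
        self.records.append(record)


def run_agent(
    query: str,
    planner: Callable[[str, Memory], Tuple[str, str, str]],
    tools: Dict[str, Callable[[str], str]],
    verifier: Callable[[str, Memory], bool],
    generator: Callable[[str, Memory], str],
    max_turns: int = 5,
) -> Tuple[str, Memory]:
    memory = Memory()
    for turn in range(max_turns):
        # Planner: choose a sub-goal, a tool, and the input to give that tool.
        sub_goal, tool_name, tool_input = planner(query, memory)
        # Executor: call the selected tool.
        result = tools[tool_name](tool_input)
        # Memory: log the turn so later steps (and audits) can see it.
        memory.add(turn=turn, sub_goal=sub_goal, tool=tool_name,
                   tool_input=tool_input, result=result)
        # Verifier: stop once the logged evidence is judged sufficient.
        if verifier(query, memory):
            break
    # Generator: produce the final answer from the query and the memory.
    return generator(query, memory), memory


# Toy stand-ins (assumptions for illustration; not AgentFlow tools).
def toy_planner(query: str, memory: Memory) -> Tuple[str, str, str]:
    return ("evaluate the expression", "calculator", query)


def toy_calculator(expression: str) -> str:
    left, right = expression.split("+")
    return str(int(left) + int(right))


def toy_verifier(query: str, memory: Memory) -> bool:
    return len(memory.records) >= 1


def toy_generator(query: str, memory: Memory) -> str:
    return memory.records[-1]["result"]


answer, memory = run_agent(
    "2+3", toy_planner, {"calculator": toy_calculator},
    toy_verifier, toy_generator,
)
print(answer)  # 5
```

Keeping the four roles as separate callables mirrors the point that only the Planner needs to be trainable: the other three can be swapped for fixed engines without touching the loop.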

The public implementation ships a modular toolkit (for example base_generator, python_coder, google_search, wikipedia_search, and web_search) together with quick-start scripts for inference, training, and benchmarking. The repository is MIT-licensed.

Flow-GRPO: A Novel Training Algorithm for Long-Horizon Decision Making

Flow-based Group Refined Policy Optimization (Flow-GRPO) addresses the challenge of optimizing policies over extended sequences with sparse rewards by transforming the problem into manageable single-turn updates:

- Broadcasting final outcome rewards: A single, verifiable reward for the whole trajectory (correctness as scored by an LLM acting as a judge) is assigned to every individual step, which aligns local planning decisions with overall success.

- Token-level clipped objectives: Importance sampling ratios are calculated at the token level, incorporating PPO-style clipping and a KL divergence penalty relative to a reference policy to prevent excessive deviation.

- Group-normalized advantage estimation: Advantages are normalized across a group of on-policy rollouts, which reduces variance and stabilizes the training updates.
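The three ingredients above can be illustrated with a small numerical sketch. It is written under stated assumptions and is not the authors' training code: the function names, the `clip_eps` and `kl_coef` values, and the simple log-ratio KL estimate are all choices made here for illustration, and plain Python floats stand in for model log-probabilities.

```python
import math
from statistics import mean, pstdev
from typing import List


def group_normalized_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize one trajectory-level reward per rollout across the group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def broadcast_to_turns(advantages: List[float], turns_per_rollout: List[int]) -> List[List[float]]:
    """Give every turn of a rollout that rollout's single advantage."""
    return [[a] * t for a, t in zip(advantages, turns_per_rollout)]


def clipped_token_loss(
    logp_new: List[float],
    logp_old: List[float],
    logp_ref: List[float],
    advantage: float,
    clip_eps: float = 0.2,   # assumed value, not taken from the source
    kl_coef: float = 0.01,   # assumed value, not taken from the source
) -> float:
    """Token-level PPO-style clipped loss with a KL penalty, for one turn."""
    total = 0.0
    for new, old, ref in zip(logp_new, logp_old, logp_ref):
        ratio = math.exp(new - old)  # per-token importance ratio
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        surrogate = min(ratio * advantage, clipped * advantage)
        kl = new - ref  # simple per-token log-ratio estimate (an assumption)
        total += -(surrogate - kl_coef * kl)
    return total / len(logp_new)


# Four rollouts for one query; the judge marks two of them correct.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_normalized_advantages(rewards)
per_turn = broadcast_to_turns(advantages, turns_per_rollout=[2, 3, 1, 2])

# Loss for the first turn of the first rollout, with identical policies.
loss = clipped_token_loss(
    logp_new=[-1.0, -2.0],
    logp_old=[-1.0, -2.0],
    logp_ref=[-1.0, -2.0],
    advantage=per_turn[0][0],
)
```

Because every turn in a rollout receives the same advantage, each turn can be optimized as an independent single-turn update, which is the simplification the section describes.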

Performance Evaluation Across Diverse Benchmarks

Benchmark categories: The evaluation spans four task domains: knowledge-intensive search (Bamboogle, 2Wiki, HotpotQA, MuSiQue), agentic reasoning (GAIA textual split), mathematical problem solving (AIME-24, AMC-23, Game of 24), and scientific question answering (GPQA, MedQA). GAIA is a benchmark for general assistant capabilities with an emphasis on tool use; its textual split excludes multimodal inputs.

Key results with a 7B parameter backbone fine-tuned via Flow-GRPO: The model shows average gains of +14.9% on search tasks, +14.0% on agentic reasoning, +14.5% on math, and +4.1% on science compared with strong baselines. The 7B system is also reported to outperform GPT-4o on the same benchmark suite. Further reported benefits include better planning quality, a reduction in tool-calling errors of up to 28.4% on GAIA, and positive scaling trends with larger turn budgets and model sizes.

Ablation studies: Online training with Flow-GRPO yields a +17.2% improvement over a static planner baseline, whereas offline supervised fine-tuning of the planner results in a notable performance drop of −19.0% on the composite metric.

Essential Insights and Implications

- Modular architecture with focused training: AgentFlow divides the agent into four distinct modules (Planner, Executor, Verifier, and Generator) supported by an explicit memory, and trains only the Planner within the reinforcement learning loop.

- Innovative RL approach for complex tasks: Flow-GRPO effectively transforms the challenge of long-horizon reinforcement learning into token-level, single-turn policy updates by broadcasting trajectory-level rewards and applying PPO-style clipping combined with KL regularization and group-normalized advantages.

- Substantial benchmark improvements: The 7B parameter AgentFlow model reports average gains across ten benchmarks and is reported to surpass GPT-4o in the search, agentic reasoning, math, and science domains.

- Enhanced reliability in tool usage: The framework reduces errors in tool calls and improves planning quality, especially as the number of interaction turns and model scale increase.

Final Thoughts

AgentFlow structures a tool-using agent into four specialized modules and trains only the Planner, using the Flow-GRPO algorithm. Broadcasting trajectory-level feedback to individual steps, combined with token-level policy optimization, yields the reported gains across ten benchmarks. The open-source release, with its toolkit and scripts for inference, training, and benchmarking, gives researchers a starting point for further work.