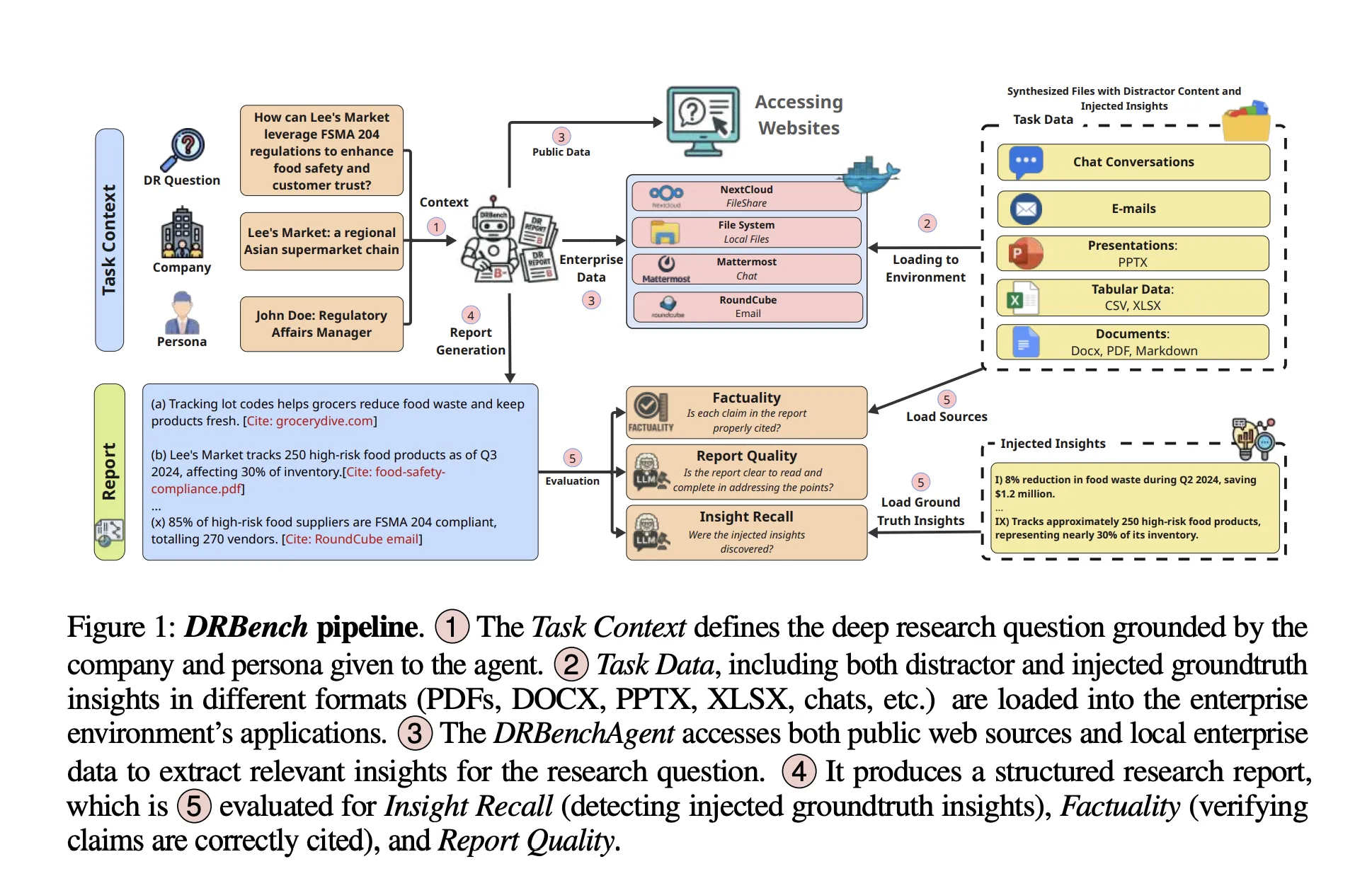

ServiceNow Research has introduced DRBench, a benchmark and executable environment for evaluating “deep research” AI agents on complex, open-ended enterprise tasks. These tasks require combining information from the public web and confidential company data to produce well-cited, comprehensive reports. Unlike web-only benchmarks, DRBench simulates enterprise workflows that span files, emails, chat conversations, and cloud storage, so agents must locate, filter, and attribute relevant insights across several applications before writing a coherent research report.

Comprehensive Scope of DRBench

The initial version of DRBench contains 15 research tasks across 10 enterprise domains, including Sales, Cybersecurity, and Regulatory Compliance. Each task defines a research question, places it in the context of a specific company and user persona, and provides a set of verified insights in three categories: public insights (sourced from stable, time-anchored URLs), relevant internal insights, and internal distractor insights. The insights are embedded in realistic enterprise documents and applications, so agents must surface the pertinent ones and ignore the distractors. The dataset was built with a hybrid process that combines large language model (LLM) generation with human validation, yielding 114 verified insights across all tasks.
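To make the task structure above concrete, here is a minimal sketch of how one task and its three insight categories could be modeled. The class names, field names, and the example values are illustrative assumptions, not DRBench's actual schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class InsightKind(Enum):
    PUBLIC = "public"
    INTERNAL_RELEVANT = "internal_relevant"
    INTERNAL_DISTRACTOR = "internal_distractor"


@dataclass(frozen=True)
class Insight:
    text: str
    kind: InsightKind
    source: str  # URL for public insights, file or app location for internal ones


@dataclass
class ResearchTask:
    # Hypothetical fields mirroring the description: question + company/persona context.
    question: str
    company: str
    persona: str
    domain: str
    insights: list[Insight] = field(default_factory=list)

    def ground_truth(self) -> list[Insight]:
        """Insights a good report should contain (public and relevant internal)."""
        return [i for i in self.insights if i.kind is not InsightKind.INTERNAL_DISTRACTOR]

    def distractors(self) -> list[Insight]:
        """Injected insights a good report should leave out."""
        return [i for i in self.insights if i.kind is InsightKind.INTERNAL_DISTRACTOR]


# Made-up example data, for illustration only.
example_task = ResearchTask(
    question="How should we respond to the new data-retention rule?",
    company="ExampleCo",
    persona="Compliance analyst",
    domain="Regulatory Compliance",
    insights=[
        Insight("The rule takes effect next year.", InsightKind.PUBLIC, "https://example.com/rule"),
        Insight("Legal flagged three affected systems.", InsightKind.INTERNAL_RELEVANT, "mail/thread-12"),
        Insight("The cafeteria menu changes in May.", InsightKind.INTERNAL_DISTRACTOR, "chat/general"),
    ],
)
```

Keeping distractors in the same list as the ground truth, separated only by `kind`, mirrors the idea that they live side by side in the same applications.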

Simulated Enterprise Ecosystem

A core feature of DRBench is its containerized enterprise environment, which places commonly used corporate tools behind authentication layers and application-specific APIs. The DRBench Docker container orchestrates several services: Nextcloud for shared documents (WebDAV), Mattermost for team messaging (REST API), Roundcube for email (SMTP/IMAP), FileBrowser for local file system navigation, and a VNC/NoVNC desktop for graphical interaction. Each task is initialized by distributing its data across these services: documents are uploaded to Nextcloud and FileBrowser, chat logs populate Mattermost channels, emails are threaded in the mail system, and user credentials are provisioned consistently. Agents can work through either the web interfaces or the programmatic APIs these services expose. The environment is deliberately a “needle-in-a-haystack” challenge: relevant and distractor insights are embedded in realistic file formats (PDF, DOCX, PPTX, XLSX), chats, and emails, all padded with plausible but irrelevant content that tests the agent’s discernment.
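As a rough illustration of the programmatic route, the sketch below builds request URLs and an auth header for two of the services. The paths are the standard Nextcloud WebDAV and Mattermost v4 REST paths; the host, port, user name, and whether a DRBench container exposes the services at these exact addresses are assumptions. No network call is made.

```python
import base64
from urllib.parse import quote


def nextcloud_webdav_url(base_url: str, user: str, path: str) -> str:
    """Standard Nextcloud WebDAV location for a file in a user's space."""
    return f"{base_url.rstrip('/')}/remote.php/dav/files/{quote(user)}/{quote(path.lstrip('/'))}"


def mattermost_posts_url(base_url: str, channel_id: str) -> str:
    """Mattermost v4 REST endpoint that lists the posts in a channel."""
    return f"{base_url.rstrip('/')}/api/v4/channels/{quote(channel_id)}/posts"


def basic_auth_header(user: str, password: str) -> dict[str, str]:
    """HTTP Basic auth header, which Nextcloud accepts for WebDAV requests."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return {"Authorization": f"Basic {token}"}


# Hypothetical host, port, and user, chosen only for the example.
doc_url = nextcloud_webdav_url("http://localhost:8080/", "analyst", "/reports/Q3 plan.docx")
chat_url = mattermost_posts_url("http://localhost:8065", "abc123")
headers = basic_auth_header("analyst", "secret")
```

An agent could pass `doc_url` and `headers` to any HTTP client to fetch the document, then do the same for chat history with a Mattermost access token in place of Basic auth.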

Evaluation Metrics: Measuring Agent Performance

DRBench evaluates agents along four dimensions that follow typical analyst workflows: Insight Recall, Distractor Avoidance, Factual Accuracy, and Report Quality. Insight Recall breaks the agent’s report into discrete, cited insights and matches them against the ground-truth insights with an LLM-based evaluator; the score reflects recall rather than precision. Distractor Avoidance penalizes reports that include the injected distractor insights. Factual Accuracy and Report Quality assess the correctness, clarity, and structure of the final report against a detailed rubric.
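The first two metrics can be sketched as simple ratios. The formulas below are an assumption (the description above only says that insights are matched by an LLM evaluator and that distractors are penalized), and `keyword_matcher` is a hypothetical stand-in for that LLM judge.

```python
from typing import Callable

# A matcher decides whether a report insight expresses a reference insight.
Matcher = Callable[[str, str], bool]


def keyword_matcher(report_insight: str, reference: str) -> bool:
    """Toy stand-in for an LLM judge: case-insensitive substring match."""
    return reference.lower() in report_insight.lower()


def insight_recall(report: list[str], ground_truth: list[str], matches: Matcher) -> float:
    """Fraction of ground-truth insights found somewhere in the report."""
    if not ground_truth:
        return 0.0
    found = sum(any(matches(r, g) for r in report) for g in ground_truth)
    return found / len(ground_truth)


def distractor_avoidance(report: list[str], distractors: list[str], matches: Matcher) -> float:
    """1.0 when no distractor appears in the report, lower as more leak in."""
    if not distractors:
        return 1.0
    leaked = sum(any(matches(r, d) for r in report) for d in distractors)
    return 1.0 - leaked / len(distractors)


# Made-up example data.
report = ["Churn rose 4% in Q2", "Office plants were replaced last week"]
truth = ["churn rose 4%", "new privacy rule takes effect"]
noise = ["office plants", "cafeteria menu"]

recall = insight_recall(report, truth, keyword_matcher)          # 0.5
avoidance = distractor_avoidance(report, noise, keyword_matcher)  # 0.5
```

Because recall only counts ground-truth insights that were found, extra correct statements in the report neither help nor hurt this score, which is what "recall rather than precision" implies.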

Introducing the DRBench Agent: A Baseline for Deep Research

To demonstrate the benchmark, the research team built a baseline agent, the DRBench Agent (DRBA), designed to run natively inside the DRBench environment. DRBA has four components: research planning, action planning, an iterative research loop with Adaptive Action Planning (AAP), and report writing. Planning supports two modes: Complex Research Planning (CRP), which specifies investigation areas, expected data sources, and success criteria; and Simple Research Planning (SRP), which produces concise sub-queries. The research loop selects tools, processes retrieved content (including storing it in a vector database), identifies knowledge gaps, and iterates until the task is complete or a maximum iteration limit is reached. The report writer then synthesizes the findings and tracks citations so that each claim can be traced to its source.
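The control flow of such a research loop can be sketched in a few lines. This is not DRBA's implementation: the function and parameter names are hypothetical, the tools are toy callables, and the vector store is omitted. It only shows the "select tool, retrieve, find gaps, repeat until done or capped" pattern described above.

```python
from dataclasses import dataclass, field
from typing import Callable

Tool = Callable[[str], list[str]]  # takes a query, returns retrieved snippets


@dataclass
class ResearchState:
    open_queries: list[str]
    findings: list[str] = field(default_factory=list)
    iterations: int = 0


def run_research_loop(
    sub_queries: list[str],
    tools: dict[str, Tool],
    choose_tool: Callable[[str], str],
    find_gaps: Callable[[str, list[str]], list[str]],
    max_iterations: int = 5,
) -> ResearchState:
    """Iterate until no open queries remain or the iteration cap is hit."""
    state = ResearchState(open_queries=list(sub_queries))
    while state.open_queries and state.iterations < max_iterations:
        query = state.open_queries.pop(0)
        tool = tools[choose_tool(query)]                           # action selection
        state.findings.extend(tool(query))                         # retrieve and record
        state.open_queries.extend(find_gaps(query, state.findings))  # new follow-up queries
        state.iterations += 1
    return state


# Toy stand-ins for real tools and LLM-driven decisions.
toy_tools: dict[str, Tool] = {
    "web": lambda q: [f"web:{q}"],
    "files": lambda q: [f"files:{q}"],
}


def toy_choose(query: str) -> str:
    return "files" if "internal" in query else "web"


def toy_gaps(query: str, findings: list[str]) -> list[str]:
    return ["internal follow-up"] if query == "market size" else []


final = run_research_loop(["market size"], toy_tools, toy_choose, toy_gaps)
capped = run_research_loop(["market size"], toy_tools, toy_choose, toy_gaps, max_iterations=1)
```

In the toy run, the first query triggers one follow-up, so `final` finishes after two iterations, while `capped` stops after one with the follow-up still open.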

Significance for Enterprise AI Agents

While many “deep research” AI agents perform well on public, web-based question sets, real-world enterprise deployment requires them to reliably find the right internal information, ignore plausible but irrelevant distractors, and cite both public and private sources accurately, all within the constraints of enterprise security, authentication, and user interface complexity. DRBench addresses this gap by: (1) grounding tasks in realistic company and persona contexts; (2) distributing evidence across multiple enterprise applications as well as the open web; and (3) scoring whether agents actually extract the intended insights and produce clear, factual reports. This makes DRBench a practical tool for developers who need end-to-end evaluation rather than isolated component tests.

Summary of Key Features

- DRBench challenges deep research agents with complex, open-ended enterprise-level tasks requiring integration of public web and private organizational data.

- The benchmark includes 15 diverse tasks across 10 industry domains, each grounded in realistic user personas and corporate settings.

- It incorporates a variety of enterprise data types (productivity documents, cloud storage, emails, and chat logs) alongside public web content, going beyond traditional web-only benchmarks.

- Agent outputs are evaluated on insight recall, distractor avoidance, factual accuracy, and report quality (coherence and structure), with the latter two scored against a rubric.

- All code and benchmark resources are openly available on GitHub, facilitating reproducibility and community-driven enhancements.

Final Thoughts

From the perspective of enterprise AI evaluation, DRBench is a step toward standardized, end-to-end testing of “deep research” agents. Its open-ended tasks, persona-driven contexts, and requirement to synthesize evidence from both the public web and private corporate knowledge bases mirror the workflows that production teams care about. By explicitly measuring recall of relevant insights, factual accuracy, and report quality, DRBench moves beyond web-only benchmarks, which can reward browsing heuristics rather than research ability. Although 15 tasks across 10 domains is a modest scale, it is enough to expose system limitations in heterogeneous data retrieval, disciplined citation, and iterative planning.