How can organizations run trillion-parameter language models on their existing heterogeneous GPU clusters without buying new hardware or locking into a single cloud vendor? Perplexity’s research team has open-sourced TransferEngine and the surrounding pplx garden toolkit as infrastructure for large language model (LLM) systems. The framework is designed to run models of up to one trillion parameters across mixed GPU clusters, without requiring GB200-class hardware or tying the deployment to one vendor.

Overcoming Network Fabric Limitations in Large-Scale LLM Deployments

State-of-the-art Mixture of Experts (MoE) models such as DeepSeek V3, with 671 billion parameters, and Kimi K2, with one trillion parameters, exceed the memory capacity of a single 8-GPU node. These models must therefore be distributed across multiple nodes, which shifts the primary bottleneck from raw compute (FLOPs) to the inter-GPU network fabric.
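The memory claim can be checked with a rough back-of-envelope calculation. The sketch below counts weights only (no KvCache or activations) and assumes 80 GB of memory per GPU, as on H100-class hardware; the function names and the bytes-per-parameter values are illustrative assumptions, not figures from the article.

```python
import math


def weight_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Weight-only footprint in GB (1 GB = 1e9 bytes); ignores KvCache and activations."""
    return num_params * bytes_per_param / 1e9


def nodes_needed(num_params: float, bytes_per_param: float,
                 gpus_per_node: int = 8, gpu_mem_gb: float = 80.0) -> int:
    """Minimum number of nodes whose combined GPU memory can hold the weights."""
    node_capacity_gb = gpus_per_node * gpu_mem_gb  # assumed 80 GB per GPU
    return math.ceil(weight_footprint_gb(num_params, bytes_per_param) / node_capacity_gb)


for name, params in [("DeepSeek V3", 671e9), ("Kimi K2", 1e12)]:
    for bytes_per_param in (1, 2):  # e.g. 8-bit or 16-bit weights (assumed)
        size = weight_footprint_gb(params, bytes_per_param)
        nodes = nodes_needed(params, bytes_per_param)
        print(f"{name}: {size:.0f} GB at {bytes_per_param} B/param -> at least {nodes} nodes")
```

Under these assumptions even 8-bit weights overflow one 8-GPU node, before any memory is reserved for the KvCache.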

The current hardware ecosystem is fragmented: NVIDIA’s ConnectX 7 typically employs Reliable Connection (RC) transport with in-order delivery, while AWS’s Elastic Fabric Adapter (EFA) uses Scalable Reliable Datagram (SRD) transport, which is reliable but delivers packets out of order. To reach 400 Gbps of bandwidth, a single GPU may need multiple network adapters (four at 100 Gbps or two at 200 Gbps), depending on the platform.

Existing communication libraries such as DeepEP, NVSHMEM, Mooncake, and NIXL often optimize for a single vendor’s hardware, resulting in degraded or absent support on other platforms. Before TransferEngine, no practical cross-provider solution existed for LLM inference workloads.

Introducing TransferEngine: A Cross-Platform RDMA Abstraction for LLMs

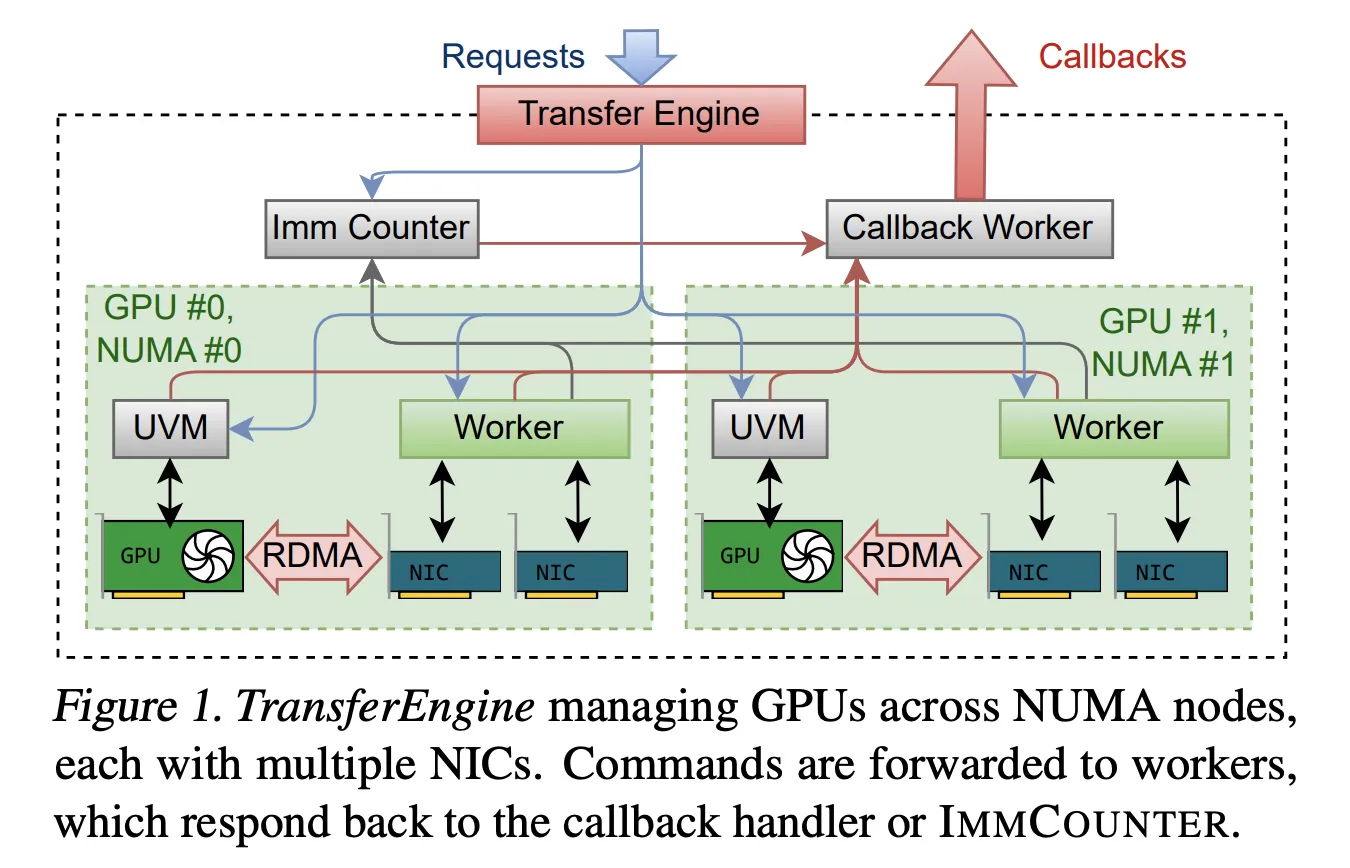

TransferEngine tackles these challenges by building only on the guarantees that the different Network Interface Controllers (NICs) have in common. It assumes a reliable RDMA transport but does not rely on message ordering. The system exposes one-sided Write with Immediate (WriteImm) operations and an ImmCounter primitive that signals completion, so a receiver does not need to infer completion from the order in which writes arrive.
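One way to picture order-independent completion is a counter keyed by the immediate value: the receiver is notified once the expected number of writes carrying that value has landed, regardless of arrival order. The sketch below is a plain-Python simulation under that assumption; `ImmCounterSketch` and its methods are hypothetical names for illustration and are not the TransferEngine API.

```python
import random
from collections import defaultdict


class ImmCounterSketch:
    """Hypothetical stand-in for an ImmCounter: counts completed writes per immediate."""

    def __init__(self):
        self._seen = defaultdict(int)  # imm value -> writes observed so far
        self._waiting = {}             # imm value -> (expected count, callback)

    def expect(self, imm, count, callback):
        """Run `callback` once `count` writes tagged with `imm` have landed."""
        self._waiting[imm] = (count, callback)
        self._check(imm)

    def on_write_imm(self, imm):
        """Called once per completed write, in whatever order completions arrive."""
        self._seen[imm] += 1
        self._check(imm)

    def _check(self, imm):
        if imm in self._waiting:
            count, callback = self._waiting[imm]
            if self._seen[imm] >= count:
                del self._waiting[imm]  # fire at most once per registration
                callback(imm)


completed = []
counter = ImmCounterSketch()
counter.expect(imm=7, count=8, callback=completed.append)

arrivals = [7] * 8 + [9] * 3  # immediates tagged 9 belong to an unrelated transfer
random.shuffle(arrivals)      # simulate out-of-order delivery
for imm in arrivals:
    counter.on_write_imm(imm)

print(completed)
```

Because the trigger depends only on the count, the same logic works on an in-order transport such as RC and an out-of-order one such as SRD.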

Implemented in Rust, TransferEngine offers a streamlined API featuring two-sided Send and Receive for control messages, alongside three primary one-sided operations: submit_single_write, submit_paged_writes, and submit_scatter. Additionally, a submit_barrier primitive facilitates synchronization among peer groups. Peers are identified via a NetAddr structure, and memory regions are described with MrDesc. Advanced pipelines benefit from alloc_uvm_watcher, which creates device-side watchers to synchronize CPU and GPU operations.

Under the hood, TransferEngine launches a dedicated worker thread per GPU and constructs a DomainGroup per GPU to coordinate between one and four RDMA NICs. For example, a single ConnectX 7 NIC delivers 400 Gbps, while on AWS EFA the DomainGroup aggregates multiple adapters (four at 100 Gbps or two at 200 Gbps) to match this bandwidth. Transfers are sharded across all available NICs in the group.
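The article does not spell out the sharding policy, so the following is only a minimal sketch of one plausible approach: split a transfer into contiguous byte ranges proportional to each NIC's bandwidth. The function name `shard_transfer` and the proportional rule are assumptions made for illustration.

```python
def shard_transfer(total_bytes: int, nic_gbps: list[int]) -> list[tuple[int, int]]:
    """Split [0, total_bytes) into one contiguous (offset, length) range per NIC.

    Ranges are proportional to NIC bandwidth; the last NIC absorbs the rounding
    remainder so the ranges always cover the whole transfer.
    """
    total_bw = sum(nic_gbps)
    shards = []
    offset = 0
    for i, bw in enumerate(nic_gbps):
        if i == len(nic_gbps) - 1:
            length = total_bytes - offset
        else:
            length = total_bytes * bw // total_bw
        shards.append((offset, length))
        offset += length
    return shards


# Four 100 Gbps adapters and two 200 Gbps adapters both add up to 400 Gbps.
print(shard_transfer(4000, [100, 100, 100, 100]))
print(shard_transfer(1001, [200, 200]))
```

With a single 400 Gbps NIC the function returns one range covering the whole transfer, which matches the ConnectX 7 case described above.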

Performance benchmarks demonstrate that TransferEngine achieves peak throughput of 400 Gbps on both NVIDIA ConnectX 7 and AWS EFA, matching the performance of vendor-specific solutions without sacrificing portability.

pplx garden: The Open-Source Ecosystem for Scalable LLM Networking

TransferEngine is distributed as part of the pplx garden repository on GitHub under the permissive MIT license. The repository is organized for clarity: fabric-lib contains the core RDMA TransferEngine library; p2p-all-to-all implements a Mixture of Experts all-to-all communication kernel; python-ext provides Python bindings for the Rust core; and python/pplx_garden houses the Python package code.

The toolkit requires a modern GPU cluster environment, with recommendations including Linux kernel 5.12 or later (for DMA-BUF support), CUDA 12.8+, libfabric, libibverbs, GDRCopy, and an RDMA fabric with GPUDirect RDMA enabled. Each GPU should be equipped with at least one dedicated RDMA NIC to maximize performance.

Disaggregated Prefill and Decode: Streaming KvCache Efficiently

The first major application of TransferEngine is in disaggregated inference workflows, where prefill and decode stages operate on separate GPU clusters. This setup demands high-speed streaming of the KvCache from prefill GPUs to decode GPUs.

Using the alloc_uvm_watcher primitive, TransferEngine monitors model progress during prefill. After each layer’s attention output projection, the model increments a watcher value. Upon detecting this change, the system issues paged writes to transfer the corresponding KvCache pages, followed by a single write for the remaining context. This layer-wise streaming approach avoids rigid collective communication patterns and supports dynamic cluster membership.
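The control flow of this layer-wise streaming can be sketched as a host-side loop that reacts to watcher changes. Everything below is a simulation: `FakeEngine` only records calls, its method names echo the operations named earlier but their signatures are invented here, and issuing the final single write once after the last layer is an assumption about the ordering.

```python
class FakeEngine:
    """Records transfer requests instead of performing RDMA (illustration only)."""

    def __init__(self):
        self.log = []

    def submit_paged_writes(self, layer, pages):
        self.log.append(("paged", layer, tuple(pages)))

    def submit_single_write(self, what):
        self.log.append(("single", what))


def stream_kv_cache(watcher_values, pages_per_layer, num_layers, engine):
    """Issue paged writes for each layer as soon as the watcher shows it finished.

    watcher_values: successive observations of the counter the model increments
    after each layer's attention output projection.
    """
    sent = 0
    for value in watcher_values:
        while sent < value:  # the counter may advance by more than one between polls
            engine.submit_paged_writes(sent, pages_per_layer[sent])
            sent += 1
        if sent == num_layers:
            break
    if sent == num_layers:
        engine.submit_single_write("remaining_context")
    return sent


engine = FakeEngine()
layers_sent = stream_kv_cache(
    watcher_values=[0, 1, 1, 3],
    pages_per_layer={0: [0, 1], 1: [2, 3], 2: [4]},
    num_layers=3,
    engine=engine,
)
for entry in engine.log:
    print(entry)
```

The inner `while` loop matters: a polling host can miss intermediate values, so it has to catch up on every layer completed since the last observation.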

Accelerated Weight Transfer for Asynchronous Reinforcement Learning

The second key use case involves asynchronous reinforcement learning fine-tuning, where training and inference are conducted on separate GPU pools. Traditional methods funnel updated parameters through a single rank before broadcasting, limiting throughput to one NIC.

TransferEngine enables direct point-to-point weight transfers, allowing each training GPU to write its parameter shard directly into the corresponding inference GPUs via one-sided writes. The process is pipelined, dividing tensors into stages: host-to-device copy (for Fully Sharded Data Parallel weight offloading), reconstruction and optional quantization, RDMA transfer, and a synchronization barrier implemented with scatter and ImmCounter.
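To see why pipelining these stages helps, consider an idealized schedule in which every stage takes one time step and each stage can work on a different tensor at the same time. The stage labels below paraphrase the list above; the unit-time assumption and the function name `pipeline_schedule` are illustrative and not taken from the source.

```python
STAGES = ["h2d_copy", "reconstruct_quantize", "rdma_write", "barrier"]


def pipeline_schedule(num_tensors, stages=STAGES):
    """Map each time step to the (tensor, stage) pairs active in that step.

    Assumes every stage takes exactly one step, so tensor t enters stage s
    at step t + s.
    """
    schedule = {}
    for tensor in range(num_tensors):
        for s, stage in enumerate(stages):
            schedule.setdefault(tensor + s, []).append((tensor, stage))
    return schedule


schedule = pipeline_schedule(5)
for step in sorted(schedule):
    print(step, schedule[step])

pipelined_steps = len(schedule)    # num_tensors + len(STAGES) - 1
sequential_steps = 5 * len(STAGES) # one tensor at a time, no overlap
print(pipelined_steps, sequential_steps)
```

In this idealized model the pipelined run needs `num_tensors + len(STAGES) - 1` steps instead of `num_tensors * len(STAGES)`; real stage times differ, so the slowest stage sets the actual throughput.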

In production, this architecture updates models such as Kimi K2 (one trillion parameters) and DeepSeek V3 (671 billion parameters) in approximately 1.3 seconds, transferring weights from 256 training GPUs to 128 inference GPUs.

Efficient Mixture of Experts Routing Across Heterogeneous Fabrics

The third component of pplx garden is a point-to-point Mixture of Experts dispatch and combine kernel. It leverages NVLink for intra-node communication and RDMA for inter-node transfers. Dispatch and combine phases are separated into send and receive steps, enabling the decoder to micro-batch and overlap communication with grouped general matrix multiplication (GEMM).

A host thread continuously polls GPU states and triggers TransferEngine operations when send buffers are ready. Routing information is exchanged first, followed by computation of contiguous receive offsets per expert. Tokens are written into private buffers reusable between dispatch and combine phases, minimizing memory usage and maximizing link bandwidth utilization.
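The offset computation can be illustrated with an exclusive prefix sum: once every rank knows how many tokens each source sends to each expert, each (source, expert) pair gets a disjoint, contiguous slice of that expert's receive buffer. This is a minimal sketch under that assumption; the function name and data layout are hypothetical, and the real kernels perform this step on the GPU.

```python
def receive_offsets(token_counts):
    """Compute where each source rank writes inside each expert's receive buffer.

    token_counts[src][expert] is the number of tokens rank `src` routes to `expert`.
    Returns (offsets, totals): offsets[src][expert] is the starting slot for that
    source, and totals[expert] is the buffer length the expert needs.
    """
    num_experts = len(token_counts[0])
    running = [0] * num_experts
    offsets = []
    for per_expert in token_counts:
        offsets.append(list(running))  # exclusive prefix sum over source ranks
        for expert, count in enumerate(per_expert):
            running[expert] += count
    return offsets, running


counts = [
    [2, 0],  # rank 0 sends 2 tokens to expert 0, none to expert 1
    [1, 3],  # rank 1
    [4, 1],  # rank 2
]
offsets, totals = receive_offsets(counts)
print(offsets)
print(totals)
```

Because the slices never overlap, each sender can place its tokens with one-sided writes and no further coordination, which fits the unordered delivery model described earlier.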

On NVIDIA ConnectX 7, pplx garden’s MoE kernels achieve state-of-the-art decode latency, outperforming DeepEP on identical hardware. On AWS EFA, the same kernels deliver the first practical MoE decode latencies for trillion-parameter models, although latency is slightly higher than on ConnectX 7. Multi-node tests on AWS H200 instances with DeepSeek V3 and Kimi K2 demonstrate latency reductions at medium batch sizes, which are typical in production environments.

Comparative Overview of RDMA Solutions for LLMs

| Feature | TransferEngine (pplx garden) | DeepEP | NVSHMEM (Generic MoE) | Mooncake |
|---|---|---|---|---|
| Primary Function | Cross-platform RDMA point-to-point for LLMs | MoE all-to-all dispatch and combine | General GPU shared memory and collectives | Distributed KV cache for LLM inference |
| Hardware Compatibility | NVIDIA ConnectX 7 & AWS EFA, multi-NIC per GPU | NVIDIA ConnectX with GPU-initiated RDMA (IBGDA) | NVIDIA GPUs on RDMA fabrics including EFA | RDMA NICs in KV-centric serving stacks |
| EFA Support | Full support with peak 400 Gbps throughput | No support; requires IBGDA on ConnectX | API functional but MoE use suffers severe degradation | No EFA support reported |
| Portability for LLM Systems | Cross-vendor, unified API for ConnectX 7 and EFA | Vendor-specific, ConnectX-focused | NVIDIA-centric, not viable for EFA MoE routing | KV sharing focus, lacks cross-provider support |

Summary of Key Insights

- TransferEngine delivers a unified RDMA point-to-point abstraction compatible with both NVIDIA ConnectX 7 and AWS EFA, seamlessly managing multiple NICs per GPU.

- The library supports one-sided WriteImm operations with ImmCounter notifications, achieving peak throughput of 400 Gbps on both NIC types, matching vendor-specific solutions while maintaining portability.

- Perplexity’s team employs TransferEngine in three production scenarios: disaggregated prefill-decode with KvCache streaming, rapid reinforcement learning weight transfer updating trillion-parameter models in ~1.3 seconds, and Mixture of Experts dispatch-combine for large-scale models like Kimi K2.

- On ConnectX 7 hardware, pplx garden’s MoE kernels achieve state-of-the-art decode latency, outperforming DeepEP; on AWS EFA, they provide the first practical MoE decode latencies for trillion-parameter workloads.

- Being open source under the MIT license, TransferEngine and pplx garden empower teams to run massive MoE and dense models on heterogeneous H100 or H200 clusters across multiple cloud providers without rewriting networking stacks for each vendor.

Final Thoughts

The introduction of TransferEngine and the pplx garden toolkit marks a significant advancement for LLM infrastructure teams hindered by vendor-specific networking constraints and costly fabric upgrades. By offering a portable RDMA abstraction that achieves peak 400 Gbps throughput on both NVIDIA ConnectX 7 and AWS EFA, and supporting critical features like KvCache streaming, fast reinforcement learning weight updates, and Mixture of Experts routing, this solution directly addresses the challenges of serving trillion-parameter models in real-world environments.