Nvidia Launches Compact Grace-Blackwell Mini PC for Advanced AI Workloads

This week marks the arrival of Nvidia’s most compact Grace-Blackwell mini PC, a device initially previewed as Project Digits at last year’s CES event. Designed to meet the demanding needs of AI researchers and data scientists, this mini PC combines powerful GPU capabilities with a small form factor.

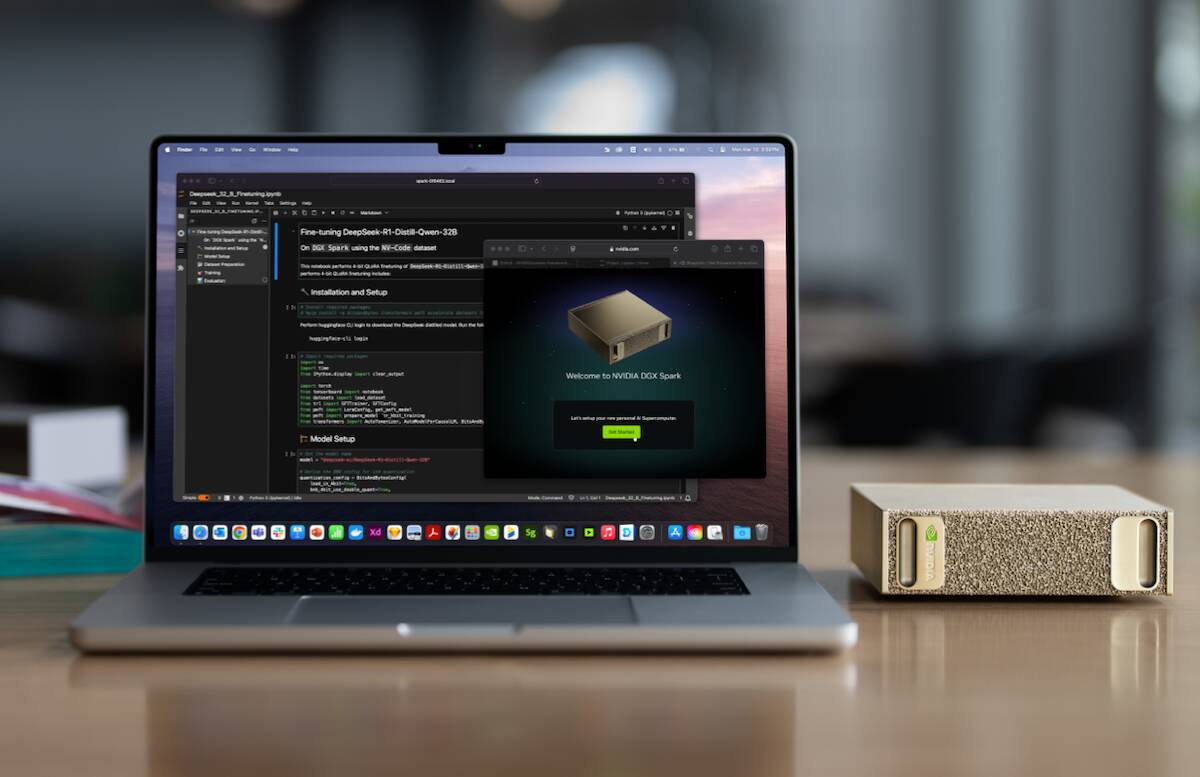

Introducing the DGX Spark: A High-Performance AI Workstation

The DGX Spark, the shipping name for Project Digits, delivers up to one petaFLOP of sparse FP4 compute. It features 128 GB of unified system memory and supports 200 Gbps networking.

Despite its compact size, the DGX Spark is positioned as a premium workstation, starting at approximately $3,000. It is not intended for general consumer use and notably does not come with Windows pre-installed. Instead, it runs a tailored version of Ubuntu Linux and will be marketed through various OEM partners under different brand names.

Target Audience and Use Cases

The DGX Spark is tailored for professionals in AI, robotics, and machine learning who require a cost-effective yet powerful platform capable of handling models with up to 200 billion parameters. Such large-scale models demand extensive memory resources, which typical consumer GPUs cannot provide. For comparison, Nvidia’s RTX Pro 6000 offers up to 96 GB of GDDR7 memory but costs over $8,000, excluding additional system expenses.
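A rough back-of-the-envelope sketch shows why memory capacity is the limiting factor. It counts only the model weights and ignores the KV cache, activations, and runtime overhead, which is a simplifying assumption. The function name `weight_footprint_gb` is hypothetical, and sizes are in decimal gigabytes.

```python
def weight_footprint_gb(params: float, bits_per_param: int) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    return params * bits_per_param / 8 / 1e9


SPARK_MEMORY_GB = 128  # unified memory, per the article
PARAMS = 200e9         # 200 billion parameters

# Compare common weight precisions against the available memory.
for bits in (16, 8, 4):
    size = weight_footprint_gb(PARAMS, bits)
    verdict = "fits" if size <= SPARK_MEMORY_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {size:6.1f} GB -> {verdict} in {SPARK_MEMORY_GB} GB")
```

Under these assumptions only the 4-bit case, at about 100 GB, fits in 128 GB, which suggests the 200-billion-parameter figure presumes heavily quantized weights.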

At launch, the DGX Spark was Nvidia’s most capable workstation system; that title has since passed to the Blackwell Ultra-based DGX Station.

Miniaturizing the Superchip: The GB10 SoC

The heart of the DGX Spark is the GB10 system-on-chip (SoC), a downsized version of the Grace-Blackwell Superchips used in Nvidia’s NVL72 rack-mounted servers. It integrates two compute dies linked by Nvidia’s proprietary NVLink interconnect, which provides 600 GB/s of chip-to-chip bandwidth. The same NVLink technology is slated to link Nvidia GPUs with Intel’s upcoming client CPUs.

The GB10 delivers up to one petaFLOP at sparse FP4 precision and approximately 31 teraFLOPS at single precision (FP32), comparable to the raw compute of an RTX 5070. The consumer RTX 5070 offers more than double the memory bandwidth but only 12 GB of GDDR7, which restricts it to far smaller models.

Innovative CPU Architecture and Memory Sharing

Unlike Nvidia’s Grace CPU, which uses Arm Neoverse cores, the GB10’s processor tile was developed in partnership with MediaTek and incorporates 20 Armv9.2 cores: ten high-performance Cortex-X925 cores and ten Cortex-A725 cores optimized for energy efficiency.

Similar to AMD’s Strix Halo SoCs and Apple’s M series chips, the GB10 combines CPU and GPU cores with a shared pool of LPDDR5x memory, achieving bandwidths exceeding twice those of traditional PC platforms. Nvidia reports a memory bandwidth of 273 GB/s for the GB10, enhancing data throughput for AI workloads.
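The 273 GB/s figure is consistent with the usual peak-bandwidth calculation: transfers per second multiplied by the bus width in bytes. The sketch below assumes a 256-bit LPDDR5x interface running at 8533 MT/s. Neither number appears in this article, so treat both as assumptions. The dual-channel DDR5-5600 desktop used as a comparison point is likewise illustrative.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in decimal GB/s."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9


# Assumed GB10 configuration: 256-bit LPDDR5x at 8533 MT/s.
gb10 = peak_bandwidth_gbs(8533, 256)

# Assumed conventional desktop: dual-channel (2 x 64-bit) DDR5-5600.
desktop = peak_bandwidth_gbs(5600, 128)

print(f"GB10 (assumed config):   {gb10:.1f} GB/s")
print(f"Desktop (assumed config): {desktop:.1f} GB/s")
print(f"Ratio: {gb10 / desktop:.1f}x")
```

With these inputs the GB10 estimate lands at about 273 GB/s, roughly three times the assumed desktop configuration, in line with the claim of more than double.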

Networking and Scalability Features

The DGX Spark’s GB10 chip integrates a ConnectX-7 network adapter equipped with dual QSFP Ethernet ports. While these ports can support high-speed networking, their primary function is to link two DGX Spark units together. This connection effectively doubles the system’s capacity for fine-tuning and inference tasks.

In this dual configuration, Nvidia claims the ability to run inference on models with up to 405 billion parameters at 4-bit precision. At that precision the weights occupy roughly 203 GB, which fits within the pair’s combined 256 GB of memory.
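As a capacity check on the paired configuration, the sketch below inverts the usual footprint calculation and estimates the largest model whose weights fit in a given memory budget. The helper `max_params_that_fit` is hypothetical, and the 20% reserve for KV cache and runtime overhead is an illustrative assumption, not an Nvidia figure.

```python
def max_params_that_fit(memory_gb: float, bits_per_param: int,
                        reserve_fraction: float = 0.0) -> float:
    """Largest parameter count whose weights fit in the usable memory."""
    usable_bytes = memory_gb * 1e9 * (1.0 - reserve_fraction)
    return usable_bytes * 8 / bits_per_param


PAIR_MEMORY_GB = 2 * 128  # two linked units with 128 GB each

ceiling = max_params_that_fit(PAIR_MEMORY_GB, 4)
with_reserve = max_params_that_fit(PAIR_MEMORY_GB, 4, reserve_fraction=0.2)

print(f"Weights-only ceiling: {ceiling / 1e9:.0f} billion parameters")
print(f"With 20% reserved:    {with_reserve / 1e9:.0f} billion parameters")
```

Either way the budget works out to a few hundred billion parameters at 4-bit precision, with some room left for working memory once a reserve is set aside.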

Availability and Market Position

Nvidia’s DGX Spark mini PCs will be available for purchase starting October 15th. This launch represents a significant step in making high-performance AI workstations more accessible to researchers and developers who require powerful yet compact solutions.