Revolutionizing AI Search with Fully Localized Processing

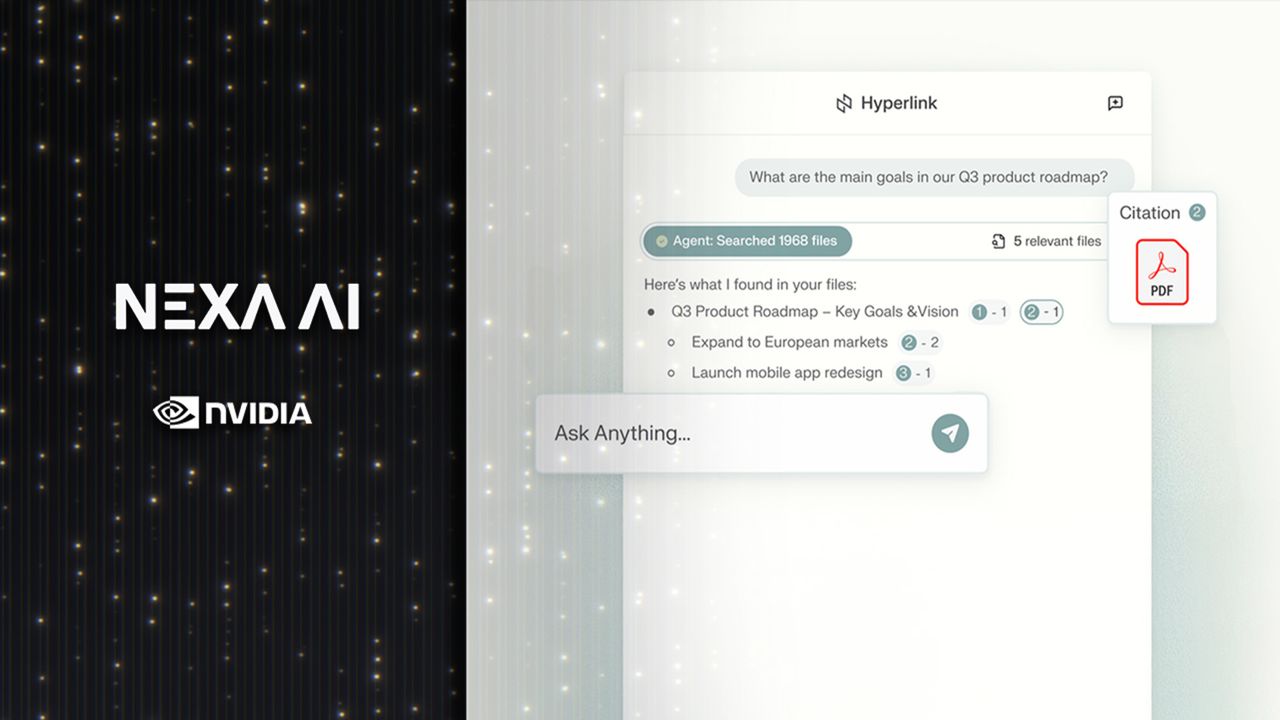

Nexa.ai has unveiled “Hyperlink”, an AI assistant that runs exclusively on local hardware. Built for Nvidia RTX-powered PCs, the application indexes large collections of personal files and turns them into organized, searchable answers without relying on cloud services.

Enhanced Privacy and Speed Through On-Device AI

Unlike conventional AI search tools that transmit queries to external servers, Hyperlink processes all data on the device. This approach shortens response times and keeps sensitive information confined to the user’s machine, addressing growing concerns over data privacy in AI applications.

Performance Boosts on Nvidia RTX Systems

Benchmarks conducted on RTX 5090 GPUs show that Hyperlink indexes data up to three times faster and runs large language model (LLM) inference twice as fast as previous versions. This efficiency lets the software scan and categorize thousands of files, including PDFs, presentations, and images, outperforming many existing AI search solutions.

Contextual Understanding Beyond Keyword Matching

Moving beyond traditional keyword-based search, Hyperlink uses LLM reasoning to interpret user intent and extract relevant content, even when filenames are vague or unrelated. This semantic comprehension mirrors the broader trend of integrating generative AI into productivity workflows, improving the relevance and accuracy of search results.
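To make the difference between filename matching and content-based search concrete, here is a minimal Python sketch. It is not Hyperlink's implementation: it ranks files by bag-of-words cosine similarity over their text, a simple stand-in for the learned embeddings and LLM reasoning the article describes. The file names, file contents, and function names are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def filename_search(query, files):
    """Return files whose *name* shares at least one token with the query."""
    q = set(tokenize(query))
    return [name for name in files if q & set(tokenize(name))]


def content_search(query, files):
    """Rank files by similarity between the query and the file *contents*."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(body))), name)
              for name, body in files.items()]
    return [name for score, name in sorted(scored, reverse=True) if score > 0]


# Hypothetical files with uninformative names.
FILES = {
    "scan_0042.pdf": "Quarterly budget forecast for the marketing team",
    "notes_final_v2.txt": "Packing list for the hiking trip",
}

print(filename_search("marketing budget", FILES))
print(content_search("marketing budget", FILES))
```

The filename search finds nothing because neither name mentions the topic, while the content search surfaces the scanned PDF. A real semantic system would go further and match paraphrases that share no words with the query, which word counts alone cannot do.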

Connecting Information Across Multiple Documents

The system excels at synthesizing information from various files, delivering structured answers with clear citations. This capability is particularly valuable for professionals managing confidential data who require both rapid insights and stringent privacy safeguards.
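One plausible way to keep citations attached to an answer drawn from several files is to number each source once and tag every retrieved passage with that number before handing the text to a language model. The Python sketch below shows only that bookkeeping step; the `Passage` type, the `build_cited_context` function, and the sample files are assumptions for illustration and do not come from Hyperlink.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # file the passage was retrieved from
    text: str    # passage content


def build_cited_context(passages):
    """Tag each passage with a citation number; each source gets one number."""
    numbers = {}  # source file -> citation number, in first-seen order
    lines = []
    for p in passages:
        n = numbers.setdefault(p.source, len(numbers) + 1)
        lines.append(f"[{n}] {p.text}")
    sources = [f"[{n}] {src}" for src, n in numbers.items()]
    return "\n".join(lines), sources


# Hypothetical passages retrieved from two different files.
retrieved = [
    Passage("q3_report.pdf", "Revenue grew in the third quarter."),
    Passage("board_deck.pptx", "Hiring is planned for next year."),
    Passage("q3_report.pdf", "Costs were flat over the same period."),
]

context, sources = build_cited_context(retrieved)
print(context)
print(sources)
```

Because both passages from the same report share one citation number, an answer generated from this context can point back to a short, deduplicated source list.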

Significant Improvements in Data Indexing and Response Times

Nvidia’s latest optimizations for RTX hardware have accelerated retrieval-augmented generation (RAG) processes, enabling dense data folders of around 1GB to be indexed in approximately five minutes, a threefold improvement over previous indexing times. Faster inference translates to quicker, more fluid interactions, streamlining tasks such as preparing for meetings, conducting research, or analyzing reports.
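The figures above imply a rough indexing throughput, which the short Python calculation below works out. It treats "around 1GB" as 1000 MB and takes the "threefold improvement" literally, so the earlier duration and both rates are derived estimates rather than reported measurements.

```python
folder_mb = 1000   # "around 1GB", assumed to be 1000 MB
new_minutes = 5    # approximate indexing time stated in the article
speedup = 3        # "threefold improvement"

# Implied earlier duration and the two throughput rates.
old_minutes = new_minutes * speedup
new_rate = folder_mb / (new_minutes * 60)  # MB per second
old_rate = folder_mb / (old_minutes * 60)  # MB per second

print(f"Implied previous indexing time: {old_minutes} minutes")
print(f"Current throughput: {new_rate:.2f} MB/s")
print(f"Previous throughput: {old_rate:.2f} MB/s")
```

Under these assumptions the earlier version would have needed about 15 minutes for the same folder, and throughput rises from roughly 1.1 MB/s to roughly 3.3 MB/s.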

Balancing Convenience with Data Security

By combining local AI reasoning with GPU acceleration, Hyperlink offers a compelling solution for users who prioritize data confidentiality without sacrificing the benefits of cutting-edge generative AI. This fusion of speed, privacy, and contextual intelligence positions Hyperlink as a leading tool for secure, efficient knowledge management on personal devices.

- Hyperlink operates entirely on local hardware, so queries and files never leave the device.

- Indexes extensive data collections on RTX-equipped PCs within minutes.

- Leverages Nvidia’s latest enhancements to double LLM inference speed.