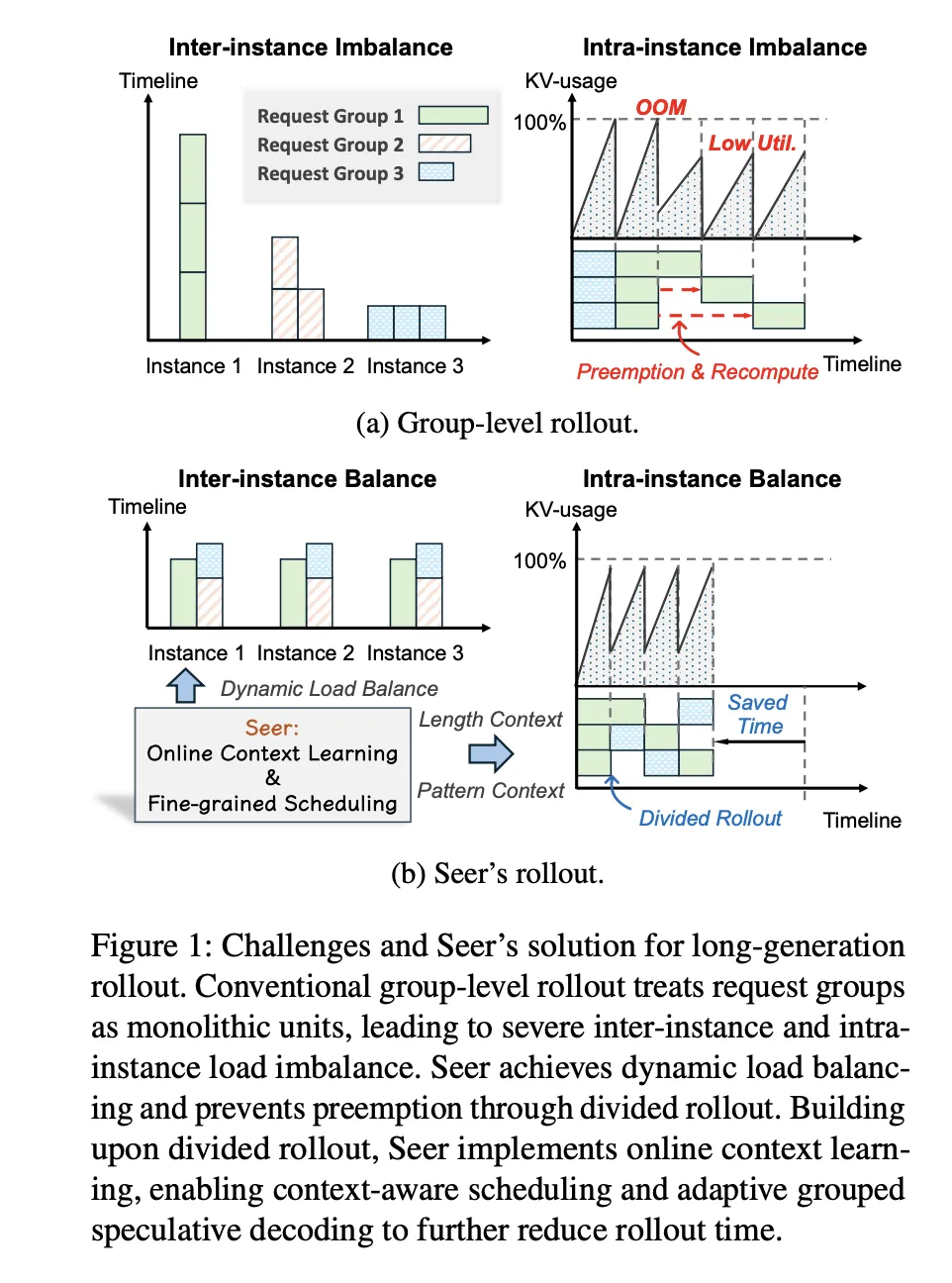

How can reinforcement learning (RL) for large reasoning models avoid stalling on a handful of very long, slow rollouts while GPUs sit underutilized? Researchers from Moonshot AI and Tsinghua University have introduced ‘Seer’, an online context learning framework that targets a key bottleneck in RL workflows for large language models. In synchronous on-policy RL setups, the rollout phase typically consumes most of the iteration time. Seer restructures this phase and reports rollout throughput gains of 74% to 97% and tail latency reductions of 75% to 93% against a strong synchronous baseline, veRL.

Understanding the Challenges of Synchronous Rollouts in Reasoning Models

Contemporary RL workloads for reasoning models generate long chain-of-thought outputs. In their experiments, the Seer team applied the GRPO algorithm to three models: Moonlight, Qwen2 VL 72B, and Kimi K2. The workloads ran on 32 compute nodes, each equipped with 8 H800 GPUs. The three tasks used 32, 128, and 256 GPUs respectively, with 400, 600, and 800 prompts per iteration and 8 or 16 responses per prompt.

The maximum generation lengths are large: up to 65,536 tokens for Moonlight, 40,960 tokens for Qwen2 VL 72B, and 98,304 tokens for Kimi K2. As decoding progresses, the KVCache footprint of a single chain-of-thought request can grow from a few hundred megabytes to several tens of gigabytes. The cache grows with every generated token, so the system must either limit concurrency or preempt ongoing requests, and preemption triggers costly re-decoding.
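To make the scale of that growth concrete, here is a rough back-of-the-envelope sketch. It assumes a standard attention layout that stores one key and one value vector per layer, per KV head, per token. The configuration values (`num_layers`, `num_kv_heads`, `head_dim`) are hypothetical and are not taken from the article or from any of the three models, which may use different cache layouts.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KVCache size of one request under a standard K/V layout."""
    # Factor 2: one key vector and one value vector per layer, KV head, and token.
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return num_tokens * per_token


# Hypothetical configuration, chosen only for illustration.
cfg = dict(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_value=2)

for tokens in (1_000, 40_960, 98_304):
    gib = kv_cache_bytes(tokens, **cfg) / 2**30
    print(f"{tokens:>7} tokens -> {gib:6.2f} GiB")
```

Under these assumed numbers the cache grows in direct proportion to the number of tokens held, from roughly a third of a GiB at 1,000 tokens to 30 GiB at 98,304 tokens, which is the same order of magnitude the article describes.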

In this context, the researchers define “tail requests” as the slowest 10% of requests to complete during a rollout. For Moonlight and Qwen2 VL 72B, these tail requests alone can account for up to half of the total rollout duration in the baseline system. Since rollout time dominates the iteration cost, these stragglers significantly impede the overall reinforcement learning speed.
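One plausible way to quantify this effect from a rollout trace is sketched below. It measures the share of wall-clock time that elapses after the fastest 90% of requests have finished. The function name and the sample finish times are made up for illustration; the article does not say exactly how the researchers computed their figure.

```python
import math


def tail_time_share(finish_times, tail_fraction=0.10):
    """Share of rollout wall time spent after the non-tail requests finish."""
    ordered = sorted(finish_times)
    total = ordered[-1]  # the rollout ends when the slowest request ends
    n_tail = max(1, math.ceil(len(ordered) * tail_fraction))
    n_fast = len(ordered) - n_tail
    # Time at which the last non-tail request completed (0 if all are tail).
    t_fast = ordered[n_fast - 1] if n_fast > 0 else 0
    return (total - t_fast) / total


# Made-up finish times in seconds: nine quick requests and one straggler.
times = [10, 12, 15, 18, 20, 22, 25, 28, 30, 60]
print(tail_time_share(times))  # 0.5
```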

Seer’s System Design: Leveraging Mooncake and vLLM for Efficiency

Seer keeps the same RL algorithm as synchronous veRL: each training iteration uses data exclusively from the current rollout, so training stays on-policy. The training phase uses Megatron for distributed optimization, while the rollout phase uses an in-house implementation of vLLM as the inference engine.

To enable aggressive and flexible request scheduling, Seer builds upon a Global KVCache Pool, which is based on the Mooncake disaggregated KVCache architecture already deployed in production for the Kimi model. Mooncake offers a two-tiered KVCache storage system combining DRAM and SSD, shared across inference nodes. This architecture allows Seer to migrate requests seamlessly between nodes without the need to recompute prefill states.

Seer introduces three pivotal mechanisms atop this infrastructure:

- Segmented Rollout Processing

- Context-Aware Scheduling

- Adaptive Grouped Speculative Decoding

These components are coordinated through a Request Buffer, a Context Manager, and an Inference Engine Pool, all interfacing with the Global KVCache Pool.

Segmented Rollout and Dynamic Scheduling for Balanced Workloads

Traditional synchronous rollout methods assign entire GRPO groups (collections of requests sharing a prompt) to specific inference instances, which retain these groups until all responses complete. Because output lengths vary widely, this approach often results in load imbalance and long-running straggler requests.

Seer innovates by decomposing each group into individual requests and further splitting each request into smaller chunks based on token generation length. For example, the scheduler might limit each chunk to 8,000 tokens. After processing a chunk, the request is re-queued until it reaches an end-of-sequence token or its maximum token limit. This fine-grained chunking enables more flexible scheduling and better resource utilization.

Because the KVCache resides in the shared Global KVCache Pool, requests can migrate between instances at chunk boundaries without re-executing expensive prefill computations. The scheduler dynamically manages concurrency to maximize memory usage while minimizing preemptions, smoothing KVCache utilization throughout the rollout.
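The chunk-and-requeue loop described above can be sketched as a small round-based simulation. This is a toy model under stated assumptions, not Seer's scheduler: `Request`, `run_chunked`, and the hidden `target_len` (which stands in for reaching an end-of-sequence token) are hypothetical names, and the KVCache itself is not modeled. The sketch only assumes, as the article states, that a request can resume on a different instance at a chunk boundary.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    req_id: str
    target_len: int   # hidden true output length; stands in for hitting EOS
    max_tokens: int
    generated: int = 0

    def done(self):
        return self.generated >= min(self.target_len, self.max_tokens)


def run_chunked(requests, num_instances, chunk_size=8000):
    """Each round, every instance decodes at most one chunk of one request."""
    queue = deque(requests)
    placements = {r.req_id: [] for r in requests}
    while queue:
        batch = [queue.popleft() for _ in range(min(num_instances, len(queue)))]
        for instance, req in enumerate(batch):
            limit = min(req.target_len, req.max_tokens)
            req.generated = min(req.generated + chunk_size, limit)
            placements[req.req_id].append(instance)
            if not req.done():
                queue.append(req)  # re-queue at the chunk boundary
    return placements


reqs = [
    Request("b", target_len=20000, max_tokens=65536),
    Request("a", target_len=3000, max_tokens=65536),
    Request("c", target_len=9000, max_tokens=65536),
]
print(run_chunked(reqs, num_instances=2))
# {'b': [0, 1, 1], 'a': [1], 'c': [0, 0]}
```

In this run, request `b` decodes its first chunk on instance 0 and its remaining chunks on instance 1, which is the kind of migration the shared pool is meant to make cheap.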

Context-Aware Scheduling Driven by Group Length Correlations

The team observed that requests within the same prompt group tend to have correlated output lengths. Seer exploits this by designating one request per group as a “speculative request”. These requests go into a high-priority queue and are scheduled smallest-first, based on tokens generated so far. Short requests complete quickly and leave the queue, so the requests that remain reveal which groups are likely to produce long tail requests.

The Context Manager maintains an evolving length estimate for each group, updating it to the maximum length observed among completed requests. If no requests have finished, it conservatively uses the original maximum token limit. Once speculative requests are underway or completed, Seer schedules the remaining requests using an approximate longest-first policy at the group level. This strategy closely approximates an ideal scheduler that would have prior knowledge of all output lengths, significantly improving throughput and reducing tail latency.
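The bookkeeping described in this section can be sketched in a few lines. This is a minimal illustration, assuming group-level estimates only; the class and function names (`ContextManager`, `pick_speculative`, `order_groups_longest_first`) are hypothetical and do not come from Seer's code.

```python
class ContextManager:
    """Tracks a running output-length estimate per prompt group."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens
        self.finished_max = {}  # group_id -> longest completed length so far

    def record_finished(self, group_id, length):
        prev = self.finished_max.get(group_id, 0)
        self.finished_max[group_id] = max(prev, length)

    def estimate(self, group_id):
        # No request finished yet: conservatively assume the max token limit.
        return self.finished_max.get(group_id, self.max_tokens)


def pick_speculative(queue):
    """Smallest-first over (group_id, tokens_generated_so_far) pairs."""
    return min(queue, key=lambda item: item[1])


def order_groups_longest_first(group_ids, ctx):
    """Approximate longest-first ordering using the current estimates."""
    return sorted(group_ids, key=ctx.estimate, reverse=True)


ctx = ContextManager(max_tokens=65536)
ctx.record_finished("g1", 1200)
ctx.record_finished("g1", 900)
ctx.record_finished("g2", 30000)

print(pick_speculative([("g1", 500), ("g2", 120), ("g3", 4000)]))  # ('g2', 120)
print(order_groups_longest_first(["g1", "g2", "g3"], ctx))  # ['g3', 'g2', 'g1']
```

Group `g3` has no finished request, so it inherits the maximum token limit and is scheduled first, which matches the conservative default the article describes.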

Accelerating Decoding with Adaptive Grouped Speculative Decoding

To further speed up decoding, especially for long tail requests, Seer incorporates Adaptive Grouped Speculative Decoding (AGSD). This method introduces a Distributed Grouped Draft System (DGDS), which maintains a Compressed Suffix Tree for each group, aggregating token sequences from all requests within that group.

Inference instances asynchronously append generated tokens to the DGDS, periodically retrieving updated suffix trees to perform local speculative decoding based on shared token pattern statistics. The system dynamically adjusts draft length and the number of speculative paths according to model architecture, batch size, and observed acceptance lengths.
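The idea of drafting from sibling responses can be illustrated with a much simpler stand-in. The sketch below replaces the compressed suffix tree with a plain linear scan over the group's token sequences: it finds the longest suffix of the current context that also appears in a sibling sequence and proposes the tokens that followed it. The function names and parameters are hypothetical, and a real system would use an index rather than a scan.

```python
def propose_draft(context, group_sequences, max_match=4, draft_len=4):
    """Propose tokens that followed the longest matching suffix of `context`."""
    for n in range(min(max_match, len(context)), 0, -1):
        suffix = context[-n:]
        for seq in group_sequences:
            # Only positions with at least one following token are considered.
            for i in range(len(seq) - n):
                if seq[i:i + n] == suffix:
                    return seq[i + n:i + n + draft_len]
    return []


def accepted_prefix(draft, target_tokens):
    """Count leading draft tokens that match what the target model emits."""
    count = 0
    for d, t in zip(draft, target_tokens):
        if d != t:
            break
        count += 1
    return count


# Token ids from two sibling responses in the same group (made-up values).
group = [[1, 2, 3, 4, 5, 6, 7], [9, 2, 3, 8]]
draft = propose_draft([0, 2, 3], group)
print(draft)                                 # [4, 5, 6, 7]
print(accepted_prefix(draft, [4, 5, 9, 9]))  # 2
```

Here `target_tokens` stands in for the tokens the target model would actually produce during verification; only the matching prefix of the draft is kept.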

For dense and Mixture of Experts (MoE) models, Seer precomputes speculation thresholds to constrain draft depth per batch. During late tail stages, when concurrency is low, the system increases draft depth and enables multi-path drafting to maximize accepted tokens per decoding step.
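A hedged sketch of how such an adaptive policy might look is shown below. The rule itself (a fixed per-step budget of speculative tokens divided by batch size, with multi-path drafting switched on for very small batches) and every parameter value are assumptions made for illustration; the article does not give Seer's actual thresholds or formula.

```python
def choose_draft_params(batch_size, token_budget=64, max_depth=8, multipath_max_batch=4):
    """Pick (draft_depth, num_paths) for one decoding step.

    token_budget, max_depth, and multipath_max_batch are hypothetical knobs.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    # Larger batches leave less room per request, so drafts get shallower.
    depth = max(1, min(max_depth, token_budget // batch_size))
    # Late in the rollout, few requests remain: draft several paths at once.
    num_paths = 2 if batch_size <= multipath_max_batch else 1
    return depth, num_paths


for bs in (64, 16, 2):
    print(bs, choose_draft_params(bs))
# 64 (1, 1)
# 16 (4, 1)
# 2 (8, 2)
```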

Experimental ablation studies reveal that segmented rollout alone boosts throughput by up to 35% over the baseline. Adding context-aware scheduling raises this improvement to 47%, while incorporating adaptive grouped speculative decoding culminates in a total speedup of 77% to 87% in the evaluated iterations.

Comprehensive Impact on Reinforcement Learning Training Efficiency

Seer was tested on three RL tasks using Moonlight, Qwen2 VL 72B, and Kimi K2 models, each undergoing 10 rollout iterations. The system demonstrated rollout throughput improvements ranging from 74% to 97% compared to veRL, while maintaining the same RL algorithm and vLLM inference engine.

Tail latency fell by 75% to 93%. In memory-constrained scenarios, the baseline system spent nearly half of its rollout time processing the slowest 10% of requests. Seer removes most of this bottleneck by combining segmented rollout, context-aware scheduling, and adaptive grouped speculative decoding, all supported by the Mooncake-based Global KVCache Pool.

Essential Insights and Practical Implications

- Rollout Phase Bottleneck: Seer addresses the rollout stage in synchronous RL, which can consume 63% to 87% of iteration time, primarily due to long tail requests and fragmented KVCache usage.

- Innovative Mechanisms: The system integrates segmented rollout, context-aware scheduling, and adaptive grouped speculative decoding to leverage output length correlations and token pattern similarities among GRPO responses sharing prompts.

- Fine-Grained Scheduling with Global KVCache: By splitting requests into manageable chunks and enabling migration across a shared KVCache pool, Seer maintains synchronous on-policy RL while optimizing GPU memory utilization and minimizing preemptions.

- Online Context Utilization: Length statistics derived from speculative requests guide scheduling decisions, approximating an oracle scheduler and significantly reducing tail latency.

- Reported Performance Gains: On production-scale RL workloads, Seer delivers 74% to 97% higher rollout throughput and cuts long tail latency by 75% to 93% compared to veRL, a state-of-the-art synchronous baseline built on vLLM.

Final Thoughts

Seer represents a significant advancement in reinforcement learning system design by optimizing the rollout phase without altering the underlying GRPO algorithm, thereby preserving on-policy guarantees and reproducibility. Its combination of segmented rollout, context-aware scheduling, and adaptive grouped speculative decoding offers a scalable and practical framework for RL systems that rely on extensive chain-of-thought reasoning and large KVCache footprints. This work highlights that system-level online context learning is now as vital as model architecture innovations for efficiently scaling reasoning-based reinforcement learning.