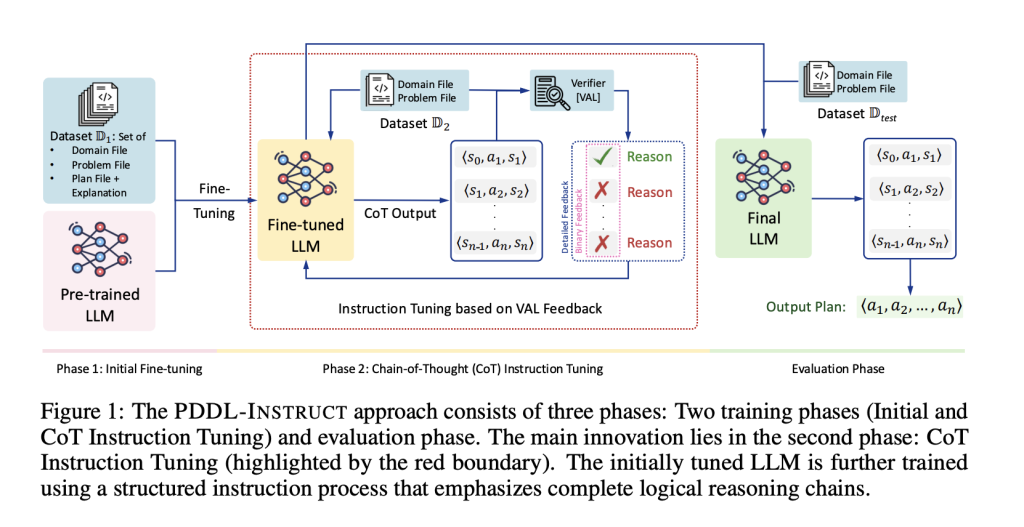

Can an 8-billion-parameter language model produce logically valid multi-step plans rather than plausible-sounding but flawed ones? Researchers at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) have introduced PDDL-INSTRUCT, an instruction-tuning framework that couples logical chain-of-thought reasoning with external plan verification using the VAL plan validator. The approach substantially improves the symbolic planning ability of large language models (LLMs). On the PlanBench benchmark, a fine-tuned Llama-3-8B model reached 94% valid plans in the Blocksworld domain and showed large gains on the harder Mystery Blocksworld and Logistics domains. Overall, the framework improves valid plan generation by up to 66 percentage points over baseline models.

Addressing Logical Flaws in LLM-Generated Plans

One of the persistent challenges in using LLMs for planning is their tendency to produce plans that sound reasonable but fail logical consistency checks. PDDL-INSTRUCT addresses this by combining explicit reasoning about the semantics of states and actions with rigorous ground-truth validation:

- Failure Explanation Training: The model learns to identify and articulate the reasons behind plan failures, such as unmet preconditions, incorrect effects, frame violations, or failure to achieve the goal state.

- Stepwise Logical Reasoning: Prompts enforce a detailed chain of thought in which the model checks each action's preconditions and applies its add and delete effects, explicitly tracing every state-action-state transition ⟨sₜ, aₜ, sₜ₊₁⟩.

- External Plan Validation: The established VAL plan validator checks every step of the generated plan and returns either binary feedback (valid/invalid) or detailed diagnostics identifying which precondition or effect failed. Detailed feedback yields the largest performance gains.

- Two-Phase Optimization: The training process is split into two stages: first, refining the reasoning chains by penalizing state-transition errors; second, enhancing the overall accuracy of the final plan.
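The state tracing and validator feedback described above can be sketched with a tiny STRIPS-style Blocksworld model. This is a minimal illustration under our own assumptions, not the authors' code and not VAL itself: the `Action` class, the `validate_plan` function, and the string encoding of facts are hypothetical. Each step checks the action's preconditions against the current state sₜ, then removes its delete effects and adds its add effects to obtain sₜ₊₁; the `detailed` flag switches between a binary verdict and a diagnostic naming the unmet precondition or goal.

```python
from dataclasses import dataclass

# Hypothetical STRIPS-style ground action (not VAL's or the paper's data model).
@dataclass(frozen=True)
class Action:
    name: str
    pre: frozenset     # facts that must hold in s_t
    add: frozenset     # facts made true in s_{t+1}
    delete: frozenset  # facts made false in s_{t+1}

def unstack(x, y):
    return Action(f"unstack {x} {y}",
                  pre=frozenset({f"on {x} {y}", f"clear {x}", "handempty"}),
                  add=frozenset({f"holding {x}", f"clear {y}"}),
                  delete=frozenset({f"on {x} {y}", f"clear {x}", "handempty"}))

def putdown(x):
    return Action(f"putdown {x}", pre=frozenset({f"holding {x}"}),
                  add=frozenset({f"ontable {x}", f"clear {x}", "handempty"}),
                  delete=frozenset({f"holding {x}"}))

def pickup(x):
    return Action(f"pickup {x}",
                  pre=frozenset({f"ontable {x}", f"clear {x}", "handempty"}),
                  add=frozenset({f"holding {x}"}),
                  delete=frozenset({f"ontable {x}", f"clear {x}", "handempty"}))

def stack(x, y):
    return Action(f"stack {x} {y}", pre=frozenset({f"holding {x}", f"clear {y}"}),
                  add=frozenset({f"on {x} {y}", f"clear {x}", "handempty"}),
                  delete=frozenset({f"holding {x}", f"clear {y}"}))

def validate_plan(init, goal, plan, detailed=True):
    """Simulate the plan step by step; return 'valid', 'invalid', or a diagnostic."""
    state = frozenset(init)
    for t, a in enumerate(plan):
        missing = a.pre - state
        if missing:
            return f"step {t} ({a.name}): unmet precondition(s) {sorted(missing)}" if detailed else "invalid"
        # s_{t+1} = (s_t - delete) | add; facts not mentioned carry over unchanged (frame).
        state = (state - a.delete) | a.add
    unmet = frozenset(goal) - state
    if unmet:
        return f"goal not reached: missing {sorted(unmet)}" if detailed else "invalid"
    return "valid"

init = {"on a b", "ontable b", "clear a", "handempty"}
goal = {"on b a"}
good = [unstack("a", "b"), putdown("a"), pickup("b"), stack("b", "a")]
bad = [pickup("b"), stack("b", "a")]

print(validate_plan(init, goal, good))                  # valid
print(validate_plan(init, goal, bad))                   # step 0 (pickup b): unmet precondition(s) ['clear b']
print(validate_plan(init, goal, bad, detailed=False))   # invalid
```

The detailed message carries exactly the information a model needs to repair the plan (block b is not clear), whereas the binary verdict only says that something failed somewhere.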

Benchmarking Performance Across Challenging Domains

The effectiveness of PDDL-INSTRUCT was evaluated using the PlanBench suite, which includes:

- Blocksworld: A classic planning domain where the tuned Llama-3-8B model achieved up to 94% valid plans.

- Mystery Blocksworld: A more difficult variant with obfuscated predicate names designed to prevent pattern matching. Prior models typically scored below 5% validity here, while PDDL-INSTRUCT improved validity by as much as 64×.

- Logistics: Another complex domain where the framework significantly boosted the proportion of valid plans.
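Why obfuscation defeats pattern matching without changing the underlying problem can be shown with a small, hypothetical sketch: a consistent renaming of predicate and action symbols maps a valid plan to a valid plan, so validity depends only on the logical structure, not on familiar words like "stack". The nonsense names in `RENAMING` are invented for illustration and are not the vocabulary PlanBench uses; `is_valid` is a toy simulator, not VAL.

```python
# Facts are tuples (predicate, *objects). A ground action is a tuple
# (head, preconditions, add effects, delete effects).

def pickup(x):
    pre = {("ontable", x), ("clear", x), ("handempty",)}
    return ("pickup", x), pre, {("holding", x)}, set(pre)

def stack(x, y):
    pre = {("holding", x), ("clear", y)}
    return ("stack", x, y), pre, {("on", x, y), ("clear", x), ("handempty",)}, set(pre)

def is_valid(init, goal, plan):
    """Valid iff every precondition holds when its action runs and the goal holds at the end."""
    state = set(init)
    for _head, pre, add, delete in plan:
        if not pre <= state:
            return False
        state = (state - delete) | add
    return goal <= state

# Hypothetical obfuscation table: invented words, not PlanBench's vocabulary.
RENAMING = {"pickup": "glorp", "stack": "vex", "ontable": "zind", "clear": "quil",
            "handempty": "murn", "holding": "tesk", "on": "brav"}

def rename_facts(facts):
    # Rename only the predicate symbol; object names (a, b) stay the same.
    return {(RENAMING[f[0]],) + f[1:] for f in facts}

def rename_action(action):
    head, pre, add, delete = action
    return ((RENAMING[head[0]],) + head[1:], rename_facts(pre),
            rename_facts(add), rename_facts(delete))

init = {("ontable", "a"), ("clear", "a"), ("ontable", "b"), ("clear", "b"), ("handempty",)}
goal = {("on", "a", "b")}
plan = [pickup("a"), stack("a", "b")]
obf_plan = [rename_action(a) for a in plan]

print(is_valid(init, goal, plan))                                  # True
print(is_valid(rename_facts(init), rename_facts(goal), obf_plan))  # True
print(is_valid(init, goal, [stack("a", "b")]))                     # False
print([head for head, *_ in obf_plan])  # [('glorp', 'a'), ('vex', 'a', 'b')]
```

A model that has memorized "pick up, then stack" as surface text gains nothing from that habit in the renamed domain; it has to reason from the preconditions and effects, which is what the stepwise state tracing trains.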

Across these domains, the approach consistently outperformed untuned baselines, by as much as 66 percentage points in plan validity. Detailed validator feedback proved more beneficial than simple binary signals, and longer feedback budgets (more rounds of validation and refinement) improved results further.
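The results above mix two kinds of improvement: absolute gains in percentage points and relative factors such as 64×. The short calculation below uses made-up validity rates, not figures from the paper, to show why the two are not interchangeable: a very low baseline can turn a modest absolute gain into a large multiplicative factor. The helper names are hypothetical.

```python
def absolute_gain_pp(baseline: float, tuned: float) -> float:
    """Absolute improvement in percentage points (rates given as fractions in [0, 1])."""
    return round((tuned - baseline) * 100, 1)

def relative_factor(baseline: float, tuned: float) -> float:
    """Multiplicative improvement: how many times higher the tuned rate is."""
    if baseline == 0:
        return float("inf")  # any success from a zero baseline is an unbounded factor
    return round(tuned / baseline, 1)

# Made-up validity rates for illustration only; these are NOT results from the paper.
scenarios = {"low baseline": (0.02, 0.30), "high baseline": (0.45, 0.90)}

for name, (base, tuned) in scenarios.items():
    print(f"{name}: +{absolute_gain_pp(base, tuned)} pp, {relative_factor(base, tuned)}x")
# low baseline: +28.0 pp, 15.0x
# high baseline: +45.0 pp, 2.0x
```

The second scenario has the larger absolute gain but the much smaller factor, so an "up to 66 percentage points" figure and an "up to 64×" figure describe different quantities and should not be compared directly.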

Implications and Future Directions

PDDL-INSTRUCT shows how integrating logical reasoning with external verification can substantially improve the planning capabilities of LLMs. The current implementation focuses on classical PDDL domains such as Blocksworld and Logistics and depends on the VAL tool as an external oracle, but results such as 94% valid plans on Blocksworld point to a promising neuro-symbolic training paradigm. The method grounds each reasoning step in formal semantics and automates correctness checks, which makes it well suited to agent systems that can incorporate a verifier in their workflow.
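For an agent that can call a verifier, such a workflow reduces to a generate, validate, and refine loop with a bounded number of feedback rounds. The sketch below is hypothetical throughout: `propose` stands in for an LLM call (here a scripted stub that simply returns canned plans in order), and `toy_verify` is a toy stand-in for an external validator such as VAL that checks only the gripper constraints and returns a diagnostic string or `None`. The `max_rounds` parameter plays the role of the feedback budget.

```python
from typing import Callable, Optional

Plan = list[str]

def refine_with_verifier(propose: Callable[[Optional[str]], Plan],
                         verify: Callable[[Plan], Optional[str]],
                         max_rounds: int) -> tuple[Optional[Plan], int]:
    """Generate-validate-refine loop; returns (verified plan or None, rounds used)."""
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        plan = propose(feedback)   # the planner sees the previous diagnostic
        feedback = verify(plan)    # None means the verifier accepted the plan
        if feedback is None:
            return plan, round_no
    return None, max_rounds

def toy_verify(plan: Plan) -> Optional[str]:
    """Toy stand-in for VAL: checks only the hand-empty / holding constraints."""
    holding = False
    for t, step in enumerate(plan):
        grabs = step.startswith(("pickup", "unstack"))
        if grabs and holding:
            return f"step {t} ({step}): hand is not empty"
        if not grabs and not holding:
            return f"step {t} ({step}): no block is being held"
        holding = grabs
    return "plan ends while holding a block" if holding else None

def make_scripted_proposer(candidates: list[Plan]):
    """Stub for an LLM call: returns canned plans in order and records the feedback it saw."""
    attempts = iter(candidates)
    seen: list[Optional[str]] = []
    def propose(feedback: Optional[str]) -> Plan:
        seen.append(feedback)
        return next(attempts)
    return propose, seen

candidates = [
    ["unstack a b", "pickup b", "stack b a"],               # flawed first attempt
    ["unstack a b", "putdown a", "pickup b", "stack b a"],  # repaired attempt
]

for budget in (1, 2):
    propose, seen = make_scripted_proposer(candidates)
    plan, rounds = refine_with_verifier(propose, toy_verify, max_rounds=budget)
    print(budget, plan, rounds, seen)
```

With a budget of one round the loop gives up after the first flawed plan; with two rounds the diagnostic "step 1 (pickup b): hand is not empty" is passed back and the repaired plan is accepted. A real planner would condition its next proposal on that message, which the scripted stub does not do.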

Looking ahead, extending this framework to handle more complex planning scenarios involving temporal constraints, numeric variables, and cost optimization remains an open challenge. Nonetheless, PDDL-INSTRUCT sets a strong foundation for future research aiming to combine the flexibility of LLMs with the rigor of symbolic planning.