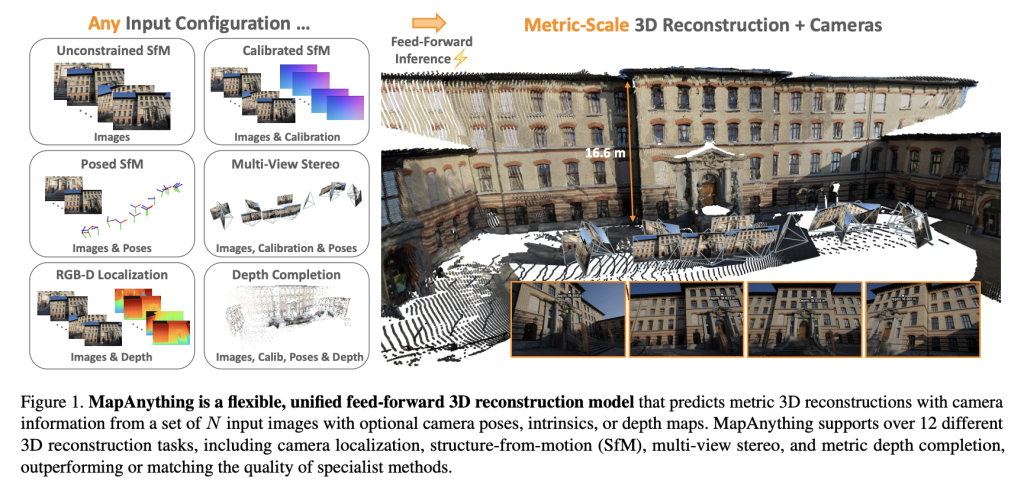

Researchers from Meta Reality Labs and Carnegie Mellon University have released MapAnything, a transformer-based framework that directly infers factored metric 3D scene geometry from images and optional sensor data. It is distributed under the Apache 2.0 license together with complete training and evaluation code. Unlike traditional specialized pipelines, it supports over a dozen 3D vision tasks in a single forward pass.

The Need for an All-in-One 3D Reconstruction Framework

Traditional image-based 3D reconstruction has long depended on multi-stage pipelines that combine feature extraction, two-view pose estimation, bundle adjustment, multi-view stereo, or monocular depth prediction. These modular approaches are effective, but they often demand extensive task-specific tuning, optimization, and complex post-processing.

While recent transformer-based models like DUSt3R, MASt3R, and VGGT have simplified certain aspects of this process, they remain constrained by fixed view counts, rigid camera assumptions, or reliance on tightly coupled representations that require costly optimization.

MapAnything breaks these barriers by:

- Handling up to 2,000 input images simultaneously during inference.

- Incorporating auxiliary inputs such as camera intrinsics, poses, and depth maps flexibly.

- Generating direct metric 3D reconstructions without the need for bundle adjustment.

Its factored scene representation (ray directions, depth, camera poses, and a global scale parameter) makes it more modular and adaptable than previous methods.

Innovative Architecture and Scene Representation

At the heart of MapAnything lies a multi-view alternating-attention transformer. Each input image is encoded using DINOv2 ViT-L features, while optional inputs like rays, depth, and poses are embedded into the same latent space through lightweight CNNs or MLPs. A trainable scale token facilitates metric normalization across multiple views.
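As a rough illustration of the attention pattern, the sketch below assumes that "alternating attention" means switching between attention within each view and attention across all views' tokens, as in VGGT-style designs. It uses a single head with identity projections in NumPy. The function names and layer count are hypothetical and are not taken from the MapAnything code.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens):
    """Single-head self-attention with identity projections and a residual.

    tokens: (batch, n_tokens, dim). Attention never crosses the batch axis.
    """
    dim = tokens.shape[-1]
    scores = tokens @ tokens.transpose(0, 2, 1) / np.sqrt(dim)
    return tokens + softmax(scores, axis=-1) @ tokens

def alternating_attention(tokens, num_layers=2):
    """Alternate per-view and global attention (assumed pattern).

    tokens: (views, patches, dim).
    """
    views, patches, dim = tokens.shape
    for _ in range(num_layers):
        # Per-view attention: each view only attends to its own patches.
        tokens = self_attention(tokens)
        # Global attention: flatten all views into one sequence.
        flat = tokens.reshape(1, views * patches, dim)
        tokens = self_attention(flat).reshape(views, patches, dim)
    return tokens
```

The global step is what lets information from one view influence the tokens of another; the per-view step alone keeps views independent.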

The model outputs a factored representation that includes:

- Per-view ray directions corresponding to camera calibration.

- Depth values along rays, predicted up to an unknown scale.

- Camera poses relative to a reference viewpoint.

- A single metric scale factor that aligns local reconstructions into a consistent global coordinate frame.

This explicit factorization eliminates redundancy and enables the model to seamlessly address tasks such as monocular depth estimation, multi-view stereo, structure-from-motion (SfM), and depth completion without requiring specialized output heads.
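To make the factorization concrete, here is a minimal sketch of how the four outputs could be recombined into metric 3D points for one view. It assumes unit ray directions in the camera frame, a 4x4 camera-to-reference pose whose translation is also up to scale, and a scalar metric scale applied last. The function name and array layouts are illustrative and are not the released API.

```python
import numpy as np

def compose_metric_points(rays, depth, cam_to_ref, metric_scale):
    """Combine factored outputs into metric points in the reference frame.

    rays:         (H, W, 3) unit ray directions in the camera frame
    depth:        (H, W) up-to-scale depth along each ray
    cam_to_ref:   (4, 4) camera-to-reference pose (up-to-scale translation)
    metric_scale: scalar that converts the whole scene to metric units
    """
    # Up-to-scale points in the camera's own frame.
    local_points = rays * depth[..., None]
    rotation = cam_to_ref[:3, :3]
    translation = cam_to_ref[:3, 3]
    # Move the points into the shared reference frame.
    ref_points = local_points @ rotation.T + translation
    # A single global factor makes the reconstruction metric.
    return metric_scale * ref_points
```

Because each factor is separate, a known quantity (for example, calibrated rays or a sensor depth map) can in principle be substituted for the predicted one without touching the others.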

Robust Training Methodology

MapAnything was trained on a diverse collection of 13 datasets spanning indoor, outdoor, and synthetic environments, including BlendedMVS, Mapillary Planet-Scale Depth, ScanNet++, and TartanAirV2. Two model variants are available:

- An Apache 2.0 licensed version trained on six datasets.

- A CC BY-NC licensed version trained on all thirteen datasets, offering enhanced performance.

Key training techniques include:

- Probabilistic input dropout: Geometric inputs such as rays, depth, and poses are randomly dropped during training to improve robustness across varying input configurations.

- Covisibility-based sampling: Ensures that input views have sufficient overlap, enabling reconstruction with over 100 views.

- Factored loss functions in log-space: Depth, scale, and pose are optimized using scale-invariant and robust regression losses to enhance training stability.
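Two of these techniques can be sketched in a few lines. The snippet below is a simplified illustration under stated assumptions: the keep probability is a made-up hyperparameter, and the loss is a plain L1 difference of logarithms, which is one common way to work in log-space. The article does not specify the exact probabilities or loss formulation used for MapAnything.

```python
import numpy as np

GEOMETRIC_INPUTS = ("rays", "depth", "poses")

def sample_input_mask(rng, keep_prob=0.5):
    """Randomly decide which optional geometric inputs to feed this step.

    keep_prob is a hypothetical value chosen for illustration.
    """
    return {name: bool(rng.random() < keep_prob) for name in GEOMETRIC_INPUTS}

def log_l1_loss(pred, target, eps=1e-8):
    """L1 loss between log(pred) and log(target) for positive quantities.

    In log-space, the penalty depends on the ratio pred / target rather
    than on the absolute difference, so near and far depths are weighted
    comparably.
    """
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    return float(np.mean(np.abs(np.log(pred + eps) - np.log(target + eps))))
```

For example, predicting 2 m for a 1 m target and 20 m for a 10 m target incur the same penalty under this loss, since both are off by a factor of two.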

Training was conducted on 64 NVIDIA H200 GPUs utilizing mixed precision, gradient checkpointing, and curriculum learning strategies, scaling input views from 4 up to 24.

Performance Benchmarks and Evaluation

Dense Multi-View Reconstruction

On challenging datasets such as ETH3D, ScanNet++ v2, and TartanAirV2-WB, MapAnything delivers state-of-the-art results across point cloud accuracy, depth estimation, pose recovery, and ray direction prediction. It outperforms established baselines like VGGT and Pow3R even when relying solely on images, with further gains when incorporating calibration or pose priors.

Illustrative results include:

- Pointmap relative error reduced to 0.16 using only images, compared to 0.20 for VGGT.

- With images plus intrinsics, poses, and depth inputs, the error drops to 0.01, with inlier ratios exceeding 90%.
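For readers unfamiliar with these metrics, the sketch below shows one plausible way to compute them for pointmaps. It assumes that relative error is the per-point Euclidean error divided by the ground-truth point norm, and that the inlier ratio is the fraction of points below a relative-error threshold. The default threshold is a placeholder, and the benchmark's exact definitions may differ.

```python
import numpy as np

def pointmap_relative_error(pred, gt):
    """Mean of ||pred - gt|| / ||gt|| over all points.

    pred, gt: (N, 3) arrays of 3D points in the same frame and units.
    """
    per_point = np.linalg.norm(pred - gt, axis=-1) / np.linalg.norm(gt, axis=-1)
    return float(per_point.mean())

def inlier_ratio(pred, gt, threshold=0.03):
    """Fraction of points whose relative error is below threshold.

    The default threshold is an illustrative assumption.
    """
    per_point = np.linalg.norm(pred - gt, axis=-1) / np.linalg.norm(gt, axis=-1)
    return float((per_point < threshold).mean())
```

Under this definition, a relative error of 0.16 would mean that predicted points are off by about 16% of their distance from the origin on average.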

Two-View Reconstruction Accuracy

When benchmarked against DUSt3R, MASt3R, and Pow3R, MapAnything consistently surpasses them in scale, depth, and pose precision. With additional priors, it achieves inlier ratios above 92% on two-view tasks, significantly outperforming previous feed-forward architectures.

Single-Image Camera Calibration

Although not explicitly trained for single-image calibration, MapAnything attains an average angular error of just 1.18°, outperforming specialized methods like AnyCalib (2.01°) and MoGe-2 (1.95°).
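Since the model represents calibration as per-pixel ray directions, an angular error of this kind can be read as the angle between predicted and ground-truth rays. A minimal sketch follows, assuming the error is averaged over all pixels. The function name is illustrative and the benchmark's exact protocol is not described here.

```python
import numpy as np

def mean_angular_error_deg(pred_rays, gt_rays):
    """Mean angle in degrees between corresponding ray directions.

    pred_rays, gt_rays: (..., 3) arrays; rays need not be pre-normalized.
    """
    pred = pred_rays / np.linalg.norm(pred_rays, axis=-1, keepdims=True)
    gt = gt_rays / np.linalg.norm(gt_rays, axis=-1, keepdims=True)
    # Clip to guard against values slightly outside [-1, 1] from rounding.
    cosine = np.clip(np.sum(pred * gt, axis=-1), -1.0, 1.0)
    return float(np.degrees(np.arccos(cosine)).mean())
```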

Depth Estimation Excellence

On the Robust-MVD benchmark, MapAnything sets new records for multi-view metric depth estimation. When supplemented with auxiliary inputs, its error rates rival or exceed those of dedicated depth models such as MVSA and Metric3D v2.

Overall, MapAnything demonstrates approximately a twofold improvement over previous state-of-the-art techniques across multiple 3D vision tasks, underscoring the advantages of its unified training approach.

Major Contributions and Innovations

- Comprehensive Feed-Forward Model: Capable of addressing more than 12 distinct 3D vision problems, ranging from monocular depth estimation to SfM and stereo reconstruction.

- Factored Scene Representation: Explicitly separates rays, depth, camera pose, and metric scale, enhancing modularity and interpretability.

- Superior Benchmark Performance: Achieves state-of-the-art results with reduced redundancy and improved scalability.

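- Open-Source Availability: Provides full access to data processing pipelines, training scripts, evaluation benchmarks, and pretrained weights. The code and the six-dataset model are released under the Apache 2.0 license, while the model trained on all thirteen datasets is licensed CC BY-NC.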

Final Thoughts

MapAnything sets a new standard in 3D vision by integrating multiple reconstruction tasks (SfM, stereo, depth estimation, and camera calibration) within a single transformer-based framework built on a factored scene representation. It surpasses specialized methods across a variety of benchmarks and also adapts to diverse input modalities such as intrinsics, poses, and depth maps. With its open-source release and support for over a dozen tasks, MapAnything offers a versatile and scalable 3D reconstruction backbone suitable for a wide range of applications.