Can real-time large language models maintain safety standards? Alibaba’s Qwen team believes the answer is yes, and has released Qwen3Guard, a multilingual safety moderation framework designed to classify user prompts and AI-generated responses in real time, while the responses are still being produced.

Qwen3Guard comes in two variants: Qwen3Guard-Gen, a generative classifier that evaluates the full prompt and response context, and Qwen3Guard-Stream, a token-level classifier that moderates text as it is generated. The models come in three sizes (0.6B, 4B, and 8B parameters) and support 119 languages and dialects, which makes them suitable for global deployments. They are open source, with weights available on Hugging Face and GitHub.

Innovations in Real-Time AI Safety

- Token-Level Moderation Mechanism: The streaming variant attaches two lightweight classification heads to the final transformer layer. One head analyzes the user’s prompt, and the other labels each generated token in real time as Safe, Controversial, or Unsafe. This lets policies be enforced while the response is being generated, rather than relying on after-the-fact filtering.

- Three-Stage Risk Classification: Moving beyond simple safe/unsafe dichotomies, Qwen3Guard introduces a Controversial category that allows for flexible moderation thresholds. This tier supports nuanced handling of borderline content, enabling escalation or review rather than outright rejection, which is particularly valuable in complex regulatory environments.

- Structured Safety Outputs for Generative Models: The generative classifier emits standardized header fields (Safety: ..., Categories: ..., and Refusal: ...) that simplify integration with downstream pipelines and reinforcement learning reward systems. The categories cover a broad spectrum, including Violence, Illegal Activities, Sexual Content, Personally Identifiable Information (PII), Suicide & Self-Harm, Unethical Behavior, Politically Sensitive Issues, Copyright Infringement, and Jailbreak Attempts.
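To show how these structured fields might be consumed downstream, here is a minimal Python sketch that parses a Qwen3Guard-Gen verdict into a typed record. It assumes each field appears on its own line as `Key: value` and that multiple categories are comma-separated; the exact output format should be checked against the model card, and `GuardVerdict` and `parse_guard_output` are hypothetical names, not part of any Qwen library.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GuardVerdict:
    safety: str                                   # "Safe", "Controversial", or "Unsafe"
    categories: list[str] = field(default_factory=list)
    refusal: Optional[str] = None                 # assumed present only for response moderation


def parse_guard_output(text: str) -> GuardVerdict:
    """Parse assumed 'Key: value' header lines from a Qwen3Guard-Gen completion."""
    fields: dict[str, str] = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:  # ignore lines that are not 'Key: value' pairs
            fields[key.strip().lower()] = value.strip()
    if "safety" not in fields:
        raise ValueError("no Safety field found in guard output")
    # Split a comma-separated category list; treat "None" as no categories.
    categories = [c.strip() for c in fields.get("categories", "").split(",")
                  if c.strip() and c.strip() != "None"]
    return GuardVerdict(fields["safety"], categories, fields.get("refusal"))
```

For an assumed verdict such as `"Safety: Unsafe\nCategories: Violence\nRefusal: No"`, the parser returns `GuardVerdict(safety='Unsafe', categories=['Violence'], refusal='No')`, which a pipeline can then route on without string matching scattered through the code.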

Performance Benchmarks and Reinforcement Learning Integration

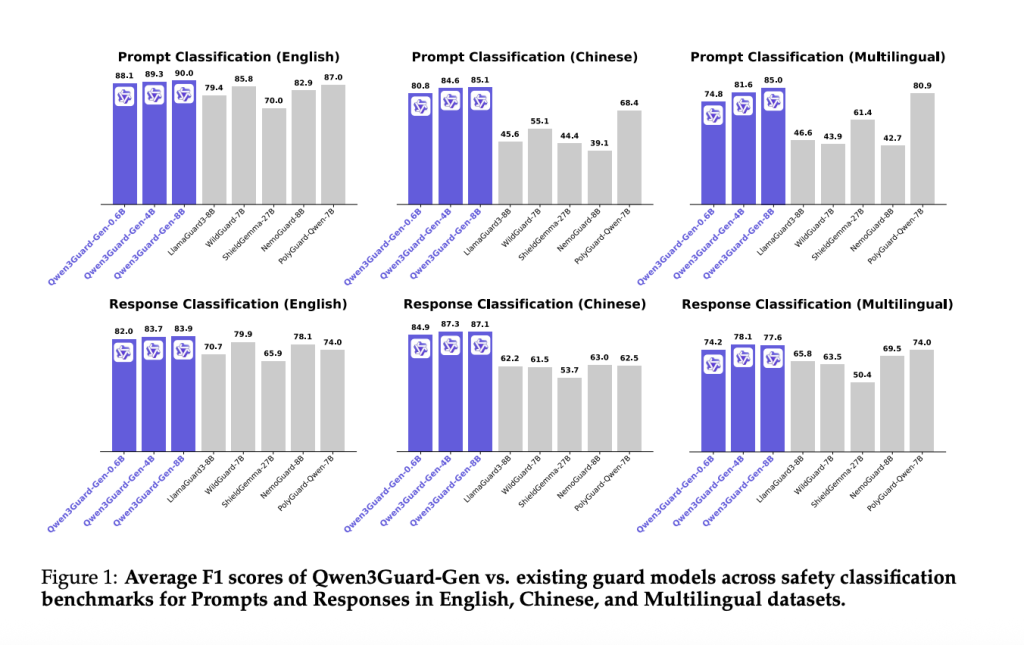

Alibaba reports that Qwen3Guard achieves leading F1 scores on English, Chinese, and multilingual safety benchmarks for both prompt and response moderation. In these comparisons, Qwen3Guard-Gen consistently outperforms earlier open-source safety models, indicating that its performance holds up across diverse languages.

When training assistant models, the team also experimented with safety-focused reinforcement learning (RL) that uses Qwen3Guard-Gen as a reward signal. A purely safety-driven reward drove refusal rates up and slightly reduced performance on challenging benchmarks. A hybrid reward, which balances safety penalties against quality incentives, instead raised the safety score from approximately 60% to over 97% as measured by WildGuard, without degrading reasoning performance. This balance avoids the common failure mode in which a model learns to refuse by default.
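The article does not give the exact reward formula, so the Python sketch below only illustrates the idea under stated assumptions: each sample carries a guard verdict on the prompt and the response, a refusal flag, and a quality score in [0, 1] from a separate reward model. It contrasts a guard-only reward, under which refusing is always a safe bet, with a hypothetical hybrid reward that adds a quality incentive and penalizes refusals of benign prompts. The `Sample` fields and penalty weights are illustrative, not values from the Qwen team.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Sample:
    prompt_unsafe: bool   # guard verdict on the user prompt (assumed available)
    response_label: str   # guard verdict on the response: "Safe", "Controversial", "Unsafe"
    refused: bool         # whether the response is a refusal (e.g. from the Refusal field)
    quality: float        # helpfulness score in [0, 1] from a separate reward model (assumed)


def guard_only_reward(s: Sample) -> float:
    """Rewards any response that is not unsafe, so refusing always scores the maximum."""
    return 0.0 if s.response_label == "Unsafe" else 1.0


def hybrid_reward(s: Sample, unsafe_penalty: float = 1.0,
                  over_refusal_penalty: float = 0.5) -> float:
    """Hypothetical hybrid: quality incentive minus safety and over-refusal penalties."""
    reward = s.quality
    if s.response_label == "Unsafe":
        reward -= unsafe_penalty
    if s.refused and not s.prompt_unsafe:
        reward -= over_refusal_penalty  # refusing a benign prompt is penalized
    return reward


if __name__ == "__main__":
    benign_refusal = Sample(prompt_unsafe=False, response_label="Safe", refused=True, quality=0.2)
    helpful_answer = Sample(prompt_unsafe=False, response_label="Safe", refused=False, quality=0.8)
    for name, s in [("benign refusal", benign_refusal), ("helpful answer", helpful_answer)]:
        print(name, guard_only_reward(s), hybrid_reward(s))
```

With these toy weights, the guard-only reward scores a benign refusal and a helpful answer identically, while the hybrid reward gives the refusal a negative score. That gap is the kind of signal that discourages the policy from refusing by default.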

Practical Applications and Deployment Scenarios

Unlike many open-source safety models that only evaluate completed outputs, Qwen3Guard’s dual-head architecture and token-level scoring are built for real-time, streaming AI systems. This enables early intervention, such as blocking, redacting, or rerouting content, with far less latency than waiting for a complete response and then regenerating it.
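The streaming control loop can be sketched in Python as follows. This is a hypothetical harness, not the Qwen3Guard-Stream API: `classify_next` stands in for the per-token head and is assumed to return "Safe", "Controversial", or "Unsafe" for each new token. A real model would judge each token in the context of everything generated so far, whereas the `toy_classifier` stub here only checks a keyword blocklist. The loop stops before a flagged token is forwarded and reports the most severe label it saw.

```python
from __future__ import annotations

from typing import Callable, Iterable

# Severity ordering for the three risk tiers.
SEVERITY = {"Safe": 0, "Controversial": 1, "Unsafe": 2}


def moderate_stream(
    tokens: Iterable[str],
    classify_next: Callable[[str], str],
    block_at: str = "Unsafe",
) -> tuple[str, str, bool]:
    """Forward tokens until one is labeled at or above `block_at`.

    Returns (emitted_text, worst_label_seen, halted).
    """
    emitted: list[str] = []
    worst = "Safe"
    for tok in tokens:
        label = classify_next(tok)  # hypothetical per-token verdict
        if SEVERITY[label] > SEVERITY[worst]:
            worst = label
        if SEVERITY[label] >= SEVERITY[block_at]:
            return "".join(emitted), worst, True  # stop before the flagged token is sent
        emitted.append(tok)
    return "".join(emitted), worst, False


def toy_classifier(blocklist: set[str]) -> Callable[[str], str]:
    """Stand-in for the stream head: flags tokens found in a keyword blocklist."""
    def classify(tok: str) -> str:
        return "Unsafe" if tok.strip().lower() in blocklist else "Safe"
    return classify


if __name__ == "__main__":
    stream = ["Here ", "is ", "how ", "to ", "build ", "a ", "bomb"]
    text, label, halted = moderate_stream(stream, toy_classifier({"bomb"}))
    print(repr(text), label, halted)
```

Passing `block_at="Controversial"` yields a stricter policy. Because tokens already delivered to the user cannot be recalled, the value of this design lies in stopping at the first flagged token rather than after the full response.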

The introduction of the Controversial risk tier also aligns well with enterprise compliance frameworks, allowing organizations to customize moderation policies. For instance, content flagged as controversial can be treated as unsafe in highly regulated industries, while being permitted with human review in consumer-facing chatbots.
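The policy choice described above reduces to a small lookup table. In this Python sketch the policy names and actions are hypothetical; the strict and review-based configurations differ only in how they treat the Controversial tier.

```python
from __future__ import annotations

from enum import Enum


class Risk(Enum):
    SAFE = "Safe"
    CONTROVERSIAL = "Controversial"
    UNSAFE = "Unsafe"


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"   # route to a human moderator
    BLOCK = "block"


# Hypothetical policies: they differ only in how CONTROVERSIAL is handled.
POLICIES: dict[str, dict[Risk, Action]] = {
    "strict": {Risk.SAFE: Action.ALLOW, Risk.CONTROVERSIAL: Action.BLOCK, Risk.UNSAFE: Action.BLOCK},
    "review": {Risk.SAFE: Action.ALLOW, Risk.CONTROVERSIAL: Action.REVIEW, Risk.UNSAFE: Action.BLOCK},
}


def decide(label: str, policy: str) -> Action:
    """Map a guard label ("Safe", "Controversial", "Unsafe") to an action under a policy."""
    return POLICIES[policy][Risk(label)]
```

For example, `decide("Controversial", "strict")` returns `Action.BLOCK`, while `decide("Controversial", "review")` returns `Action.REVIEW`. Keeping the mapping in data rather than in branching code makes it easy to add per-market or per-product policies.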

Conclusion: A Comprehensive Safety Solution for Global AI

Qwen3Guard offers versatile, practical safety infrastructure: open-source weights (0.6B, 4B, 8B), two operating modes (full-context generative and token-level streaming), three-tier risk assessment, and multilingual support covering 119 languages. This makes it a strong foundation for production teams that want to replace traditional post-hoc filters with proactive, real-time moderation. Its use as a reward signal in reinforcement learning also shows that it can raise safety without pushing models into excessive refusals.