What level of performance can a sparse 8.3 billion-parameter Mixture-of-Experts (MoE) model with an active path of approximately 1.5 billion parameters achieve on mobile devices while staying within latency and memory constraints? Liquid AI has introduced a compact MoE model engineered for on-device deployment and optimized to operate within stringent limits on memory, latency, and power consumption. Unlike typical MoE architectures designed primarily for cloud-based batch processing, the LFM2-8B-A1B model is tailored for smartphones, laptops, and embedded systems. Of its 8.3 billion total parameters, sparse expert routing activates only about 1.5 billion per token, which keeps the computational footprint light while increasing representational capacity. The model is distributed under the LFM Open License v1.0 (lfm1.0).

Innovative Model Design

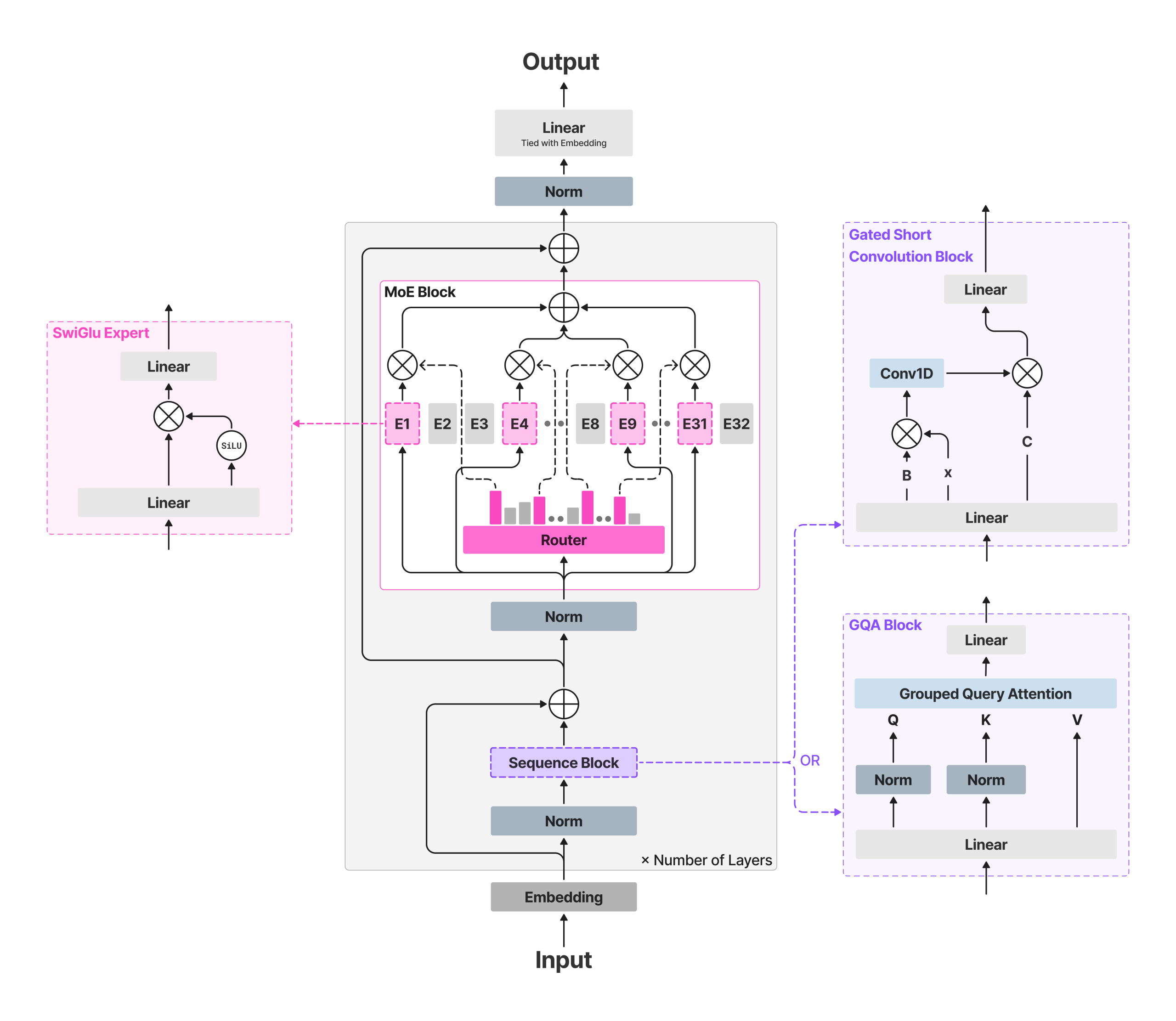

The LFM2-8B-A1B architecture builds upon the LFM2 ‘fast backbone’ framework, integrating sparse MoE feed-forward layers to expand capacity without significantly increasing active computation. The backbone consists of 18 gated short-convolution modules alongside 6 grouped-query attention (GQA) layers. The first two layers remain dense to ensure stability; all remaining layers incorporate an MoE block. Each MoE block contains 32 experts, and a router selects the top 4 experts per token using a normalized sigmoid gating function combined with an adaptive routing bias that distributes the workload evenly and stabilizes training dynamics. The model supports a context window of 32,768 tokens and a vocabulary size of 65,536, and was pre-trained on roughly 12 trillion tokens.
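The routing step described above can be illustrated with a minimal sketch. It is not Liquid AI's implementation: the function name `route_token`, the made-up logits, and the choice to let the bias affect only expert selection (not the mixing weights) are assumptions made for illustration.

```python
import math

def route_token(logits, bias, top_k=4):
    """Pick top_k experts for one token; return (expert_index, weight) pairs."""
    # Sigmoid gate: each expert is scored independently (unlike softmax).
    scores = [1.0 / (1.0 + math.exp(-z)) for z in logits]
    # Assumption: the routing bias only shifts which experts get selected.
    biased = [s + b for s, b in zip(scores, bias)]
    chosen = sorted(range(len(scores)), key=lambda i: biased[i], reverse=True)[:top_k]
    # Normalize the selected gate values so the mixing weights sum to 1.
    total = sum(scores[i] for i in chosen)
    return [(i, scores[i] / total) for i in chosen]

NUM_EXPERTS = 32                                     # experts per MoE block
logits = [math.sin(i) for i in range(NUM_EXPERTS)]   # made-up router outputs
bias = [0.0] * NUM_EXPERTS                           # no load-balancing correction yet
picks = route_token(logits, bias)

for expert, weight in picks:
    print(f"expert {expert:2d} weight {weight:.3f}")
```

Raising the bias of an under-used expert makes it more likely to be selected without changing how its output is weighted, which is one common way such a bias is used to balance load.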

This design keeps per-token floating-point operations (FLOPs) and cache requirements bounded by the active computational path (the attention mechanisms and four expert MLPs), while the overall parameter capacity lets the model specialize across diverse domains such as multilingual understanding, mathematical reasoning, and coding. These are areas where smaller dense models often underperform.
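A quick back-of-the-envelope calculation makes the sparsity concrete. The figures come from the article; treating each convolution or attention module as one layer (24 in total) is an assumption of this sketch.

```python
TOTAL_PARAMS = 8.3e9     # total parameters, from the article
ACTIVE_PARAMS = 1.5e9    # approximate active parameters per token
NUM_EXPERTS = 32         # experts per MoE block
TOP_K = 4                # experts selected per token
CONV_BLOCKS = 18         # gated short-convolution modules
GQA_BLOCKS = 6           # grouped-query attention layers
DENSE_LAYERS = 2         # first two layers stay dense

# Assumption: one layer per backbone module.
num_layers = CONV_BLOCKS + GQA_BLOCKS
moe_layers = num_layers - DENSE_LAYERS

expert_fraction = TOP_K / NUM_EXPERTS           # share of experts used per token
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS  # share of all weights used per token

print(f"layers: {num_layers}, of which MoE: {moe_layers}")
print(f"experts used per token: {expert_fraction:.1%}")
print(f"parameters active per token: {active_fraction:.1%}")
```

The active share of parameters is higher than the share of experts used because the attention, convolution, embedding, and dense layers run for every token.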

Benchmarking and Efficiency Insights

According to Liquid AI, the LFM2-8B-A1B model demonstrates substantially faster inference speeds compared to the Qwen3-1.7B model during CPU-based evaluations, leveraging a proprietary XNNPACK-optimized stack and a custom CPU kernel for MoE operations. Performance assessments include int4 quantization with int8 dynamic activations on platforms like the AMD Ryzen AI 9 HX370 and the Samsung Galaxy S24 Ultra. The developers claim that the model’s output quality rivals that of dense models in the 3 to 4 billion parameter range, while maintaining an active compute budget close to 1.5 billion parameters. Rather than broad cross-vendor speedup claims, the comparisons focus on device-specific performance relative to models with similar active parameter counts.
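For readers unfamiliar with "int8 dynamic activations", the sketch below shows the general idea: the quantization scale is computed at run time from the values actually seen. The source does not describe Liquid AI's exact scheme, so the symmetric per-tensor approach and the function names here are illustrative assumptions.

```python
def quantize_dynamic_int8(values):
    """Symmetric per-tensor int8 quantization with a scale computed at run time."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Map int8 codes back to approximate floating-point values."""
    return [q * scale for q in quantized]

activations = [0.02, -1.3, 0.75, 2.54, -0.4]   # made-up activation values
q, scale = quantize_dynamic_int8(activations)
restored = dequantize(q, scale)

print(q, scale)
print(restored)
```

Each restored value differs from the original by at most half a quantization step, which is the trade-off that buys cheaper integer arithmetic on CPUs.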

In terms of accuracy, the model’s evaluation spans 16 diverse benchmarks, including knowledge-based tests like MMLU, MMLU-Pro, and GPQA; instruction-following assessments such as IFEval, IFBench, and Multi-IF; mathematical reasoning challenges including GSM8K, GSMPlus, MATH500, and MATH-Level-5; as well as multilingual benchmarks like MGSM and MMMLU. Results indicate competitive performance in instruction adherence and mathematical problem-solving within the small-model category, alongside enhanced knowledge retention compared to the smaller LFM2-2.6B model, consistent with the increased parameter count.

Deployment Options and Toolchain Support

The LFM2-8B-A1B model is supported by Transformers and vLLM for GPU inference, and is available in GGUF format for use with llama.cpp. The official GGUF repository offers quantized model variants ranging from Q4_0 (~4.7 GB) to F16 (~16.7 GB), suitable for local execution. Users need llama.cpp build b6709 or later, which includes lfm2moe support; older builds fail with an “unknown model architecture” error. For CPU inference, Liquid AI uses Q4_0 quantization with int8 dynamic activations on devices such as the AMD Ryzen AI 9 HX370 and Samsung Galaxy S24 Ultra, where LFM2-8B-A1B achieves higher decoding throughput than Qwen3-1.7B within the same active parameter class. Additionally, ExecuTorch is recommended for deploying the model on mobile and embedded CPU platforms.
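The quoted file sizes can be sanity-checked from the parameter count. In GGUF, Q4_0 stores each block of 32 weights as 16 bytes of 4-bit values plus a 2-byte scale, or 4.5 bits per weight, and F16 uses 16 bits per weight. The sketch below assumes every tensor uses the same type and ignores file metadata, so it is only a rough estimate.

```python
TOTAL_PARAMS = 8.3e9   # total parameters, from the article

# Bits per weight for two GGUF tensor types.
BITS_PER_WEIGHT = {
    "Q4_0": (16 + 2) * 8 / 32,  # 18 bytes per block of 32 weights = 4.5 bits
    "F16": 16.0,
}

def estimated_size_gb(num_params, bits_per_weight):
    """Approximate weight storage in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9

q4_size = estimated_size_gb(TOTAL_PARAMS, BITS_PER_WEIGHT["Q4_0"])
f16_size = estimated_size_gb(TOTAL_PARAMS, BITS_PER_WEIGHT["F16"])

print(f"Q4_0 estimate: {q4_size:.2f} GB")
print(f"F16 estimate:  {f16_size:.2f} GB")
```

Both estimates land close to the ~4.7 GB and ~16.7 GB figures listed for the official GGUF files; note that the whole file must be held in memory even though only about 1.5 billion parameters are active per token.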

Summary of Core Features

- Model Architecture & Routing: Combines an LFM2 fast backbone (18 gated short-conv blocks plus 6 GQA blocks) with sparse MoE feed-forward networks in all but the first two layers, utilizing 32 experts and top-4 routing via normalized sigmoid gating and adaptive biasing; totaling 8.3 billion parameters with approximately 1.5 billion active per token.

- Designed for On-Device Use: Optimized for deployment on smartphones, laptops, and embedded CPUs/GPUs, with quantized versions that comfortably fit on high-end consumer hardware, enabling private, low-latency applications.

- Performance Profile: Demonstrates significantly faster CPU inference than Qwen3-1.7B and targets quality comparable to dense models in the 3-4 billion parameter range while maintaining a lean active compute path.

Final Thoughts

The LFM2-8B-A1B model exemplifies how sparse MoE architectures can be effectively scaled down for practical on-device applications. By integrating a convolution-attention backbone with per-layer expert MLPs (excluding the first two layers), it maintains a manageable token-level compute budget near 1.5 billion parameters while elevating performance to rival larger dense models. With broad support across standard and GGUF weight formats, compatibility with llama.cpp, ExecuTorch, and vLLM, and a permissive licensing model, LFM2-8B-A1B presents a compelling solution for developing responsive, private AI assistants and embedded copilots on consumer and edge devices.