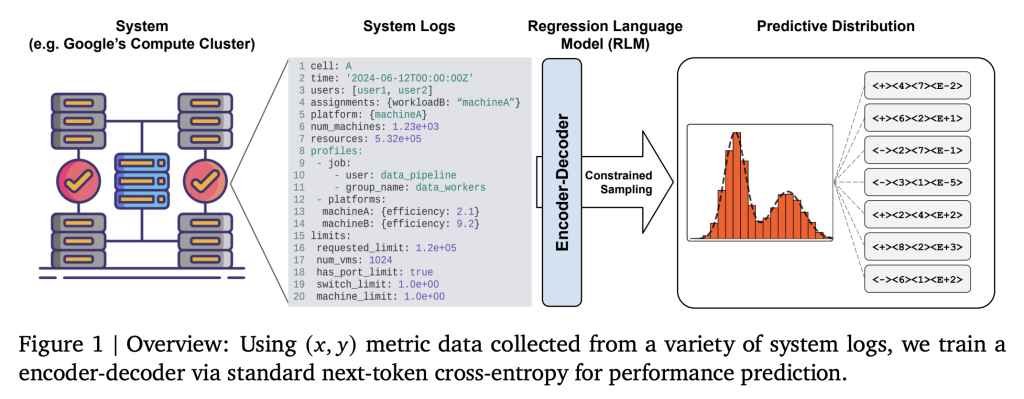

Google has introduced an innovative Regression Language Model (RLM) framework that empowers Large Language Models (LLMs) to forecast the performance of industrial systems directly from unprocessed textual data. This method bypasses the traditional dependence on intricate feature engineering and rigid tabular data structures.

Overcoming Challenges in Industrial System Performance Forecasting

Forecasting the behavior of large industrial infrastructures, such as Google’s Borg compute clusters, has historically depended on labor-intensive, domain-specific feature extraction and tabular data formats. These conventional approaches struggle to scale and adapt because system logs, configuration files, heterogeneous hardware components, and deeply nested job information resist straightforward flattening or normalization. Consequently, optimization and simulation processes often become fragile, expensive, and slow, particularly when novel workloads or hardware configurations emerge.

Reimagining Regression: From Tabular Data to Text Generation

The core innovation of Google’s RLM lies in reframing regression as a text-to-text generation task. Instead of relying on numerical feature vectors, all relevant system state information (configurations, logs, workload characteristics, and hardware descriptions) is serialized into structured textual formats such as YAML or JSON. This serialized text serves as the input prompt for the model, which then generates the target numerical metric, such as Millions of Instructions Per Second per Google Compute Unit (MIPS per GCU), as a textual output.

- Elimination of Feature Engineering: By using raw text inputs, the model removes the need for predefined feature sets, normalization procedures, and fixed encoding schemes.

- Flexible and Comprehensive Input Representation: Any system state, regardless of complexity or nesting, can be naturally encoded as text, supporting dynamic and heterogeneous data.
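
The serialization step described above can be sketched in a few lines of Python. This is a minimal illustration, not Google’s actual pipeline: the field names in `state`, the helper `build_example`, and the scientific-notation target format are all assumptions made for the example.

```python
import json


def build_example(system_state: dict, target: float) -> tuple[str, str]:
    """Turn a nested system state and its metric into a (prompt, target) text pair.

    Hypothetical helper: the real RLM input schema is not specified in this article.
    """
    # Nested structures are serialized as-is; no flattening or feature selection.
    prompt = json.dumps(system_state, sort_keys=True, indent=2)
    # The regression target is also plain text (here: scientific notation).
    target_text = f"{target:.4e}"
    return prompt, target_text


# Illustrative state with made-up fields (assumption, not a real Borg record).
state = {
    "cell": "hypothetical-cell-a",
    "hardware": {"platform": "hypothetical-cpu-x", "cores": 64},
    "jobs": [
        {"name": "web-frontend", "priority": 200, "cpu_request": 4.0},
        {"name": "batch-index", "priority": 100, "cpu_request": 16.0},
    ],
}

prompt, target_text = build_example(state, 1234.5)
print(prompt)
print(target_text)
```

Because the prompt is ordinary JSON, adding a new field or a deeper level of nesting changes the text but not the model interface, which is the flexibility the bullets above refer to.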

Model Architecture and Training Methodology

The RLM employs a compact encoder-decoder LLM with approximately 60 million parameters, trained from scratch using next-token prediction loss on paired textual representations of system states and their corresponding performance metrics. Unlike conventional language models, this model is not pretrained on general language corpora but is optimized directly for the regression task.

- Specialized Numeric Tokenization: Numerical targets are encoded efficiently using techniques such as mantissa-sign-exponent tokenization, enabling precise floating-point representation within the model’s vocabulary.

- Rapid Few-Shot Fine-Tuning: Pretrained RLMs can be quickly adapted to new environments or workloads with as few as 500 training examples, reducing adaptation time from weeks to mere hours.

- Handling Extensive Input Lengths: The model supports processing of very long input sequences, often spanning thousands of tokens, ensuring that complex system states are fully captured.
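
To make the numeric tokenization idea concrete, here is a minimal sketch of one possible sign, mantissa, and exponent encoding. The token spellings (`<+>`, `<7>`, `<E-6>`), the fixed four-digit mantissa, and the function names are assumptions for illustration; the article does not specify the exact vocabulary the RLM uses.

```python
def encode_number(x: float, mantissa_digits: int = 4) -> list[str]:
    """Encode a float as [sign, d1..dn, exponent] tokens (hypothetical scheme)."""
    if x == 0:
        return ["<+>"] + ["<0>"] * mantissa_digits + ["<E0>"]
    sign = "<+>" if x > 0 else "<->"
    # Scientific formatting rounds to the requested number of significant digits.
    mantissa_str, exp_str = f"{abs(x):.{mantissa_digits - 1}e}".split("e")
    digits = mantissa_str.replace(".", "")
    # Shift the exponent so the mantissa can be read as an integer.
    exponent = int(exp_str) - (mantissa_digits - 1)
    return [sign] + [f"<{d}>" for d in digits] + [f"<E{exponent}>"]


def decode_number(tokens: list[str]) -> float:
    """Invert encode_number: rebuild the float from its tokens."""
    sign = "-" if tokens[0] == "<->" else ""
    digits = "".join(t[1:-1] for t in tokens[1:-1])
    exponent = tokens[-1][2:-1]
    return float(f"{sign}{digits}e{exponent}")


tokens = encode_number(0.00731)
print(tokens)
print(decode_number(tokens))
```

With a scheme like this, every target maps to a short, fixed-length token sequence, so the decoder predicts a number with the same next-token mechanism it would use for any other text.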

Empirical Performance on Google’s Borg Cluster

When evaluated on the Borg cluster, RLMs demonstrated exceptional predictive accuracy, achieving Spearman rank correlations as high as 0.99 (with an average around 0.9) between predicted and actual MIPS per GCU. Moreover, the mean squared error was reduced by a factor of 100 compared to traditional tabular regression baselines. The model’s probabilistic output generation enables intrinsic uncertainty quantification, facilitating advanced applications such as Bayesian optimization and probabilistic system simulations.

- Comprehensive Uncertainty Modeling: RLMs effectively capture both aleatoric uncertainty (inherent randomness) and epistemic uncertainty (due to limited data or observability), surpassing most conventional black-box regression methods.

- Potential for Universal Digital Twins: The model’s ability to represent complex system state distributions positions it as a promising foundation for creating universal digital twins, accelerating infrastructure optimization and enabling real-time feedback loops.
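
The two evaluation ideas in this section, rank correlation and sampling-based uncertainty, can be illustrated with standard-library Python. This is a sketch under stated assumptions: the numbers are made up, `summarize_samples` assumes that several decoded outputs have already been sampled from the model for one input, and neither function comes from the RLM codebase.

```python
import math
import statistics


def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = statistics.mean(rx), statistics.mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)


def summarize_samples(samples: list[float]) -> dict[str, float]:
    """Summarize repeated decodes for one input as a median and a spread."""
    q1, median, q3 = statistics.quantiles(samples, n=4)
    return {"median": median, "iqr": q3 - q1}


# Made-up values for illustration only.
actual = [10.0, 20.0, 30.0, 40.0]
predicted = [11.0, 19.0, 33.0, 38.0]
print(spearman(actual, predicted))
print(summarize_samples([1.0, 2.0, 3.0, 4.0, 5.0]))
```

A wide interquartile range across sampled outputs signals that the model is unsure about that input, which is the kind of signal a Bayesian optimization loop can use when deciding what to evaluate next.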

Contrasting RLMs with Conventional Regression Techniques

| Method | Input Format | Feature Engineering | Adaptability | Predictive Accuracy | Uncertainty Handling |
|---|---|---|---|---|---|
| Traditional Tabular Regression | Flat numerical tensors | Manual and domain-specific | Limited | Constrained by feature quality | Minimal |
| Regression Language Model (Text-to-Text) | Structured, nested textual data | Not required | High | Near-perfect rank correlation | Comprehensive |

Broader Implications and Use Cases

- Cloud Infrastructure and Compute Clusters: Enables direct, scalable performance prediction and optimization for complex, evolving data center environments.

- Industrial Manufacturing and IoT Systems: Facilitates universal simulation models capable of predicting outcomes across diverse and interconnected industrial processes.

- Scientific Research and Experimentation: Supports end-to-end modeling where inputs are multifaceted, textually described, and numerically varied, enhancing experimental design and analysis.

By redefining regression as a language modeling problem, Google’s RLM framework dismantles traditional barriers in system simulation, offering rapid adaptability to new scenarios and robust, uncertainty-aware predictions. This advancement marks a significant step forward for industrial AI, promising more efficient and resilient infrastructure management in the years ahead.