Automating Machine Learning Pipelines with TPOT in Google Colab

This guide shows how to use TPOT, an automated machine learning (AutoML) tool, to build and tune machine learning pipelines. Running it in Google Colab keeps the setup lightweight and easy to reproduce. We cover data loading, defining a custom evaluation metric, customizing the search space with models such as XGBoost, and setting up stratified cross-validation. Along the way, we look at how TPOT's evolutionary search explores pipeline configurations, how to inspect the resulting Pareto front, and how to save progress with checkpoints.

Setting Up the Environment and Dependencies

!pip install -q tpot==0.12.2 xgboost==2.0.3 scikit-learn==1.4.2 graphviz==0.20.3

import os
import time
import random

import numpy as np
import pandas as pd
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split, StratifiedKFold
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import make_scorer, f1_score, classification_report
from sklearn.pipeline import Pipeline
from tpot import TPOTClassifier
from xgboost import XGBClassifier

SEED = 7
random.seed(SEED)
np.random.seed(SEED)
os.environ["PYTHONHASHSEED"] = str(SEED)

We start by installing pinned versions of the required libraries and importing the modules used for data handling, model training, and pipeline optimization. Fixing the random seed makes runs more repeatable, although a search bounded by wall-clock time can still differ from run to run.
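As a minimal sketch of what seeding provides, the snippet below draws from an isolated standard-library generator twice with the same seed and compares the results. The helper name seeded_draws is hypothetical and not part of the tutorial's code; it illustrates the principle only and says nothing about variation caused by time limits or parallel execution.

```python
import random

SEED = 7  # same value as the tutorial's SEED


def seeded_draws(seed, n=5):
    """Return n pseudo-random floats from a generator seeded with `seed`."""
    rng = random.Random(seed)  # isolated generator; global RNG state is untouched
    return [rng.random() for _ in range(n)]


first = seeded_draws(SEED)
second = seeded_draws(SEED)

# Same seed, same sequence; a different seed gives a different sequence.
print(first == second)
print(first == seeded_draws(SEED + 1))
```

Using a dedicated generator object instead of the global one keeps the demonstration independent of any other code that consumes random numbers.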

Data Preparation and Custom Scoring Function

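# Load the data as a DataFrame/Series pair
X, y = load_breast_cancer(return_X_y=True, as_frame=True)

# Stratified 70/30 split keeps class proportions in both subsets
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=SEED
)

# Fit the scaler on the training data only, then apply it to both subsets
scaler = StandardScaler().fit(X_train)
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)

def f1_cost_sensitive(y_true, y_pred):
    # Binary F1 with class 1 treated as the positive class
    return f1_score(y_true, y_pred, average='binary', pos_label=1)

custom_f1_scorer = make_scorer(f1_cost_sensitive, greater_is_better=True)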

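We load the breast cancer dataset and split it into training and test sets while preserving class proportions. The features are standardized using statistics from the training set only, which helps scale-sensitive models such as logistic regression. We then wrap a binary F1 score in a custom scorer. Note that in scikit-learn's copy of this dataset, label 1 denotes benign and label 0 denotes malignant, so pos_label=1 scores the benign class; use pos_label=0 if the goal is to prioritize detecting malignant cases.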
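To make the effect of the positive-class choice concrete, here is a small pure-Python sketch of binary F1 computed for either label. The function binary_f1 and the toy labels are illustrative assumptions rather than the tutorial's data; the formula is the standard F1 = 2TP / (2TP + FP + FN).

```python
def binary_f1(y_true, y_pred, pos_label=1):
    """Binary F1 for the class given by pos_label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == pos_label and p == pos_label)
    fp = sum(1 for t, p in pairs if t != pos_label and p == pos_label)
    fn = sum(1 for t, p in pairs if t == pos_label and p != pos_label)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Toy labels (made up for illustration): 1 = benign, 0 = malignant
y_true = [1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1]

print(binary_f1(y_true, y_pred, pos_label=1))  # 0.75
print(binary_f1(y_true, y_pred, pos_label=0))  # 0.5
```

The same predictions score 0.75 when class 1 is treated as positive and 0.5 when class 0 is, which is why the pos_label setting should match the class whose detection matters most.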

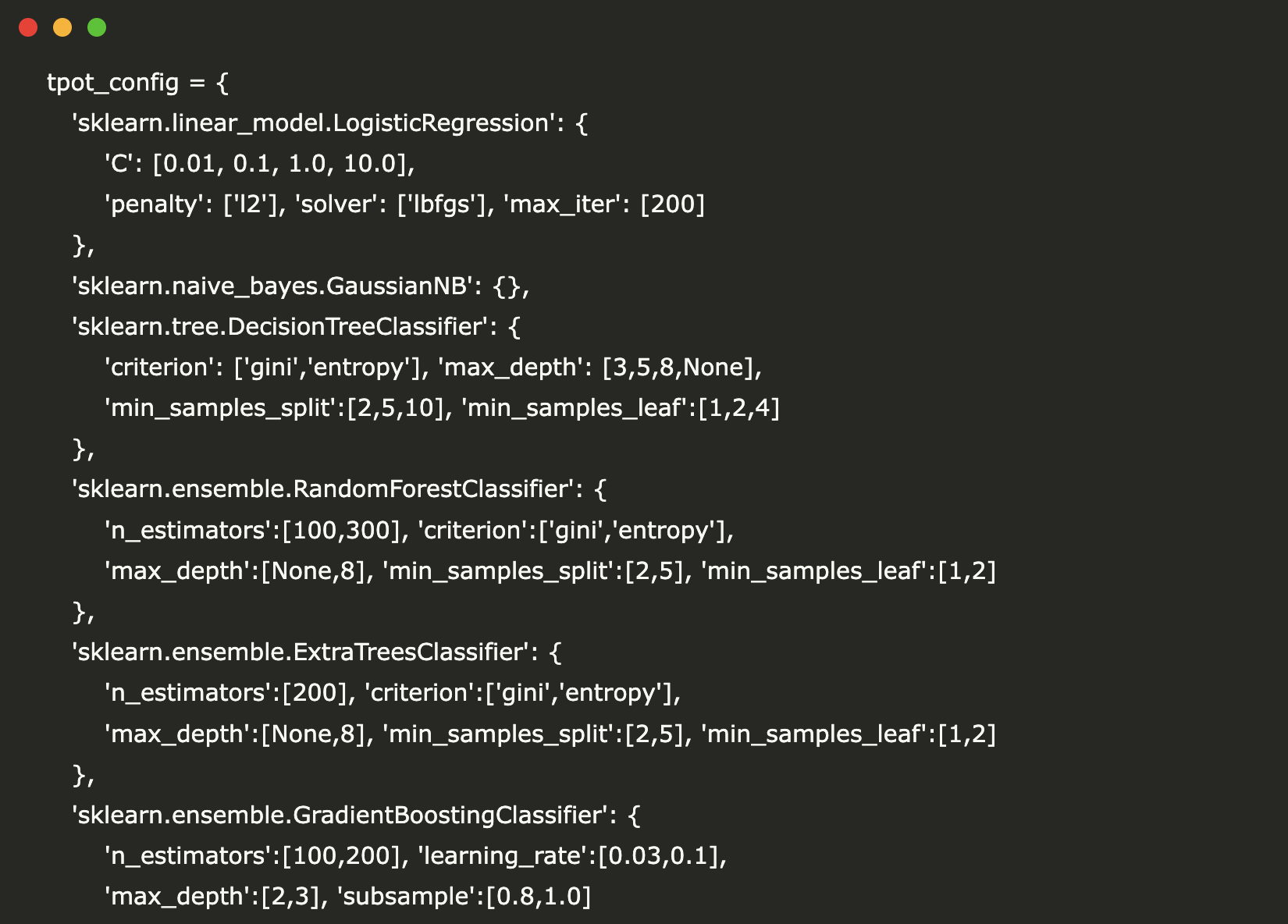

Configuring TPOT with Diverse Models and Hyperparameters

tpot_config = {
    'sklearn.linear_model.LogisticRegression': {
        'C': [0.01, 0.1, 1.0, 10.0],
        'penalty': ['l2'],
        'solver': ['lbfgs'],
        'max_iter': [200]
    },
    'sklearn.naive_bayes.GaussianNB': {},
    'sklearn.tree.DecisionTreeClassifier': {
        'criterion': ['gini', 'entropy'],
        'max_depth': [3, 5, 8, None],
        'min_samples_split': [2, 5, 10],
        'min_samples_leaf': [1, 2, 4]
    },
    'sklearn.ensemble.RandomForestClassifier': {
        'n_estimators': [100, 300],
        'criterion': ['gini', 'entropy'],
        'max_depth': [None, 8],
        'min_samples_split': [2, 5],
        'min_samples_leaf': [1, 2]
    },
    'sklearn.ensemble.ExtraTreesClassifier': {
        'n_estimators': [200],
        'criterion': ['gini', 'entropy'],
        'max_depth': [None, 8],
        'min_samples_split': [2, 5],
        'min_samples_leaf': [1, 2]
    },
    'sklearn.ensemble.GradientBoostingClassifier': {
        'n_estimators': [100, 200],
        'learning_rate': [0.03, 0.1],
        'max_depth': [2, 3],
        'subsample': [0.8, 1.0]
    },
    'xgboost.XGBClassifier': {
        'n_estimators': [200, 400],
        'max_depth': [3, 5],
        'learning_rate': [0.05, 0.1],
        'subsample': [0.8, 1.0],
        'colsample_bytree': [0.8, 1.0],
        'reg_lambda': [1.0, 2.0],
        'min_child_weight': [1, 3],
        'n_jobs': [0],
        'tree_method': ['hist'],
        'eval_metric': ['logloss'],
        'gamma': [0, 1]
    }
}

cv_strategy = StratifiedKFold(n_splits=5, shuffle=True, random_state=SEED)

We define a custom TPOT configuration dictionary covering several algorithm families (logistic regression, naive Bayes, decision trees, tree ensembles, and XGBoost), each with a small, hand-picked set of hyperparameter values. This gives TPOT a varied but bounded search space. The stratified, shuffled 5-fold cross-validation keeps class proportions roughly equal in every fold, so each candidate pipeline is scored on comparable splits.
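To get a feel for how large such a search space is, the sketch below counts the hyperparameter combinations per estimator for a subset of the configuration above. The helper grid_sizes is a hypothetical name, not a TPOT function, and the counts cover single estimators only; TPOT may also combine operators into multi-step pipelines, so the real space is larger.

```python
from math import prod

# Subset of the tutorial's configuration, copied for illustration
toy_config = {
    "sklearn.naive_bayes.GaussianNB": {},
    "sklearn.linear_model.LogisticRegression": {
        "C": [0.01, 0.1, 1.0, 10.0],
        "penalty": ["l2"],
        "solver": ["lbfgs"],
        "max_iter": [200],
    },
    "sklearn.tree.DecisionTreeClassifier": {
        "criterion": ["gini", "entropy"],
        "max_depth": [3, 5, 8, None],
        "min_samples_split": [2, 5, 10],
        "min_samples_leaf": [1, 2, 4],
    },
}


def grid_sizes(config):
    """Map each estimator to its number of hyperparameter combinations."""
    # prod() of an empty sequence is 1: an estimator with no tunable values
    # contributes exactly one configuration.
    return {
        name: prod(len(values) for values in params.values())
        for name, params in config.items()
    }


sizes = grid_sizes(toy_config)
for name, count in sizes.items():
    print(f"{name}: {count}")
print("total:", sum(sizes.values()))  # 1 + 4 + 72 = 77
```

Counts like these help decide whether a time budget is realistic: every extra value in a list multiplies that estimator's grid.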

Executing the Evolutionary Search and Analyzing Results

start_time = time.time()

tpot = TPOTClassifier(
    generations=5,
    population_size=40,
    offspring_size=40,
    scoring=custom_f1_scorer,
    cv=cv_strategy,
    subsample=0.8,
    n_jobs=-1,
    config_dict=tpot_config,
    verbosity=2,
    random_state=SEED,
    max_time_mins=10,
    early_stop=3,
    periodic_checkpoint_folder="tpot_checkpoints",
    warm_start=False
)

tpot.fit(X_train_scaled, y_train)
print(f"⏱️ Initial TPOT search completed in {time.time() - start_time:.1f} seconds")

def extract_pareto_front(tpot_obj, top_k=5):
    records = []
    # pareto_front_fitted_pipelines_ maps each pipeline string to its fitted
    # scikit-learn pipeline; CV scores are kept in evaluated_individuals_.
    for pipeline_id, fitted in tpot_obj.pareto_front_fitted_pipelines_.items():
        stats = tpot_obj.evaluated_individuals_.get(pipeline_id, {})
        records.append({
            "pipeline_id": pipeline_id,
            "cv_score": stats.get("internal_cv_score", float("nan")),
            "pipeline_length": len(str(fitted))
        })
    df = pd.DataFrame(records).sort_values(by="cv_score", ascending=False).head(top_k)
    return df.reset_index(drop=True)

pareto_pipelines = extract_pareto_front(tpot, top_k=5)
print("Top Pareto-optimal pipelines based on CV scores:\n", pareto_pipelines)

def evaluate_pipeline(pipeline, X_test, y_test, label):
    # Report test-set F1 and a per-class breakdown for a fitted pipeline
    predictions = pipeline.predict(X_test)
    f1 = f1_score(y_test, predictions)
    print(f"\n[{label}] Test F1 Score: {f1:.4f}")
    print(classification_report(y_test, predictions, digits=3))

print("\nEvaluating leading pipelines on the test dataset:")

# Rank Pareto-front pipelines by CV score (looked up in evaluated_individuals_)
top_pareto = sorted(
    tpot.pareto_front_fitted_pipelines_.items(),
    key=lambda item: tpot.evaluated_individuals_[item[0]]['internal_cv_score'],
    reverse=True
)[:3]

for idx, (pipeline_id, fitted) in enumerate(top_pareto, 1):
    evaluate_pipeline(fitted, X_test_scaled, y_test, label=f"Pareto Pipeline #{idx}")

We launch TPOT's genetic programming search with limits on generations, population size, and runtime (10 minutes, with early stopping after 3 generations without improvement) to balance thoroughness against cost. We then tabulate the Pareto front, the set of pipelines offering the best trade-offs between complexity and cross-validated score, and evaluate the three with the highest CV scores on the held-out test set, printing a full classification report for each.
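The idea of a Pareto front can be sketched independently of TPOT. In the example below, each candidate has a complexity (lower is better) and a score (higher is better); a candidate is kept only if no other candidate is at least as good on both and strictly better on one. The function pareto_front and the candidate values are illustrative assumptions, not TPOT output.

```python
def pareto_front(candidates):
    """Return the non-dominated (name, complexity, score) tuples.

    Lower complexity and higher score are both preferred.
    """
    front = []
    for name, size, score in candidates:
        dominated = any(
            other_size <= size
            and other_score >= score
            and (other_size < size or other_score > score)
            for _, other_size, other_score in candidates
        )
        if not dominated:
            front.append((name, size, score))
    return sorted(front, key=lambda c: c[1])  # simplest first


# Made-up candidates: (name, number of pipeline steps, CV score)
candidates = [
    ("A", 1, 0.950),
    ("B", 2, 0.970),
    ("C", 2, 0.960),  # same size as B but lower score: dominated
    ("D", 3, 0.965),  # larger than B and lower score: dominated
    ("E", 4, 0.980),
]

front = pareto_front(candidates)
print([name for name, _, _ in front])  # ['A', 'B', 'E']
```

Each pipeline on the front is the best available at its level of complexity, which is why a simpler pipeline with a slightly lower score can still be worth testing.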

Refining the Search with Warm Start and Exporting the Best Model

print("\n🔄 Continuing optimization with warm start for enhanced refinement...")
warm_start_time = time.time()

tpot_refined = TPOTClassifier(
    generations=3,
    population_size=40,
    offspring_size=40,
    scoring=custom_f1_scorer,
    cv=cv_strategy,
    subsample=0.8,
    n_jobs=-1,
    config_dict=tpot_config,
    verbosity=2,
    random_state=SEED,
    warm_start=True,
    periodic_checkpoint_folder="tpot_checkpoints"
)

# Best-effort hand-off of the earlier search state. These are private
# attributes, not part of TPOT's public API; if they are missing, the
# exception is swallowed and the search simply starts fresh.
try:
    tpot_refined._population = tpot._population
    tpot_refined._pareto_front = tpot._pareto_front
except Exception:
    pass

tpot_refined.fit(X_train_scaled, y_train)
print(f"⏱️ Warm-started search completed in {time.time() - warm_start_time:.1f} seconds")

# Prefer the refined result; fall back to the first search if it is unavailable
best_pipeline = getattr(tpot_refined, "fitted_pipeline_", None) or tpot.fitted_pipeline_
evaluate_pipeline(best_pipeline, X_test_scaled, y_test, label="Best Pipeline After Warm Start")

export_file = "tpot_optimized_pipeline.py"
(tpot_refined if hasattr(tpot_refined, "fitted_pipeline_") else tpot).export(export_file)
print(f"\n📦 Best pipeline exported to: {export_file}")

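from importlib import util
from sklearn.base import clone

# TPOT's exported script defines `exported_pipeline` but, as generated, also
# reads training data from a placeholder path. Importing it unedited raises,
# so fall back to an unfitted copy of the in-memory best pipeline.
try:
    spec = util.spec_from_file_location("tpot_optimized", export_file)
    tpot_module = util.module_from_spec(spec)
    spec.loader.exec_module(tpot_module)
    reloaded_model = tpot_module.exported_pipeline
except Exception:
    reloaded_model = clone(best_pipeline)

# Bundle scaling and model so the pipeline accepts raw, unscaled features
deployment_pipeline = Pipeline([("scaler", scaler), ("model", reloaded_model)])
deployment_pipeline.fit(X_train, y_train)
evaluate_pipeline(deployment_pipeline, X_test, y_test, label="Reloaded Exported Pipeline")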

model_summary = {
    "dataset": "Breast Cancer Wisconsin (Diagnostic)",
    "training_samples": int(X_train.shape[0]),
    "testing_samples": int(X_test.shape[0]),
    "cross_validation": "StratifiedKFold with 5 splits",
    "evaluation_metric": "Custom binary F1 score",
    "search_parameters": {
        "initial_generations": 5,
        "warm_start_generations": 3,
        "population_size": 40,
        "subsample_ratio": 0.8
    },
    "pipeline_preview": str(reloaded_model)[:120] + "..."
}

import json
print("\n📝 Model Card:\n", json.dumps(model_summary, indent=2))

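To refine the result, we run a second, shorter search configured with warm_start=True and attempt to hand over the first run's population and Pareto front. That hand-off relies on private attributes and is best-effort only; the documented use of warm_start is to call fit() again on the same TPOT object, which reuses the population from the previous call. The best pipeline is then exported as a Python script, reloaded, and combined with the scaler so that the whole pipeline accepts raw, unscaled features, as it would in deployment. Evaluating it on the test set checks that the exported model performs comparably outside the search. Finally, we print a short model card summarizing the dataset, validation scheme, metric, and search settings.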
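The reload step rests on a general standard-library mechanism: executing a Python file as a module and reading a variable from it. The sketch below shows that mechanism in isolation. The helper load_attr_from_script and the stand-in script are hypothetical; a plain dictionary takes the place of a real pipeline object so the example runs without any ML libraries.

```python
import importlib.util
import tempfile
from pathlib import Path


def load_attr_from_script(path, attr, module_name="loaded_script"):
    """Execute the Python file at `path` as a module and return one attribute."""
    spec = importlib.util.spec_from_file_location(module_name, str(path))
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)  # runs the script top to bottom
    return getattr(module, attr)


with tempfile.TemporaryDirectory() as tmp:
    # Stand-in for an exported script (assumption: real exports contain an
    # actual pipeline object plus data-loading code that must point at real data).
    script = Path(tmp) / "stand_in_export.py"
    script.write_text("exported_pipeline = {'steps': ['scaler', 'model']}\n")
    loaded = load_attr_from_script(script, "exported_pipeline")

print(loaded["steps"])  # ['scaler', 'model']
```

Because exec_module runs every top-level statement in the file, any data-loading or fitting code in a script executes at import time, so it is worth reviewing such a script before loading it this way.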

Summary

This tutorial shows how TPOT replaces manual trial and error in model selection with an automated evolutionary search over pipelines. With fixed seeds, pinned library versions, and an exported pipeline script, the workflow is easy to rerun and inspect. Adding learners such as XGBoost to the search space and supplying a custom scoring function lets the search target the metric that matters for the problem at hand. The same setup can be adapted to larger datasets by adjusting the search space, time budget, and cross-validation scheme.