When a large language model (LLM) generates text, it does so incrementally, selecting one token at a time rather than producing the entire response in a single step. At each stage, the model estimates the likelihood of possible next tokens based on the context accumulated so far. However, simply knowing these probabilities is insufficient; the model must also employ a method to choose which token to output next.

The choice of decoding strategy profoundly influences the style and quality of the generated text. Some methods prioritize precision and clarity, while others encourage creativity and diversity. This article delves into four widely used text generation techniques in LLMs: Greedy Search, Beam Search, Top-p Sampling (Nucleus Sampling), and Temperature Sampling, outlining their mechanisms and typical applications.

Greedy Search: The Straightforward Approach

Greedy Search is the most basic decoding technique, where the model consistently selects the token with the highest probability at each step. This approach is computationally efficient and easy to implement but often falls short in producing nuanced or engaging text. It operates like choosing the best immediate option without considering future possibilities, which can lead to suboptimal overall results.

Because it follows a single path through the probability space, greedy search may generate repetitive or bland outputs, making it less suitable for tasks requiring rich, open-ended language generation.
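The selection rule can be sketched in a few lines of Python. This is a minimal illustration rather than a real model: `TOY_PROBS` is a hypothetical lookup table that conditions only on the previous token, and its probabilities are invented for the example.

```python
from typing import Dict, List

# Hypothetical toy "model": next-token probabilities given only the previous token.
# All values are made up for illustration.
TOY_PROBS: Dict[str, Dict[str, float]] = {
    "The": {"slow": 0.6, "fast": 0.4},
    "slow": {"dog": 0.4, "cat": 0.3, "car": 0.3},
    "fast": {"cat": 0.9, "dog": 0.1},
    "dog": {"barks": 0.6, "sleeps": 0.4},
    "cat": {"purrs": 0.9, "sleeps": 0.1},
    "car": {"stops": 1.0},
}


def greedy_decode(start: str, max_new_tokens: int) -> List[str]:
    """Repeatedly append the single most probable next token."""
    tokens = [start]
    for _ in range(max_new_tokens):
        dist = TOY_PROBS.get(tokens[-1])
        if dist is None:  # no known continuation, so stop early
            break
        tokens.append(max(dist, key=dist.get))
    return tokens


print(" ".join(greedy_decode("The", 3)))  # The slow dog barks
```

Because the loop never revisits an earlier choice, a locally attractive first token fixes everything that follows.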

Beam Search: Exploring Multiple Paths

Beam Search enhances the greedy approach by maintaining several candidate sequences simultaneously, known as beams. At each token generation step, it extends every kept sequence with possible next tokens and retains only the K most probable resulting sequences, allowing the model to explore multiple promising continuations rather than committing to just one. The beam width K balances output quality against computational cost: larger beams tend to yield better results but require more processing power.

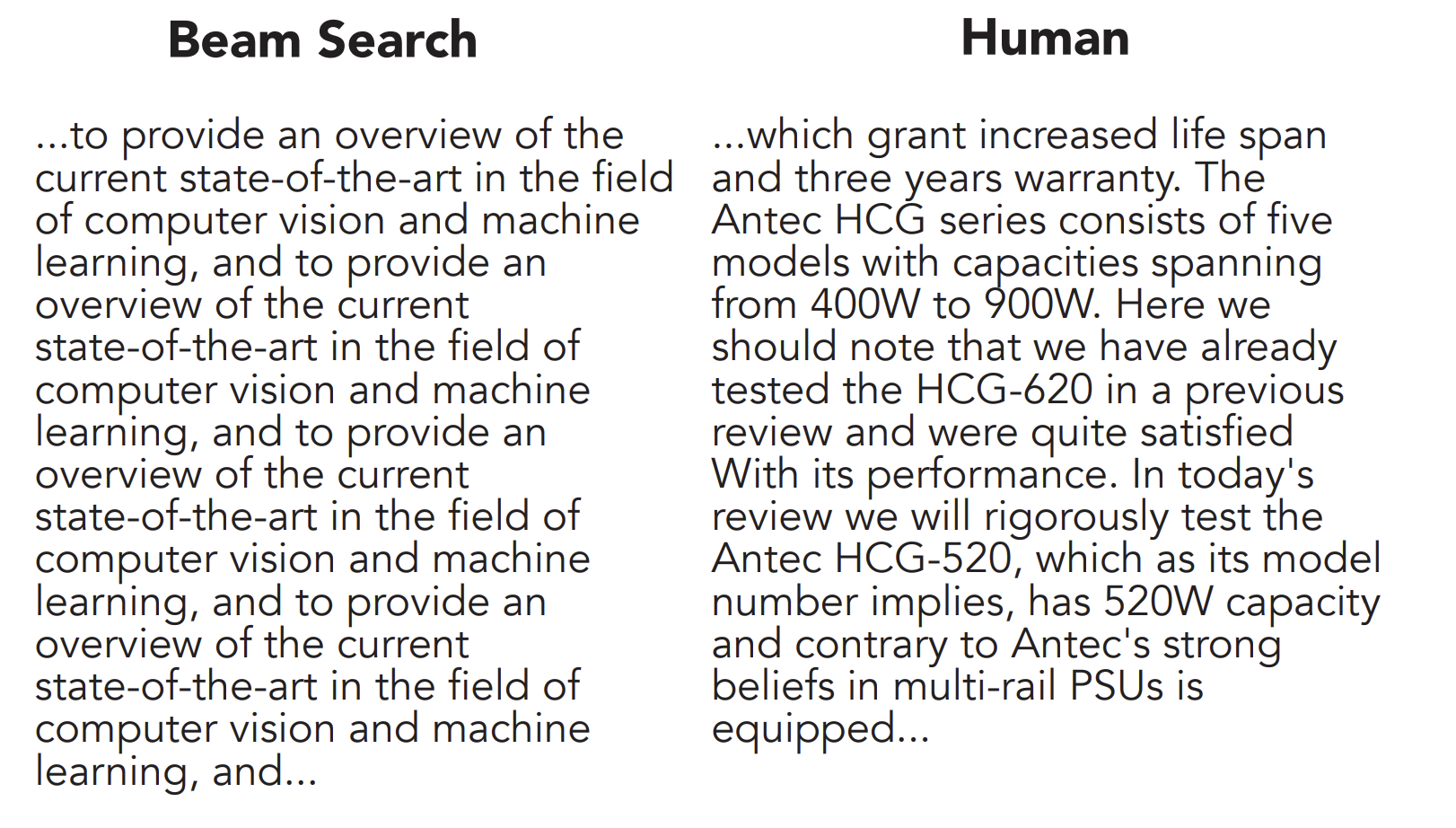

This method excels in structured language tasks like machine translation, where accuracy and coherence are paramount. However, in creative or open-ended generation, beam search can produce repetitive and predictable text due to its preference for high-probability continuations, a phenomenon sometimes called “neural text degeneration.”

For example, consider generating a sentence that starts with “The.” Greedy search might pick “slow” because it has the highest immediate probability, resulting in “The slow dog barks.” Beam search, with a beam width of 2, keeps both “slow” and “fast” alive and eventually selects “The fast cat purrs,” whose overall sequence probability is higher even though its first token was less probable.
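The “The fast cat purrs” example can be reproduced with a small sketch. The probabilities in `TOY_PROBS` are assumptions chosen so that the outcome matches the description above; they are not taken from any real model, and the table conditions only on the previous token for simplicity. Scores are summed log-probabilities, which is equivalent to multiplying probabilities but numerically safer.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical next-token probabilities, invented for illustration.
TOY_PROBS: Dict[str, Dict[str, float]] = {
    "The": {"slow": 0.6, "fast": 0.4},
    "slow": {"dog": 0.4, "cat": 0.3, "car": 0.3},
    "fast": {"cat": 0.9, "dog": 0.1},
    "dog": {"barks": 0.6, "sleeps": 0.4},
    "cat": {"purrs": 0.9, "sleeps": 0.1},
    "car": {"stops": 1.0},
}

Beam = Tuple[List[str], float]  # (tokens so far, cumulative log-probability)


def beam_search(start: str, beam_width: int, max_new_tokens: int) -> List[Beam]:
    """Keep the beam_width highest-scoring sequences at every step."""
    beams: List[Beam] = [([start], 0.0)]
    for _ in range(max_new_tokens):
        candidates: List[Beam] = []
        for tokens, score in beams:
            dist = TOY_PROBS.get(tokens[-1])
            if dist is None:  # finished sequence: carry it over unchanged
                candidates.append((tokens, score))
                continue
            for token, prob in dist.items():
                candidates.append((tokens + [token], score + math.log(prob)))
        # Prune to the best beam_width candidates by total log-probability.
        beams = sorted(candidates, key=lambda c: c[1], reverse=True)[:beam_width]
    return beams


best_tokens, best_logp = beam_search("The", beam_width=2, max_new_tokens=3)[0]
print(" ".join(best_tokens))  # The fast cat purrs
```

With these assumed numbers, “The fast cat purrs” scores 0.4 × 0.9 × 0.9 = 0.324, while the greedy path “The slow dog barks” scores 0.6 × 0.4 × 0.6 = 0.144. Setting `beam_width=1` reduces the procedure to greedy search.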

Top-p Sampling (Nucleus Sampling): Dynamic Diversity

Top-p Sampling, also known as Nucleus Sampling, introduces a probabilistic method that dynamically adjusts the candidate token pool based on cumulative probability. Instead of selecting from a fixed number of top tokens, it ranks tokens by probability and identifies the smallest set whose combined probability reaches a threshold p (e.g., 0.7). The next token is then randomly sampled from this “nucleus” after renormalizing the probabilities of its tokens so that they sum to 1.

This adaptive approach balances creativity and coherence by broadening the selection when the probability distribution is flat (many tokens have similar likelihoods) and narrowing it when the distribution is peaked (few tokens dominate). Consequently, top-p sampling often produces more natural and varied text compared to rigid methods like greedy or beam search.
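A minimal sketch of this procedure follows, using only the Python standard library. The two distributions, `PEAKED` and `FLAT`, are hypothetical and exist only to show how the nucleus shrinks and grows; the function names are likewise illustrative.

```python
import random
from typing import Dict

# Hypothetical next-token distributions, invented for illustration.
PEAKED = {"the": 0.85, "a": 0.05, "an": 0.04, "this": 0.03, "that": 0.03}
FLAT = {"red": 0.25, "blue": 0.20, "green": 0.20, "black": 0.20, "white": 0.15}


def nucleus(probs: Dict[str, float], p: float) -> Dict[str, float]:
    """Return the smallest top-ranked token set with total mass >= p, renormalized."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept: Dict[str, float] = {}
    total = 0.0
    for token, prob in ranked:
        kept[token] = prob
        total += prob
        if total >= p:  # threshold reached: the nucleus is complete
            break
    return {token: prob / total for token, prob in kept.items()}


def sample_top_p(probs: Dict[str, float], p: float, rng: random.Random) -> str:
    """Draw one token from the renormalized nucleus."""
    nuc = nucleus(probs, p)
    return rng.choices(list(nuc), weights=list(nuc.values()), k=1)[0]


print(len(nucleus(PEAKED, 0.7)))  # 1 token: the distribution is peaked
print(len(nucleus(FLAT, 0.7)))    # 4 tokens: the distribution is flat
print(sample_top_p(FLAT, 0.7, random.Random(0)))
```

With p = 0.7, the peaked distribution collapses to a single candidate, so sampling behaves like greedy search at that step, while the flat distribution keeps four candidates in play.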

Temperature Sampling: Controlling Randomness

Temperature Sampling modulates the randomness of token selection by adjusting the temperature parameter t in the softmax function that converts logits into probabilities: each logit is divided by t before the softmax is applied. Lower temperatures (t < 1) sharpen the distribution, making the model more likely to pick high-probability tokens and resulting in focused but potentially repetitive text. A temperature of 1 corresponds to sampling directly from the model’s original probability distribution.

Higher temperatures (t > 1) flatten the distribution, increasing randomness and diversity but potentially reducing coherence. This technique allows tuning the trade-off between creativity and precision: lower temperatures favor near-deterministic, reliable outputs, while higher temperatures encourage imaginative and varied language.

For instance, as of 2024, creative writing applications often use temperatures of roughly 0.8 to 1.2 to generate engaging narratives, whereas technical documentation generation typically employs temperatures below 0.7 to maintain accuracy. These ranges are rules of thumb rather than fixed standards.
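The effect of t can be seen in a short sketch. The softmax with temperature assigns token i the probability exp(z_i / t) / Σ_j exp(z_j / t), where z are the logits. The logits below are hypothetical values chosen for illustration, and the function names are not from any particular library.

```python
import math
import random
from typing import List

# Hypothetical logits for three candidate tokens.
LOGITS = [2.0, 1.0, 0.0]


def softmax_with_temperature(logits: List[float], t: float) -> List[float]:
    """Convert logits to probabilities after dividing each logit by t."""
    if t <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / t for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_with_temperature(logits: List[float], t: float, rng: random.Random) -> int:
    """Sample a token index from the temperature-adjusted distribution."""
    probs = softmax_with_temperature(logits, t)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(LOGITS, t)
    print(t, [round(p, 3) for p in probs])
```

As t decreases, more probability mass concentrates on the top token; as t increases, the three probabilities move closer together. In practice, temperature is often combined with top-p sampling: the logits are rescaled first, and the nucleus is then taken from the resulting distribution.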

Summary: Choosing the Right Strategy

Each decoding method offers distinct advantages depending on the use case:

- Greedy Search is fast but can produce dull, repetitive text.

- Beam Search improves quality by exploring multiple options but may reduce diversity.

- Top-p Sampling adapts dynamically to balance coherence and variety.

- Temperature Sampling adjusts randomness to suit creative or factual tasks.

Understanding these strategies enables developers and researchers to tailor LLM outputs to specific needs, whether for precise translations, engaging storytelling, or informative content generation.