Contents Overview

- Understanding Catastrophic Forgetting in Foundation Models

- Why Reinforcement Learning Retains Knowledge Better Than Supervised Fine-Tuning

- Methods to Quantify Forgetting

- Insights from Large Language Model Experiments

- Comparing RL and SFT in Robotic Manipulation Tasks

- The ParityMNIST Toy Problem: A Controlled Analysis

- The Critical Role of On-Policy Updates in RL

- Evaluating Other Hypotheses Behind Forgetting

- Broader Implications for AI Development

- Summary and Future Directions

Understanding Catastrophic Forgetting in Foundation Models

Foundation models have revolutionized AI by excelling across a wide range of tasks, yet they typically remain fixed after deployment. When these models are fine-tuned to adapt to new challenges, they often suffer from catastrophic forgetting, a phenomenon in which previously acquired knowledge degrades or is lost. This is a significant obstacle to building AI systems capable of continual learning and long-term adaptability.

Why Reinforcement Learning Retains Knowledge Better Than Supervised Fine-Tuning

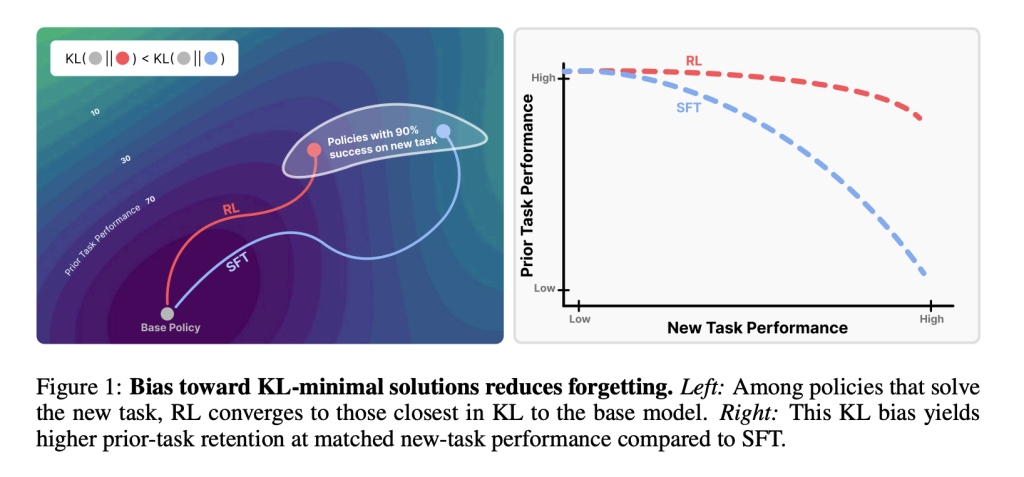

Recent research from MIT highlights a fundamental difference between reinforcement learning (RL) and supervised fine-tuning (SFT) in how they affect a model’s memory. While both approaches can enhance performance on new tasks, SFT tends to overwrite existing skills, leading to forgetting. In contrast, RL preserves prior competencies more effectively. This disparity arises from how each method modifies the model’s output distribution relative to its original policy, with RL favoring smaller, more conservative shifts.

Methods to Quantify Forgetting

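The study proposes an empirical law: the amount of forgetting is predicted by the forward Kullback-Leibler (KL) divergence between the original model policy (π₀) and the fine-tuned policy (π), averaged over inputs x drawn from the new task's distribution τ:

$$\mathbb{E}_{x\sim\tau}\big[\mathrm{KL}\big(\pi_0(\cdot\mid x)\,\|\,\pi(\cdot\mid x)\big)\big] = \mathbb{E}_{x\sim\tau}\Big[\sum_{y}\pi_0(y\mid x)\log\frac{\pi_0(y\mid x)}{\pi(y\mid x)}\Big]$$

Because this quantity is computed only on the new task's data, it predicts the extent of forgetting without requiring access to data from previous tasks, which makes it a scalable way to monitor knowledge retention during model updates.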
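For discrete output distributions the forward KL is KL(π₀‖π) = Σ_y π₀(y|x) log(π₀(y|x)/π(y|x)), averaged over new-task inputs x. The sketch below computes it for small, explicit distributions so the arithmetic stays visible; the distributions are hypothetical, and the function names are illustrative rather than taken from the paper. For a language model the sum runs over whole responses, so in practice it would be estimated by sampling responses y from π₀ and averaging log π₀(y|x) − log π(y|x), which the last helper assumes its inputs already are.

```python
import math

def forward_kl(p0, p):
    """KL(p0 || p) = sum_y p0(y) * log(p0(y) / p(y)) for two discrete distributions.

    Terms with p0(y) == 0 contribute nothing; p must be > 0 wherever p0 > 0.
    """
    return sum(a * math.log(a / b) for a, b in zip(p0, p) if a > 0)

def mean_forward_kl(base_dists, tuned_dists):
    """Average KL(pi0(.|x) || pi(.|x)) over a batch of new-task inputs x."""
    kls = [forward_kl(p0, p) for p0, p in zip(base_dists, tuned_dists)]
    return sum(kls) / len(kls)

def mc_forward_kl(logp0_samples, logp_samples):
    """Monte Carlo estimate of KL(pi0 || pi), assuming the log-probabilities
    belong to outputs y that were sampled from pi0: mean of log pi0(y|x) - log pi(y|x)."""
    diffs = [a - b for a, b in zip(logp0_samples, logp_samples)]
    return sum(diffs) / len(diffs)

# Two hypothetical new-task inputs, each with a 3-way output distribution.
# Fine-tuning left the first input unchanged and shifted the second.
base = [[0.5, 0.3, 0.2], [0.6, 0.2, 0.2]]
tuned = [[0.5, 0.3, 0.2], [0.3, 0.5, 0.2]]
print(mean_forward_kl(base, tuned))
```

Only inputs where the fine-tuned model's behavior actually moved contribute to the average, which is why the metric can be tracked during training using new-task data alone.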

Insights from Large Language Model Experiments

Using the Qwen 2.5 3B-Instruct model as a baseline, fine-tuning was conducted on diverse domains:

- Mathematical reasoning (Open-Reasoner-Zero dataset)

- Scientific question answering (SciKnowEval subset)

- Tool utilization (ToolAlpaca dataset)

Retention of prior capabilities was assessed on established benchmarks, including HellaSwag, MMLU, TruthfulQA, and HumanEval. RL improved new-task accuracy while keeping performance on these benchmarks stable; SFT also improved new-task results, but at the expense of earlier capabilities.

Comparing RL and SFT in Robotic Manipulation Tasks

In robotic control scenarios using the OpenVLA-7B model fine-tuned on the SimplerEnv pick-and-place environment, RL preserved general manipulation skills across multiple tasks. Although SFT achieved success on the newly introduced task, it compromised the robot’s previously learned manipulation abilities. This further underscores RL’s tendency to conserve existing knowledge during adaptation.

The ParityMNIST Toy Problem: A Controlled Analysis

To better understand the underlying mechanisms, the researchers designed a simplified benchmark called ParityMNIST. Both RL and SFT reached comparable accuracy on the new task, but SFT caused a larger drop in performance on FashionMNIST, which served as the measure of previously learned knowledge. Importantly, when forgetting was plotted against forward KL divergence, results from both methods fell on a single curve, supporting forward KL divergence as the key factor governing forgetting.

The Critical Role of On-Policy Updates in RL

On-policy reinforcement learning updates the model by sampling outputs from its own current distribution and reweighting those samples according to the rewards they receive. Because each update only redistributes probability among outputs the model already produces, the updated policy stays close to the original and drastic changes are limited. Supervised fine-tuning, in contrast, optimizes toward fixed target labels that may lie far from the base model's behavior. The paper's theoretical analysis shows that, among the policies that solve the new task, policy gradient methods converge to the one closest in KL divergence to the base model, which explains RL's advantage in limiting forgetting.
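The contrast between reweighting the model's own samples and chasing a fixed label can be seen in a tiny, hypothetical toy that is not the paper's experiment. Assumptions: a 10-way categorical policy over digits with a uniform base policy π₀; a ParityMNIST-style reward in which, for an input whose label is 3, any odd digit earns reward 1; the exact expected policy gradient in place of sampled REINFORCE; and SFT modeled as gradient ascent on the log-probability of the single label 3. Both runs stop once 99% of the probability mass sits on odd digits, and then their forward KL from π₀ is compared.

```python
import math

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def forward_kl(p0, p):
    # KL(p0 || p) for discrete distributions.
    return sum(a * math.log(a / b) for a, b in zip(p0, p) if a > 0)

# Hypothetical parity reward: every odd digit counts as correct.
REWARD = [1.0 if y % 2 == 1 else 0.0 for y in range(10)]
TARGET = 3   # the single fixed label used by SFT
LR = 0.5

def odd_mass(p):
    return sum(pi for pi, r in zip(p, REWARD) if r == 1.0)

def train(update, max_steps=10_000):
    z = [0.0] * 10   # all-zero logits give the uniform base policy pi0
    for _ in range(max_steps):
        p = softmax(z)
        if odd_mass(p) >= 0.99:
            break
        # Gradient ascent on the logits.
        z = [zi + LR * g for zi, g in zip(z, update(p))]
    return softmax(z)

def rl_update(p):
    # Exact expected policy gradient: d/dz_k E_{y~p}[r(y)] = p_k * (r_k - mean reward).
    # Each output is reweighted by its own probability and its reward.
    baseline = sum(pi * r for pi, r in zip(p, REWARD))
    return [pi * (r - baseline) for pi, r in zip(p, REWARD)]

def sft_update(p):
    # Gradient of log p(TARGET) with respect to the logits: onehot(TARGET) - p.
    return [(1.0 if y == TARGET else 0.0) - pi for y, pi in enumerate(p)]

pi0 = softmax([0.0] * 10)
p_rl = train(rl_update)
p_sft = train(sft_update)
print(f"RL : odd mass {odd_mass(p_rl):.3f}, KL(pi0||pi) {forward_kl(pi0, p_rl):.2f}")
print(f"SFT: odd mass {odd_mass(p_sft):.3f}, KL(pi0||pi) {forward_kl(pi0, p_sft):.2f}")
```

Both runs solve the toy task equally well, but the RL run spreads the new mass evenly over all odd digits, moving toward π₀ restricted to the rewarded outputs, and ends with a forward KL of roughly 1.6; SFT concentrates mass on the single label and ends near 3.3. The uniform base policy is chosen only so the outcome is easy to verify by hand.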

Evaluating Other Hypotheses Behind Forgetting

The team also examined alternative explanations: changes in model weights, shifts in hidden-layer representations, sparsity of parameter updates, and other distributional measures such as reverse KL divergence, total variation distance, and L2 distance. None of these correlated with forgetting as strongly as forward KL divergence, reinforcing the conclusion that keeping the fine-tuned model's output distribution close to the original is essential to preserving prior knowledge.
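To make the distributional candidates concrete, the sketch below evaluates each of them on one pair of hypothetical output distributions. It only defines the measures; how the paper aggregated them (over which inputs, tokens, or samples) is not described here, and the example numbers are illustrative.

```python
import math

def forward_kl(p0, p):
    # KL(p0 || p): expectation taken under the base distribution p0.
    return sum(a * math.log(a / b) for a, b in zip(p0, p) if a > 0)

def reverse_kl(p0, p):
    # KL(p || p0): expectation taken under the fine-tuned distribution p.
    return forward_kl(p, p0)

def total_variation(p0, p):
    # Half the L1 distance; ranges from 0 to 1.
    return 0.5 * sum(abs(a - b) for a, b in zip(p0, p))

def l2_distance(p0, p):
    # Euclidean distance between the two probability vectors.
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p0, p)))

base, tuned = [0.5, 0.5], [0.9, 0.1]
for name, fn in [("forward KL", forward_kl), ("reverse KL", reverse_kl),
                 ("total variation", total_variation), ("L2", l2_distance)]:
    print(f"{name:>15}: {fn(base, tuned):.4f}")
```

Even on this two-outcome example the two KL directions disagree (about 0.51 forward versus 0.37 reverse), because forward KL weights each log-ratio by the base model's probabilities, so it heavily penalizes outputs the base model favored that the fine-tuned model now suppresses.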

Broader Implications for AI Development

- Evaluation Metrics: Future model assessments should report how far fine-tuning has shifted the model's output distribution (for example, the forward KL divergence from the base model on new-task data) alongside traditional accuracy metrics, to better capture knowledge retention.

- Hybrid Training Approaches: Combining the efficiency of supervised fine-tuning with explicit KL divergence constraints could balance rapid learning with memory preservation.

- Continual Learning Frameworks: RL's Razor, the principle that RL favors the solutions closest in KL divergence to the original model, offers a quantifiable guideline for designing AI agents that acquire new skills without sacrificing existing ones, advancing lifelong learning capabilities.
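One possible reading of the hybrid idea is supervised fine-tuning with a soft KL penalty rather than a hard constraint. The sketch below is a hypothetical toy, not a method from the paper: it assumes a uniform 10-way base policy π₀, a single SFT label (class 0), and a penalty β·KL(π₀‖π) in the forward direction to match the forgetting metric. It runs plain gradient descent on the logits, using the fact that the gradient of cross-entropy is p − onehot(label) and the gradient of KL(π₀‖p) is p − π₀.

```python
import math

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def forward_kl(p0, p):
    # KL(p0 || p) for discrete distributions.
    return sum(a * math.log(a / b) for a, b in zip(p0, p) if a > 0)

def finetune(beta, target=0, steps=1000, lr=0.5, n=10):
    """Gradient descent on logits for CE(target) + beta * KL(pi0 || pi)."""
    pi0 = softmax([0.0] * n)   # hypothetical uniform base model
    z = [0.0] * n              # start fine-tuning from the base model
    for _ in range(steps):
        p = softmax(z)
        # d/dz CE = p - onehot(target);  d/dz KL(pi0 || p) = p - pi0
        grad = [(pi - (1.0 if y == target else 0.0)) + beta * (pi - qi)
                for y, (pi, qi) in enumerate(zip(p, pi0))]
        z = [zi - lr * g for zi, g in zip(z, grad)]
    p = softmax(z)
    return p, forward_kl(pi0, p)

p_sft, kl_sft = finetune(beta=0.0)   # plain SFT keeps pushing toward a one-hot output
p_reg, kl_reg = finetune(beta=1.0)   # KL-penalized SFT settles at a bounded shift
print(f"plain SFT:     p(label)={p_sft[0]:.3f}  KL(pi0||pi)={kl_sft:.2f}")
print(f"penalized SFT: p(label)={p_reg[0]:.3f}  KL(pi0||pi)={kl_reg:.2f}")
```

Setting the combined gradient to zero gives the fixed point π = (onehot(label) + β·π₀)/(1 + β), a mixture of the target and the base policy, so with β = 1 the label's probability settles at 0.55 and the forward KL stays bounded (about 0.45), while unpenalized SFT keeps drifting. The coefficient β controls the trade-off between fitting the new label and staying close to π₀.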

Summary and Future Directions

This research reframes catastrophic forgetting as a problem of distributional divergence, specifically governed by the forward KL divergence between the original and updated policies. Reinforcement learning’s on-policy updates inherently favor minimal KL divergence, which explains its robustness against forgetting. The principle, termed RL’s Razor, not only clarifies why RL outperforms supervised fine-tuning in preserving knowledge but also provides a strategic foundation for developing future post-training methods that enable foundation models to learn continuously and sustainably.

Essential Highlights

- Reinforcement learning (RL) excels at retaining prior knowledge compared to supervised fine-tuning (SFT), even when both achieve similar new-task performance.

- Forward KL divergence serves as a reliable predictor of catastrophic forgetting, quantifying how much a model’s behavior shifts during fine-tuning.

- RL’s on-policy updates converge to KL-minimal solutions, naturally limiting forgetting by keeping changes close to the original model.

- Empirical studies across language models and robotics confirm RL’s superior memory retention, while SFT often sacrifices old knowledge for new gains.

- Controlled experiments with ParityMNIST validate the generality of the KL divergence principle, extending beyond large-scale models.

- Future training algorithms should balance accuracy with KL conservatism, potentially through hybrid RL-SFT techniques to optimize continual learning.