OpenAI’s Sora App: Revolutionizing Video Creation with Enhanced Safety Features

OpenAI’s newest iPhone app, Sora, is marketed as a video generation tool. In practice, it also makes it easy to produce highly realistic deepfake videos.

Introducing Sora: From Imagination to Hyper-Realistic Videos

Sora is designed to turn user ideas into videos with lifelike motion and sound. Users can start from a text prompt or an uploaded image, and the app generates videos in styles ranging from cinematic and photorealistic to animated or surreal.

“Transform your concepts into videos featuring hyperrealistic motion and audio. Begin with a prompt or upload an image to craft videos with unparalleled realism in any style you choose.”

Since its launch, Sora has rapidly climbed to the top of the App Store charts in the United States and Canada, the only regions where it is currently accessible.

Understanding Cameos: User Likenesses in Sora Videos

One of Sora’s standout features is “cameos”: digital representations of users’ faces that others can include in their own videos. Users can decide whether to let others use their likeness, but at launch they had little control over how it was used once they granted permission.

This lack of control led to misuse, including videos that showed people voicing opinions or making statements that starkly contradicted their actual views, which raised significant ethical concerns.

To help identify videos generated by Sora, OpenAI embedded a watermark that moves dynamically across the screen. Despite this, some users quickly discovered methods to remove or obscure the watermark, complicating efforts to track deepfake content.

Enhanced Control: New Safety Measures for Cameo Usage

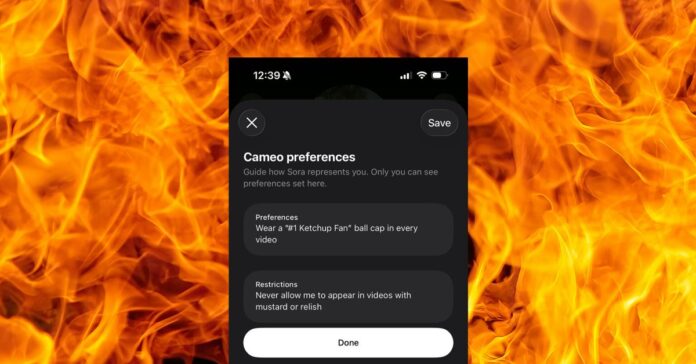

In response, OpenAI has added controls that let users set specific restrictions on how their cameos are used. According to Bill Peebles, head of Sora at OpenAI, users can now, for example, keep their likeness out of political content or block certain words from being spoken in videos that feature them.

“Starting today, users can customize cameo preferences to restrict the types of videos others can create with their likeness. For example, you can prevent your cameo from being used in political commentary or block specific phrases.”

These settings are found in the app under edit cameo > cameo preferences > restrictions. OpenAI says it is working to expand these controls to give users even more say over how their likeness is used.

OpenAI also plans to make the watermark more visible and harder to remove in future updates, although it has not said how it will prevent removal.

Broader Implications and Future Outlook

Sora’s rapid adoption shows the growing demand for accessible AI video tools, but it also underscores the need for robust ethical safeguards. As deepfake technology becomes more convincing, platforms like Sora must balance innovation against misuse and protect people’s identities.

Recent studies project that the number of deepfake videos will grow by more than 30% annually, which makes proactive measures like OpenAI’s all the more important. Other AI companies are also exploring watermarking and user-consent mechanisms to address similar problems.


Image credit: OpenAI screenshot overlaid on background by Ricardo Gomez Angel via Unsplash