The Allen Institute for AI (AI2) has unveiled OLMoASR, an open-source collection of automatic speech recognition (ASR) models that are competitive with closed-data systems such as OpenAI’s Whisper. Unlike many releases that share only model weights, AI2 has also published training data references, detailed filtering procedures, training methodologies, and benchmarking tools. This level of openness is rare in the ASR domain and positions OLMoASR as a highly adaptable platform for advancing speech recognition research.

The Importance of Open-Source Speech Recognition

Currently, most leading speech recognition technologies, offered by companies such as OpenAI, Google, and Microsoft, are accessible solely through APIs. While these services deliver impressive accuracy, they function as black-box systems, with undisclosed training datasets, opaque data cleaning methods, and evaluation protocols that often diverge from academic standards.

This opacity hinders reproducibility and slows scientific advancement. Researchers struggle to verify results, experiment with model variations, or tailor systems to niche applications without reconstructing massive datasets from scratch. OLMoASR tackles these issues by opening the entire development pipeline. Its release is not merely about enabling transcription but about fostering a transparent, research-driven foundation for ASR.

Architecture and Model Variants

OLMoASR employs a transformer-based encoder-decoder framework, which has become the standard in contemporary speech recognition.

- The encoder processes raw audio signals into latent feature representations.

- The decoder then produces textual output tokens conditioned on these encoded features.

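This architecture mirrors that of Whisper. What sets OLMoASR apart is that the data references, filtering procedures, and training methodology are released along with the weights, so the models can be inspected and customized end to end.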
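As a rough illustration of the encoder-decoder flow described above, the sketch below uses a toy encoder (frame averaging) and a toy scoring function in place of real transformer layers. Every name here (`encode`, `greedy_decode`, `toy_step`, the `hi`/`lo` vocabulary) is hypothetical and chosen for illustration; none of it is OLMoASR code. The part that carries over to real systems is the control flow: encode the audio once, then generate tokens one at a time, each conditioned on the encoded features and the tokens emitted so far.

```python
from typing import Callable, Dict, List, Sequence

EOT = "<eot>"  # hypothetical end-of-transcript token


def encode(audio: Sequence[float], frame_size: int = 4) -> List[float]:
    """Toy 'encoder': average each frame of samples into one feature."""
    features = []
    for i in range(0, len(audio), frame_size):
        frame = audio[i:i + frame_size]
        features.append(sum(frame) / len(frame))
    return features


def greedy_decode(
    features: List[float],
    step: Callable[[List[float], List[str]], Dict[str, float]],
    max_tokens: int = 16,
) -> List[str]:
    """Pick the highest-scoring token at each step until EOT or the limit."""
    tokens: List[str] = []
    for _ in range(max_tokens):
        scores = step(features, tokens)  # conditioned on features + history
        best = max(scores, key=scores.get)
        if best == EOT:
            break
        tokens.append(best)
    return tokens


def toy_step(features: List[float], tokens: List[str]) -> Dict[str, float]:
    """Toy 'decoder': one token per feature, 'hi' if positive else 'lo'."""
    position = len(tokens)
    if position >= len(features):
        return {EOT: 1.0, "hi": 0.0, "lo": 0.0}
    value = features[position]
    return {"hi": value, "lo": -value, EOT: float("-inf")}


audio = [1.0, 1.0, 1.0, 1.0, -1.0, -1.0, -1.0, -1.0]
print(greedy_decode(encode(audio), toy_step))
```

In a real model the encoder and the scoring step are learned networks, and decoding often uses beam search rather than the purely greedy choice shown here.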

OLMoASR offers six model sizes, all trained exclusively on English speech:

- tiny.en – 39 million parameters, optimized for low-resource environments

- base.en – 74 million parameters

- small.en – 244 million parameters

- medium.en – 769 million parameters

- large.en-v1 – 1.5 billion parameters, trained on 440,000 hours of audio

- large.en-v2 – 1.5 billion parameters, trained on 680,000 hours of audio

This range lets users trade computational efficiency against transcription accuracy. Smaller models suit embedded systems and real-time applications, while larger models deliver higher accuracy for research or batch processing.
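One way to make that trade-off concrete is to pick the largest variant that fits a parameter budget. The helper below is a hypothetical sketch, not part of any OLMoASR package; it uses only the parameter counts listed above, and the memory estimate covers weights alone (activations and decoding buffers add more).

```python
from typing import Optional

# Parameter counts in millions, as listed in this article.
MODEL_PARAMS_M = {
    "tiny.en": 39,
    "base.en": 74,
    "small.en": 244,
    "medium.en": 769,
    "large.en-v1": 1500,
    "large.en-v2": 1500,
}


def pick_model(max_params_m: float) -> Optional[str]:
    """Return the largest listed model within the budget, or None."""
    candidates = [
        (params, name)
        for name, params in MODEL_PARAMS_M.items()
        if params <= max_params_m
    ]
    if not candidates:
        return None
    # Ties on size (the two large models) resolve to the later version name.
    return max(candidates)[1]


def approx_weight_gb(name: str, bytes_per_param: int = 2) -> float:
    """Rough weights-only footprint; 2 bytes per parameter assumes fp16."""
    return MODEL_PARAMS_M[name] * 1e6 * bytes_per_param / 1e9


print(pick_model(300))                 # small.en
print(approx_weight_gb("medium.en"))   # about 1.5 GB of weights in fp16
```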

Data Collection and Curation Strategies

A standout feature of OLMoASR is its transparent release of training datasets, which is uncommon in the ASR field.

OLMoASR-Pool (~3 Million Hours)

This extensive dataset comprises weakly supervised speech-text pairs harvested from web sources, totaling approximately 3 million hours of audio and 17 million transcripts. Similar to Whisper’s original corpus, it contains noise such as misaligned captions, duplicates, and transcription inaccuracies.

OLMoASR-Mix (~1 Million Hours)

To enhance data quality, AI2 implemented stringent filtering techniques:

- Alignment heuristics to verify synchronization between audio and text

- Fuzzy deduplication to eliminate redundant or low-variance samples

- Cleaning protocols to remove duplicate lines and inconsistent transcripts

The outcome is a high-fidelity dataset of one million hours that significantly improves zero-shot generalization, a crucial factor for handling real-world audio that deviates from training distributions.
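To give a feel for two of the filtering steps named above, here is a minimal sketch of repeated-line cleanup and fuzzy deduplication on transcripts. It is an assumption-laden illustration: the function names, the normalization rules, and the 0.9 similarity threshold are all hypothetical, and AI2's actual heuristics are not reproduced here. It uses `difflib.SequenceMatcher` from the Python standard library for similarity scoring.

```python
import difflib
import re
from typing import List


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def drop_repeated_lines(transcript: str) -> str:
    """Remove empty lines and consecutive lines that repeat after normalization."""
    kept: List[str] = []
    previous = None
    for line in transcript.splitlines():
        key = normalize(line)
        if not key or key == previous:
            continue
        kept.append(line.strip())
        previous = key
    return "\n".join(kept)


def fuzzy_dedupe(transcripts: List[str], threshold: float = 0.9) -> List[str]:
    """Keep a transcript only if no already-kept one is a near-duplicate."""
    kept: List[str] = []
    kept_keys: List[str] = []
    for transcript in transcripts:
        key = normalize(transcript)
        is_duplicate = any(
            difflib.SequenceMatcher(None, key, other).ratio() >= threshold
            for other in kept_keys
        )
        if not is_duplicate:
            kept.append(transcript)
            kept_keys.append(key)
    return kept


print(drop_repeated_lines("Hello there.\nhello there\nGoodbye"))
print(fuzzy_dedupe([
    "The quick brown fox jumps.",
    "the quick brown fox jumps!",
    "Completely different sentence here",
]))
```

The pairwise comparison is quadratic in the number of transcripts, so it only works for small samples. At the scale of millions of hours, approximate methods such as MinHash with locality-sensitive hashing are the usual choice.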

This dual-stage data approach reflects best practices in large-scale language model training: leveraging vast, noisy datasets for scale, followed by refined subsets to boost quality.

Benchmarking and Comparative Performance

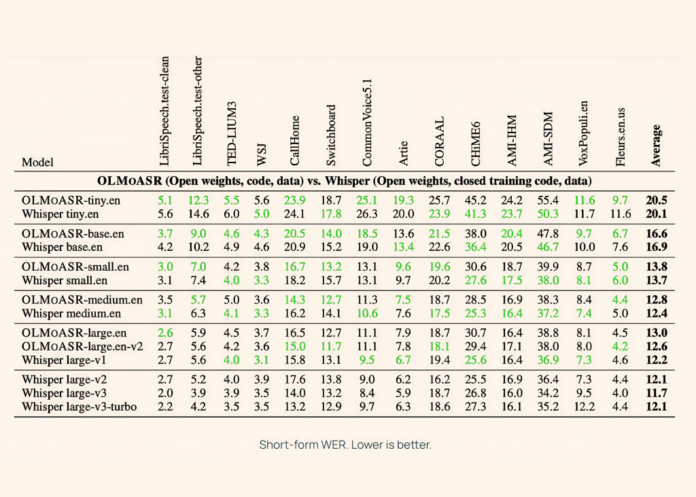

AI2 evaluated OLMoASR against Whisper on a variety of speech recognition benchmarks, including LibriSpeech, TED-LIUM3, Switchboard, AMI, and VoxPopuli, covering both short and long-form audio.

Medium Model (769M Parameters)

- Achieves a 12.8% word error rate (WER) on short-form speech

- Scores 11.0% WER on long-form speech

These results closely rival Whisper-medium.en, which records 12.4% and 10.5% WER respectively.
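For readers unfamiliar with the metric, word error rate is the word-level edit distance between the reference and the hypothesis, divided by the number of reference words: WER = (S + D + I) / N, where S, D, and I count substitutions, deletions, and insertions. The sketch below is a generic implementation, not AI2's evaluation script. Benchmark pipelines typically apply heavier text normalization before scoring, whereas this version only lowercases and splits on whitespace.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substitution (sat -> sit) and one deletion (the) over six words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Because insertions count as errors, WER can exceed 100% when the hypothesis is much longer than the reference.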

Large Models (1.5B Parameters)

- large.en-v1 (trained on 440K hours): 13.0% WER on short-form, compared to Whisper large-v1’s 12.2%

- large.en-v2 (trained on 680K hours): 12.6% WER, narrowing the gap to under 0.5 percentage points
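The gaps quoted above are differences in WER, measured in percentage points. The short calculation below works them out from the figures in this article, alongside the relative difference. One assumption is flagged in the code: the article does not say which Whisper figure large.en-v2 is compared against, so the same 12.2% is used.

```python
from typing import Tuple

# WER figures in percent, as quoted in this article: (OLMoASR, Whisper).
REPORTED_WER = {
    "medium.en, short-form": (12.8, 12.4),
    "medium.en, long-form": (11.0, 10.5),
    "large.en-v1, short-form": (13.0, 12.2),
    # Assumption: v2 is compared against the same 12.2% Whisper figure.
    "large.en-v2, short-form": (12.6, 12.2),
}


def wer_gap(olmoasr_wer: float, whisper_wer: float) -> Tuple[float, float]:
    """Return (absolute gap in points, relative gap in percent)."""
    difference = olmoasr_wer - whisper_wer
    absolute = round(difference, 1)
    relative = round(100 * difference / whisper_wer, 1)
    return absolute, relative


for name, (ours, theirs) in REPORTED_WER.items():
    absolute, relative = wer_gap(ours, theirs)
    print(f"{name}: +{absolute} points ({relative}% relative)")
```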

Compact Models

Even the smaller variants demonstrate competitive accuracy:

- tiny.en: approximately 20.5% WER on short-form, 15.6% on long-form

- base.en: roughly 16.6% WER short-form, 12.9% long-form

This flexibility enables developers to select models tailored to their computational and latency constraints.

Getting Started with OLMoASR

Implementing speech transcription is straightforward and requires minimal code:

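```python
import olmoasr

# Load the medium checkpoint in inference mode.
model = olmoasr.load_model("medium", inference=True)

# Transcribe a local audio file and print the result.
result = model.transcribe("audio.mp3")
print(result)
```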

The output includes both the transcribed text and time-aligned segments, making it ideal for applications such as captioning, meeting transcription, and integration with downstream natural language processing workflows.
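For captioning, time-aligned segments can be turned into an SRT subtitle file. The sketch below assumes the result is a Whisper-style dictionary with a `segments` list whose entries carry `start` and `end` times in seconds plus a `text` string. That shape is an assumption and should be checked against the actual output before relying on it. The helper names `format_timestamp` and `to_srt` are hypothetical.

```python
from typing import Any, Dict


def format_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp: HH:MM:SS,mmm."""
    milliseconds = int(round(seconds * 1000))
    hours, milliseconds = divmod(milliseconds, 3_600_000)
    minutes, milliseconds = divmod(milliseconds, 60_000)
    secs, milliseconds = divmod(milliseconds, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{milliseconds:03d}"


def to_srt(result: Dict[str, Any]) -> str:
    """Build SRT text from an assumed {'segments': [{start, end, text}]} dict."""
    blocks = []
    for index, segment in enumerate(result["segments"], start=1):
        start = format_timestamp(segment["start"])
        end = format_timestamp(segment["end"])
        text = segment["text"].strip()
        blocks.append(f"{index}\n{start} --> {end}\n{text}\n")
    return "\n".join(blocks)


# Stand-in for a real transcription result, using the assumed shape.
example = {
    "text": "Hello world. Second line.",
    "segments": [
        {"start": 0.0, "end": 2.5, "text": " Hello world."},
        {"start": 2.5, "end": 4.0, "text": " Second line."},
    ],
}
print(to_srt(example))
```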

Customizing Models for Specific Domains

Thanks to the open availability of training scripts and configurations, OLMoASR can be fine-tuned to specialized fields:

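- Healthcare transcription – adapting models using clinical speech recordings; text-only resources such as MIMIC-III contain no audio, so they can inform domain vocabulary but cannot serve as ASR training pairs on their own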

- Legal proceedings – training on courtroom audio or legal depositions

- Underrepresented accents – fine-tuning on dialects and speech patterns not well covered in the original datasets

This adaptability is vital because ASR accuracy often declines when models encounter domain-specific vocabulary or acoustic conditions. Open pipelines simplify the process of domain adaptation.

Practical Use Cases and Research Opportunities

OLMoASR unlocks numerous possibilities across both academic and industrial landscapes:

- Academic Exploration: Enables detailed studies on how model design, dataset quality, and filtering impact speech recognition outcomes.

- Human-Computer Interaction: Facilitates embedding speech recognition into conversational agents, real-time transcription tools, and accessibility software without reliance on proprietary APIs.

- Multimodal AI Systems: When paired with large language models, OLMoASR supports the development of sophisticated assistants capable of understanding spoken input and generating context-aware responses.

- Benchmarking and Standardization: The open release of data and evaluation scripts establishes OLMoASR as a reproducible baseline for future ASR research.

Final Thoughts

OLMoASR represents a significant step forward in democratizing high-quality speech recognition by emphasizing transparency and reproducibility. Although currently focused on English and requiring substantial computational resources for training, it lays a robust groundwork for further adaptation and innovation. This initiative sets a new benchmark for open ASR development, empowering researchers and developers to explore, evaluate, and deploy speech recognition technologies across diverse applications.