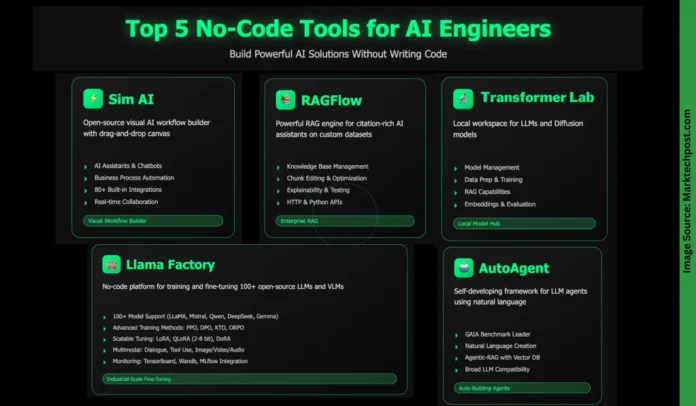

In the rapidly evolving landscape of artificial intelligence, no-code platforms are changing the way intelligent applications are developed and deployed. These tools let users of all technical backgrounds quickly create sophisticated AI-driven solutions with little or no hand-written code. Whether you’re building enterprise-level retrieval-augmented generation (RAG) systems, orchestrating complex multi-agent workflows, or fine-tuning a wide range of large language models (LLMs), no-code environments significantly reduce development time and complexity. This article highlights five standout no-code platforms that are accelerating AI innovation and making advanced technology more accessible.

Sim AI: Visual Workflow Builder for AI Agents

Sim AI is an open-source, no-code platform designed to visually construct and deploy AI agent workflows through an intuitive drag-and-drop interface. It enables seamless integration of AI models, APIs, databases, and business applications to create a variety of intelligent solutions, including:

- Conversational AI & Chatbots: Develop agents capable of web searches, calendar management, email automation, and interaction with enterprise software.

- Automated Business Processes: Simplify repetitive tasks such as data entry, report generation, customer service, and content creation.

- Data Analytics & Processing: Extract actionable insights, analyze datasets, generate reports, and synchronize data across platforms.

- API Orchestration: Design complex workflows that unify multiple services and automate event-driven operations.

Noteworthy features include:

- A visual canvas equipped with “smart blocks” for AI, API calls, logic, and output handling.

- Support for diverse triggers such as chat inputs, REST APIs, webhooks, schedulers, and events from Slack or GitHub.

- Real-time collaborative editing with granular permission controls.

- Over 80 pre-built integrations spanning AI models, communication tools, productivity suites, developer platforms, search engines, and databases.

- Model Context Protocol (MCP) support for connecting agents to external tools and data sources.
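To make the idea of a canvas of connected blocks more concrete, the Python sketch below runs a small workflow graph in dependency order. It is not Sim AI's execution engine: the `run_workflow` helper and the four blocks (`trigger`, `search`, `summarize`, `notify`) are hypothetical, and each block is simply a function that receives the outputs of the blocks wired into it.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Hypothetical block type: takes the outputs of its upstream blocks, returns its own output.
Block = Callable[[dict[str, object]], object]


def run_workflow(blocks: dict[str, Block], edges: dict[str, set[str]]) -> dict[str, object]:
    """Run every block once, only after all of its upstream blocks have finished.

    `edges` maps a block name to the set of block names it depends on.
    """
    outputs: dict[str, object] = {}
    for name in TopologicalSorter(edges).static_order():
        upstream = {dep: outputs[dep] for dep in edges.get(name, set())}
        outputs[name] = blocks[name](upstream)
    return outputs


# Hypothetical four-block workflow: a chat trigger feeds a search step,
# whose results are summarized and then passed to an output block.
blocks: dict[str, Block] = {
    "trigger": lambda _: "latest GPU prices",
    "search": lambda up: [f"result for {up['trigger']}"],
    "summarize": lambda up: f"{len(up['search'])} result(s) found",
    "notify": lambda up: f"Sent: {up['summarize']}",
}
edges = {
    "search": {"trigger"},
    "summarize": {"search"},
    "notify": {"summarize"},
}

if __name__ == "__main__":
    print(run_workflow(blocks, edges)["notify"])  # Sent: 1 result(s) found
```

A topological sort is one straightforward way to let users wire blocks freely while guaranteeing that no block runs before its inputs exist; if the wiring contains a cycle, `static_order()` raises `graphlib.CycleError`.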

Deployment flexibility:

- Cloud-hosted option offering managed infrastructure with automatic scaling and monitoring.

- Self-hosted deployment via Docker, including local model support to ensure data privacy and compliance.

RAGFlow: Advanced Retrieval-Augmented Generation Engine

RAGFlow is a robust engine for building AI assistants that deliver grounded, citation-backed responses drawn from your proprietary datasets. It runs on x86 CPUs and NVIDIA GPUs, with optional ARM support, and ships both full and lightweight Docker images for rapid setup. Once it is deployed locally, you can connect large language models through APIs or local runtimes such as Ollama to handle chat, embeddings, or image-to-text conversion. RAGFlow supports a wide array of popular LLMs, which can be configured per assistant or set as defaults.

Core functionalities include:

- Comprehensive knowledge base management: Upload and parse diverse file formats such as PDFs, Word documents, CSVs, images, and presentations into searchable datasets.

- Content chunking and refinement: Review and edit parsed data chunks, add keywords, and optimize content for improved retrieval accuracy.

- AI-powered chat assistants: Build conversational agents linked to one or multiple knowledge bases, with customizable fallback responses and prompt tuning.

- Explainability and quality assurance: Utilize built-in tools to monitor retrieval effectiveness, validate outputs, and display real-time citations.

- Extensive integration options: Access HTTP and Python APIs for seamless app integration, plus an optional sandbox environment for secure code execution within chats.
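Chunking is easiest to understand with a simplified example. The function below is a generic fixed-size chunker with overlapping word windows, not RAGFlow's own parsing logic; `chunk_words`, `chunk_size`, and `overlap` are illustrative names and values. The overlap means text near a chunk boundary is indexed in both neighboring chunks, which reduces the chance that a relevant passage is cut in half.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `chunk_size` words; consecutive windows share `overlap` words.

    Illustrative fixed-size chunker only, not RAGFlow's parser.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far each new window advances
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a window reaches the end of the text, so the final chunk
        # is not just a tail already contained in the previous one.
        if start + chunk_size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(12))
    for chunk in chunk_words(sample, chunk_size=5, overlap=2):
        print(chunk)
    # w0 w1 w2 w3 w4
    # w3 w4 w5 w6 w7
    # w6 w7 w8 w9 w10
    # w9 w10 w11
```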

Transformer Lab: Versatile Workspace for LLMs and Diffusion Models

Transformer Lab is a free, open-source environment designed to run large language models and diffusion-based image generators locally or in the cloud. Compatible with GPUs, TPUs, and Apple M-series Macs, it offers a unified platform to download, interact with, and evaluate LLMs, generate images, and compute embeddings.

Key capabilities include:

- Model management: Download and chat with a variety of LLMs, and run cutting-edge diffusion models for image synthesis.

- Data handling and training: Prepare datasets, fine-tune models, and apply advanced training techniques such as reinforcement learning from human feedback (RLHF) and preference tuning.

- Retrieval-augmented generation: Chat with your own documents so that responses are contextually accurate and grounded in their content.

- Embeddings and performance evaluation: Generate embeddings and benchmark model outputs across different inference engines.

- Community-driven extensibility: Develop plugins, contribute to the core project, and collaborate with an active Discord community.
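Retrieval-augmented chat and embeddings rest on the same idea: documents and queries are mapped to vectors, and the passages whose vectors are most similar to the query are handed to the model as context. The sketch below shows only that ranking step, using cosine similarity. The toy 3-dimensional vectors are hypothetical stand-ins for real embeddings, which would come from an embedding model, and `top_k` is an illustrative helper, not a Transformer Lab API.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], docs: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query, best first."""
    ranked = sorted(docs, key=lambda doc_id: cosine(query, docs[doc_id]), reverse=True)
    return ranked[:k]


# Hypothetical toy vectors standing in for real document embeddings.
docs = {
    "gpu_setup.md": [0.9, 0.1, 0.0],
    "billing_faq.md": [0.0, 0.2, 0.9],
    "cuda_errors.md": [0.8, 0.3, 0.1],
}
query = [1.0, 0.2, 0.0]  # e.g. an embedding of "How do I configure my GPU?"

if __name__ == "__main__":
    print(top_k(query, docs, k=2))  # ['gpu_setup.md', 'cuda_errors.md']
```

The retrieved passages would then be inserted into the prompt, which is what grounds the model's answer in your documents.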

LLaMA-Factory: Comprehensive No-Code Platform for Model Training and Fine-Tuning

LLaMA-Factory offers a powerful no-code interface for training and fine-tuning a broad spectrum of open-source large language and vision-language models. Supporting over 100 models, it facilitates multimodal fine-tuning, advanced optimization algorithms, and scalable resource management. Aimed at researchers and AI practitioners, it provides tools for pre-training, supervised fine-tuning, reward modeling, reinforcement learning with PPO, and preference optimization methods such as DPO, alongside streamlined experiment tracking and accelerated inference.
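DPO, mentioned above, is compact enough to write out. For a prompt with a preferred ("chosen") and a rejected response, it measures how much more likely the trained policy makes each response than a frozen reference model does, and applies a logistic loss to the difference, scaled by a temperature `beta`. The function below is a minimal sketch of that published objective for a single example, working from summed token log-probabilities; it is not LLaMA-Factory's trainer code, and the log-probability values used with it are made up.

```python
import math


def dpo_loss(
    policy_chosen: float,
    policy_rejected: float,
    ref_chosen: float,
    ref_rejected: float,
    beta: float = 0.1,
) -> float:
    """Per-example DPO loss from summed token log-probabilities of each response.

    loss = -log(sigmoid(beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))))
    """
    z = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
    # -log(sigmoid(z)) == log(1 + exp(-z)); branch on the sign of z for numerical stability.
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


if __name__ == "__main__":
    print(dpo_loss(-5.0, -5.0, -5.0, -5.0))      # policy == reference: log(2), about 0.693
    print(dpo_loss(-10.0, -15.0, -12.0, -13.0))  # policy favors the chosen response: lower loss
```

With `beta=0.1`, the second call has a log-ratio margin of 4, so the loss drops to roughly 0.51; reversing the preference pushes the loss above log 2.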

Highlights include:

- Extensive model compatibility: Supports LLaMA, Mistral, Qwen, DeepSeek, Gemma, ChatGLM, Phi, Yi, Mixtral-MoE, among others.

- Diverse training methodologies: Enables continuous pre-training, multimodal supervised fine-tuning, reward modeling, PPO, DPO, KTO, ORPO, and more.

- Flexible tuning strategies: Offers full-tuning, freeze-tuning, LoRA, QLoRA (2-8 bit), OFT, DoRA, and other resource-efficient approaches.

- Cutting-edge algorithms and optimizations: Incorporates GaLore, BAdam, APOLLO, Muon, FlashAttention-2, RoPE scaling, NEFTune, rsLoRA, and others.

- Multimodal task support: Handles dialogue systems, tool usage, image/video/audio understanding, visual grounding, and beyond.

- Monitoring and inference tools: Integrates with LlamaBoard, TensorBoard, Wandb, MLflow, SwanLab, and supports fast inference via OpenAI-style APIs, Gradio UI, or CLI with vLLM/SGLang workers.

- Broad infrastructure compatibility: Builds on PyTorch, Hugging Face Transformers, DeepSpeed, and bitsandbytes, and runs in both CPU and GPU environments with memory-efficient quantization.
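LoRA, the best known of the resource-efficient tuning strategies above, keeps a pretrained weight matrix W frozen and learns a low-rank update B·A, so an adapted layer computes W x + (alpha / r) · B A x. The pure-Python sketch below illustrates the technique in general rather than LLaMA-Factory's implementation; the matrix sizes, `alpha`, and the helper names are illustrative assumptions. It also shows where the savings come from: only A and B are trained.

```python
def matvec(matrix: list[list[float]], vector: list[float]) -> list[float]:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(
    W: list[list[float]],
    A: list[list[float]],
    B: list[list[float]],
    x: list[float],
    alpha: float,
    rank: int,
) -> list[float]:
    """Compute W x + (alpha / rank) * B (A x).

    W (d_out x d_in) stays frozen; only A (rank x d_in) and B (d_out x rank) would be trained.
    """
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))  # low-rank path: project down to `rank`, then back up
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full fine-tuning parameters, LoRA parameters) for one d_out x d_in matrix."""
    return d_out * d_in, rank * (d_out + d_in)


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]
    A = [[0.5, 0.5]]    # rank 1: A is 1 x 2
    B = [[0.0], [0.0]]  # LoRA initializes B to zero, so training starts from the base model
    print(lora_forward(W, A, B, [1.0, 2.0], alpha=2.0, rank=1))  # [1.0, 2.0]
    print(lora_param_counts(4096, 4096, rank=8))  # (16777216, 65536)
```

For a single 4096 x 4096 projection with rank 8, that is 65,536 trainable parameters instead of 16,777,216, about 0.4% of the full matrix. QLoRA keeps the same adapter structure but additionally stores the frozen base weights in quantized form to cut memory further.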

AutoAgent: Natural Language-Driven Automated Agent Framework

AutoAgent is a fully automated framework that enables the creation and deployment of LLM-powered agents using only natural language commands. Designed to simplify the construction of complex workflows, it allows users to build, customize, and operate intelligent assistants and tools without any coding.

Key advantages include:

- Exceptional performance: Achieves leading results on the GAIA benchmark, competing with sophisticated deep research agents.

- Intuitive agent and workflow design: Construct tools, agents, and workflows effortlessly through natural language prompts.

- Agentic-RAG with an integrated vector database: Includes a self-managing vector database that, according to its authors, outperforms retrieval pipelines built with frameworks such as LangChain.

- Wide-ranging LLM support: Works with leading model providers and serving backends, including OpenAI, Anthropic, DeepSeek, vLLM, Grok, Hugging Face, and more.

- Versatile interaction modes: Supports both function-calling and ReAct-style reasoning to accommodate diverse use cases.
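ReAct-style agents alternate between a reasoning step that chooses an action and an observation returned by a tool, looping until the model decides to finish. The sketch below shows only that control loop. It is not AutoAgent's code: the `Action: tool[input]` / `Action: Finish[answer]` text format is loosely modeled on the ReAct paper's convention, and the scripted `fake_llm` and the `calculator` tool are hypothetical stand-ins for a real model and real tools.

```python
import re
from typing import Callable

# Matches lines such as "Action: calculator[17 + 25]" or "Action: Finish[42]".
ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*)\]")


def react_loop(llm: Callable[[str], str], tools: dict[str, Callable[[str], str]],
               question: str, max_steps: int = 5) -> str:
    """Alternate model steps and tool observations until the model calls Finish."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)  # the model emits a Thought followed by an Action
        transcript += step + "\n"
        match = ACTION_RE.search(step)
        if match is None:
            raise ValueError(f"no action found in model output: {step!r}")
        name, arg = match.group(1), match.group(2)
        if name == "Finish":
            return arg
        observation = tools[name](arg)  # run the chosen tool
        transcript += f"Observation: {observation}\n"
    raise RuntimeError("agent did not finish within max_steps")


def calculator(expression: str) -> str:
    """Hypothetical tool: adds integers written as 'a + b + ...'."""
    return str(sum(int(part) for part in expression.split("+")))


def fake_llm(transcript: str) -> str:
    """Hypothetical scripted model: call the tool once, then finish with its result."""
    if "Observation:" not in transcript:
        return "Thought: I should add the numbers.\nAction: calculator[17 + 25]"
    last_observation = transcript.rstrip().splitlines()[-1].removeprefix("Observation: ")
    return f"Thought: The tool returned the sum.\nAction: Finish[{last_observation}]"


if __name__ == "__main__":
    print(react_loop(fake_llm, {"calculator": calculator}, "What is 17 + 25?"))  # 42
```

In function-calling mode, the model API returns structured tool calls instead of free text, so the regex parsing step is no longer needed; the surrounding loop stays the same.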

Lightweight and highly extensible, AutoAgent serves as a dynamic personal AI assistant that is easy to customize while maintaining resource efficiency.