SwiReasoning: An Innovative Framework for Adaptive Reasoning in Large Language Models

Adaptive Reasoning Through Entropy-Guided Latent and Explicit Thought

SwiReasoning is a decoding-time strategy that lets reasoning large language models (LLMs) alternate dynamically between latent-space thinking and explicit chain-of-thought (CoT) generation. It uses block-level confidence signals, derived from entropy trends in the next-token probability distribution, to decide when to pause token emission and deliberate internally and when to write out reasoning steps explicitly. SwiReasoning is model-agnostic and requires no additional training, so it can be applied to existing models to improve reasoning accuracy and efficiency on complex mathematics and STEM problem sets.

How SwiReasoning Operates During Inference

At the core of SwiReasoning is a controller that tracks the entropy of the model’s next-token predictions. When entropy rises, indicating uncertainty, the system enters a latent reasoning phase in which the model explores multiple reasoning paths internally without producing output tokens. When entropy falls, signaling increased confidence, the model switches back to explicit reasoning and generates CoT tokens to commit to a particular solution trajectory. A cap on the number of switches prevents excessive toggling and over-deliberation, keeping the reasoning process efficient and focused. This interplay between silent internal thought and explicit explanation is what drives SwiReasoning’s balance of accuracy and token economy.
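The switching rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the released implementation: the class name `SwitchController`, the mode labels, the choice to compare each step's entropy against the entropy recorded at the start of the current block, and the choice to force explicit mode once the cap is reached are all hypothetical. Only the idea of a switch-count cap (exposed in the real tool as `--max_switch_count`) comes from the article.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of a next-token probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


@dataclass
class SwitchController:
    """Hypothetical entropy-guided mode controller (illustrative only)."""
    max_switch_count: int = 4          # assumed default, not taken from the source
    mode: str = "explicit"             # "explicit" (emit CoT) or "latent" (silent)
    ref_entropy: Optional[float] = None
    switches: int = 0

    def step(self, probs: Sequence[float]) -> str:
        h = entropy(probs)
        if self.ref_entropy is None:
            # First step: record the reference entropy for the opening block.
            self.ref_entropy = h
            return self.mode
        if self.switches >= self.max_switch_count:
            # Cap reached: stop toggling and stay in explicit mode (an assumption).
            self.mode = "explicit"
            return self.mode
        rising = h > self.ref_entropy
        falling = h < self.ref_entropy
        if (self.mode == "explicit" and rising) or (self.mode == "latent" and falling):
            # Uncertainty rose -> think latently; confidence rose -> write explicitly.
            self.mode = "latent" if self.mode == "explicit" else "explicit"
            self.switches += 1
            self.ref_entropy = h       # new block starts with a new reference
        return self.mode


controller = SwitchController(max_switch_count=2)
steps = [[0.9, 0.1], [0.5, 0.5], [0.6, 0.4], [0.5, 0.5]]
print([controller.step(p) for p in steps])
```

In a real decoder the `probs` argument would come from the softmax over the model's logits at each step, and the latent phase would feed a soft embedding back into the model instead of a sampled token; both are omitted here to keep the control logic visible.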

Performance Highlights: Enhanced Accuracy and Token Efficiency

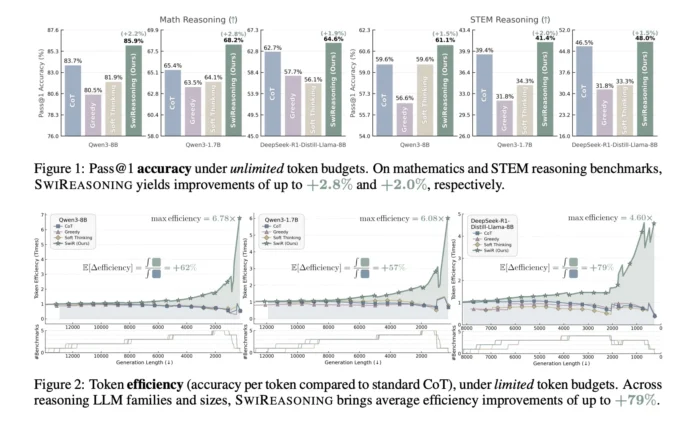

Extensive evaluations on standard mathematics and STEM benchmarks demonstrate SwiReasoning’s effectiveness:

- Accuracy Gains with Unlimited Tokens: SwiReasoning achieves an average accuracy improvement ranging from +1.5% to +2.8% over traditional CoT methods, with peak gains observed in advanced math challenges.

- Token Efficiency Under Budget Constraints: When operating with limited token budgets, SwiReasoning improves average token efficiency by 56% to 79%. It outperforms competing methods in 13 out of 15 benchmark scenarios, with a peak gain of +84% in token efficiency over standard CoT.

- Accelerated Convergence on AIME 2024/2025: Using the Qwen3-8B model, SwiReasoning reaches peak reasoning accuracy approximately 50% faster than conventional CoT, indicating more rapid convergence with fewer sampled reasoning paths.
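To make the token-efficiency figures above concrete, here is a small sketch of how a relative gain of this kind could be computed. It assumes token efficiency means accuracy divided by the average number of generated tokens, which the article implies ("accuracy per token") but does not define precisely. The function names and the input numbers are hypothetical and chosen only for illustration; they are not benchmark results.

```python
def token_efficiency(accuracy: float, avg_tokens: float) -> float:
    """Accuracy obtained per generated token (assumed definition)."""
    if avg_tokens <= 0:
        raise ValueError("avg_tokens must be positive")
    return accuracy / avg_tokens


def relative_gain(method_eff: float, baseline_eff: float) -> float:
    """Relative improvement of a method over a baseline, e.g. 0.6 means +60%."""
    return (method_eff - baseline_eff) / baseline_eff


# Hypothetical numbers: same accuracy, but the method uses fewer tokens.
baseline = token_efficiency(accuracy=0.60, avg_tokens=8000)
method = token_efficiency(accuracy=0.60, avg_tokens=5000)
print(f"relative token-efficiency gain: {relative_gain(method, baseline):+.0%}")
```

Under this definition a method can gain efficiency either by matching the baseline's accuracy with fewer tokens or by reaching higher accuracy at the same token cost.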

Why Alternating Between Latent and Explicit Reasoning Matters

Traditional explicit CoT approaches, while interpretable, often commit prematurely to a single reasoning path, potentially overlooking alternative solutions. On the other hand, purely latent reasoning methods, which operate without token emission, can suffer from diluted probability distributions that hinder decisive conclusions. SwiReasoning’s entropy-driven switching mechanism strikes a balance by:

- Exploring broadly during uncertain phases through latent reasoning, allowing the model to consider multiple hypotheses internally.

- Exploiting confidence surges by generating explicit CoT tokens only when the model’s certainty improves, thereby consolidating the reasoning path.

- Regulating oscillations with a capped switch count to avoid excessive silent deliberation and token wastage.

This strategy effectively mitigates the common pitfalls of both extremes, enhancing both accuracy and efficiency without requiring additional training.

Comparative Analysis Against Leading Baselines

SwiReasoning has been benchmarked against prominent decoding strategies including CoT with sampling, greedy CoT, and Soft Thinking. The results reveal:

- A consistent +2.17% average accuracy improvement at unlimited token budgets.

- Substantial shifts in the Pareto frontier, enabling either higher accuracy at fixed token costs or comparable accuracy with fewer tokens across diverse model architectures and sizes.

- On the AIME 2024/2025 datasets, SwiReasoning’s Pass@k curves demonstrate faster attainment of top performance levels with fewer samples, reflecting enhanced convergence dynamics rather than just improved final accuracy ceilings.
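For readers unfamiliar with Pass@k, the sketch below shows the unbiased estimator commonly used in the code-generation evaluation literature: given n sampled solutions of which c are correct, it estimates the probability that at least one of k randomly drawn samples is correct. The article does not state which estimator the SwiReasoning evaluation uses, so treat this as an illustration of the metric rather than a description of the authors' evaluation code; the function name `pass_at_k` is an assumption.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate Pass@k from n samples with c correct: 1 - C(n-c, k) / C(n, k)."""
    if not 0 <= c <= n:
        raise ValueError("c must satisfy 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    if n - c < k:
        # Fewer than k incorrect samples: every draw of k contains a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical counts: 10 samples, 3 of them correct.
for k in (1, 2, 5):
    print(k, round(pass_at_k(n=10, c=3, k=k), 4))
```

A Pass@k curve that rises faster means a method needs fewer samples to reach the same success rate, which is the convergence behavior the comparison above describes.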

Summary of Key Advantages

- Training-Free Confidence Controller: Utilizes next-token entropy trends to seamlessly toggle between latent and explicit reasoning modes.

- Significant Efficiency Boosts: Achieves average token-efficiency improvements of 56% to 79% under constrained decoding budgets, with a peak gain of +84% over standard CoT.

- Improved Accuracy: Delivers 1.5% to 2.8% accuracy gains on challenging math and STEM benchmarks without additional training.

- Faster Reasoning Convergence: Demonstrates earlier peak accuracy on competitive exams like AIME, reducing computational overhead.

Practical Implications and Future Directions

SwiReasoning represents a pragmatic advancement in decoding-time reasoning control, offering a lightweight, training-free mechanism that integrates smoothly with existing tokenizers and LLM architectures. Its open-source BSD-licensed implementation, complete with configurable parameters such as --max_switch_count and --alpha, facilitates easy adoption and experimentation. Moreover, SwiReasoning’s focus on maximizing accuracy per token rather than solely pushing raw accuracy benchmarks aligns well with real-world constraints like inference cost, latency, and batch processing efficiency.

Looking ahead, combining SwiReasoning with complementary efficiency techniques, such as model quantization, speculative decoding, and optimized key-value caching, could further enhance its practical utility. As LLM applications continue to expand in STEM education, scientific research, and technical problem-solving, adaptive reasoning frameworks like SwiReasoning are poised to play a critical role in balancing performance and resource consumption.