What if editing speech could be as straightforward as revising a line of text? StepFun AI has released Step-Audio-EditX, an open-source audio model built on a 3-billion-parameter large language model (LLM). The system turns expressive speech editing into a token-level operation, much like text editing, instead of a waveform-level signal processing task.

Enhancing Control in Text-to-Speech Systems

Conventional zero-shot text-to-speech (TTS) models typically replicate emotion, accent, style, and voice timbre directly from brief reference audio clips. While this approach can produce natural-sounding speech, it often lacks precise control. Text-based style prompts tend to be effective only within the model’s trained voice domain, and cloned voices frequently fail to accurately reflect the intended emotion or speaking style.

Previous research has attempted to isolate these speech attributes using additional encoders, adversarial training, or intricate model architectures. In contrast, Step-Audio-EditX adopts a more integrated representation, focusing on innovative data preparation and post-training objectives. The model gains control capabilities by training on numerous pairs and triplets where the textual content remains constant, but one speech attribute varies significantly.

Innovative Architecture: Dual Codebook Tokenizer and Compact Audio LLM

At the core of Step-Audio-EditX lies the Step-Audio dual codebook tokenizer, which converts speech into two interleaved token streams: a linguistic stream operating at 16.7 Hz with a 1024-entry codebook, and a semantic stream at 25 Hz with a 4096-entry codebook. This tokenizer preserves prosody and emotional nuances, maintaining a partially entangled representation rather than fully separating speech factors.
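The two token rates above imply a fixed ratio between the streams. The sketch below works that ratio out and shows one plausible way to interleave the streams chunk by chunk. It is a minimal illustration, not the released tokenizer: treating 16.7 Hz as exactly 50/3 Hz, the chunked layout, and the names `interleave_ratio` and `interleave` are all assumptions.

```python
from fractions import Fraction
from typing import List, Tuple

# Rates and codebook sizes quoted in the text. Treating 16.7 Hz as exactly
# 50/3 Hz is an assumption made so that the ratio comes out as whole numbers.
LINGUISTIC_HZ = Fraction(50, 3)
SEMANTIC_HZ = Fraction(25)
LINGUISTIC_VOCAB = 1024
SEMANTIC_VOCAB = 4096


def interleave_ratio() -> Tuple[int, int]:
    """Return (linguistic, semantic) token counts covering the same time span."""
    ratio = LINGUISTIC_HZ / SEMANTIC_HZ
    return ratio.numerator, ratio.denominator


def interleave(linguistic: List[int], semantic: List[int]) -> List[Tuple[str, int]]:
    """Interleave the two streams chunk by chunk (hypothetical layout)."""
    n_ling, n_sem = interleave_ratio()
    merged: List[Tuple[str, int]] = []
    i = j = 0
    while i < len(linguistic) or j < len(semantic):
        merged.extend(("ling", tok) for tok in linguistic[i:i + n_ling])
        merged.extend(("sem", tok) for tok in semantic[j:j + n_sem])
        i += n_ling
        j += n_sem
    return merged
```

Under these assumptions, every 2 linguistic tokens are followed by 3 semantic tokens, and each such group of 5 tokens covers 120 ms of audio.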

Building on this tokenizer, the StepFun team developed a 3-billion-parameter audio LLM. Initially derived from a text-based LLM, it is trained on a balanced dataset combining pure text and dual codebook audio tokens formatted as chat-style prompts. This design enables the model to process text tokens, audio tokens, or both, consistently generating dual codebook audio tokens as output.

Audio reconstruction is managed by a dedicated decoder. A diffusion transformer-based flow matching module predicts Mel spectrograms from audio tokens, reference audio, and speaker embeddings. These spectrograms are then converted into waveforms using the BigVGANv2 vocoder. The flow matching component benefits from training on approximately 200,000 hours of high-quality speech data, enhancing pronunciation accuracy and timbre fidelity.

Leveraging Large Margin Synthetic Data for Superior Control

The cornerstone of Step-Audio-EditX’s control mechanism is large margin learning. The model undergoes post-training on carefully curated triplets and quadruplets where the text remains unchanged, but a single speech attribute exhibits a pronounced difference.

For zero-shot TTS, the model is trained on an extensive proprietary dataset featuring around 60,000 speakers, primarily in Chinese and English, with smaller subsets in Cantonese and Sichuanese. This dataset captures a broad spectrum of intra- and inter-speaker variations in style and emotion.

To support emotion and speaking style editing, the team generates synthetic large margin triplets of the form (text, neutral audio, emotional or styled audio). Voice actors record clips of roughly 10 seconds for each emotion and style, and StepTTS zero-shot cloning then produces neutral and emotional renditions of the same text in the same voice. A margin scoring model, trained on a small human-labeled dataset, rates each pair on a scale from 1 to 10, and only pairs scoring above 6 are kept, so that the attribute difference in every retained triplet is clearly audible.
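The filtering step can be pictured as a simple threshold over scored triplets. The following is a minimal sketch under stated assumptions: `Triplet`, `filter_by_margin`, and `score_fn` are hypothetical names, and the scoring model is stood in for by any callable that returns a value between 1 and 10.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Triplet:
    text: str
    neutral_audio: str  # placeholder for an audio path or ID
    styled_audio: str   # emotional or styled rendition of the same text


def filter_by_margin(
    triplets: Iterable[Triplet],
    score_fn: Callable[[Triplet], float],
    threshold: float = 6.0,
) -> List[Triplet]:
    """Keep triplets whose margin score is strictly above the threshold.

    The strict comparison follows the wording "above 6" in the text;
    score_fn is a stand-in for the margin scoring model.
    """
    return [t for t in triplets if score_fn(t) > threshold]
```

A caller would pass the real scoring model as `score_fn`; the threshold is a parameter so the same helper can be reused with a different cutoff.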

Paralinguistic features, such as breathing, laughter, and filled pauses, are addressed through a semi-synthetic approach based on the NVSpeech dataset. Quadruplets are constructed where the target is the original NVSpeech audio and transcript, and the input is a cloned version with paralinguistic tags removed from the text. This method provides time-domain editing supervision without relying on margin scoring.
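The quadruplet construction can be sketched as follows. This is an illustration only: the bracketed tag format (for example `[Laughter]`), the `Quadruplet` layout, and the `clone_fn` callable standing in for zero-shot cloning are assumptions, not details confirmed by the text.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Assumed tag syntax: a bracketed label such as "[Laughter]" or "[Breathing]".
TAG_PATTERN = re.compile(r"\s*\[[A-Za-z-]+\]")


def strip_tags(text: str) -> str:
    """Remove paralinguistic tags and tidy up leftover whitespace."""
    without_tags = TAG_PATTERN.sub("", text)
    return re.sub(r"\s+", " ", without_tags).strip()


@dataclass(frozen=True)
class Quadruplet:
    input_text: str    # transcript with tags removed
    input_audio: str   # cloned audio synthesized from the untagged text
    target_text: str   # original tagged transcript
    target_audio: str  # original recording


def build_quadruplet(
    tagged_text: str,
    original_audio: str,
    clone_fn: Callable[[str, str], str],
) -> Quadruplet:
    """Pair a tag-free cloned input with the original tagged target."""
    plain_text = strip_tags(tagged_text)
    cloned_audio = clone_fn(plain_text, original_audio)
    return Quadruplet(plain_text, cloned_audio, tagged_text, original_audio)
```

Because the target is a real recording that contains the paralinguistic event, no margin score is needed: the difference between input and target is guaranteed by construction.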

Reinforcement learning data incorporates two preference sources: human annotators who rate 20 candidates per prompt on correctness, prosody, and naturalness (retaining pairs with a margin above 3), and a comprehension model that scores emotion and speaking style on a 1 to 10 scale (keeping pairs with margins above 8).
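Both preference sources reduce to the same operation: score the candidates for a prompt, then keep only (chosen, rejected) pairs whose score gap exceeds a margin. The sketch below shows that selection. The function name and the example scores are hypothetical; the margins of 3 and 8 come from the text.

```python
from itertools import combinations
from typing import List, Sequence, Tuple


def select_preference_pairs(
    scored: Sequence[Tuple[str, float]],
    min_margin: float,
) -> List[Tuple[str, str]]:
    """Return (chosen, rejected) pairs whose score gap is strictly above min_margin.

    `scored` holds (candidate_id, score) tuples for a single prompt.
    """
    pairs: List[Tuple[str, str]] = []
    for (cand_a, score_a), (cand_b, score_b) in combinations(scored, 2):
        if abs(score_a - score_b) > min_margin:
            if score_a > score_b:
                pairs.append((cand_a, cand_b))
            else:
                pairs.append((cand_b, cand_a))
    return pairs


# Illustrative scores only: human ratings with margin 3, model ratings with margin 8.
human_pairs = select_preference_pairs([("a", 5), ("b", 1), ("c", 3)], min_margin=3)
model_pairs = select_preference_pairs([("x", 10), ("y", 1), ("z", 2)], min_margin=8)
```

Requiring a wide gap discards ambiguous comparisons, which keeps the preference signal consistent with the large margin idea used for the supervised data.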

Refining the Model: Supervised Fine-Tuning and Reinforcement Learning

The post-training process unfolds in two phases: supervised fine-tuning (SFT) followed by Proximal Policy Optimization (PPO).

During supervised fine-tuning, system prompts unify zero-shot TTS and editing tasks within a chat-based framework. For TTS, the prompt waveform is encoded into dual codebook tokens, converted into string format, and embedded as speaker information. The user input contains the target text, and the model generates corresponding audio tokens. For editing tasks, the user message includes the original audio tokens alongside natural language instructions, with the model producing the edited audio tokens.
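To make the chat framing concrete, here is a rough sketch of how the two task types might be laid out as messages. Everything about the format is hypothetical: the `<audio_N>` token spelling, the system prompt wording, and the helper names are illustrative assumptions, not the prompt template used by the model.

```python
from typing import Dict, List


def tokens_to_string(tokens: List[int]) -> str:
    """Render audio token IDs as text (hypothetical "<audio_N>" spelling)."""
    return "".join(f"<audio_{tok}>" for tok in tokens)


def build_tts_messages(prompt_tokens: List[int], target_text: str) -> List[Dict[str, str]]:
    """Zero-shot TTS: speaker information in the system prompt, text in the user turn."""
    return [
        {"role": "system", "content": "Speaker reference: " + tokens_to_string(prompt_tokens)},
        {"role": "user", "content": target_text},
    ]


def build_edit_messages(source_tokens: List[int], instruction: str) -> List[Dict[str, str]]:
    """Editing: the user turn carries the instruction plus the original audio tokens."""
    return [
        {"role": "system", "content": "Edit the audio according to the instruction."},
        {"role": "user", "content": instruction + "\n" + tokens_to_string(source_tokens)},
    ]
```

In both cases the assistant turn would consist of dual codebook audio tokens, which is what lets a single model serve TTS and editing.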

Reinforcement learning further enhances the model’s ability to follow instructions. A 3-billion-parameter reward model, initialized from the SFT checkpoint, is trained using Bradley-Terry loss on large margin preference pairs. This reward is computed directly on token sequences without decoding to waveforms. PPO training balances output quality and adherence to the SFT policy through clip thresholds and KL divergence penalties.
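The two objectives named above have standard textbook forms, sketched below for a single preference pair and a single token. This is a simplified illustration, not the training code: the hyperparameter values `clip_eps` and `kl_coef` are placeholders, and folding the KL term directly into the per-token objective (using a log-ratio estimate) is one common variant chosen here as an assumption.

```python
import math


def bradley_terry_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Negative log-likelihood that the chosen sample beats the rejected one.

    Equals -log(sigmoid(reward_chosen - reward_rejected)), computed stably.
    """
    diff = reward_chosen - reward_rejected
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))


def ppo_token_objective(
    logp_new: float,
    logp_old: float,
    logp_sft: float,
    advantage: float,
    clip_eps: float = 0.2,   # placeholder value
    kl_coef: float = 0.05,   # placeholder value
) -> float:
    """Clipped PPO surrogate minus a KL penalty toward the SFT policy (to maximize)."""
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    surrogate = min(ratio * advantage, clipped_ratio * advantage)
    kl_estimate = logp_new - logp_sft  # single-sample log-ratio estimate
    return surrogate - kl_coef * kl_estimate
```

The loss shrinks as the reward model separates chosen from rejected samples more widely, while the clip and KL terms limit how far each PPO update can move the policy from its starting point.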

Step-Audio-Edit-Test: Benchmarking Iterative Editing and Generalization

To assess control capabilities, the researchers introduced Step-Audio-Edit-Test, which uses Gemini 2.5 Pro as an LLM-based evaluator for emotion, speaking style, and paralinguistic accuracy. The benchmark features eight speakers sourced from the WenetSpeech4TTS, GLOBE V2, and Libri-Light datasets, four for each of the two languages, Chinese and English.

The emotion category includes five classes with 50 prompts each in Chinese and English. The speaking style set comprises seven styles with 50 prompts per language per style. Paralinguistic evaluation covers ten labels such as breathing, laughter, surprise exclamations, and filler words, with 50 prompts per label and language.
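The benchmark size follows directly from these figures. The short calculation below assumes that "50 prompts" applies per class and per language in all three categories; the variable names are illustrative.

```python
LANGUAGES = ("zh", "en")
PROMPTS_PER_CLASS_PER_LANGUAGE = 50

# Number of classes per category, as listed in the text.
CLASSES = {"emotion": 5, "speaking_style": 7, "paralinguistic": 10}


def prompt_counts() -> dict:
    """Total prompts per category across both languages."""
    return {
        name: n_classes * PROMPTS_PER_CLASS_PER_LANGUAGE * len(LANGUAGES)
        for name, n_classes in CLASSES.items()
    }


counts = prompt_counts()
total = sum(counts.values())
```

Under that reading, the emotion set has 500 prompts, the speaking style set 700, and the paralinguistic set 1,000, for 2,200 prompts overall.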

Editing performance is measured across multiple iterations. Starting from iteration zero (the initial zero-shot clone), the model performs three rounds of editing guided by text instructions. In Chinese, emotion accuracy improves from 57.0% at iteration zero to 77.7% by iteration three, while speaking style accuracy rises from 41.6% to 69.2%. English results show similar trends. An ablation that keeps the prompt fixed across iterations still shows accuracy gains, which supports the effectiveness of large margin learning.
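The evaluation protocol amounts to a short loop: clone once, then feed each output back in as the input to the next edit. The sketch below shows that loop along with the reported Chinese gains in percentage points. `clone_fn` and `edit_fn` are hypothetical callables standing in for the model's cloning and editing calls.

```python
from typing import Callable, List


def iterative_edit(
    clone_fn: Callable[[str, str], str],
    edit_fn: Callable[[str, str], str],
    reference_audio: str,
    text: str,
    instruction: str,
    rounds: int = 3,
) -> List[str]:
    """Return outputs for iteration 0 (clone) through iteration `rounds` (edits)."""
    outputs = [clone_fn(reference_audio, text)]
    for _ in range(rounds):
        # Each round edits the previous round's output with the same instruction.
        outputs.append(edit_fn(outputs[-1], instruction))
    return outputs


# Accuracy gains for Chinese, in percentage points, from the figures in the text.
emotion_gain = round(77.7 - 57.0, 1)
style_gain = round(69.2 - 41.6, 1)
```

With the reported numbers, three editing rounds add 20.7 points of emotion accuracy and 27.6 points of speaking style accuracy over the zero-shot clone.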

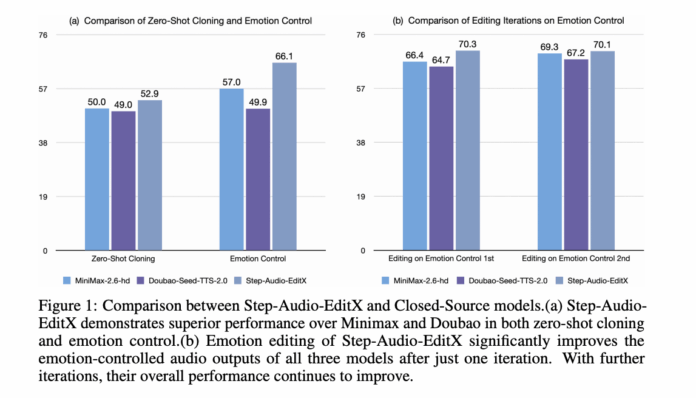

Moreover, Step-Audio-EditX’s editing capabilities extend to enhancing outputs from four closed-source TTS systems: GPT-4o mini TTS, ElevenLabs v2, Doubao Seed TTS 2.0, and MiniMax Speech 2.6 HD. A single editing iteration improves emotion and style accuracy across all systems, with further iterations yielding additional benefits.

Paralinguistic editing is rated on a 1 to 3 scale, with average scores increasing from 1.91 at iteration zero to 2.89 after one edit in both Chinese and English. These results are comparable to the paralinguistic synthesis quality found in leading commercial TTS solutions.

Summary of Innovations and Impact

- Step-Audio-EditX employs a dual codebook tokenizer combined with a 3-billion-parameter audio LLM, enabling speech to be treated as discrete tokens and edited similarly to text.

- Instead of complex disentangling encoders, the model leverages large margin synthetic datasets to control emotion, speaking style, paralinguistic elements, speech rate, and noise.

- Supervised fine-tuning paired with PPO and a token-level reward model aligns the audio LLM to accurately follow natural language editing commands for both TTS and audio editing tasks.

- The Step-Audio-Edit-Test benchmark, utilizing Gemini 2.5 Pro as an evaluator, demonstrates significant improvements in emotion, style, and paralinguistic control over multiple editing iterations in both Chinese and English.

- Step-Audio-EditX can effectively post-process and enhance speech generated by proprietary TTS systems, and the entire framework, including code and model checkpoints, is available as open source for developers.

Final Thoughts

Step-Audio-EditX marks a significant advancement in controllable speech synthesis by integrating a dual codebook tokenizer with a compact yet powerful 3-billion-parameter audio LLM. Its innovative use of large margin synthetic data and reinforcement learning techniques enables precise and iterative audio editing that closely mirrors text editing workflows. The introduction of a robust evaluation benchmark with Gemini 2.5 Pro as a judge provides transparent and quantifiable metrics for emotion, style, and paralinguistic control. By releasing the full system as open source, StepFun AI is lowering barriers for researchers and developers, bringing us closer to seamless, user-friendly speech editing.