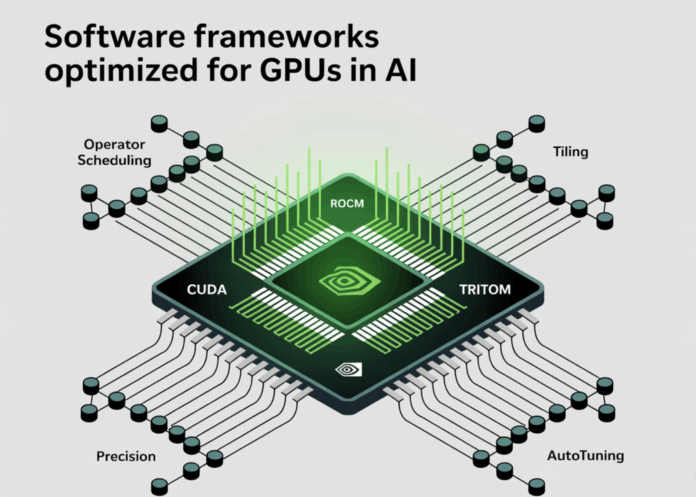

Deep learning throughput depends heavily on how well a compiler stack translates tensor computations into efficient GPU execution. This involves thread and block scheduling, memory management, and instruction selection, such as targeting Tensor Core MMA pipelines. This article examines four leading compiler stacks (CUDA, ROCm, Triton, and TensorRT), analyzing their optimization techniques and practical impact on performance.

Key Factors Influencing GPU Performance Today

Common optimization strategies across platforms include:

- Operator Fusion and Scheduling: Minimizing kernel invocations and reducing data transfers to high-bandwidth memory (HBM) by fusing operations and extending producer-consumer chains to maximize register and shared memory reuse. For example, TensorRT and cuDNN implement runtime fusion engines that optimize attention mechanisms and convolutional blocks.

- Data Tiling and Layout Optimization: Designing tile dimensions that align with native fragment sizes of Tensor Cores or equivalent units (e.g., WGMMA/WMMA), while avoiding shared memory bank conflicts and partition camping. NVIDIA’s CUTLASS library provides detailed warp-level GEMM tiling strategies for both Tensor Cores and CUDA cores.

- Precision and Quantization Techniques: Utilizing lower-precision formats such as FP16, BF16, and FP8 for training and inference, and INT8 or INT4 (via calibration or quantization-aware training) for inference. TensorRT automates calibration and kernel selection to optimize performance under these precisions.

- Graph Capture and Runtime Specialization: Employing graph execution to reduce kernel launch overheads and dynamically fusing common subgraphs like attention layers. cuDNN 9 introduced graph support to enhance attention fusion engines, improving memory locality and execution efficiency.

- Autotuning: Automatically searching for optimal tile sizes, loop unroll factors, and pipelining depths tailored to specific architectures or SKUs. Triton and CUTLASS provide explicit autotuning interfaces, while TensorRT performs tactic selection during engine building.
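The autotuning idea in the last item can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the interface of Triton, CUTLASS, or TensorRT: the names `search_space`, `time_call`, `autotune`, and `toy_cost` are hypothetical, and the cost function is a stand-in for real on-device timing.

```python
import itertools
import time


def search_space(block_m, block_n, num_stages):
    """Cartesian product of candidate tile sizes and pipeline depths."""
    return [
        {"block_m": m, "block_n": n, "num_stages": s}
        for m, n, s in itertools.product(block_m, block_n, num_stages)
    ]


def time_call(fn, config, reps=5):
    """Median wall-clock seconds of fn(**config) over `reps` runs after one warm-up."""
    fn(**config)  # warm-up run, excluded from the samples
    samples = []
    for _ in range(reps):
        start = time.perf_counter()
        fn(**config)
        samples.append(time.perf_counter() - start)
    samples.sort()
    return samples[len(samples) // 2]


def autotune(configs, cost):
    """Return the config with the lowest cost; ties go to the earliest candidate."""
    return min(configs, key=cost)


def toy_cost(cfg):
    """Hypothetical stand-in for a measurement: pretends 128x64 tiles with 3 stages are best."""
    return (
        abs(cfg["block_m"] - 128)
        + abs(cfg["block_n"] - 64)
        + abs(cfg["num_stages"] - 3)
    )


configs = search_space([64, 128, 256], [32, 64], [2, 3, 4])
best = autotune(configs, toy_cost)
```

In practice the cost would come from timing the real kernel, for example `autotune(configs, lambda cfg: time_call(kernel, cfg))`, and a GPU kernel would need a device synchronization inside the timed region for the measurement to mean anything.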

With these principles in mind, we explore how each compiler stack applies these optimizations.

CUDA Ecosystem: nvcc, ptxas, cuDNN, CUTLASS, and CUDA Graphs

Compilation Workflow. CUDA source code is compiled by nvcc into the PTX intermediate representation, which ptxas then translates into architecture-specific machine code (SASS). Effective optimization requires passing appropriate flags to both the host and device compilation stages: options forwarded with -Xptxas control device-side code generation in ptxas, while nvcc's -O3 sets the optimization level for host code only.
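To make the host/device split concrete, the sketch below assembles an nvcc command line in Python, for example to hand to a build script. The helper name `nvcc_command`, the file names, and the `sm_90` target are assumptions chosen for illustration; the flags themselves (`-O3`, `-arch`, `-Xptxas`) are standard nvcc options.

```python
import shlex


def nvcc_command(source, output, arch="sm_90", ptxas_opt=3, verbose=True):
    """Build an nvcc argument list with explicit host and device optimization flags."""
    ptxas_flags = [f"-O{ptxas_opt}"]
    if verbose:
        ptxas_flags.append("-v")  # ptxas reports per-kernel register and shared-memory usage
    return [
        "nvcc",
        "-O3",                              # host-side optimization level
        f"-arch={arch}",                    # assumed target; pick the one matching your GPU
        "-Xptxas", ",".join(ptxas_flags),   # comma-separated options forwarded to ptxas
        source,
        "-o", output,
    ]


cmd = nvcc_command("kernel.cu", "kernel")
print(shlex.join(cmd))
# prints: nvcc -O3 -arch=sm_90 -Xptxas -O3,-v kernel.cu -o kernel
```

Passing `ptxas_opt=0, verbose=False` yields the debugging variant with `-Xptxas -O0`.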

Kernel Libraries and Generation.

- CUTLASS offers highly parameterized templates for GEMM and convolution operations, implementing warp-level tiling, Tensor Core MMA pipelines, and shared memory iterators designed to avoid bank conflicts. It serves as a canonical reference for writing high-performance kernels, including support for Hopper’s WGMMA instructions.

- cuDNN 9 introduced runtime fusion engines, particularly for attention mechanisms, integrated CUDA Graph support to reduce dispatch overhead, and updates for new compute capabilities, significantly enhancing Transformer workload efficiency.
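Why graph launch reduces dispatch overhead can be seen with a back-of-the-envelope model. The sketch below is a simplification with made-up numbers, not a measurement: it assumes CPU submission and GPU execution overlap for stream launches, so the slower side bounds the step, whereas a captured graph pays one launch cost for the whole sequence. The function name and all timings are hypothetical.

```python
def step_time_us(num_kernels, kernel_us, launch_us, graph_launch_us=None):
    """Estimated time for one step of `num_kernels` equally sized kernels, in microseconds."""
    gpu_busy = num_kernels * kernel_us
    if graph_launch_us is None:
        # Individual stream launches: CPU submission and GPU execution overlap,
        # so the step is bounded by whichever side is slower.
        return max(num_kernels * launch_us, gpu_busy)
    # Graph replay: a single launch cost, then the GPU runs back to back.
    return graph_launch_us + gpu_busy


# Illustrative numbers only: 200 tiny kernels of 2 us each, 5 us per launch.
eager = step_time_us(200, kernel_us=2, launch_us=5)
graphed = step_time_us(200, kernel_us=2, launch_us=5, graph_launch_us=10)
```

With these assumed numbers the eager path is launch-bound (1000 µs against 400 µs of GPU work), while graph replay takes about 410 µs. When kernels are long relative to launch cost, the two estimates converge and graphs matter much less.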

Performance Insights.

- Transitioning from unfused PyTorch operations to cuDNN’s attention fusion reduces kernel launches and global memory traffic. When combined with CUDA Graphs, this approach alleviates CPU bottlenecks during short-sequence inference.

- On Hopper and Blackwell architectures, aligning tile sizes with WGMMA and Tensor Core native fragments is critical; CUTLASS tutorials demonstrate how improper tile sizing can severely degrade tensor core utilization.
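The cost of a poorly chosen tile can be estimated with simple arithmetic. The sketch below is a rough model, not CUTLASS code: it assumes each GEMM dimension is padded up to a whole number of tiles and that padded work is wasted. The 16x16x16 fragment used in the example is one of the WMMA shapes for FP16; WGMMA shapes differ, so the fragment is a parameter. Function names are hypothetical.

```python
import math


def tile_utilization(m, n, k, tile_m, tile_n, tile_k):
    """Fraction of issued multiply-accumulates that are useful after padding to whole tiles."""
    padded = (
        math.ceil(m / tile_m) * tile_m
        * math.ceil(n / tile_n) * tile_n
        * math.ceil(k / tile_k) * tile_k
    )
    return (m * n * k) / padded


def is_fragment_aligned(tile, fragment):
    """True when every tile dimension is a whole multiple of the hardware fragment."""
    return all(t % f == 0 for t, f in zip(tile, fragment))


fragment = (16, 16, 16)  # assumed FP16 WMMA fragment shape
aligned = is_fragment_aligned((128, 128, 32), fragment)
misaligned = is_fragment_aligned((100, 128, 32), fragment)
util_exact = tile_utilization(4096, 4096, 4096, 128, 128, 32)
util_ragged = tile_utilization(1000, 1000, 1000, 128, 128, 32)
```

For the 1000-cubed problem, every dimension pads to 1024, so roughly 7% of the issued math is spent on padding under this model. Real kernels also lose efficiency to partial waves and occupancy effects that this estimate ignores.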

Ideal Use Cases for CUDA. When fine-grained control over instruction selection, occupancy, and shared memory management is required, or when extending kernels beyond existing libraries on NVIDIA GPUs, CUDA remains the preferred choice.

ROCm Platform: HIP/Clang Toolchain, rocBLAS, MIOpen, and Version 6.x Enhancements

Compilation Process. ROCm compiles HIP code (a CUDA-like programming model) with Clang/LLVM into AMD’s GCN, CDNA, or RDNA instruction sets. The 6.x releases have emphasized performance improvements and expanded framework support, with detailed release notes documenting component-level optimizations and hardware compatibility.

Library Ecosystem.

- rocBLAS and MIOpen provide GEMM and convolution primitives with architecture-aware tiling and algorithm selection, paralleling the design philosophy of cuBLAS and cuDNN. Continuous performance tuning is evident across recent versions.

- Recent developments include enhanced Triton support on AMD GPUs, enabling Python-level kernel development that compiles through LLVM to AMD hardware backends.

Performance Considerations.

- On AMD GPUs, aligning local data share (LDS) bank widths and vectorized global memory accesses with matrix tile shapes is as crucial as shared memory bank alignment on NVIDIA. Compiler-assisted fusion in frameworks and autotuning in rocBLAS/MIOpen significantly narrow the performance gap with hand-optimized kernels, depending on architecture and driver versions. ROCm 6.0 to 6.4.x releases show steady autotuner enhancements.
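Bank alignment is easy to reason about with a small model. The sketch below assumes 32 banks, each one 4-byte word wide, and 32 threads each reading word `t * stride`. These are common textbook figures rather than a statement about any specific GPU, so both are parameters, and the function name is hypothetical.

```python
from collections import Counter


def conflict_degree(stride_words, num_threads=32, num_banks=32):
    """Worst-case number of distinct addresses mapping to one bank.

    Thread t reads word t * stride_words. A result of 1 means conflict-free;
    a result of N means the access is serialized into N passes.
    """
    # A set, because threads reading the same address are served by one broadcast.
    addresses = {t * stride_words for t in range(num_threads)}
    per_bank = Counter(addr % num_banks for addr in addresses)
    return max(per_bank.values())


row_access = conflict_degree(1)      # consecutive words
column_access = conflict_degree(32)  # walking a column of a 32-word-wide tile
padded_column = conflict_degree(33)  # same column after padding each row by one word
```

Under these assumptions, reading down a column of a 32-wide tile hits a single bank 32 times, and padding each row to 33 words spreads the same accesses across all banks. The same reasoning applies to LDS on AMD and shared memory on NVIDIA once the bank count and width are set for the target hardware.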

When to Choose ROCm. ROCm is the natural choice for native AMD GPU support, offering HIP portability from CUDA-style kernels and a robust LLVM-based toolchain.

Triton: A Domain-Specific Language and Compiler for Custom GPU Kernels

Compilation Approach. Triton is a Python-embedded DSL that compiles through LLVM, managing vectorization, memory coalescing, and register allocation while allowing explicit control over block sizes and program IDs. Documentation highlights LLVM dependencies and custom build options. NVIDIA’s developer resources discuss Triton’s tuning for recent architectures like Blackwell, including FP16 and FP8 GEMM enhancements.

Optimization Features.

- Autotuning capabilities explore tile dimensions, warp counts, and pipelining stages; static masking handles boundary conditions without resorting to scalar fallbacks; shared memory staging and software pipelining overlap global memory loads with computation.

- Triton’s design philosophy automates complex CUDA-level optimizations while leaving block-level tiling decisions to the developer, streamlining kernel development.
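The block-level programming model and boundary masking can be emulated in plain Python. This is not Triton code and will not run on a GPU: it mirrors the structure of a Triton vector-add kernel, where the real counterparts would be `tl.program_id`, `tl.arange`, and the `mask` argument of `tl.load` and `tl.store`. The function names here are hypothetical.

```python
def vector_add_program(pid, x, y, out, n, block_size):
    """One program instance: handles `block_size` contiguous elements, masking lanes past n."""
    offsets = [pid * block_size + lane for lane in range(block_size)]
    mask = [off < n for off in offsets]  # False for lanes beyond the end of the data
    for off, active in zip(offsets, mask):
        if active:  # masked lanes neither load nor store
            out[off] = x[off] + y[off]


def launch(x, y, block_size):
    """Run enough program instances to cover every element of x and y."""
    n = len(x)
    out = [0.0] * n
    grid = (n + block_size - 1) // block_size  # ceiling division
    for pid in range(grid):  # on a GPU these instances run in parallel
        vector_add_program(pid, x, y, out, n, block_size)
    return out


result = launch(list(range(10)), [10] * 10, block_size=4)
```

With 10 elements and a block size of 4, the grid has three program instances and the last one masks off two lanes, so no separate scalar tail loop is needed.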

Performance Benefits.

- Triton excels when creating fused, shape-specialized kernels beyond standard library coverage, such as custom attention variants or normalization-activation-matmul sequences. Collaborations with hardware vendors have yielded architecture-specific backend improvements, narrowing the performance gap with CUTLASS-style kernels on modern NVIDIA GPUs.

Best Use Cases for Triton. Triton suits teams seeking near-CUDA performance for custom fused operations without writing low-level assembly or WMMA code, and who value Python-first development with autotuning support.

TensorRT and TensorRT-LLM: Graph-Level Optimization for Inference

Compilation Workflow. TensorRT consumes ONNX or framework graphs and generates hardware-optimized inference engines. During engine building, it performs layer and tensor fusion, precision calibration (supporting INT8, FP8, FP16), and kernel tactic selection. TensorRT-LLM extends these capabilities with optimizations tailored for large language models.

Optimization Techniques.

- Graph-Level Optimizations: constant folding, canonicalization of concat and slice operations, fusion of convolution, bias, and activation layers, and attention fusion.

- Precision Handling: post-training calibration methods (entropy, percentile, MSE), per-tensor quantization, and advanced workflows like smooth quantization and quantization-aware training in TensorRT-LLM.

- Runtime Enhancements: paged key-value caching, in-flight batching, and scheduling strategies for multi-stream and multi-GPU deployments.
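The precision-handling item can be illustrated with a minimal per-tensor symmetric INT8 quantizer using percentile calibration. This is a sketch of the general technique, not TensorRT's calibrator or its API; the function names and the sample values are hypothetical.

```python
import math


def calibrate_scale(values, percentile=100):
    """Per-tensor symmetric scale: map the chosen percentile of |x| to 127."""
    mags = sorted(abs(v) for v in values)
    # Nearest-rank percentile; 100 reduces to plain max calibration.
    rank = max(1, math.ceil(percentile * len(mags) / 100))
    amax = mags[rank - 1]
    return amax / 127.0 if amax > 0 else 1.0


def quantize(values, scale):
    """Round to the nearest integer step and clip to the symmetric INT8 range."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def dequantize(quantized, scale):
    """Map integer codes back to approximate real values."""
    return [q * scale for q in quantized]


# Hypothetical activations with one large outlier.
activations = [0.5, -1.0, 1.5, -2.0, 2.5, -3.0, 3.5, -4.0, 12.7, 1000.0]
scale_max = calibrate_scale(activations)                 # dominated by the outlier
scale_p90 = calibrate_scale(activations, percentile=90)  # ignores the outlier
codes = quantize(activations, scale_p90)
```

Max calibration lets the single outlier stretch the scale so that most values collapse into a few codes, while the 90th-percentile scale keeps resolution for the bulk of the data and clips the outlier to 127. Entropy and MSE calibration choose the clipping point by different criteria but follow the same quantize-and-clip pattern.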

Performance Impact.

- Significant gains arise from comprehensive INT8 or FP8 quantization (on Hopper/Blackwell architectures), eliminating framework overhead by consolidating execution into a single engine, and aggressive fusion of attention layers. TensorRT’s builder generates architecture-specific execution plans to avoid generic kernels at runtime.
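How fusion and quantization compound can be shown with a simple memory-traffic estimate. The sketch below assumes a memory-bound chain of elementwise operations over one tensor, where each unfused operation reads and writes the full tensor once. The function name and the tensor size are made up for illustration.

```python
def hbm_bytes(num_elements, bytes_per_element, num_ops, fused):
    """Bytes read plus written for a chain of elementwise ops over one tensor."""
    passes = 1 if fused else num_ops  # a fused kernel touches memory only once
    return 2 * passes * num_elements * bytes_per_element  # one read and one write per pass


n = 1 << 20  # hypothetical tensor of about one million elements
unfused_fp16 = hbm_bytes(n, 2, num_ops=3, fused=False)
fused_fp16 = hbm_bytes(n, 2, num_ops=3, fused=True)
fused_int8 = hbm_bytes(n, 1, num_ops=3, fused=True)
```

Under these assumptions, fusing three operations cuts traffic by 3x, and dropping from 2-byte to 1-byte elements halves it again, for 6x in total. Real speedups depend on whether the workload is actually memory-bound and on which tensors can be stored at the lower precision.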

When TensorRT Fits Best. Choose TensorRT for production-grade inference on NVIDIA GPUs, where pre-compiling optimized engines and applying quantization and graph fusion yield substantial performance improvements.

Practical Recommendations for Selecting and Optimizing Compiler Stacks

- Distinguish Between Training and Inference Workloads.

  - For training and experimental kernel development, use CUDA with CUTLASS on NVIDIA or ROCm with rocBLAS/MIOpen on AMD; employ Triton for custom fused kernels.

  - For production inference on NVIDIA hardware, TensorRT and TensorRT-LLM provide comprehensive graph-level optimizations.

- Leverage Architecture-Specific Instructions.

  - On NVIDIA Hopper and Blackwell GPUs, ensure tile sizes align with WGMMA and WMMA native fragments; CUTLASS documentation offers detailed guidance on warp-level GEMM and shared memory iterator design.

  - On AMD platforms, align LDS usage and vector widths with compute unit datapaths; utilize ROCm 6.x autotuners and Triton-on-ROCm for specialized kernel shapes.

- Prioritize Fusion Before Quantization.

  - Fusing kernels and graphs reduces memory bandwidth demands, while quantization decreases data size and increases computational density. TensorRT’s builder-time fusion combined with INT8 or FP8 quantization often results in multiplicative performance gains.

- Utilize Graph Execution for Short Sequence Inference.

  - Integrating CUDA Graphs with cuDNN attention fusion amortizes kernel launch overheads, enhancing autoregressive inference throughput.

- Treat Compiler Flags as Critical Tuning Parameters.

  - For CUDA, always specify device-side optimization flags such as `-Xptxas -O3,-v` and use `-Xptxas -O0` for debugging; relying solely on host-side `-O3` is insufficient for device code optimization.
