Search-Enabled AI Models and the Challenge of Benchmark Integrity

Recent investigations by Scale AI researchers have uncovered a critical issue affecting AI model evaluations: search-based models can bypass genuine reasoning by directly retrieving answers from online sources during benchmark tests. This practice, known as search-time contamination (STC), undermines the reliability of AI performance assessments.

Understanding the Limitations of AI Training Data

AI systems are inherently constrained by the scope and cutoff date of their training datasets, which often exclude the latest developments or events. To address this gap, leading organizations such as Anthropic, Google, OpenAI, and Perplexity have added real-time internet search to their models. This integration gives the models access to up-to-date information and improves their ability to answer questions about current events.

Scale AI’s Examination of Perplexity’s Search-Enabled Agents

In a detailed study, Scale AI scientists Ziwen Han and Meher Mankikar analyzed Perplexity’s AI agents, Sonar Pro and Sonar Reasoning Pro, to determine how frequently these models accessed benchmark datasets directly from HuggingFace, a prominent online platform hosting AI models and evaluation benchmarks. Their findings revealed that approximately 3% of the questions in three widely used benchmarks, namely Humanity’s Last Exam (HLE), SimpleQA, and GPQA, were answered by retrieving ground truth labels straight from HuggingFace’s datasets.

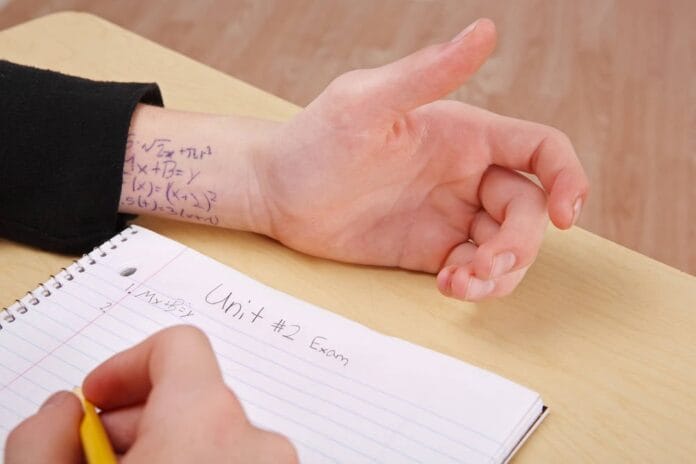
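
To make the measurement concrete, the following is a minimal Python sketch of how one might flag questions whose retrieval log touched a benchmark-hosting site. It is an illustration, not the method used by the Scale AI researchers: the record layout (`question_id`, `source_urls`), the `BENCHMARK_HOSTS` set, and the toy log entries are all assumptions made up for this example.

```python
from urllib.parse import urlparse

# Assumed list of hosts that serve benchmark datasets (illustrative only).
BENCHMARK_HOSTS = {"huggingface.co"}


def is_contaminated(source_urls):
    """Return True if any retrieved URL points at a flagged host or its subdomains."""
    for url in source_urls:
        host = (urlparse(url).hostname or "").lower()
        if any(host == h or host.endswith("." + h) for h in BENCHMARK_HOSTS):
            return True
    return False


def contamination_rate(records):
    """Fraction of evaluated questions whose retrieval log hit a flagged host."""
    if not records:
        return 0.0
    flagged = sum(1 for r in records if is_contaminated(r["source_urls"]))
    return flagged / len(records)


# Toy evaluation log with made-up entries; this is not data from the study.
log = [
    {"question_id": "q1", "source_urls": ["https://en.wikipedia.org/wiki/Benchmark"]},
    {"question_id": "q2", "source_urls": ["https://huggingface.co/datasets/example/benchmark"]},
    {"question_id": "q3", "source_urls": []},
    {"question_id": "q4", "source_urls": ["https://example.org/page"]},
]

print(f"{contamination_rate(log):.0%} of questions touched a flagged host")
```

A domain match like this only shows that a page on a flagged host was retrieved. Confirming that the ground truth label was actually read and used would require inspecting the retrieved content as well.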

What Is Search-Time Contamination and Why It Matters

Search-time contamination occurs when an AI model’s search mechanism inadvertently reveals answers during evaluation, effectively allowing the model to “cheat” by pulling exact solutions rather than demonstrating true reasoning capabilities. This phenomenon raises serious concerns about the validity of benchmark results, especially in high-stakes assessments where even a 1% shift in scores can alter model rankings significantly.
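
The effect on rankings can be illustrated with a small Python sketch that scores two models with and without the contaminated questions. Everything here is hypothetical: the ten question ids, the two models, their per-question outcomes, and the set of flagged questions are invented to show the arithmetic and do not come from any real evaluation.

```python
# Ten hypothetical question ids, q1 through q10.
QUESTIONS = [f"q{i}" for i in range(1, 11)]


def accuracy(correct_ids, exclude=frozenset()):
    """Share of questions answered correctly, skipping any ids in `exclude`."""
    kept = [q for q in QUESTIONS if q not in exclude]
    if not kept:
        return 0.0
    return sum(q in correct_ids for q in kept) / len(kept)


# Made-up outcomes: the set of questions each model answered correctly.
model_a_correct = {"q1", "q2", "q3", "q4", "q5", "q6"}
model_b_correct = {"q3", "q4", "q5", "q6", "q7"}

# Assume q1 and q2 were flagged because model_a's search retrieved the answers.
flagged = {"q1", "q2"}

for name, correct in (("model_a", model_a_correct), ("model_b", model_b_correct)):
    raw = accuracy(correct)
    clean = accuracy(correct, exclude=flagged)
    print(f"{name}: raw={raw:.3f} clean={clean:.3f}")
```

In this toy setup model_a leads on the raw score (0.600 versus 0.500) but trails once the two flagged questions are excluded (0.500 versus 0.625), which is the kind of ranking flip that makes small contamination rates matter.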

Broader Implications for AI Benchmarking

While a 3% contamination rate might appear modest, its impact on frontier benchmarks like HLE is substantial. Moreover, Scale AI’s research suggests that HuggingFace is not the sole source of such contamination, indicating a wider systemic issue. This aligns with findings from a comprehensive study from China that analyzed 283 AI benchmarks and highlighted pervasive problems, including inflated scores due to data leakage, cultural and linguistic biases, and insufficient evaluation of model reasoning processes and adaptability in dynamic environments.

Challenges Facing AI Benchmark Design

These revelations underscore the urgent need to rethink how AI benchmarks are constructed and validated. Many existing benchmarks suffer from design flaws that compromise their fairness and accuracy. For example, benchmarks may inadvertently favor models trained on overlapping data or fail to account for diverse linguistic and cultural contexts, leading to skewed performance metrics.

Moving Forward: Enhancing Benchmark Reliability

To foster trustworthy AI development, the community must prioritize creating benchmarks that are resistant to contamination, culturally inclusive, and capable of evaluating not just outcomes but the reasoning processes behind them. Incorporating dynamic, real-world scenarios and continuous updates can help ensure that benchmarks remain relevant and challenging as AI technology evolves.