Samsung Introduces TRUEBench: A New Standard for Evaluating AI Productivity in Business

Samsung is pioneering a novel approach to measuring the practical effectiveness of AI models within corporate environments by launching TRUEBench, a benchmark designed to overcome the shortcomings of traditional AI evaluation methods. This innovative system aims to close the gap between theoretical AI capabilities and their actual impact on enterprise productivity.

Addressing the Limitations of Conventional AI Benchmarks

As organizations worldwide increasingly integrate large language models (LLMs) to streamline operations, a critical challenge has surfaced: accurately assessing these models’ real-world utility. Existing benchmarks predominantly focus on academic knowledge or simple Q&A tasks, often restricted to English and lacking the complexity of business scenarios. This narrow scope leaves enterprises without a dependable framework to evaluate AI performance on multifaceted, multilingual, and context-sensitive tasks typical in professional settings.

TRUEBench: Tailored for Real-World Enterprise Applications

TRUEBench, which stands for Trustworthy Real-world Usage Evaluation Benchmark, was developed by Samsung to fill this evaluation void. It offers a robust set of metrics that reflect the diverse and practical demands of corporate workflows. Drawing from Samsung’s extensive internal use of AI across various business functions, TRUEBench ensures its criteria are deeply rooted in authentic workplace challenges.

The benchmark assesses key enterprise activities such as content generation, data analysis, document summarization, and translation. These are categorized into 10 primary groups and further divided into 46 subcategories, enabling a detailed and nuanced evaluation of an AI model’s productivity across different tasks.

Multilingual and Context-Rich Testing for Global Enterprises

Recognizing the global nature of modern business, TRUEBench incorporates 2,485 diverse test sets spanning 12 languages, including cross-lingual scenarios. This multilingual design is essential for multinational companies where information exchange occurs across linguistic boundaries. Test inputs vary widely, from concise instructions as brief as eight characters to complex documents exceeding 20,000 characters, mirroring the spectrum of real workplace demands.

Beyond Accuracy: Measuring AI’s Understanding of Implicit Business Needs

Samsung identified that in practical business interactions, user intentions are often implicit rather than explicitly stated. TRUEBench evaluates AI models not just on correctness but on their ability to interpret and fulfill these unspoken requirements, emphasizing helpfulness and contextual relevance over mere accuracy.

Innovative Human-AI Collaboration in Benchmark Development

TRUEBench’s scoring criteria are built through an iterative process that combines human expertise with AI assistance. First, human annotators define the evaluation criteria for each task. An AI model then reviews these criteria to detect inconsistencies, errors, or unrealistic constraints, and the annotators refine the criteria based on that feedback. Repeating this cycle keeps the evaluation framework both precise and aligned with real-world expectations.

Automated, Objective Scoring for Consistent AI Assessment

TRUEBench employs an automated scoring mechanism that applies the refined criteria to assess LLM performance, significantly reducing subjective bias inherent in human-only evaluations. The benchmark uses a stringent “all-or-nothing” scoring approach, requiring AI models to meet every condition of a test to pass. This method provides a rigorous and transparent measure of AI capabilities across various enterprise functions.
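The "all-or-nothing" rule can be illustrated with a minimal Python sketch. This is not Samsung's implementation: the `Condition` class, the `passes_all` and `pass_rate` helpers, and the sample conditions are all hypothetical, and the simple string checks stand in for whatever automated checker the benchmark actually uses.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Condition:
    """One requirement a response must satisfy (hypothetical structure)."""
    description: str
    check: Callable[[str], bool]


def passes_all(response: str, conditions: List[Condition]) -> bool:
    """All-or-nothing: the test passes only if every condition holds."""
    return all(c.check(response) for c in conditions)


def pass_rate(results: List[bool]) -> float:
    """Percentage of tests passed; 0.0 for an empty result list."""
    return 100.0 * sum(results) / len(results) if results else 0.0


# Illustrative conditions for a single made-up test item.
conditions = [
    Condition("mentions the deadline", lambda r: "Friday" in r),
    Condition("stays under 200 characters", lambda r: len(r) < 200),
    Condition("is a bulleted list", lambda r: r.lstrip().startswith("-")),
]

responses = [
    "- Report due Friday\n- Budget review pending",
    "The report is due Friday and the budget review is pending.",
]

# The second response meets two of three conditions and still fails.
results = [passes_all(r, conditions) for r in responses]
print(results, pass_rate(results))
```

Under this rule a response that satisfies most conditions but misses one scores the same as a response that satisfies none, which is what makes the approach stringent.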

Open Access and Community Engagement via Hugging Face

To promote transparency and foster widespread adoption, Samsung has made TRUEBench’s datasets and leaderboards publicly accessible on the Hugging Face platform. This open-source environment enables developers, researchers, and businesses to compare the productivity of up to five AI models side-by-side, offering a clear snapshot of their relative strengths on practical tasks.

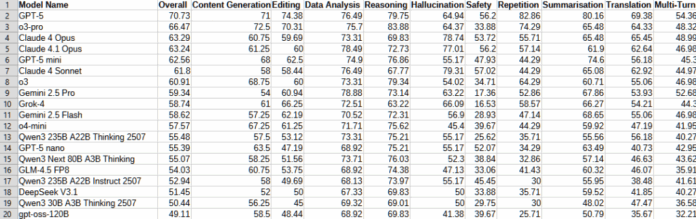

Current Leaderboard Highlights

As of the latest update, the top 20 AI models ranked by TRUEBench demonstrate a range of capabilities in handling enterprise-relevant tasks. Alongside performance scores, the benchmark also reports the average length of AI-generated responses, giving insight into both effectiveness and efficiency, two key factors for organizations balancing operational costs and response speed.
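As a rough illustration of how a score and an average response length could be reported side by side, the sketch below aggregates per-test results into leaderboard-style rows. The `summarize` function, the record layout, the tie-break on shorter responses, and the model names are assumptions made for illustration; the data is placeholder data, not actual TRUEBench results.

```python
from statistics import mean


def summarize(records):
    """Aggregate (model, passed, response) records into leaderboard rows.

    Returns (model, pass percentage, mean response length in characters),
    sorted by score descending. Ties go to the shorter average response,
    which is an assumption of this sketch, not a documented rule.
    """
    by_model = {}
    for model, passed, response in records:
        by_model.setdefault(model, []).append((passed, len(response)))

    rows = []
    for model, items in by_model.items():
        score = 100.0 * sum(p for p, _ in items) / len(items)
        avg_len = mean(n for _, n in items)
        rows.append((model, score, avg_len))

    rows.sort(key=lambda row: (-row[1], row[2]))
    return rows


# Placeholder records: model names and responses are made up.
records = [
    ("model-a", True, "x" * 120),
    ("model-a", False, "x" * 80),
    ("model-b", True, "x" * 40),
    ("model-b", True, "x" * 60),
]

rows = summarize(records)
for model, score, avg_len in rows:
    print(f"{model}: {score:.1f}% passed, {avg_len:.0f} chars on average")
```

Reading the two columns together shows whether a higher score comes at the cost of much longer, and therefore slower and more expensive, responses.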

Transforming AI Evaluation to Drive Enterprise Adoption

With TRUEBench, Samsung is redefining how AI performance is measured by shifting the focus from abstract knowledge tests to tangible productivity outcomes. This benchmark equips organizations with a reliable tool to make informed decisions about integrating AI models into their workflows, ultimately bridging the divide between AI’s theoretical promise and its demonstrated business value.