OpenAI has released GPT-5.1-Codex-Max, an agentic coding model built for long-running software development tasks that span millions of tokens and run for multiple hours. The model is available now across the Codex ecosystem, including the command-line interface (CLI), integrated development environment (IDE) extensions, cloud, and code review, with API access expected soon.

Optimized Capabilities of GPT-5.1-Codex-Max

Built on an updated foundational reasoning model, GPT-5.1-Codex-Max is tailored for agentic tasks in software engineering, mathematics, and research. Its training draws on real software development workflows, including pull request creation, code review, frontend development, and technical Q&A.

Unlike general-purpose conversational models, GPT-5.1-Codex-Max is designed for agentic coding in Codex and Codex-like environments. It is not intended to replace GPT-5.1 for everyday dialogue; its strength is autonomous coding work that requires deep reasoning and sustained focus.

Notably, this is the first Codex model optimized for Windows environments. It is also a better collaborator in the Codex CLI, with improved command execution and file management inside the Codex sandbox.

Handling Extended Coding Sessions with Compaction

A standout feature of GPT-5.1-Codex-Max is its native support for compaction, enabling it to manage long-duration tasks that exceed the constraints of a single context window. The model intelligently condenses its interaction history, retaining critical information while discarding less relevant details, thus maintaining continuity over extended periods.

Within Codex applications, this compaction process activates automatically as the context window nears capacity. The model initiates a new context window that preserves the essential state of the ongoing task, allowing uninterrupted progression. This cycle can repeat multiple times, facilitating autonomous coding sessions that last beyond 24 hours.
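The compaction cycle described above can be pictured with a toy loop. The sketch below is illustrative only and is not OpenAI's implementation: `Session`, `summarize`, `count_tokens`, the 80% threshold, and the word-based token count are all assumptions made for this example, and simple truncation stands in for the model-written summary a real system would use.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def summarize(messages: list[str], budget: int) -> str:
    """Hypothetical summarizer: keep only the most recent `budget` words.

    A real system would have the model decide what to retain; truncation
    just keeps this example deterministic.
    """
    words = " ".join(messages).split()
    return " ".join(words[-budget:]) if budget > 0 else ""


@dataclass
class Session:
    limit: int                # context window size in tokens (assumed)
    summary_budget: int       # max tokens carried into the next window (assumed)
    threshold: float = 0.8    # compact once usage reaches this fraction (assumed)
    history: list[str] = field(default_factory=list)
    compactions: int = 0

    def used(self) -> int:
        """Tokens currently held in the context window."""
        return sum(count_tokens(m) for m in self.history)

    def add(self, message: str) -> None:
        """Append a message and compact if the window is nearly full."""
        self.history.append(message)
        if self.used() >= self.threshold * self.limit:
            self.compact()

    def compact(self) -> None:
        """Open a fresh window seeded with a condensed version of the old one."""
        self.history = [summarize(self.history, self.summary_budget)]
        self.compactions += 1


# Usage: ten 20-token messages against a 100-token window.
session = Session(limit=100, summary_budget=30)
for step in range(10):
    session.add(" ".join(["token"] * 20))
print(session.compactions, session.used())
```

Because each compaction shrinks the history to at most `summary_budget` tokens, the loop can repeat indefinitely without the window overflowing, which is the property that makes multi-hour sessions possible in principle.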

In OpenAI's internal evaluations, GPT-5.1-Codex-Max worked independently over prolonged sessions, iterating on complex projects, debugging failing tests, and ultimately delivering a successful result.

Enhanced Reasoning Efficiency and Performance Metrics

GPT-5.1-Codex-Max incorporates the reasoning effort control mechanism introduced with GPT-5.1, fine-tuned for coding applications. This feature determines the number of “thinking” tokens the model allocates before finalizing an output, balancing accuracy and computational cost.

On the SWE-bench Verified benchmark, GPT-5.1-Codex-Max operating at medium reasoning effort surpasses GPT-5.1-Codex in accuracy while utilizing approximately 30% fewer thinking tokens. For tasks where latency is less critical, an Extra High (xhigh) reasoning effort mode is available, allowing the model to deliberate longer and produce superior results. Medium effort remains the default recommendation for most scenarios.
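To make the trade-off concrete, the sketch below picks an effort level from a latency requirement and estimates the thinking-token count implied by the reported reduction of roughly 30%. The helper names and the 10,000-token baseline are hypothetical, chosen only for illustration; the effort labels `medium` and `xhigh` come from the text, and none of this is a Codex API.

```python
def pick_effort(latency_critical: bool = True) -> str:
    """Return an effort label: medium by default, xhigh when latency matters less."""
    return "medium" if latency_critical else "xhigh"


def estimate_thinking_tokens(baseline_tokens: int, reduction: float = 0.30) -> int:
    """Estimate thinking tokens after a fractional reduction from a baseline.

    The 0.30 default mirrors the approximate figure quoted in the text;
    actual savings will vary by task.
    """
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return round(baseline_tokens * (1.0 - reduction))


# Hypothetical baseline: a task on which the older model spends 10,000 thinking tokens.
baseline = 10_000
print(pick_effort(), estimate_thinking_tokens(baseline))
```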

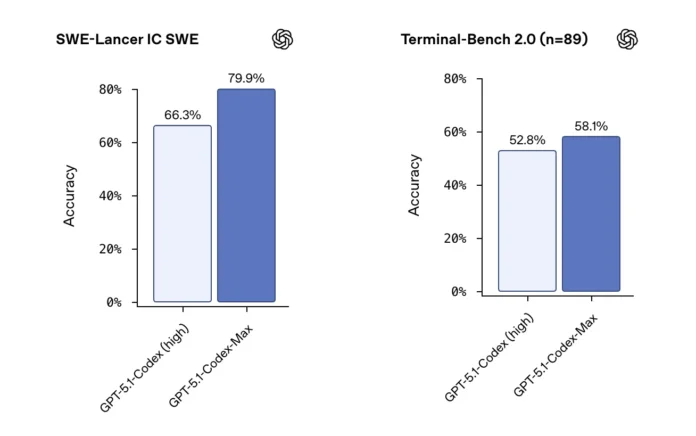

With compaction enabled, GPT-5.1-Codex-Max improves on GPT-5.1-Codex across three benchmarks. On 500 SWE-bench Verified issues, it reaches 77.9% accuracy at xhigh effort versus 73.7% for GPT-5.1-Codex at high effort. On SWE-Lancer IC SWE, the score rises from 66.3% to 79.9%, and on Terminal-Bench 2.0, from 52.8% to 58.1%. The Terminal-Bench 2.0 runs use the Codex CLI within the Laude Institute Harbor environment.
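The size of these gains is easier to compare once each is expressed both in percentage points and relative to the earlier score. The short calculation below uses only the figures quoted above; the `gains` helper is a hypothetical name introduced for this example. Note that the SWE-bench Verified comparison is across different effort levels (xhigh versus high), so it is not strictly like-for-like.

```python
# (GPT-5.1-Codex score, GPT-5.1-Codex-Max score), in percent, as quoted in the text.
RESULTS = {
    "SWE-bench Verified": (73.7, 77.9),
    "SWE-Lancer IC SWE": (66.3, 79.9),
    "Terminal-Bench 2.0": (52.8, 58.1),
}


def gains(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point gain, relative gain in percent), rounded to 0.1."""
    absolute = round(after - before, 1)
    relative = round(100 * (after - before) / before, 1)
    return absolute, relative


for name, (before, after) in RESULTS.items():
    absolute, relative = gains(before, after)
    print(f"{name}: +{absolute} points ({relative}% relative)")
```

On these numbers, SWE-Lancer IC SWE shows the largest jump (13.6 points, about 20.5% relative), while SWE-bench Verified shows the smallest (4.2 points, about 5.7% relative).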

Qualitative evaluations show that GPT-5.1-Codex-Max can generate frontend designs comparable in functionality and aesthetics to those produced by previous models while using fewer tokens, thanks to more efficient reasoning.

Summary of Key Innovations

- GPT-5.1-Codex-Max is an agentic coding model built on an updated reasoning model and trained on practical software engineering tasks such as pull request creation, code review, frontend development, and Q&A. It is currently available across the Codex CLI, IDE extensions, cloud, and code review, with API access forthcoming.

- The model natively supports compaction, which condenses its history across multiple context windows. This enables continuous, autonomous coding sessions that can last over 24 hours and process millions of tokens while maintaining task coherence.

- Retaining the reasoning effort control from GPT-5.1, GPT-5.1-Codex-Max at medium effort achieves higher accuracy than GPT-5.1-Codex with roughly 30% fewer thinking tokens, and it offers an Extra High (xhigh) reasoning mode for tasks where latency is less critical.

- On frontier coding benchmarks with compaction enabled, GPT-5.1-Codex-Max outperforms its predecessor on SWE-bench Verified (77.9% versus 73.7%), SWE-Lancer IC SWE (79.9% versus 66.3%), and Terminal-Bench 2.0 (58.1% versus 52.8%).

Insights and Future Outlook

GPT-5.1-Codex-Max marks a shift by OpenAI towards supporting prolonged, autonomous coding workflows rather than brief, isolated code edits. Its compaction capability, together with adjustable reasoning effort and its results on frontier coding benchmarks, illustrates one approach to scaling reasoning compute for practical software development.

As this technology moves into production environments, safeguards such as OpenAI's Preparedness Framework and the Codex sandbox will be essential for reliability and security. Overall, GPT-5.1-Codex-Max is a significant step for agentic coding models, bringing sustained, long-horizon reasoning into everyday developer tools.