Introducing Reinforcement Learning Pretraining (RLP): A Novel Approach to Enhance AI Reasoning

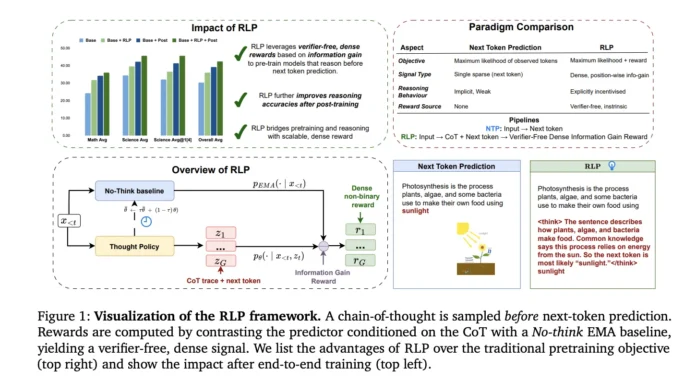

NVIDIA AI has introduced a training paradigm called Reinforcement Learning Pretraining (RLP), which brings reinforcement learning into the pretraining phase of language models instead of postponing it until post-training. The core idea is simple: treat a brief chain-of-thought (CoT) sequence as an action taken before predicting the next token, and reward it by the information gain it provides about that token. The reward is measured against a no-think baseline supplied by an exponential moving average (EMA) copy of the model, which yields a dense, position-specific, verifier-free signal that can be applied across large-scale text corpora during pretraining.

How RLP Works: Leveraging Information Gain with an EMA Baseline

RLP uses a single neural network with shared parameters in two roles: (1) as a CoT policy that samples intermediate reasoning before the next-token prediction, and (2) as a predictor that scores the next token conditioned on that reasoning. A slowly updated EMA model acts as a “no-think” teacher, providing a counterfactual baseline by predicting the same token without any intermediate reasoning. The reward at each position is the log-likelihood ratio between the CoT-conditioned prediction and the EMA baseline, which quantifies the information gain contributed by the thought: it is positive when the thought makes the observed token more likely and negative when it makes it less likely.
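The reward and the slow baseline update described above can be sketched in a few lines of Python. This is a minimal illustration, not NVIDIA's implementation: the function names, the flat parameter lists, the `decay` value, and the example probabilities are all assumptions made for the sketch.

```python
import math

def information_gain_rewards(logp_with_thought, logp_no_think):
    """Per-position reward: log p(x_t | context, thought) - log p_ema(x_t | context)."""
    if len(logp_with_thought) != len(logp_no_think):
        raise ValueError("need one log-probability per position from each model")
    return [lw - ln for lw, ln in zip(logp_with_thought, logp_no_think)]

def ema_update(ema_params, live_params, decay=0.999):
    """Move the slow no-think baseline a small step toward the live model.

    The decay value is an assumption; the article does not state one.
    """
    return [decay * e + (1.0 - decay) * p for e, p in zip(ema_params, live_params)]

# Hypothetical probabilities assigned to the true next token at three positions.
with_thought = [math.log(0.50), math.log(0.20), math.log(0.90)]
no_think = [math.log(0.25), math.log(0.40), math.log(0.90)]

rewards = information_gain_rewards(with_thought, no_think)
# Position 0: the thought doubled the probability, so the reward is log(2) > 0.
# Position 1: the thought halved the probability, so the reward is -log(2) < 0.
# Position 2: the thought changed nothing, so the reward is 0.
```

Because the reward is a difference of log-likelihoods that the model already computes, no external grader or labeled answer is involved at any position.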

Training updates focus exclusively on the thought tokens, using a clipped surrogate objective with per-token importance weights and group-relative advantages. Multiple sampled reasoning paths per context help reduce variance. Theoretically, this objective maximizes expected information gain, which correlates with reductions in cross-entropy loss and is bounded through marginalization over possible thoughts.
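As a rough sketch of the update described above, the snippet below mean-centers the rewards of several thoughts sampled for the same context and plugs the resulting advantage into a PPO-style clipped surrogate over the thought tokens. The mean-centering rule, the `eps` clip range, and all names are assumptions for illustration; the article does not specify the exact normalization RLP uses.

```python
import math

def group_relative_advantages(rewards):
    """Center each sampled thought's reward on the mean of its group (assumed rule)."""
    mean = sum(rewards) / len(rewards)
    return [r - mean for r in rewards]

def clipped_surrogate(logp_new, logp_old, advantage, eps=0.2):
    """PPO-style clipped objective, averaged over the thought tokens of one rollout.

    logp_new / logp_old hold per-token log-probabilities of the sampled thought
    under the current policy and under the policy that generated it.
    """
    terms = []
    for ln, lo in zip(logp_new, logp_old):
        ratio = math.exp(ln - lo)                       # per-token importance weight
        clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)  # keep the ratio near 1
        terms.append(min(ratio * advantage, clipped * advantage))
    return sum(terms) / len(terms)

# Four hypothetical thoughts sampled for one context, each with its reward.
group_rewards = [0.6, 0.1, -0.1, 0.2]
advantages = group_relative_advantages(group_rewards)

# Objective for the first thought when the policy has not moved yet (ratio = 1).
objective = clipped_surrogate([-1.0, -2.0], [-1.0, -2.0], advantages[0])
```

Sampling several thoughts per context matters here: the group mean acts as a local baseline, so a thought is only reinforced if it helps more than its siblings did.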

Technical Advantages: Dense Rewards Without External Verifiers

Unlike previous reinforcement learning approaches that depend on sparse, binary correctness signals or require external verification, RLP’s reward is dense and position-wise, providing credit assignment at every token where reasoning improves prediction. This verifier-free design enables seamless scaling to vast, uncurated datasets such as web crawls, academic texts, and textbooks, without the brittleness associated with narrow, hand-labeled corpora.

Empirical Performance: Significant Gains Across Models and Benchmarks

Qwen3-1.7B-Base Model

When pretrained with RLP, the Qwen3-1.7B-Base model showed a ~19% improvement on combined math and science benchmarks over its base version, and a ~17% improvement over compute-matched continuous pretraining (CPT). After identical post-training with supervised fine-tuning (SFT) and reinforcement learning with verifiable rewards (RLVR), the RLP-initialized model kept a 7-8% relative advantage, with the largest gains on reasoning-intensive tests such as AIME25 and MMLU-Pro.

Nemotron-Nano-12B v2 Model

Applying RLP to the 12-billion-parameter hybrid Mamba-Transformer checkpoint resulted in a remarkable jump in overall average accuracy from 42.81% to 61.32%, including an absolute +23 percentage point boost in scientific reasoning tasks. Notably, this improvement was achieved despite training on approximately 200 billion fewer tokens (19.8 trillion vs. 20 trillion tokens), underscoring RLP’s data efficiency and its adaptability across different architectures.
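The figures quoted above can be cross-checked with a few lines of arithmetic. The snippet uses only the numbers stated in this section; the variable names are illustrative.

```python
base_acc, rlp_acc = 42.81, 61.32          # overall average accuracy, in percent

absolute_gain = rlp_acc - base_acc        # difference in percentage points
relative_gain = absolute_gain / base_acc * 100   # gain relative to the baseline

token_gap_billions = (20.0 - 19.8) * 1000  # trillions of tokens -> billions

print(round(absolute_gain, 2))    # 18.51
print(round(relative_gain, 1))    # 43.2
print(round(token_gap_billions))  # 200
```

The distinction matters when comparing results: the 18.51-point absolute gain corresponds to roughly a 43% improvement relative to the 42.81% starting point.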

Comparative Analysis: RLP vs. Reinforcement Pretraining (RPT)

In controlled experiments matching data volume and computational resources, RLP outperformed Reinforcement Pretraining (RPT) on math, science, and overall metrics. This superiority is attributed to RLP’s continuous, information-gain-based reward signal, which contrasts with RPT’s reliance on sparse binary feedback and entropy-filtered token selection. The continuous reward mechanism enables more nuanced and effective learning during pretraining.
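A toy comparison illustrates why a continuous reward carries more signal than a binary one. The binary reward below is a deliberately simplified stand-in for a sparse correctness signal, not RPT's actual implementation, and the tokens and probabilities are hypothetical.

```python
import math

def binary_match_reward(predicted_token, target_token):
    """Sparse signal: 1 if the predicted token matches the target, else 0."""
    return 1.0 if predicted_token == target_token else 0.0

def information_gain_reward(p_with_thought, p_no_think):
    """Dense signal: log-likelihood ratio for the true next token."""
    return math.log(p_with_thought) - math.log(p_no_think)

# Hypothetical case: the thought triples the probability of the true token
# ("Paris") from 0.10 to 0.30, but the model's top guess is still "London".
sparse = binary_match_reward("London", "Paris")
dense = information_gain_reward(0.30, 0.10)
# The sparse reward is 0.0 and gives no credit for the partial progress,
# while the dense reward is log(3) > 0 and reinforces the helpful thought.
```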

Integration with Post-Training and Data Curation Strategies

RLP complements rather than replaces existing post-training methods such as supervised fine-tuning (SFT) and reinforcement learning with verifiable rewards (RLVR), delivering additive benefits when combined. Because its reward is derived internally from model log-likelihoods rather than external evaluators, RLP scales to diverse, domain-agnostic datasets including web crawls, academic literature, and reasoning-focused corpora. Even against compute-matched CPT runs that consume 35 times more tokens, RLP leads in performance, which indicates that its gains come from the objective design rather than from a larger training budget.

Essential Insights and Best Practices

- Reasoning as a Pretraining Objective: RLP encourages models to “think before predicting” by sampling chains of thought and rewarding them based on the information gain over a no-think baseline.

- Verifier-Free, Dense Feedback: The method provides position-wise credit assignment without requiring external graders, enabling scalable updates on every token in large text streams.

- Qwen3-1.7B Results: Achieved +19% improvement over base and +17% over compute-matched CPT during pretraining; sustained ~7-8% gains after identical post-training, with strongest results on reasoning benchmarks.

- Nemotron-Nano-12B v2 Outcomes: Overall accuracy increased from 42.81% to 61.32% (+18.51 percentage points, roughly a 43% relative improvement), including a +23 point gain in scientific reasoning, while training on about 200 billion fewer tokens.

- Training Nuances: Gradient updates are restricted to thought tokens using a clipped surrogate loss and group-relative advantages; increasing rollout samples (~16) and thought length (~2048 tokens) enhances performance; token-level KL regularization showed no added benefit.

Final Thoughts: RLP as a Scalable Upgrade to Next-Token Pretraining

Reinforcement Learning Pretraining (RLP) reimagines the pretraining process by directly incentivizing “think-before-predict” behavior through a verifier-free, information-theoretic reward. This approach yields persistent improvements in reasoning capabilities that endure through standard post-training procedures and generalize across diverse model architectures such as Qwen3-1.7B and Nemotron-Nano-12B v2. By contrasting chain-of-thought-conditioned likelihoods against a no-think EMA baseline, RLP integrates smoothly into large-scale training pipelines without the need for curated verification datasets, making it a practical and effective enhancement to traditional next-token prediction objectives.