Heard about Artificial General Intelligence (AGI)? Meet its auditory counterpart—Audio General Intelligence. With Audio Flamingo 3 (AF3), NVIDIA introduces a major leap in how machines understand and reason about sound. While past models could transcribe speech or classify audio clips, they lacked the ability to interpret audio in a context-rich, human-like way—across speech, ambient sound, and music, and over extended durations. AF3 changes that.

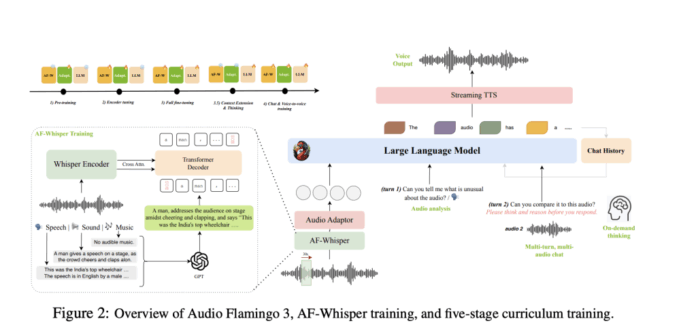

With Audio Flamingo 3, NVIDIA introduces a fully open-source large audio-language model (LALM) that not only hears but also understands and reasons. Built on a five-stage curriculum and powered by the AF-Whisper encoder, AF3 supports long audio inputs (up to 10 minutes), multi-turn multi-audio chat, on-demand thinking, and even voice-to-voice interactions. This sets a new bar for how AI systems interact with sound, bringing us a step closer to AGI.

The Core Innovations Behind Audio Flamingo 3

- AF-Whisper: A Unified Audio Encoder AF3 uses AF-Whisper, a novel encoder adapted from Whisper-v3. It processes speech, ambient sounds, and music using the same architecture—solving a major limitation of earlier LALMs which used separate encoders, leading to inconsistencies. AF-Whisper leverages audio-caption datasets, synthesized metadata, and a dense 1280-dimension embedding space to align with text representations.

- Chain-of-Thought for Audio: On-Demand Reasoning Unlike static QA systems, AF3 is equipped with ‘thinking’ capabilities. Using the AF-Think dataset (250k examples), the model can perform chain-of-thought reasoning when prompted, enabling it to explain its inference steps before arriving at an answer—a key step toward transparent audio AI.

- Multi-Turn, Multi-Audio Conversations Through the AF-Chat dataset (75k dialogues), AF3 can hold contextual conversations involving multiple audio inputs across turns. This mimics real-world interactions, where humans refer back to previous audio cues. It also introduces voice-to-voice conversations using a streaming text-to-speech module.

- Long Audio Reasoning AF3 is the first fully open model capable of reasoning over audio inputs up to 10 minutes. Trained with LongAudio-XL (1.25M examples), the model supports tasks like meeting summarization, podcast understanding, sarcasm detection, and temporal grounding.

State-of-the-Art Benchmarks and Real-World Capability

AF3 surpasses both open and closed models on over 20 benchmarks, including:

- MMAU (avg): 73.14% (+2.14% over Qwen2.5-O)

- LongAudioBench: 68.6 (GPT-4o evaluation), beating Gemini 2.5 Pro

- LibriSpeech (ASR): 1.57% WER, outperforming Phi-4-mm

- ClothoAQA: 91.1% (vs. 89.2% from Qwen2.5-O)

These improvements aren’t just marginal; they redefine what’s expected from audio-language systems. AF3 also introduces benchmarking in voice chat and speech generation, achieving 5.94s generation latency (vs. 14.62s for Qwen2.5) and better similarity scores.

The Data Pipeline: Datasets That Teach Audio Reasoning

NVIDIA didn’t just scale compute—they rethought the data:

- AudioSkills-XL: 8M examples combining ambient, music, and speech reasoning.

- LongAudio-XL: Covers long-form speech from audiobooks, podcasts, meetings.

- AF-Think: Promotes short CoT-style inference.

- AF-Chat: Designed for multi-turn, multi-audio conversations.

Each dataset is fully open-sourced, along with training code and recipes, enabling reproducibility and future research.

Open Source

AF3 is not just a model drop. NVIDIA released:

- Model weights

- Training recipes

- Inference code

- Four open datasets

This transparency makes AF3 the most accessible state-of-the-art audio-language model. It opens new research directions in auditory reasoning, low-latency audio agents, music comprehension, and multi-modal interaction.

Conclusion: Toward General Audio Intelligence

Audio Flamingo 3 demonstrates that deep audio understanding is not just possible but reproducible and open. By combining scale, novel training strategies, and diverse data, NVIDIA delivers a model that listens, understands, and reasons in ways previous LALMs could not.

Check out the , and . All credit for this research goes to the researchers of this project.

Ready to connect with 1 Million+ AI Devs/Engineers/Researchers? See how NVIDIA, LG AI Research, and top AI companies leverage MarkTechPost to reach their target audience