NVIDIA has announced a set of AI inference optimizations developed with Mistral AI, timed to the launch of the Mistral 3 family of frontier open models. The collaboration targets faster AI workloads, particularly for enterprise applications.

Revolutionizing AI Inference: Up to 10x Speed Boost on Blackwell Architecture

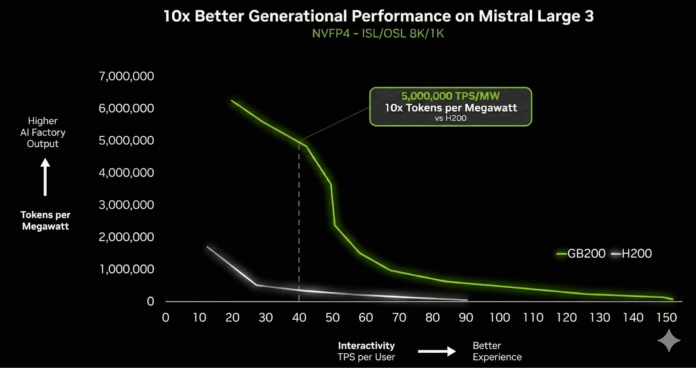

As enterprises increasingly demand AI systems capable of complex reasoning and handling extensive context, inference speed has become a critical challenge. The new Mistral 3 models, optimized for NVIDIA’s Blackwell architecture, address this by delivering up to ten times faster inference than on the previous-generation H200.

The gain is not only in raw throughput; energy efficiency improves as well. The NVIDIA GB200 NVL72 rack-scale system sustains user interactivity rates of 40 tokens per second while significantly reducing power consumption. For data centers working within strict energy budgets, this combination of speed and efficiency lowers the cost per token and makes real-time AI applications feasible at scale.

The Mistral 3 Model Family: Versatility from Data Centers to Edge Devices

The driving force behind this performance surge is the newly introduced Mistral 3 family, a collection of models designed to deliver top-tier accuracy, efficiency, and adaptability across diverse deployment scenarios.

Mistral Large 3: The Premier Mixture of Experts Model

- Parameters: 675 billion total, with 41 billion active parameters

- Context Window: Supports up to 256,000 tokens

Trained on extensive datasets, Mistral Large 3 excels in complex reasoning tasks, matching the capabilities of leading proprietary models while maintaining the openness and flexibility of an open-weight architecture.
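To put the two parameter counts in perspective, the arithmetic below is a rough sketch of what they imply: the share of the model that is active per token, and the approximate memory needed just to hold the weights at different precisions. The bytes-per-parameter figures are assumptions for illustration; they ignore scale-factor overhead, the KV cache, and activations.

```python
# Back-of-the-envelope sizing for a mixture-of-experts model.
TOTAL_PARAMS = 675e9   # total parameters (from the article)
ACTIVE_PARAMS = 41e9   # parameters active per token (from the article)

# Assumed storage cost per parameter; real formats add some overhead
# for scale factors, which this sketch ignores.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "4-bit": 0.5}


def active_fraction(total, active):
    """Share of the parameters that participate in each token's forward pass."""
    return active / total


def weight_memory_gb(num_params, bytes_per_param):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
    print(f"active per token: {frac:.1%} of all parameters")
    for name, nbytes in BYTES_PER_PARAM.items():
        print(f"{name:>5}: ~{weight_memory_gb(TOTAL_PARAMS, nbytes):,.0f} GB of weights")
```

Only about 6% of the parameters do work for any given token, yet every expert must still be resident in memory, which is why both lower-precision weights and multi-GPU expert placement matter for a model of this size.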

Ministral 3: Compact, Dense Models for Edge AI

- Model Sizes: 3 billion, 8 billion, and 14 billion parameters

- Variants: Base, Instruct, and Reasoning versions for each size, totaling nine models

- Context Window: Uniform 256,000-token support

These smaller, dense models are optimized for speed and versatility, achieving superior accuracy on benchmarks like GPQA Diamond while requiring 100 fewer tokens than comparable models. This efficiency makes them ideal for deployment on edge devices and local workstations.

Engineering Excellence: A Multi-Faceted Optimization Strategy

The impressive 10x speed improvement stems from a deep integration of hardware and software innovations, developed jointly by NVIDIA and Mistral engineers through an “extreme co-design” methodology.

TensorRT-LLM Wide Expert Parallelism (Wide-EP)

Wide-EP makes fuller use of the GB200 NVL72 by optimizing mixture-of-experts (MoE) operations with specialized GroupGEMM kernels, expert workload distribution, and dynamic load balancing. By building on the NVL72’s coherent memory domain and NVLink interconnect, Wide-EP keeps communication delays low, so that exchanging tokens between experts does not become the bottleneck even for very large MoE models.
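The idea of distributing expert workload can be illustrated with a simple greedy placement. This is a minimal sketch, not TensorRT-LLM's actual algorithm: the function name `place_experts` and the per-expert token counts are hypothetical. It assigns the heaviest experts first, each to whichever GPU currently carries the least load.

```python
import heapq


def place_experts(expert_loads, num_gpus):
    """Assign experts to GPUs so that per-GPU token load is roughly even.

    expert_loads: dict mapping expert id -> tokens routed to that expert
                  (hypothetical measurements, e.g. from a profiling run).
    Returns (placement, gpu_loads), where placement maps gpu index -> list
    of expert ids and gpu_loads maps gpu index -> total tokens.
    """
    # Min-heap of (current load, gpu index): the least-loaded GPU pops first.
    heap = [(0, gpu) for gpu in range(num_gpus)]
    heapq.heapify(heap)
    placement = {gpu: [] for gpu in range(num_gpus)}

    # Heaviest experts first, each onto the currently least-loaded GPU.
    for expert, load in sorted(expert_loads.items(), key=lambda kv: -kv[1]):
        gpu_load, gpu = heapq.heappop(heap)
        placement[gpu].append(expert)
        heapq.heappush(heap, (gpu_load + load, gpu))

    gpu_loads = {
        gpu: sum(expert_loads[e] for e in experts)
        for gpu, experts in placement.items()
    }
    return placement, gpu_loads


if __name__ == "__main__":
    loads = {0: 900, 1: 100, 2: 500, 3: 500, 4: 300, 5: 700}
    placement, gpu_loads = place_experts(loads, num_gpus=2)
    print(placement)
    print(gpu_loads)
```

A static placement like this only balances the load observed when it was computed. Dynamic load balancing, as described above, would re-run or adjust the assignment as routing patterns shift.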

Native NVFP4 Quantization for Precision and Efficiency

A key element is NVFP4, a 4-bit quantization format native to the Blackwell GPU architecture. Mistral Large 3 can be quantized offline with the open-source llm-compressor tool, which significantly reduces compute and memory demands while preserving model accuracy. NVFP4 pairs its 4-bit values with FP8 scaling factors applied to small blocks of weights, and this fine-grained block scaling keeps quantization error under control. The quantization specifically targets the MoE weights, enabling deployment on GB200 NVL72 systems with minimal precision loss.
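Block scaling is easier to see in a small example. The sketch below is a simplified illustration, not llm-compressor's implementation: it quantizes each block of 16 values to the eight magnitudes representable in a 4-bit E2M1 float, using one scale per block. As a stated simplification, the scale is kept as an ordinary Python float rather than being rounded to FP8, and no per-tensor scale is applied.

```python
# Magnitudes representable by a 4-bit E2M1 value (sign handled separately).
FP4_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
BLOCK_SIZE = 16  # values that share one scale factor


def quantize_block(values):
    """Quantize one block; returns (scale, codes) with codes on the FP4 grid.

    The scale maps the block's largest magnitude onto 6.0, the top of the grid.
    Simplification: the scale stays a Python float instead of an FP8 value.
    """
    amax = max(abs(v) for v in values)
    scale = amax / FP4_GRID[-1] if amax > 0 else 1.0
    codes = []
    for v in values:
        # Pick the nearest representable magnitude, then restore the sign.
        mag = min(FP4_GRID, key=lambda g: abs(abs(v) / scale - g))
        codes.append(mag if v >= 0 else -mag)
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate values from a block's scale and codes."""
    return [c * scale for c in codes]


def quantize(weights):
    """Split a flat list of weights into blocks and quantize each on its own."""
    return [
        quantize_block(weights[i:i + BLOCK_SIZE])
        for i in range(0, len(weights), BLOCK_SIZE)
    ]


if __name__ == "__main__":
    # Second block contains an outlier; it cannot disturb the first block.
    weights = [0.01 * i for i in range(16)] + [0.01 * i for i in range(15)] + [8.0]
    for idx, (scale, codes) in enumerate(quantize(weights)):
        chunk = weights[idx * BLOCK_SIZE:(idx + 1) * BLOCK_SIZE]
        err = max(abs(a - b) for a, b in zip(chunk, dequantize_block(scale, codes)))
        print(f"block {idx}: scale={scale:.4f}, max abs error={err:.4f}")
```

Because each block carries its own scale, a single large weight coarsens the resolution of only the 16 values around it rather than the whole tensor, which is the intuition behind fine-grained block scaling.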

Disaggregated Inference with NVIDIA Dynamo

NVIDIA Dynamo, a low-latency distributed inference framework, separates the input processing (prefill) and output generation (decode) phases so that each can be scheduled and provisioned independently. This disaggregation improves resource allocation and throughput, particularly for long-context workloads such as 8K input tokens with 1K output tokens, within the Mistral 3 family’s 256K-token context window.
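The split between the two phases can be sketched as a toy simulation. Nothing here reflects Dynamo's actual API: the class names, the dictionary standing in for a KV cache handle, and the single-threaded loop are all assumptions made for illustration. In a real deployment the two worker types run concurrently on separate GPUs.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    request_id: int
    prompt_tokens: int
    max_new_tokens: int
    generated: int = 0


class PrefillWorker:
    """Processes a whole prompt in one pass and hands off a KV cache handle."""

    def run(self, req):
        # Stand-in for the real KV cache: just track how many tokens it holds.
        return {"request_id": req.request_id, "cached_tokens": req.prompt_tokens}


class DecodeWorker:
    """Generates one token per step for every request it has admitted."""

    def __init__(self):
        self.active = []

    def admit(self, req, kv_cache):
        self.active.append((req, kv_cache))

    def step(self):
        finished = []
        for req, kv_cache in self.active:
            req.generated += 1
            kv_cache["cached_tokens"] += 1  # each new token extends the cache
            if req.generated >= req.max_new_tokens:
                finished.append(req)
        self.active = [
            (r, kv) for r, kv in self.active if r.generated < r.max_new_tokens
        ]
        return finished


def serve(requests):
    """Route each request through prefill once, then decode until it finishes."""
    prefill, decode = PrefillWorker(), DecodeWorker()
    pending, done, steps = deque(requests), [], 0
    while pending or decode.active:
        if pending:
            req = pending.popleft()
            decode.admit(req, prefill.run(req))  # hand-off between the phases
        done.extend(decode.step())
        steps += 1
    return done, steps


if __name__ == "__main__":
    finished, steps = serve([Request(0, 8192, 1024), Request(1, 8192, 1024)])
    print([r.request_id for r in finished], steps)
```

The point of the separation is that prefill handles thousands of prompt tokens in one parallel pass while decode advances one token at a time per request, so the two phases stress hardware differently and benefit from being sized and batched on their own terms.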

Extending AI Performance from Cloud to Edge

Recognizing the growing importance of on-device AI, the Ministral 3 series is tailored for edge environments, delivering high-speed inference on platforms like NVIDIA GeForce RTX and Jetson modules.

Accelerated Edge AI on RTX and Jetson

- RTX 5090: Ministral-3B models deliver fast local inference, bringing workstation-class AI capabilities to local PCs, which keeps data on the device and speeds up experimentation.

- Jetson Thor: For robotics and embedded AI, the Ministral-3-3B-Instruct model runs at 52 tokens per second at a concurrency of one and scales to higher aggregate throughput with multiple concurrent requests.

Robust Ecosystem Support

NVIDIA has partnered with the open-source community to ensure broad compatibility and ease of use:

- llama.cpp & Ollama: Integration with these frameworks accelerates local development cycles and reduces latency.

- SGLang: Supports Mistral Large 3 with features like disaggregated serving and speculative decoding.

- vLLM: Adds expanded kernel support, speculative decoding (EAGLE), Blackwell architecture compatibility, and additional parallelism options.

Enterprise-Ready Deployment with NVIDIA NIM

To facilitate seamless enterprise adoption, Mistral Large 3 and Ministral-14B-Instruct models are accessible via the NVIDIA API catalog and preview API. Soon, downloadable NVIDIA NIM microservices will offer containerized, production-ready deployments compatible with any GPU-accelerated infrastructure.

This approach democratizes access to cutting-edge AI, enabling organizations to harness the GB200 NVL72’s 10x performance advantage without the need for complex custom engineering.

Setting a New Benchmark for Open AI Innovation

The NVIDIA-accelerated Mistral 3 family establishes a new paradigm for open-source AI, combining state-of-the-art performance with flexible, open licensing. From massive data center deployments to edge-friendly models running on RTX 5090 GPUs, this collaboration paves the way for scalable, efficient AI solutions.

Looking ahead, upcoming enhancements such as multi-token prediction (MTP) and EAGLE-3 speculative decoding are expected to raise performance further, positioning the Mistral 3 family as a foundation for next-generation AI applications.

Try It Yourself

Developers interested in benchmarking these advancements can access the Mistral 3 models directly on Hugging Face or explore hosted, deployment-free versions to evaluate latency and throughput tailored to their specific needs.
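A minimal timing harness for such an evaluation might look like the sketch below. The `generate` callable is a hypothetical stand-in for whatever client or local runtime is being tested; it is assumed to take a prompt and a token budget and to return the number of tokens it produced. `fake_generate` exists only so the example runs without a model.

```python
import time


def benchmark(generate, prompt, max_new_tokens, runs=3):
    """Time a generation callable and report latency and tokens per second.

    generate(prompt, max_new_tokens) is a hypothetical callable that must
    return the number of tokens it actually produced.
    """
    results = []
    for _ in range(runs):
        start = time.perf_counter()
        produced = generate(prompt, max_new_tokens)
        elapsed = time.perf_counter() - start
        results.append({
            "latency_s": elapsed,
            "tokens": produced,
            "tokens_per_s": produced / elapsed if elapsed > 0 else float("inf"),
        })
    return results


def fake_generate(prompt, max_new_tokens):
    """Stand-in for a real model call: sleeps briefly for each token."""
    for _ in range(max_new_tokens):
        time.sleep(0.001)
    return max_new_tokens


if __name__ == "__main__":
    for run in benchmark(fake_generate, "Hello", max_new_tokens=50):
        print(f"{run['tokens']} tokens in {run['latency_s']:.3f} s "
              f"({run['tokens_per_s']:.1f} tokens/s)")
```

Running several repetitions and discarding the first is a common precaution, since the first call often includes one-time costs such as model loading or connection setup.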