Image Credit: VentureBeat with ChatGPT

Researchers at Katanemo Labs have introduced Arch-Router, a new routing model and framework designed to intelligently map user queries to the most suitable large language model (LLM).

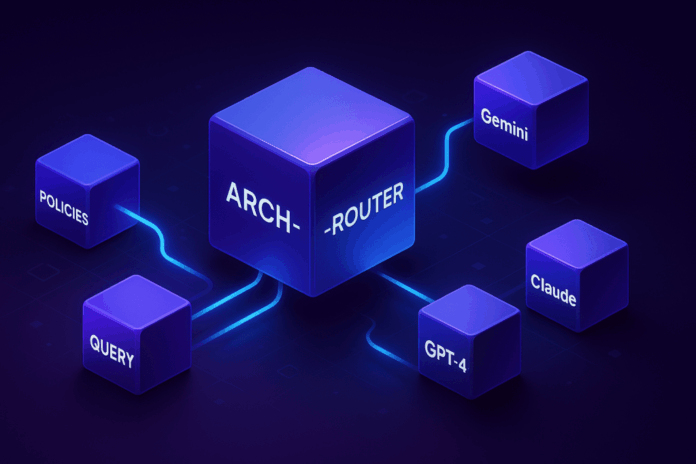

Arch-Router is a routing model and framework that helps enterprises build products that rely upon multiple LLMs. It aims to solve the key challenge of how to direct user queries to the most suitable large language model (LLM) without having to rely on rigid logic, or costly retraining each time something changes.

The challenges of LLM Routing

As LLMs grow in number, developers are moving away from single-model setups and towards multi-model systems, which use the strengths of each model to perform specific tasks (e.g., code generation, text summarization, or image editing). LLM routing is a key technique for building and deploying these systems. It acts as a traffic controller that directs every user query to the appropriate model.

Routing methods fall into two main categories: “task-based routing,” in which queries are routed according to predefined tasks, and “performance-based routing,” which aims for the best balance between cost and performance.

Task-based routing is not able to handle changing or unclear user intentions, especially in multi-turn conversations. Performance-based routing, by contrast, is rigidly based on benchmark scores and ignores real-world preferences. It also adapts poorly to changes in models without costly fine-tuning.

Fundamentally, as the Katanemo Labs researchers note in their paper, existing routing approaches typically optimize for benchmark performance while neglecting human preferences driven by subjective evaluation criteria.

A new framework for preference-aligned routing

To address these limitations, the researchers propose a “preference-aligned routing” framework that matches queries to routing policies based on user-defined preferences.

This framework allows users to define their routing policies in natural language using a “Domain-Action Taxonomy.” This two-level hierarchy reflects the way people describe tasks in real life, starting with an overarching topic (the Domain), such as “finance” or “legal,” and then narrowing down to a specific task (the Action), such as “summarization” or “code generation.”

Each policy is then linked to a preferred model, allowing developers to make routing decisions based on real-world requirements rather than benchmark scores. According to the paper, “This taxonomy is a mental model that helps users define clear and structured routing policies.”
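To make the taxonomy concrete, here is a minimal sketch of how such policies might be written down. The `RoutePolicy` class, its field names, and the `model-a`/`model-b` placeholders are assumptions made for illustration and are not taken from the paper or from Arch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutePolicy:
    """One routing policy in a Domain-Action style taxonomy (hypothetical schema)."""
    domain: str        # broad topic, e.g. "legal"
    action: str        # specific task within the domain, e.g. "summarization"
    description: str   # natural-language description written by the user
    model: str         # preferred model for this policy (placeholder names below)

    @property
    def name(self) -> str:
        # Short identifier derived from the two taxonomy levels.
        return f"{self.domain}_{self.action}"


POLICIES = [
    RoutePolicy("legal", "summarization",
                "Summarize contracts, filings, and other legal documents.",
                "model-a"),
    RoutePolicy("finance", "code_generation",
                "Write code for financial analysis and reporting.",
                "model-b"),
]

# Policy name -> preferred model, built directly from the policy list.
POLICY_TO_MODEL = {p.name: p.model for p in POLICIES}

for policy in POLICIES:
    print(f"{policy.name}: {policy.description} -> {policy.model}")
```

Keeping the description and the preferred model side by side means a policy reads the way a person would state it: what the task is, and which model should handle it.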

Routing happens in two stages. First, a preference aligned router model selects the best policy based on the user query. A mapping function then connects the selected policy with its designated LLM.

Since the logic for selecting the model is separate from the policy, it’s possible to add, remove, or swap models by editing the routing policy. This can be done without retraining or modifying the router. This decoupling allows for the flexibility needed in practical deployments where models and use-cases are constantly changing.
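The decoupling can be sketched in a few lines. In this hypothetical example, `toy_policy_selector` is a keyword check that merely stands in for the router model (it is not how Arch-Router selects policies), and the model names are placeholders.

```python
from typing import Callable, Dict

# Stage 2 data: policy name -> model. Model names are placeholders.
policy_to_model: Dict[str, str] = {
    "image_editing": "model-a",
    "document_creation": "model-b",
}


def toy_policy_selector(query: str) -> str:
    """Stand-in for the router model; a keyword check, NOT how Arch-Router works."""
    return "image_editing" if "image" in query.lower() else "document_creation"


def route(query: str,
          select_policy: Callable[[str], str],
          mapping: Dict[str, str]) -> str:
    policy = select_policy(query)   # stage 1: pick the best-matching policy
    return mapping[policy]          # stage 2: look up that policy's model


before = route("Crop this image to a square", toy_policy_selector, policy_to_model)

# Swap the model behind a policy: only the mapping changes, the selector does not.
policy_to_model["image_editing"] = "model-c"
after = route("Crop this image to a square", toy_policy_selector, policy_to_model)

print(before, after)
```

Because the selector only ever returns a policy name, replacing the model behind that name is a one-line change to the mapping.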

The policy selection is powered by Arch-Router, a compact 1.5B parameter language model fine-tuned for preference-aligned routing. Arch-Router receives the user query and the complete set of policy descriptions within its prompt. It then generates the identifier of the best-matching policy.

Since the policies are part of the input, the system can adapt to new or modified routes at inference time through in-context learning and without retraining. This generative approach allows Arch-Router to use its pre-trained knowledge to understand the semantics of both the query and the policies, and to process the entire conversation history at once.
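A rough sketch of what "policies as part of the input" could look like follows. The prompt layout, the `build_router_prompt` and `parse_policy` helpers, and the `other` fallback name are illustrative assumptions; Arch-Router's actual prompt template is not described in this article.

```python
from typing import Dict, List


def build_router_prompt(policies: Dict[str, str],
                        conversation: List[Dict[str, str]]) -> str:
    """Assemble a single prompt containing all policies and the full conversation.

    The layout is an illustrative assumption, not Arch-Router's real template.
    """
    policy_lines = [f"- {name}: {desc}" for name, desc in policies.items()]
    turn_lines = [f"{turn['role']}: {turn['content']}" for turn in conversation]
    return "\n".join([
        "Routing policies:",
        *policy_lines,
        "",
        "Conversation:",
        *turn_lines,
        "",
        "Reply with the name of the best-matching policy.",
    ])


def parse_policy(model_output: str, policies: Dict[str, str],
                 fallback: str = "other") -> str:
    """Accept the generated text only if it names a known policy."""
    candidate = model_output.strip()
    return candidate if candidate in policies else fallback


policies = {
    "image_editing": "Modify, crop, or retouch an existing image.",
    "document_creation": "Draft a new document such as a memo or report.",
}
conversation = [
    {"role": "user", "content": "I need a quarterly report for my team."},
    {"role": "assistant", "content": "Sure, what should it cover?"},
    {"role": "user", "content": "Sales numbers, and keep it to one page."},
]
prompt = build_router_prompt(policies, conversation)

# Adding a route at inference time is just another entry in the prompt.
policies["code_generation"] = "Write new code from a description."
updated_prompt = build_router_prompt(policies, conversation)
print(updated_prompt)
```

The new `code_generation` route becomes available to the router without any change to model weights, which is the practical meaning of adapting through in-context learning.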

A common concern with including extensive policies in a prompt is the potential for increased latency. However, the researchers designed Arch-Router to be highly efficient. “While the length of routing policies can get long, we can easily increase the context window of Arch-Router with minimal impact on latency,” explains Salman Paracha, co-author of the paper and Founder/CEO of Katanemo Labs. He notes that latency is primarily driven by the length of the output, and for Arch-Router, the output is simply the short name of a routing policy, like “image_editing” or “document_creation.”
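A back-of-the-envelope model shows why output length tends to dominate. The sketch below assumes the prompt is processed in parallel while output tokens are generated one at a time; the throughput figures are made-up illustrative values, not measurements of Arch-Router.

```python
def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       prefill_tok_per_s: float = 5000.0,
                       decode_tok_per_s: float = 50.0) -> float:
    """Toy latency model with illustrative (assumed) throughput numbers.

    Prompt tokens are processed in parallel (fast); output tokens are
    generated sequentially (slow).
    """
    return prompt_tokens / prefill_tok_per_s + output_tokens / decode_tok_per_s


# Same short output (a policy name), with the policy prompt doubled in length.
short_policies = estimate_latency_s(prompt_tokens=1000, output_tokens=5)
long_policies = estimate_latency_s(prompt_tokens=2000, output_tokens=5)

# Same prompt, but a long free-text answer instead of a policy name.
long_answer = estimate_latency_s(prompt_tokens=1000, output_tokens=200)

print(f"{short_policies:.2f}s {long_policies:.2f}s {long_answer:.2f}s")
```

Under these assumed rates, doubling the policy text adds far less time than generating a long answer would, which is consistent with Paracha's point that a router emitting only a short policy name stays fast.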

Arch-Router in action

To build Arch-Router, the researchers fine-tuned a 1.5B-parameter version of the Qwen 2.5 model on a curated dataset of 43,000 examples. They then compared its performance with proprietary models from OpenAI, Anthropic, and Google on four public datasets designed to evaluate conversational AI.

According to the results, Arch-Router achieved the highest overall routing score, 93.17%, beating all other models by an average of 7.71%. Its advantage grew as conversations got longer, demonstrating a strong ability to track context across multiple turns.

In practice, this approach is already being applied in several scenarios, according to Paracha. For example, in open-source coding tools, developers use Arch-Router to direct different stages of their workflow, such as “code design,” “code understanding,” and “code generation,” to the LLMs best suited for each task. Similarly, enterprises can route document creation requests to a model like Claude 3.7 Sonnet while sending image editing tasks to Gemini 2.5 Pro.

The system is also ideal “for personal assistants in various domains, where users have a diversity of tasks from text summarization to factoid queries,” Paracha said, adding that “in those cases, Arch-Router can help developers unify and improve the overall user experience.”

The framework is integrated with Arch, Katanemo Labs’ AI-native proxy server for agents, which allows developers to implement sophisticated traffic-shaping rules. When integrating a new LLM, for example, a team could send a small amount of traffic to it, verify its performance using internal metrics, and then transition traffic fully with confidence. The company is also working on integrating its tools with evaluation platforms in order to streamline this process further for enterprise developers.
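The gradual rollout described here resembles a canary-style traffic split. The following sketch is a generic illustration of that idea, not Arch's configuration or API; `pick_model`, the request IDs, and the model names are hypothetical.

```python
import hashlib


def pick_model(request_id: str, current: str, candidate: str,
               candidate_share: float) -> str:
    """Deterministically send roughly `candidate_share` of requests to `candidate`."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a bucket in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return candidate if bucket < candidate_share else current


request_ids = [f"req-{i}" for i in range(10_000)]
choices = [pick_model(r, "model-a", "model-b", candidate_share=0.05)
           for r in request_ids]
share = choices.count("model-b") / len(choices)
print(f"candidate received {share:.1%} of requests")
```

Hashing the request ID keeps the split stable across retries of the same request. Raising `candidate_share` to 1.0 completes the transition once the team's internal metrics look acceptable.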

The ultimate goal is to move past siloed AI deployments. Paracha says that Arch-Router, and Arch in general, helps developers and enterprises move away from fragmented LLM systems to a unified policy-driven system. “In scenarios with diverse user tasks, our framework helps transform that task and LLM segmentation into a unified, seamless experience for the end user.”
