Software engineering automation is changing quickly, driven largely by advances in Large Language Models (LLMs). Yet many existing strategies for training capable agents depend on proprietary models or expensive teacher-guided techniques, which leaves open-weight LLMs with limited effectiveness in practical applications. To address this gap, researchers from Nebius AI and Humanoid have introduced a reinforcement learning framework for training software engineering agents that handle long, multi-turn interactions. Their approach uses a modified version of the Decoupled Clip and Dynamic sAmpling Policy Optimization (DAPO) algorithm and applies reinforcement learning (RL) to open-weight LLMs on realistic, iterative software engineering tasks, going beyond the prevalent single-turn, bandit-style RL setups.

Moving Past Single-Step Reinforcement Learning

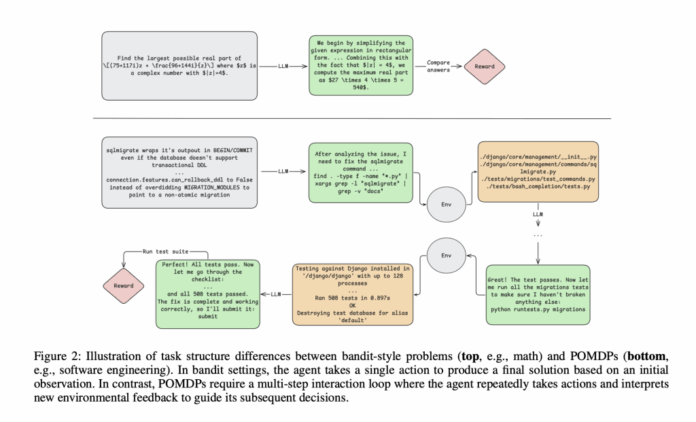

Traditional RL techniques for LLMs often focus on tasks like one-off code generation or mathematical problem-solving, where the reward arrives only after the task is complete and there is no intermediate feedback. Software engineering (SWE), in contrast, requires agents to carry out long sequences of actions, interpret complex feedback such as compiler diagnostics and test results, and keep track of context over very long token sequences, sometimes exceeding 100,000 tokens. This is far beyond what typical single-step interaction frameworks handle.

Fundamental Obstacles in Applying RL to Software Engineering

- Extended Reasoning Horizons: Agents must preserve logical consistency across numerous steps, often requiring context windows that surpass 100k tokens.

- Dynamic, Stateful Feedback: Each action produces meaningful observations, such as shell command outputs or test suite feedback, that inform subsequent decisions.

- Delayed and Sparse Rewards: Success signals usually appear only after complex, multi-step interactions, complicating the process of attributing credit to individual actions.

- Complex Evaluation Metrics: Assessing progress requires rolling out entire interaction trajectories, and the resulting signal can be noisy because of intermittent test failures.

Innovative Approach: Enhanced DAPO and Agent Architecture

The team developed a two-phase training strategy for a Qwen2.5-72B-Instruct agent, designed to tackle the intricacies of long-context software engineering tasks:

Phase 1: Rejection Fine-Tuning (RFT)

The first stage is supervised fine-tuning on the model's own successful rollouts. The agent is run on 7,249 curated software engineering tasks from the SWE-REBENCH dataset, and only interaction sequences whose result passes the environment's test suite are kept for training. Actions that were invalid in the environment's expected format are masked out during training so the model does not learn to reproduce them. This stage alone raises accuracy on the SWE-bench Verified benchmark from 11% to 20%.
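The selection and masking described in this phase can be sketched as follows. This is a minimal illustration, not the study's pipeline: the `Step` and `Trajectory` types and both function names are hypothetical, and masking environment observations out of the loss is an added assumption (it is common practice in agent fine-tuning, but the article only mentions masking invalid actions).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Step:
    action_tokens: List[int]       # tokens the model generated at this turn
    observation_tokens: List[int]  # tokens returned by the environment
    action_valid: bool             # False if the environment rejected the action's format


@dataclass
class Trajectory:
    steps: List[Step]
    tests_passed: bool             # terminal outcome from the task's test suite


def select_successful(trajectories: List[Trajectory]) -> List[Trajectory]:
    """Rejection step: keep only rollouts whose result passed the tests."""
    return [t for t in trajectories if t.tests_passed]


def build_example(traj: Trajectory) -> Tuple[List[int], List[int]]:
    """Flatten a trajectory into (tokens, loss_mask).

    The mask is 1 only on tokens of validly formatted actions.
    Invalid actions and (by assumption) observations get 0.
    """
    tokens: List[int] = []
    mask: List[int] = []
    for step in traj.steps:
        tokens.extend(step.action_tokens)
        mask.extend([1 if step.action_valid else 0] * len(step.action_tokens))
        tokens.extend(step.observation_tokens)
        mask.extend([0] * len(step.observation_tokens))
    return tokens, mask
```

The supervised loss would then be computed only over positions where the mask is 1, so rejected rollouts and malformed actions never contribute a gradient.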

Phase 2: Reinforcement Learning with a Modified DAPO Algorithm

Building on DAPO (Decoupled Clip and Dynamic sAmpling Policy Optimization), the researchers' modified variant combines several techniques that improve scalability and training stability:

- Asymmetric Clipping: Uses a larger upper clipping bound than lower bound on the policy ratio, which helps maintain policy entropy, prevents premature convergence, and encourages exploration.

- Dynamic Sample Filtering: Concentrates learning on trajectories that provide meaningful feedback signals.

- Length Penalties: Discourages excessively long episodes, helping the agent avoid infinite loops.

- Token-Level Gradient Averaging: Averages the loss over all tokens rather than per trajectory, so every token contributes equally to the gradient update and long trajectories are not down-weighted.
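As a rough illustration of how the four ingredients listed above fit together, the following sketch uses plain Python. The function names, the clipping bounds (0.2 and 0.28), the group-normalized advantage, and the linear shape of the length penalty are all assumptions chosen for illustration, not details taken from the study.

```python
import math
from typing import List, Sequence, Tuple


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within a group of rollouts for the same task (assumed scheme)."""
    mean = sum(rewards) / len(rewards)
    var = sum((r - mean) ** 2 for r in rewards) / len(rewards)
    return [(r - mean) / (math.sqrt(var) + eps) for r in rewards]


def has_learning_signal(rewards: Sequence[float]) -> bool:
    """Dynamic sample filtering: a group where every rollout got the same
    reward yields zero advantages, so it is dropped."""
    return max(rewards) != min(rewards)


def length_penalty(num_tokens: int, soft_limit: int, hard_limit: int) -> float:
    """Hypothetical linear penalty that grows from 0 to -1 between the two limits."""
    if num_tokens <= soft_limit:
        return 0.0
    if num_tokens >= hard_limit:
        return -1.0
    return -(num_tokens - soft_limit) / (hard_limit - soft_limit)


def clipped_term(ratio: float, advantage: float,
                 eps_low: float = 0.2, eps_high: float = 0.28) -> float:
    """PPO-style clipped surrogate with a wider upper bound than lower bound."""
    clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
    return min(ratio * advantage, clipped * advantage)


def token_level_loss(batch: List[Tuple[List[float], float]]) -> float:
    """Average the surrogate over all tokens in the batch.

    Each batch item is (per-token probability ratios, trajectory advantage).
    Dividing by the total token count gives every token equal weight.
    """
    total, count = 0.0, 0
    for ratios, advantage in batch:
        for ratio in ratios:
            total += clipped_term(ratio, advantage)
            count += 1
    return -total / count
```

With these illustrative bounds, a ratio of 1.25 and a positive advantage passes through unclipped, whereas a symmetric bound of 0.2 would cap it at 1.2. That extra headroom for raising the probability of good actions is the intuition behind asymmetric clipping.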

The agent operates within a ReAct-style loop, integrating reasoning with tool usage. Its toolkit includes executing arbitrary shell commands, precise code modifications, navigation and search utilities, and a submission action to mark episode completion. All interactions occur within a secure sandbox environment, initialized from authentic repository snapshots and guided by GitHub-style issue prompts.
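The loop described above can be sketched with a toy stand-in for the sandbox. Everything here is hypothetical: `ToySandbox` is an in-memory dictionary of files rather than a real repository snapshot, the tool names and the `path|old|new` edit syntax are invented for the example, and a scripted policy takes the place of the LLM.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

History = List[Tuple[str, str]]


@dataclass
class ToySandbox:
    """Stand-in for the real sandbox: an in-memory 'repository' of files."""
    files: Dict[str, str]
    submitted: bool = False

    def run(self, tool: str, arg: str) -> str:
        if tool == "view":
            return self.files.get(arg, f"error: no such file {arg}")
        if tool == "search":
            hits = [path for path, text in self.files.items() if arg in text]
            return "\n".join(hits) or "no matches"
        if tool == "edit":
            path, old, new = arg.split("|")
            if old not in self.files.get(path, ""):
                return "error: text to replace not found"
            self.files[path] = self.files[path].replace(old, new, 1)
            return "ok"
        if tool == "submit":
            self.submitted = True
            return "submitted"
        return f"error: unknown tool {tool}"


def react_loop(issue: str, env: ToySandbox,
               policy: Callable[[History], Tuple[str, str, str]],
               max_steps: int = 10) -> History:
    """Alternate reasoning, action, and observation until submit or the step limit."""
    history: History = [("issue", issue)]
    for _ in range(max_steps):
        thought, tool, arg = policy(history)
        observation = env.run(tool, arg)
        history.append(("thought", thought))
        history.append(("action", f"{tool} {arg}"))
        history.append(("observation", observation))
        if env.submitted:
            break
    return history


def scripted_policy(history: History) -> Tuple[str, str, str]:
    """Fixed three-step script standing in for the model."""
    script = [
        ("Find where add is defined.", "search", "def add"),
        ("Fix the operator.", "edit", "calc.py|a - b|a + b"),
        ("The fix is in place.", "submit", ""),
    ]
    steps_taken = sum(1 for kind, _ in history if kind == "action")
    return script[steps_taken]
```

In a real setup the policy would be the LLM conditioned on the full history, which is why the history, and therefore the context length, grows with every step.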

Scaling Context Lengths and Benchmarking Real-World Performance

Initially, the agent was trained with a context window of 65,000 tokens, already twice the context length of most open models, but performance plateaued at 32% accuracy. A second RL phase expanded the context length to 131,000 tokens and doubled the maximum episode length, with training focused on the most impactful tasks. This extension allowed the agent to handle the longer stack traces and code diffs typical of real-world debugging and patching.

Performance Highlights: Narrowing the Gap with Leading Models

- The final RL-trained agent achieved 39% Pass@1 accuracy on the SWE-bench Verified benchmark, roughly doubling the 20% rejection fine-tuned baseline and rivaling state-of-the-art open-weight models such as DeepSeek-V3-0324, all without relying on teacher supervision.

- On unseen SWE-REBENCH splits, the agent maintained robust performance, scoring 35% in May and 31.7% in June evaluations.

- When benchmarked against top open-source baselines and specialized software engineering agents, the RL-trained model matched or exceeded several competitors, underscoring the efficacy of the reinforcement learning approach in this domain.

| Model | Pass@1 SWE-bench Verified | Pass@10 SWE-bench Verified | Pass@1 SWE-REBENCH May | Pass@10 SWE-REBENCH May |
|---|---|---|---|---|
| Qwen2.5-72B-Instruct (RL, final) | 39.04% | 58.4% | 35.0% | 52.5% |
| DeepSeek-V3-0324 | 39.56% | 62.2% | 36.75% | 60.0% |
| Qwen3-235B no-thinking | 25.84% | 54.4% | 27.25% | 57.5% |
| Llama4 Maverick | 15.84% | 47.2% | 19.0% | 50.0% |

Note: Pass@1 scores are averages over 10 runs; the accompanying standard errors are not shown in this table.
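For readers unfamiliar with the Pass@1 and Pass@10 columns, the sketch below shows one common way such numbers are computed: the standard unbiased pass@k estimator, plus a mean and standard error across runs. The article does not state which estimator the study used, so treat this as an assumption. Function names are illustrative.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one task: n attempts, c of them successful.

    Equals the probability that a random subset of k attempts
    contains at least one success.
    """
    if n - c < k:
        return 1.0
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


def mean_and_stderr(values: Sequence[float]) -> Tuple[float, float]:
    """Mean and standard error of a per-run metric such as Pass@1."""
    return mean(values), stdev(values) / math.sqrt(len(values))
```

With 10 runs per task, `pass_at_k(10, c, 1)` is simply the fraction of successful runs, and `pass_at_k(10, c, 10)` is 1 whenever at least one run solved the task.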

Critical Takeaways and Future Directions

- Credit Assignment Complexity: Sparse reward environments continue to pose challenges for RL. Future research may explore reward shaping, step-wise critics, or prefix-based rollout strategies to provide more granular feedback.

- Confidence and Uncertainty Estimation: Practical agents must discern when to abstain or express uncertainty. Incorporating measures like output entropy or explicit confidence scores is a promising avenue.

- Robust Infrastructure: Training used context parallelism across 16 NVIDIA H200 GPUs, orchestrated via Kubernetes and Tracto AI, with vLLM for fast inference. This underlines how much long-context RL training depends on scalable infrastructure.
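The output-entropy idea from the confidence and uncertainty takeaway above can be illustrated with a small sketch. It is not part of the study: the function names and the threshold of 1.0 nat are hypothetical, and a real agent would read the per-token probability distributions from its inference engine rather than from hand-written lists.

```python
import math
from typing import Sequence


def token_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def mean_entropy(distributions: Sequence[Sequence[float]]) -> float:
    """Average entropy over all generated tokens in a response."""
    return sum(token_entropy(d) for d in distributions) / len(distributions)


def should_abstain(distributions: Sequence[Sequence[float]],
                   threshold: float = 1.0) -> bool:
    """Flag a response as uncertain when its mean entropy exceeds the threshold."""
    return mean_entropy(distributions) > threshold
```

A sharply peaked distribution yields entropy near zero, while a uniform distribution over four tokens yields about 1.39 nats, so only the latter would trigger abstention at this threshold.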

Final Thoughts

This study shows that reinforcement learning is a viable framework for developing autonomous software engineering agents with open-weight LLMs. By training on long-horizon, multi-turn interactions in realistic environments, the approach points toward scalable, teacher-free agent training that learns from interaction rather than static instruction. With further refinement, such RL-driven pipelines could deliver more efficient, dependable, and adaptable automation for software development.