Is it possible for a compact 3-billion-parameter (3B) language model to achieve reasoning capabilities comparable to models with over 30 billion parameters simply by optimizing its training methodology rather than increasing its size? The Nanbeige LLM Lab at Boss Zhipin introduces Nanbeige4-3B, a family of 3B-parameter language models trained with a strong focus on data quality, curriculum learning, knowledge distillation, and reinforcement learning.

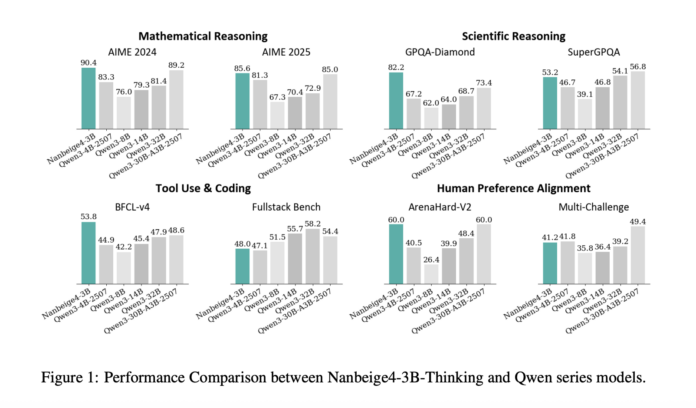

The team provides two main model versions: Nanbeige4-3B-Base and Nanbeige4-3B-Thinking. They benchmark the reasoning-optimized Nanbeige4-3B-Thinking against the Qwen3 series, which ranges from 4B to 32B parameters, to evaluate performance across various reasoning tasks.

Performance Highlights Across Benchmarks

On the challenging AIME 2024 benchmark, Nanbeige4-3B-2511 achieves an average score of 90.4, surpassing Qwen3-32B-2504’s 81.4. Similarly, on GPQA-Diamond, Nanbeige4-3B-2511 scores 82.2, well ahead of Qwen3-14B-2504 (64.0) and Qwen3-32B-2504 (68.7). These results indicate that, on these specific reasoning benchmarks, a 3B model can outperform a counterpart roughly ten times its size.

In addition, Nanbeige4-3B shows stronger tool use on the BFCL-V4 benchmark, scoring 53.8 compared with 47.9 for Qwen3-32B and 48.6 for Qwen3-30B-A3B. On Arena-Hard V2, it matches the top score among the compared models, 60.0. However, it does not lead in every category: on Fullstack-Bench it scores 48.0, trailing Qwen3-14B (55.7) and Qwen3-32B (58.2), and on SuperGPQA it narrowly misses the top spot with 53.2 versus Qwen3-32B’s 54.1.

Innovative Training Strategies Driving 3B Model Excellence

Advanced Data Filtering and Strategic Resampling

During pretraining, the researchers employ a hybrid approach combining multi-dimensional tagging with similarity-based scoring. They condense their labeling framework to 20 dimensions, discovering that content-related labels are more predictive than format-based ones. Moreover, a nuanced 0-to-9 scoring scale outperforms simple binary labels. For similarity scoring, they construct a massive retrieval database containing hundreds of billions of entries, enabling hybrid text and vector-based retrieval.
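To make the two scoring signals concrete, here is a minimal sketch of how per-dimension 0-to-9 tag scores and a hybrid text/vector similarity could be computed. The source does not publish its formulas: the Jaccard overlap (a stand-in for a real text retriever), the toy embedding vectors, the tag dimension names, and the weights `alpha` and `weights` are all hypothetical.

```python
import math

def lexical_score(query: str, doc: str) -> float:
    """Jaccard overlap of lowercase word sets; a stand-in for a real text retriever such as BM25."""
    q, d = set(query.lower().split()), set(doc.lower().split())
    if not q or not d:
        return 0.0
    return len(q & d) / len(q | d)

def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def hybrid_similarity(query: str, doc: str, q_vec: list[float], d_vec: list[float],
                      alpha: float = 0.5) -> float:
    """Blend lexical and vector similarity; alpha is a hypothetical mixing weight."""
    return alpha * lexical_score(query, doc) + (1 - alpha) * cosine(q_vec, d_vec)

def quality_score(tags: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted mean of per-dimension tag scores on a 0-9 scale."""
    for name, score in tags.items():
        if not 0 <= score <= 9:
            raise ValueError(f"{name}: score {score} outside 0-9")
    total_w = sum(weights[name] for name in tags)
    return sum(weights[name] * score for name, score in tags.items()) / total_w

# Toy usage with made-up tag dimensions and weights.
print(quality_score({"reasoning_depth": 9, "knowledge_density": 6},
                    {"reasoning_depth": 2.0, "knowledge_density": 1.0}))  # 8.0
```

Content-oriented dimensions (like the two invented here) are used because the text reports that content labels predict usefulness better than format labels.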

The training corpus is curated by filtering down to 12.5 trillion high-quality tokens, then selecting a 6.5-trillion-token subset of even higher quality, which is upsampled for multiple epochs to form a final 23-trillion-token dataset. This pipeline goes beyond mere data cleaning: it incorporates scoring, retrieval, and resampling based on explicit utility metrics, setting it apart from conventional small-model training approaches.
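The source does not state the exact epoch count. Under one possible reading, assuming the 6 trillion filtered tokens outside the high-quality subset are each seen once and the remaining budget goes entirely to repeating the 6.5-trillion-token subset, the arithmetic is:

```python
def upsample_epochs(filtered_t: float, hq_subset_t: float, final_t: float) -> float:
    """Epochs over the high-quality subset needed to reach final_t tokens.

    Assumes (hypothetically) that the rest of the filtered pool,
    filtered_t - hq_subset_t, is seen exactly once.
    """
    rest_t = filtered_t - hq_subset_t
    if hq_subset_t <= 0 or final_t < rest_t:
        raise ValueError("inconsistent token budget")
    return (final_t - rest_t) / hq_subset_t

# Figures in trillions of tokens, taken from the text.
epochs = upsample_epochs(filtered_t=12.5, hq_subset_t=6.5, final_t=23.0)
print(f"{epochs:.2f}")  # 2.62
```

Under that assumption the high-quality subset would be seen roughly 2.6 times; a different mixing rule would give a different number.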

Fine-Grained Data Utility Scheduling (FG-WSD)

Whereas typical pretraining recipes sample data uniformly throughout training, Nanbeige4-3B uses Fine-Grained Warmup-Stable-Decay (FG-WSD), a curriculum learning strategy that progressively emphasizes higher-quality data as training advances. Rather than treating warmup, stable, and decay solely as learning rate phases, it builds a data curriculum into the stable phase.
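A minimal sketch of the idea pairs a standard WSD learning-rate schedule with a data-mixture weight that shifts toward high-quality data during the stable phase. The linear ramp, phase fractions, peak learning rate, and start/end mixture shares are hypothetical; the source describes FG-WSD only qualitatively, and its real schedule may change in steps across the named phases.

```python
def _phase_bounds(total: int, warmup_frac: float, decay_frac: float) -> tuple[int, int]:
    """Return (end of warmup, start of decay) as step indices."""
    warmup_end = int(total * warmup_frac)
    decay_start = int(total * (1 - decay_frac))
    return warmup_end, decay_start

def wsd_lr(step: int, total: int, peak_lr: float = 3e-4, min_lr: float = 0.0,
           warmup_frac: float = 0.01, decay_frac: float = 0.1) -> float:
    """Warmup-Stable-Decay learning rate: linear warmup, flat plateau, linear decay."""
    warmup_end, decay_start = _phase_bounds(total, warmup_frac, decay_frac)
    if step < warmup_end:
        return peak_lr * (step + 1) / warmup_end
    if step < decay_start:
        return peak_lr
    progress = (step - decay_start) / max(1, total - decay_start)
    return peak_lr + (min_lr - peak_lr) * progress

def hq_fraction(step: int, total: int, start: float = 0.3, end: float = 0.8,
                warmup_frac: float = 0.01, decay_frac: float = 0.1) -> float:
    """Hypothetical share of high-quality data in the mixture, ramped across the stable phase."""
    warmup_end, decay_start = _phase_bounds(total, warmup_frac, decay_frac)
    if step < warmup_end:
        return start
    if step >= decay_start:
        return end
    progress = (step - warmup_end) / max(1, decay_start - warmup_end)
    return start + (end - start) * progress

for step in (0, 455, 950):
    print(step, wsd_lr(step, 1000), round(hq_fraction(step, 1000), 2))
```

Keeping the learning rate flat while only the mixture moves is what separates a data curriculum from a pure learning-rate schedule.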

In ablation studies with a 1B-parameter model trained on 1 trillion tokens, FG-WSD improved GSM8K performance from 27.1 to 34.3, with consistent gains on other benchmarks such as CMATH, BBH, MMLU, and MMLU-Pro. For the full 3B model, training is divided into Warmup, Diversity-Enriched Stable, High-Quality Stable, and Decay phases, with Adjusted Base Frequency (ABF) applied during decay to extend the context window to 64K tokens.
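Assuming ABF here refers to the common long-context technique of raising the base of the rotary position embedding (RoPE) frequencies, the sketch below shows why it helps: a larger base lowers the slowest rotation frequency, so positional phases stay distinguishable over longer distances. The head dimension of 128 and the base values 10,000 and 1,000,000 are illustrative assumptions, not Nanbeige4-3B's configuration.

```python
import math

def rope_inv_freq(head_dim: int, base: float) -> list[float]:
    """Standard RoPE inverse frequencies: base^(-2i/d) for i in [0, d/2)."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]

def longest_wavelength(head_dim: int, base: float) -> float:
    """Positions covered by one full rotation of the slowest-rotating dimension pair."""
    return 2 * math.pi / min(rope_inv_freq(head_dim, base))

for base in (10_000, 1_000_000):  # hypothetical before/after base values
    print(base, round(longest_wavelength(128, base)))
```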

Multi-Phase Supervised Fine-Tuning and Enhanced Supervision Quality

Post-pretraining fine-tuning begins with a “cold start” phase using approximately 30 million question-answer pairs focused on mathematics, science, and coding, with a 32K-token context length. The mix is roughly 50% math reasoning, 30% scientific reasoning, and 20% coding. Notably, increasing cold-start instruction data from 0.5 million to 35 million samples kept improving performance on AIME 2025 and GPQA-Diamond, with no sign of an early plateau.
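The stated 50/30/20 split can be turned into a per-domain sample budget or a sampling distribution as below. This is a minimal sketch assuming simple proportional allocation; the helper names are hypothetical, and the source does not describe how its mixture was actually assembled.

```python
import random

# Cold-start domain mix from the text, as integer percentages.
COLD_START_MIX = {"math": 50, "science": 30, "code": 20}

def allocate(total: int, mix_pct: dict[str, int]) -> dict[str, int]:
    """Split a sample budget by integer percentages; any rounding remainder goes to the largest domain."""
    if sum(mix_pct.values()) != 100:
        raise ValueError("percentages must sum to 100")
    counts = {name: total * pct // 100 for name, pct in mix_pct.items()}
    counts[max(mix_pct, key=mix_pct.get)] += total - sum(counts.values())
    return counts

def sample_domains(n: int, mix_pct: dict[str, int], seed: int = 0) -> list[str]:
    """Draw n domain labels with probabilities proportional to the mix."""
    rng = random.Random(seed)
    names, weights = zip(*mix_pct.items())
    return rng.choices(names, weights=weights, k=n)

print(allocate(30_000_000, COLD_START_MIX))
# {'math': 15000000, 'science': 9000000, 'code': 6000000}
```

Integer percentages keep the allocation exact; floating-point weights can leave a budget off by one sample.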

The subsequent fine-tuning phase expands to a 64K context length and incorporates a diverse mix of general conversation, writing, agent-style tool use, complex reasoning that targets the model's weak spots, and coding tasks. This stage introduces iterative solution refinement combined with Chain-of-Thought (CoT) reconstruction. The model generates, critiques, and revises responses guided by a dynamic checklist, then reconstructs a coherent CoT that leads to the final refined answer, so the model is not trained on broken reasoning traces left over from heavy editing.
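The generate, critique, revise, and reconstruct loop can be sketched as a small driver that takes the model calls as functions. Everything here is hypothetical: the function names, the fixed checklist (the source describes a dynamic one), the round limit, and the toy lambdas standing in for model calls.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class RefinedExample:
    answer: str
    cot: str
    rounds: int

def refine_with_checklist(
    prompt: str,
    checklist: list[str],
    generate: Callable[[str], str],
    check: Callable[[str, str], bool],
    revise: Callable[[str, list[str]], str],
    reconstruct_cot: Callable[[str, str], str],
    max_rounds: int = 3,
) -> RefinedExample:
    """Generate, critique against a checklist, revise, then rebuild a CoT for the final answer."""
    answer = generate(prompt)
    rounds = 0
    while rounds < max_rounds:
        failed = [item for item in checklist if not check(item, answer)]  # critique step
        if not failed:
            break
        answer = revise(answer, failed)  # revision step
        rounds += 1
    # Write a fresh, coherent trace that ends in the refined answer instead of
    # keeping the patched-together reasoning from the edit history.
    cot = reconstruct_cot(prompt, answer)
    return RefinedExample(answer, cot, rounds)

# Toy stand-ins for model calls (all hypothetical).
result = refine_with_checklist(
    prompt="q",
    checklist=["units", "final"],
    generate=lambda p: "draft",
    check=lambda item, ans: item in ans,
    revise=lambda ans, failed: ans + " " + " ".join(failed),
    reconstruct_cot=lambda p, a: f"Reasoning for {p!r} leading to: {a}",
)
print(result.answer, result.rounds)  # draft units final 1
```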

Dual-Level Preference Distillation and Reinforcement Learning with Verifiers

Knowledge distillation uses Dual-Level Preference Distillation (DPD): at the token level, the student model learns the teacher model's probability distributions, while a sequence-level Direct Preference Optimization (DPO) objective maximizes the margin between preferred and non-preferred outputs. Positive samples come from the teacher, Nanbeige3.5-Pro, and negatives from the 3B student itself; token-level distillation is applied to both, which reduces confident errors and improves the alternative responses.
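A minimal sketch of a dual-level objective in the spirit of DPD: a token-level KL term pulling the student toward the teacher's distribution, plus the standard DPO loss on sequence log-probabilities. Assumptions: the KL direction, the weighting `alpha`, the `beta` value, and the use of a frozen reference policy are common distillation/DPO choices rather than details confirmed by the source.

```python
import math

def token_kl(teacher: list[float], student: list[float], eps: float = 1e-12) -> float:
    """KL(teacher || student) over the vocabulary at one token position."""
    return sum(p * math.log(p / (q + eps)) for p, q in zip(teacher, student) if p > 0)

def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return x + math.log1p(math.exp(-x)) if x > 0 else math.log1p(math.exp(x))

def dpo_loss(logp_pos: float, logp_neg: float, ref_logp_pos: float, ref_logp_neg: float,
             beta: float = 0.1) -> float:
    """Sequence-level DPO loss: -log sigmoid(beta * (policy-vs-reference margin))."""
    margin = (logp_pos - ref_logp_pos) - (logp_neg - ref_logp_neg)
    return softplus(-beta * margin)

def dpd_loss(teacher_dists: list[list[float]], student_dists: list[list[float]],
             seq_logps: tuple[float, float, float, float],
             alpha: float = 1.0, beta: float = 0.1) -> float:
    """Mean token-level KL plus alpha times the DPO term.

    teacher_dists/student_dists cover token positions from both the positive and
    the negative sample; seq_logps = (logp_pos, logp_neg, ref_logp_pos, ref_logp_neg).
    """
    token_term = sum(token_kl(t, s) for t, s in zip(teacher_dists, student_dists)) / len(teacher_dists)
    return token_term + alpha * dpo_loss(*seq_logps, beta=beta)

print(dpd_loss([[0.9, 0.1]], [[0.6, 0.4]], (-1.0, -5.0, -2.0, -2.0)))
```

A larger margin in favor of the teacher's positive sample drives the DPO term toward zero, while the KL term vanishes only when the student's token distributions match the teacher's.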

Reinforcement learning is conducted in domain-specific stages, each using on-policy Group Relative Policy Optimization (GRPO). The team filters on-policy data by average pass rate, retaining samples with success rates between 10% and 90% to exclude trivial and unsolvable cases. For STEM tasks, an agentic verifier executes Python code to check semantic equivalence beyond simple string matching. Coding tasks are validated with synthetic test functions run in a sandbox, and pass/fail outcomes serve as rewards. Human preference alignment uses a pairwise reward model designed to produce concise preference signals and reduce the risk of reward hacking compared with generic language-model reward models.
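The pass-rate filter and the group-relative advantage at the heart of GRPO can be sketched in a few lines. The `binary_reward` helper, which compares answers as exact fractions so that `0.5` and `1/2` match, is a deliberately simple stand-in for the agentic, code-executing verifier described above; the inclusive 10%/90% bounds and the epsilon are assumptions.

```python
import statistics
from fractions import Fraction

def binary_reward(pred: str, ref: str) -> float:
    """1.0 if answers are equal as exact rationals (so '0.5' matches '1/2'), else 0.0.

    Falls back to plain string comparison when either side is not a number.
    """
    try:
        return float(Fraction(pred.strip()) == Fraction(ref.strip()))
    except (ValueError, ZeroDivisionError):
        return float(pred.strip() == ref.strip())

def keep_prompt(rewards: list[float], low: float = 0.1, high: float = 0.9) -> bool:
    """Keep a prompt only if its average pass rate over on-policy rollouts lies in [low, high]."""
    rate = sum(rewards) / len(rewards)
    return low <= rate <= high

def group_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantages: each reward normalized by its group's mean and std."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

rollouts = ["1/2", "0.5", "0.25", "2/4"]  # toy answers sampled for one prompt
rewards = [binary_reward(a, "1/2") for a in rollouts]  # [1.0, 1.0, 0.0, 1.0]
if keep_prompt(rewards):
    print(group_advantages(rewards))
```

Prompts that every rollout solves (or fails) give identical rewards and therefore zero advantage, so the filter mainly discards groups that carry no learning signal.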

Performance Comparison Overview

| Benchmark (Metric) | Qwen3-14B-2504 | Qwen3-32B-2504 | Nanbeige4-3B-2511 |
|---|---|---|---|
| AIME 2024 (avg@8) | 79.3 | 81.4 | 90.4 |
| AIME 2025 (avg@8) | 70.4 | 72.9 | 85.6 |
| GPQA-Diamond (avg@3) | 64.0 | 68.7 | 82.2 |
| SuperGPQA (avg@3) | 46.8 | 54.1 | 53.2 |
| BFCL-V4 (avg@3) | 45.4 | 47.9 | 53.8 |
| Fullstack Bench (avg@3) | 55.7 | 58.2 | 48.0 |
| ArenaHard-V2 (avg@3) | 39.9 | 48.4 | 60.0 |
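The margins in the table are easy to recompute. This sketch copies the scores from the table and reports Nanbeige4-3B-2511's difference from Qwen3-32B-2504 on each benchmark; the dictionary layout is just one convenient choice.

```python
# (Qwen3-14B-2504, Qwen3-32B-2504, Nanbeige4-3B-2511), copied from the comparison table.
SCORES = {
    "AIME 2024": (79.3, 81.4, 90.4),
    "AIME 2025": (70.4, 72.9, 85.6),
    "GPQA-Diamond": (64.0, 68.7, 82.2),
    "SuperGPQA": (46.8, 54.1, 53.2),
    "BFCL-V4": (45.4, 47.9, 53.8),
    "Fullstack Bench": (55.7, 58.2, 48.0),
    "ArenaHard-V2": (39.9, 48.4, 60.0),
}

def margins_vs_32b(scores: dict[str, tuple[float, float, float]]) -> dict[str, float]:
    """Nanbeige4-3B-2511 score minus Qwen3-32B-2504 score, per benchmark."""
    return {name: nb - q32 for name, (_, q32, nb) in scores.items()}

deltas = margins_vs_32b(SCORES)
for name, d in deltas.items():
    print(f"{name:<16} {d:+.1f}")
```

With these numbers, Nanbeige4-3B-2511 leads on five of the seven benchmarks and trails on SuperGPQA (by 0.9 points) and Fullstack Bench (by 10.2 points).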

Summary of Insights

- Compact 3B models can outperform much larger open-source models in reasoning tasks when trained with a carefully designed data and training regimen. Nanbeige4-3B-Thinking notably surpasses Qwen3-32B on AIME 2024 and GPQA-Diamond benchmarks under averaged sampling evaluation.

- Evaluation metrics average accuracy over k sampled generations per problem (avg@k: avg@8 for AIME, avg@3 for the other benchmarks) under fixed decoding parameters (temperature 0.6, top-p 0.95), emphasizing robust performance over single-shot accuracy.

- Pretraining improvements stem from a sophisticated data curriculum that prioritizes higher-quality data progressively, rather than simply increasing token counts. FG-WSD scheduling demonstrates significant gains in reasoning benchmarks.

- Post-training emphasizes enhancing supervision quality through iterative solution refinement, chain-of-thought reconstruction, and preference-aware distillation. This multi-stage approach ensures the model learns from coherent and high-quality reasoning traces.
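The evaluation protocol in the second bullet combines two pieces: temperature plus nucleus (top-p) sampling to draw each answer, and avg@k, the mean accuracy over k sampled answers per problem. Below is a minimal pure-Python sketch of both; the helper names and the single-logit-vector interface are assumptions for illustration, not the team's evaluation harness.

```python
import math
import random
from typing import Optional

def sample_top_p(logits: list[float], temperature: float = 0.6, top_p: float = 0.95,
                 rng: Optional[random.Random] = None) -> int:
    """Temperature-scaled nucleus (top-p) sampling over one logit vector."""
    rng = rng or random.Random()
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]  # subtract max for numerical stability
    z = sum(exps)
    ranked = sorted(((e / z, i) for i, e in enumerate(exps)), reverse=True)
    nucleus, cum = [], 0.0
    for p, i in ranked:  # smallest high-probability prefix whose mass reaches top_p
        nucleus.append((p, i))
        cum += p
        if cum >= top_p:
            break
    r = rng.random() * cum  # renormalize within the nucleus
    acc = 0.0
    for p, i in nucleus:
        acc += p
        if r < acc:
            return i
    return nucleus[-1][1]

def avg_at_k(correct: list[list[bool]]) -> float:
    """avg@k: per-problem accuracy over k sampled answers, averaged over problems, in percent."""
    per_problem = [sum(samples) / len(samples) for samples in correct]
    return 100 * sum(per_problem) / len(per_problem)

print(avg_at_k([[True, False], [True, True]]))  # 75.0
```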