Contents Overview

- Introduction to LEGO: A Novel Compiler Framework

- Revolutionizing Hardware Generation Beyond Templates

- Affine Relation-Based Intermediate Representation

- Front-End Design: Functional Unit Graph and Memory Architecture

- Back-End Compilation and Optimization Techniques

- Performance Evaluation and Real-World Applications

- Significance Across Research, Industry, and Product Development

- Stepwise Workflow of the AI Chip Compiler

- Positioning LEGO Within the AI Accelerator Ecosystem

- Concluding Insights

Introducing LEGO: An Innovative Compiler for AI Accelerators

Researchers at MIT’s Han Lab have developed LEGO, a compiler framework that transforms tensor computations (such as GEMM, Conv2D, attention mechanisms, and MTTKRP) into fully synthesizable RTL code tailored for spatial accelerators. Unlike traditional methods that rely on manually crafted templates, LEGO automates hardware generation from high-level workload descriptions. Its front end uses a relation-centric affine representation to express workloads and dataflows, from which it constructs functional unit (FU) interconnects and on-chip memory layouts that maximize data reuse. LEGO also supports fusing multiple spatial dataflows within a single hardware design. The back end lowers these abstractions into primitive-level graphs and applies linear programming and graph transformations to optimize pipeline registers, broadcast wiring, and reduction trees, reducing overall area and power consumption. Evaluations on foundation models and popular CNNs and Transformers show that LEGO-generated hardware achieves, on average, 3.2× faster execution and 2.4× better energy efficiency than the Gemmini accelerator under equivalent resource constraints.

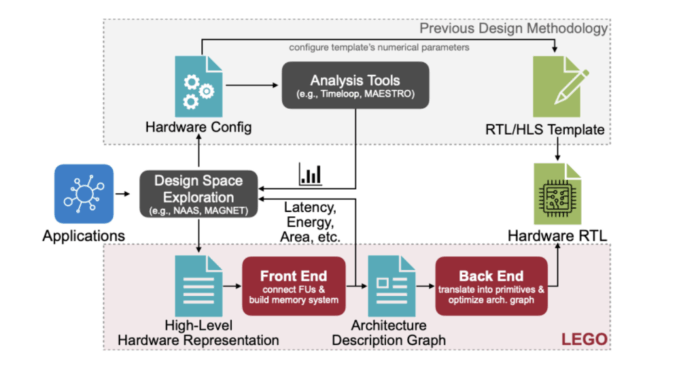

Advancing Hardware Synthesis Without Relying on Templates

Conventional hardware generation pipelines typically fall into two categories: (1) tools that analyze dataflows but do not produce hardware, and (2) RTL generators that depend on fixed, hand-optimized templates with limited configurability. These approaches restrict architectural flexibility and struggle to accommodate modern AI workloads that require dynamic switching between diverse dataflows, for example when alternating between convolution, depthwise convolution, and attention layers. LEGO removes these constraints by directly supporting arbitrary dataflows and their combinations, synthesizing both the architecture and the RTL from a high-level, template-free specification. As a result, it can adapt to complex, multi-layer AI models without manual template redesign.

Affine, Relation-Centric Intermediate Representation: The Core Input

At the heart of LEGO’s compilation process is an affine-only intermediate representation (IR) that models tensor programs as nested loops with three distinct index categories:

- Temporal indices: Representing sequential for-loops.

- Spatial indices: Denoting parallel functional units (FUs).

- Computation indices: Corresponding to the iteration domain before tiling.

Two key affine mappings govern the compiler’s operation:

- Data mapping (f_{I→D}): Translates computation indices to tensor indices.

- Dataflow mapping (f_{TS→I}): Maps temporal and spatial indices to computation indices.

This affine framework eliminates complex operations like modulo and division during analysis, reducing reuse detection and address generation to linear algebra problems. Furthermore, LEGO separates control flow from dataflow by encoding control signal propagation and delays in a vector c, enabling shared control logic across multiple FUs and significantly lowering control overhead.
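To make the two mappings concrete, here is a minimal sketch for GEMM, `C[i, j] += A[i, k] * B[k, j]`. The matrices, the 4×4 FU array, and the helper names (`affine`, `tensor_index`) are illustrative assumptions, not LEGO's actual input format or API. The point is only that both mappings are plain matrices, so composing them is a matrix product with no modulo or division.

```python
# Illustrative sketch only: names and matrices are assumptions, not LEGO's API.

def affine(matrix, vector):
    """Apply the linear map y = M @ x using plain Python lists."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]

# Computation indices for GEMM are I = (i, j, k).
# Data mapping f_{I->D}: one matrix per tensor, picking that tensor's indices.
DATA_MAP = {
    "A": [[1, 0, 0], [0, 0, 1]],  # A is indexed by (i, k)
    "B": [[0, 0, 1], [0, 1, 0]],  # B is indexed by (k, j)
    "C": [[1, 0, 0], [0, 1, 0]],  # C is indexed by (i, j)
}

# Dataflow mapping f_{TS->I} for a hypothetical P x P FU array.
# Input vector is (t_i, t_j, t_k, s_i, s_j): three temporal loops followed by
# two spatial indices. i = P*t_i + s_i, j = P*t_j + s_j, k = t_k.
P = 4
DATAFLOW_MAP = [
    [P, 0, 0, 1, 0],
    [0, P, 0, 0, 1],
    [0, 0, 1, 0, 0],
]

def tensor_index(tensor, temporal, spatial):
    """Tensor element touched by FU `spatial` at loop step `temporal`."""
    comp = affine(DATAFLOW_MAP, list(temporal) + list(spatial))
    return affine(DATA_MAP[tensor], comp)
```

For example, `tensor_index("A", (1, 0, 5), (2, 3))` first maps to computation indices `(6, 3, 5)` and then to the element `A[6, 5]`.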

Front-End Synthesis: Designing FU Interconnects and Memory Systems

LEGO’s front end focuses on maximizing data reuse and on-chip bandwidth while minimizing the complexity and power consumption of interconnects and multiplexers. The process involves three main steps:

- Interconnect synthesis: By solving linear systems derived from affine relations, LEGO identifies both direct and delay-based (FIFO) connections between FUs. It then applies minimum-spanning arborescence algorithms (Chu-Liu/Edmonds) to prune unnecessary edges, optimizing for FIFO depth cost. A breadth-first search heuristic further refines direct interconnects when multiple dataflows coexist, prioritizing reuse chains and delay-fed nodes to reduce mux count and data node complexity.

- Banked memory design: LEGO calculates the required number of memory banks per tensor dimension from the maximum index differences, optionally reducing the bank count by dividing by the greatest common divisor (GCD). It then integrates data-distribution switches to route data between banks and FUs, delegating FU-to-FU reuse to the interconnect network.

- Dataflow fusion: Multiple spatial dataflows are merged into a unified Architecture Description Graph (ADG). Strategic planning avoids naive mux-heavy combinations, achieving up to 20% energy savings compared to straightforward fusion methods.
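The reuse detection behind interconnect synthesis can be sketched as follows. This is a simplified illustration, not LEGO's algorithm: it assumes one temporal index `t` and one spatial index `s`, and it enumerates small offsets by brute force instead of solving the linear system symbolically. The function name and the bounds are hypothetical. Two FUs touch the same tensor element when the access matrix maps the offset `(dt, ds)` to zero; `dt == 0` suggests a direct connection, while `dt > 0` suggests a delay (FIFO) connection of depth `dt`.

```python
from itertools import product

def reuse_offsets(access, max_delay=2, max_dist=2):
    """Enumerate (kind, dt, ds) offsets with access @ (dt, ds) == 0.

    access: rows of an access matrix over the columns (t, s), i.e. the tensor
            index read by FU s at time t is access @ (t, s).
    A hit means FU s at time t and FU s+ds at time t+dt read the same element.
    """
    found = []
    for dt, ds in product(range(max_delay + 1), range(-max_dist, max_dist + 1)):
        if (dt, ds) == (0, 0):
            continue  # an FU trivially shares data with itself in the same cycle
        if all(row[0] * dt + row[1] * ds == 0 for row in access):
            kind = "direct" if dt == 0 else "delay"
            found.append((kind, dt, ds))
    return found

# 1-D convolution O[ow] += I[ow + kw] * W[kw] with ow = t and kw = s.
INPUT_ACCESS = [[1, 1]]   # I is indexed by t + s
OUTPUT_ACCESS = [[1, 0]]  # O is indexed by t only
WEIGHT_ACCESS = [[0, 1]]  # W is indexed by s only
```

With these matrices, the input yields delay candidates such as `("delay", 1, -1)` (the neighbouring FU needs the same value one cycle later), the output yields only direct candidates (all FUs contribute to the same element in the same cycle), and the weight yields `ds == 0` offsets, meaning it stays in place inside each FU. Pruning these candidates with a minimum-spanning arborescence is not shown here.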

Back-End Compilation: From Architecture Graphs to Optimized RTL

The ADG is transformed into a Detailed Architecture Graph (DAG) composed of hardware primitives such as FIFOs, multiplexers, adders, and address generators. LEGO applies several optimization passes using linear programming and graph algorithms:

- Delay matching: A linear program minimizes the number of pipeline registers inserted by aligning output delays across edges, balancing timing requirements with storage overhead.

- Broadcast rewiring: A two-phase optimization converts costly broadcast signals into efficient forward chains, enabling register sharing and reducing latency. A subsequent LP pass fine-tunes delay balancing.

- Reduction tree optimization: Sequential adder chains are restructured into balanced trees. A 0-1 integer linear program remaps reducer inputs across dataflows to minimize physical pin usage, replacing adders with multiplexers where possible. This reduces both logic depth and register count.

These back-end optimizations primarily target the datapath, which accounts for approximately 40% of area and 60% of power consumption in the FU array, resulting in around 35% area reduction compared to naive RTL generation.
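The delay-matching idea can be illustrated with a small baseline. The sketch below is not the linear program described above: it uses an as-soon-as-possible, longest-path schedule, which always produces a valid balancing but not necessarily the one with the fewest registers. An LP such as LEGO's would instead choose the start times to minimize the (bit-width-weighted) register count. The function name, node names, and latencies are assumptions for illustration.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

def balance_delays(latency, edges):
    """Baseline delay matching on a primitive graph.

    latency: {node: cycles the node takes to produce its output}
    edges:   list of (src, dst) pairs
    Returns (start, regs): the cycle at which each node fires, and the number
    of pipeline registers needed on each edge so all inputs arrive together.
    """
    preds = {node: set() for node in latency}
    for src, dst in edges:
        preds[dst].add(src)

    start = {}
    # static_order() yields every node after all of its predecessors.
    for node in TopologicalSorter(preds).static_order():
        start[node] = max(
            (start[p] + latency[p] for p in preds[node]), default=0
        )

    # Slack on an edge = how long the value must wait before dst fires.
    regs = {(u, v): start[v] - (start[u] + latency[u]) for u, v in edges}
    return start, regs
```

For a graph where `in` feeds a 2-cycle `mul` and also bypasses it into `add`, the bypass edge needs two registers so that both operands reach the adder in the same cycle, while the other edges need none.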

Performance Highlights and Practical Applications

Implementation details: LEGO is developed in C++ and utilizes the HiGHS solver for linear programming. It outputs SpinalHDL code, which is then converted to Verilog. The framework has been tested on a variety of tensor kernels and full AI models, including AlexNet, MobileNetV2, ResNet-50, EfficientNetV2, BERT, GPT-2, CoAtNet, DDPM, Stable Diffusion, and LLaMA-7B. A single LEGO-MNICOC accelerator instance supports all models, with a mapper selecting optimal tiling and dataflow per layer. The Gemmini accelerator serves as the baseline, matched for resources such as 256 MAC units, 256 KB on-chip buffer, and a 128-bit bus at 16 GB/s bandwidth.

Speed and energy efficiency: Across benchmarks, LEGO delivers an average 3.2× speedup and 2.4× energy efficiency improvement over Gemmini. These gains arise from an accurate performance model that guides workload mapping and from the ability to dynamically switch spatial dataflows, for example by selecting OH-OW-IC-OC ordering for depthwise convolution layers. Both the LEGO and Gemmini designs are bandwidth-limited on GPT-2 workloads.

Resource utilization: In a system-on-chip style configuration, the FU array and network-on-chip (NoC) dominate area and power consumption, while programmable processing units (PPUs) contribute only about 2-5%. This validates the focus on optimizing datapath and control reuse.

Generative AI models: On a scaled-up 1024-FU configuration, LEGO maintains over 80% utilization for models like DDPM and Stable Diffusion. LLaMA-7B remains bandwidth-bound due to its inherently low operational intensity.
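What "bandwidth-bound" means here can be shown with simple roofline arithmetic. The sketch below uses the 256-MAC, 16 GB/s configuration quoted earlier; the 1 GHz clock and the example operational intensities are assumptions chosen for illustration, not measured values from the evaluation.

```python
def ridge_point(macs, clock_ghz, bandwidth_gbs):
    """Operational intensity (ops/byte) above which compute is the limit."""
    peak_gops = 2 * macs * clock_ghz  # one MAC counts as two operations
    return peak_gops / bandwidth_gbs

def attainable_gops(macs, clock_ghz, bandwidth_gbs, ops_per_byte):
    """Roofline model: the lower of the compute roof and the bandwidth roof."""
    peak_gops = 2 * macs * clock_ghz
    return min(peak_gops, bandwidth_gbs * ops_per_byte)

# Assumed 1 GHz clock with the 256 MACs and 16 GB/s from the baseline setup.
MACS, CLOCK_GHZ, BW_GBS = 256, 1.0, 16
```

Under these assumptions the ridge point is 32 ops/byte. A layer with, say, 2 ops/byte (each fetched byte used for a single MAC) is capped at 32 GOP/s out of a 512 GOP/s peak, so no amount of extra FUs or better interconnect helps; only layers above the ridge point can keep the array busy.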

Impact Across Research, Industry, and Product Development

- For researchers: LEGO offers a mathematically rigorous framework that bridges loop-nest specifications and spatial hardware design, incorporating provable linear programming optimizations. It abstracts away low-level RTL details while exposing critical parameters such as tiling, spatial mapping, and reuse patterns for systematic exploration.

- For engineers and practitioners: Functioning as a form of hardware-as-code, LEGO enables targeting of arbitrary dataflows and their fusion within a single accelerator. The compiler automatically derives interconnects, buffers, and control logic, minimizing mux and FIFO overheads. This facilitates energy-efficient, multi-operation pipelines without manual template adjustments.

- For product managers and leaders: By lowering the complexity barrier for custom silicon design, LEGO empowers the creation of task-specific, power-efficient edge accelerators suitable for wearables and IoT devices. This approach aligns hardware capabilities with rapidly evolving AI models, reversing the traditional paradigm where models must adapt to fixed silicon.

Stepwise Breakdown: How LEGO Compiles AI Accelerators

- Deconstruction via Affine IR: Define tensor operations as loop nests, specifying affine mappings for data and dataflow, along with control flow vectors. This declaratively captures both computation and spatialization without relying on templates.

- Architectural Synthesis: Solve reuse equations to generate FU interconnects (direct and delay), prune edges using minimum spanning arborescences and heuristics, and design banked memory with distribution switches to handle concurrent accesses.

- Compilation and Optimization: Lower the architecture to a primitive DAG and apply delay-matching linear programming, broadcast rewiring, reduction tree extraction, pin reuse via integer linear programming, bit-width inference, and optional power gating. These steps collectively yield significant area and energy savings.
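As a rough illustration of the banked-memory part of the architectural synthesis step, the sketch below derives a bank count per tensor dimension from the indices that are accessed in the same cycle. It is one plausible reading of the "maximum index difference, optionally divided by the GCD" rule, not LEGO's exact procedure; the function name and the example access pattern are hypothetical.

```python
from functools import reduce
from math import gcd

def banks_per_dim(concurrent_indices, use_gcd=True):
    """Banks needed per tensor dimension for conflict-free parallel access.

    concurrent_indices: tensor-index tuples that the FUs read in one cycle.
    Without the GCD step, a dimension gets (max index difference + 1) banks.
    With it, a strided pattern (e.g. 0, 2, 4, 6) is compacted by its stride.
    """
    counts = []
    for d in range(len(concurrent_indices[0])):
        vals = [idx[d] for idx in concurrent_indices]
        low = min(vals)
        span = max(vals) - low
        if use_gcd and span:
            # GCD of all offsets from the smallest index; gcd(0, x) == x.
            stride = reduce(gcd, (v - low for v in vals))
            span //= stride
        counts.append(span + 1)
    return counts

# Four FUs reading rows 0, 2, 4, 6 and columns 0 or 1 in the same cycle.
ACCESSES = [(0, 0), (2, 1), (4, 0), (6, 1)]
```

Here `banks_per_dim(ACCESSES)` gives `[4, 2]`, whereas disabling the GCD step gives `[7, 2]`: the stride-2 row pattern would otherwise leave three of the seven row banks unused.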

LEGO’s Role in the AI Accelerator Landscape

Unlike analysis-only tools such as Timeloop or MAESTRO, and template-dependent generators like Gemmini, DNA, and MAGNET, LEGO is fully template-free and supports arbitrary dataflows and their combinations. It produces synthesizable RTL that matches or surpasses the area and power efficiency of expert-designed accelerators under comparable dataflows and technology nodes. Moreover, LEGO’s one-architecture-for-many-models approach simplifies deployment across diverse AI workloads.

Final Thoughts

LEGO redefines hardware generation for tensor programs by treating it as a compilation problem. Its affine front end enables reuse-aware interconnect and memory synthesis, while the LP-driven back end minimizes datapath complexity. The reported gains of 3.2× in performance and 2.4× in energy efficiency over Gemmini, together with an area reduction of approximately 35% relative to naive RTL generation, make LEGO a promising approach for creating application-specific AI accelerators for edge computing and beyond.