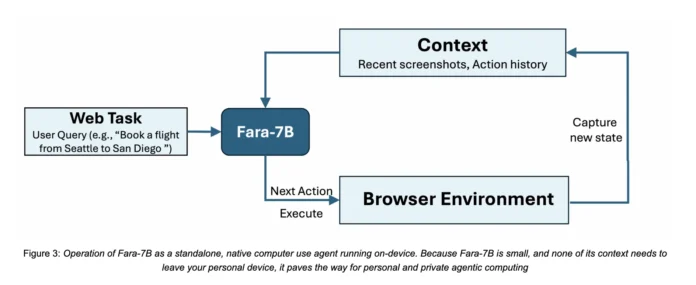

How can AI agents autonomously carry out real-world web tasks such as booking appointments, running searches, and completing forms directly on a personal device, while keeping data private by never sending it to the cloud? Microsoft Research introduces Fara-7B, a 7-billion-parameter agentic model built for computer use. This open-weight Computer Use Agent interprets screenshots, predicts mouse and keyboard actions, and is small enough to run on a user’s own machine, which reduces latency and keeps browsing data local.

Advancing Beyond Traditional Chatbots: The Rise of Computer Use Agents

Unlike conventional large language models (LLMs) that primarily generate text responses, Computer Use Agents like Fara-7B actively manipulate browser and desktop interfaces to accomplish complex tasks such as travel reservations, price comparisons, and form submissions. These agents analyze visual content on the screen, understand page layouts, and execute granular commands including clicks, scrolling, typing, web searches, and URL navigation.

Many current solutions depend on bulky multimodal models combined with intricate frameworks that parse accessibility trees and coordinate multiple auxiliary tools, resulting in increased response times and often necessitating cloud-based deployment. In contrast, Fara-7B consolidates the functionalities of multi-agent systems into a single, streamlined multimodal decoder model based on Qwen2.5-VL-7B. It processes browser screenshots alongside textual context, then sequentially produces internal reasoning followed by precise tool invocations with grounded parameters such as pixel coordinates, text inputs, or web addresses.

FaraGen: Generating Synthetic Web Interaction Data at Scale

A major challenge in developing Computer Use Agents is the scarcity of high-quality datasets capturing multi-step human web interactions. To address this, the Fara project presents FaraGen, an innovative synthetic data generation pipeline that creates and validates realistic web navigation trajectories on live websites.

The FaraGen workflow unfolds in three phases. First, the Task Proposal stage selects seed URLs from public datasets like ClueWeb22 and Tranco, categorizing them into sectors such as e-commerce, travel, entertainment, and forums. Language models then turn each URL into a plausible user task, for instance reserving concert tickets or assembling a shopping list with specific product criteria. Each task must be achievable without logins or paywalls, fully specified, practical, and automatically verifiable.

Next, the Task Solving phase employs a multi-agent system inspired by Magentic-One and Magentic-UI. An Orchestrator agent devises the overarching strategy and maintains task progress. A WebSurfer agent interprets accessibility trees and annotated screenshots to perform browser actions via Playwright, including clicking, typing, scrolling, URL visits, and web searches. Meanwhile, a UserSimulator agent provides clarifications when tasks demand additional instructions.

Finally, Trajectory Verification uses three LLM-based evaluators: an Alignment Verifier checks that the executed actions match the task goals; a Rubric Verifier breaks the task into subgoals and scores partial completion; and a Multimodal Verifier cross-checks screenshots against the final output to detect hallucinations and confirm evidence of success. Together, these verifiers agree with human annotations 83.3% of the time, with false positive and false negative rates of roughly 17 to 18%.
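The filtering step described above can be pictured with a small sketch. The source does not say how the three verdicts are aggregated, so the all-must-pass rule, the rubric threshold, and every name below are assumptions made for illustration only.

```python
from dataclasses import dataclass


@dataclass
class VerifierResult:
    """Hypothetical container for the three verifier outputs."""
    alignment_ok: bool    # Alignment Verifier: actions match the task goals
    rubric_score: float   # Rubric Verifier: fraction of subgoals met, 0.0 to 1.0
    multimodal_ok: bool   # Multimodal Verifier: screenshots support the final output


def keep_trajectory(result: VerifierResult, rubric_threshold: float = 0.8) -> bool:
    """Keep a trajectory only when all three verifiers accept it.

    Both the conjunction and the 0.8 threshold are assumed, not taken
    from the FaraGen description.
    """
    return (
        result.alignment_ok
        and result.rubric_score >= rubric_threshold
        and result.multimodal_ok
    )


accepted = keep_trajectory(VerifierResult(True, 0.9, True))
rejected = keep_trajectory(VerifierResult(True, 0.5, True))
```

A stricter or looser aggregation rule would trade dataset size against label noise, which is why the reported false positive and false negative rates matter.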

After filtering, FaraGen yields a dataset of 145,603 verified trajectories totaling over 1 million interaction steps across 70,117 distinct domains. Trajectories range from 3 to 84 steps and average 6.9 steps. The dataset contains roughly 0.5 unique domains per trajectory (70,117 domains across 145,603 trajectories), which suggests that many tasks touch sites that are rarely repeated elsewhere in the data. With models such as GPT-5 and o3 driving the pipeline, each verified trajectory costs approximately one dollar to generate.
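The dataset figures can be sanity-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the variable names are illustrative.

```python
# Figures quoted in the article.
trajectories = 145_603
unique_domains = 70_117
avg_steps = 6.9

# Ratio of distinct domains to trajectories (the "about 0.5" figure).
domains_per_trajectory = unique_domains / trajectories

# Approximate step count implied by the average trajectory length.
approx_total_steps = trajectories * avg_steps

print(f"domains per trajectory: {domains_per_trajectory:.2f}")
print(f"approximate total steps: {approx_total_steps:,.0f}")
```

The ratio comes out just under 0.5 and the implied step count just over 1 million, consistent with the totals reported for the dataset.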

Innovative Model Design and Training

Fara-7B is built as a multimodal decoder-only architecture, leveraging Qwen2.5-VL-7B as its foundation. It ingests a user’s objective, the latest browser screenshots, and a comprehensive history of prior thoughts and actions within a vast 128,000-token context window. At each interaction step, the model first articulates a chain of thought outlining the current status and intended plan, then issues a tool command specifying the next action and its parameters.

The model’s action repertoire aligns with the Magentic-UI computer_use interface, encompassing commands such as key presses, typing, mouse movements, left clicks, scrolling, URL visits, web searches, history navigation, pausing to memorize facts, waiting, and task termination. Crucially, Fara-7B predicts exact pixel coordinates on screenshots for interaction points, enabling it to function independently of accessibility trees during inference.
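To make the "thought, then tool command" pattern concrete, here is a minimal parsing sketch. The output layout (free-text reasoning followed by a JSON payload inside `<tool_call>` tags) and the field names `action` and `coordinate` are assumptions for illustration; the article does not specify the exact serialization Fara-7B emits.

```python
import json
import re
from dataclasses import dataclass, field


@dataclass
class Step:
    thought: str                      # reasoning text emitted before the tool call
    action: str                       # e.g. "left_click", "type", "scroll"
    arguments: dict = field(default_factory=dict)


# Assumed wrapper around the JSON payload; hypothetical, not confirmed by the source.
TOOL_CALL_RE = re.compile(r"<tool_call>(.*?)</tool_call>", re.DOTALL)


def parse_step(model_output: str) -> Step:
    """Split one model response into its thought and its grounded action."""
    match = TOOL_CALL_RE.search(model_output)
    if match is None:
        raise ValueError("no tool call found in model output")
    payload = json.loads(match.group(1))
    action = payload.pop("action")    # remaining keys are the action's parameters
    thought = model_output[: match.start()].strip()
    return Step(thought=thought, action=action, arguments=payload)


example = (
    "The search box is at the top of the page; I will click it first.\n"
    '<tool_call>{"action": "left_click", "coordinate": [412, 230]}</tool_call>'
)
step = parse_step(example)
print(step.action, step.arguments["coordinate"])  # prints: left_click [412, 230]
```

Because the coordinates refer to pixels in the screenshot, an executor could pass them directly to a mouse-click call without consulting an accessibility tree.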

Training involves supervised fine-tuning on roughly 1.8 million samples drawn from diverse sources. These include FaraGen-generated trajectories segmented into observe-think-act sequences, grounding and UI localization tasks, screenshot-based visual question answering and captioning, as well as datasets focused on safety and refusal behaviors.
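One plausible way to segment a trajectory into observe-think-act training samples is sketched below. The record layout and all field names are hypothetical; the only idea taken from the text is that each step pairs the task, the current screenshot, and the prior thoughts and actions with the next thought and action as the target.

```python
from typing import TypedDict


class TrajectoryStep(TypedDict):
    screenshot: str   # path or ID of the screenshot observed at this step
    thought: str      # reasoning recorded for this step
    action: str       # serialized tool call taken at this step


def to_training_samples(task: str, steps: list[TrajectoryStep]) -> list[dict]:
    """Emit one supervised sample per step (assumed layout)."""
    samples: list[dict] = []
    history: list[dict] = []
    for step in steps:
        samples.append({
            "task": task,
            "history": list(history),          # copy of prior thoughts and actions
            "screenshot": step["screenshot"],  # observation for this step
            "target": {"thought": step["thought"], "action": step["action"]},
        })
        history.append({"thought": step["thought"], "action": step["action"]})
    return samples


demo = to_training_samples(
    "Find opening hours",
    [
        {"screenshot": "s0.png", "thought": "Open the site.", "action": "visit_url"},
        {"screenshot": "s1.png", "thought": "Scroll to the footer.", "action": "scroll"},
    ],
)
```

Under this layout a trajectory of N steps yields N samples, each with a progressively longer history.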

Performance Benchmarks and Cost Efficiency

Microsoft rigorously tested Fara-7B across four dynamic web benchmarks: WebVoyager, Online-Mind2Web, DeepShop, and the newly introduced WebTailBench. The latter emphasizes underrepresented domains such as restaurant bookings, job applications, real estate searches, price comparisons, and multi-site composite tasks.

Fara-7B achieved success rates of 73.5% on WebVoyager, 34.1% on Online-Mind2Web, 26.2% on DeepShop, and 38.4% on WebTailBench. These results exceed those of UI-TARS-1.5-7B, a baseline of the same 7B size, which scored 66.4%, 31.3%, 11.6%, and 19.5% respectively, and they are competitive with larger systems such as OpenAI’s computer-use-preview and SoM Agent variants built on GPT-4o.
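The size of the gap over the baseline is easier to see as percentage-point differences. The short calculation below uses only the scores quoted above; the dictionary names are illustrative.

```python
# Success rates (%) quoted in the article.
fara_7b = {
    "WebVoyager": 73.5,
    "Online-Mind2Web": 34.1,
    "DeepShop": 26.2,
    "WebTailBench": 38.4,
}
ui_tars_7b = {
    "WebVoyager": 66.4,
    "Online-Mind2Web": 31.3,
    "DeepShop": 11.6,
    "WebTailBench": 19.5,
}

# Percentage-point gain of Fara-7B over UI-TARS-1.5-7B on each benchmark.
gains = {name: round(fara_7b[name] - ui_tars_7b[name], 1) for name in fara_7b}

for name, gain in gains.items():
    print(f"{name}: +{gain} points")
```

The differences are 7.1, 2.8, 14.6, and 18.9 points respectively, so the largest margins appear on DeepShop and WebTailBench.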

On WebVoyager, Fara-7B processes an average of 124,000 input tokens and generates about 1,100 output tokens per task, executing roughly 16.5 actions. Based on current market token pricing, this translates to an estimated cost of $0.025 per task, far below the roughly $0.30 per task of SoM agents powered by proprietary models such as GPT-5 and o3. Fara-7B uses a similar number of input tokens to these larger systems but about 90% fewer output tokens.
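The per-task cost follows from a simple token-price calculation. The article does not state the prices used, so the $0.20 per million tokens below (applied to both input and output) is an assumed figure chosen for illustration; the token counts and the $0.30 comparison point are the ones quoted above.

```python
def cost_per_task(
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Estimated cost in USD for one task at the given token prices."""
    total = (
        input_tokens * usd_per_million_input
        + output_tokens * usd_per_million_output
    )
    return total / 1_000_000


# ASSUMPTION: hypothetical price per million tokens, not stated in the article.
ASSUMED_PRICE = 0.20

fara_cost = cost_per_task(124_000, 1_100, ASSUMED_PRICE, ASSUMED_PRICE)
som_cost = 0.30  # approximate per-task cost quoted for SoM agents

print(f"Fara-7B: ${fara_cost:.3f} per task")
print(f"SoM agents cost about {som_cost / fara_cost:.0f}x more")
```

Under that assumed price the estimate lands near the quoted $0.025, and input tokens account for almost all of it, since they outnumber output tokens by more than 100 to 1.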

Summary of Key Insights

- Fara-7B is a 7-billion-parameter, open-weight Computer Use Agent built on Qwen2.5-VL-7B that operates directly from screenshots and textual context, producing grounded actions like clicking, typing, and navigation without relying on accessibility trees during inference.

- The model is trained on 145,603 verified browser interaction trajectories comprising over 1 million steps, generated by the FaraGen pipeline, which combines task proposal, multi-agent task solving, and LLM-based verification across more than 70,000 unique domains.

- Fara-7B achieves substantial performance improvements over the 7B UI-TARS-1.5 baseline across four live web benchmarks, including a 73.5% success rate on WebVoyager and notable gains on Online-Mind2Web, DeepShop, and WebTailBench.

- Its efficient token usage results in an estimated cost of $0.025 per task on WebVoyager, compared with roughly $0.30 for SoM agents powered by GPT-5-class models, and it generates about one tenth as many output tokens.

Concluding Remarks

Fara-7B is a significant step toward practical, privacy-conscious Computer Use Agents that run locally at reduced inference cost. By combining the Qwen2.5-VL-7B architecture, the FaraGen synthetic data pipeline, and the WebTailBench evaluation suite, the work lays out a transparent and scalable path from multi-agent data synthesis to a compact, capable model. It outperforms a same-size baseline and is competitive with larger systems on the reported benchmarks, and its training data includes examples targeting safety and refusal behaviors.