Rethinking Extended Reasoning in AI

Recent large language models (LLMs) have improved markedly at mathematical problem-solving by lengthening their Chain-of-Thought (CoT) reasoning, in effect encouraging the model to “think longer” through more elaborate step-by-step logic. The approach has intrinsic limits, however. When subtle mistakes arise within the reasoning chain, models tend to compound them rather than detect and correct them. Internal self-correction often fails, particularly when the underlying reasoning strategy is flawed from the outset.

Microsoft’s latest research introduces rStar2-Agent, which shifts the focus from prolonging the thought process to improving reasoning quality. It does so by having the model actively use programming tools, such as coding environments, to validate, investigate, and refine its problem-solving steps.

Introducing the Agentic Reinforcement Learning Framework

rStar2-Agent takes an agentic reinforcement learning (RL) approach: a 14-billion-parameter model interacts with a Python execution environment while it reasons. Instead of relying solely on introspective evaluation, the model generates executable code, runs it, interprets the output, and iteratively adjusts its reasoning based on concrete feedback.

This interactive style of problem-solving mirrors the workflow of human mathematicians, who often use computational tools to test hypotheses and explore alternative solution strategies. For example, when tackling a complex algebraic problem, the model might draft initial reasoning, write Python code to verify its calculations, analyze the results, and refine its approach accordingly.
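The generate, run, interpret, adjust cycle can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: `propose_code` stands in for the model, `toy_policy` is a hard-coded substitute for it, and `run_python` executes code in-process with no sandboxing, which a real system would not do.

```python
import contextlib
import io


def run_python(code: str) -> str:
    """Execute a snippet and return its stdout, or the error message."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, {})  # illustration only: no sandboxing
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return buffer.getvalue().strip()


def solve_with_tool(propose_code, max_turns: int = 3):
    """Alternate between proposing code and reading its output.

    `propose_code(history)` is a hypothetical stand-in for the model: it
    returns the next snippet to run, or None once it is ready to answer.
    """
    history = []
    for _ in range(max_turns):
        code = propose_code(history)
        if code is None:
            break
        history.append((code, run_python(code)))
    return history


def toy_policy(history):
    """Hard-coded stand-in: the first attempt has a bug, the second fixes it."""
    if not history:
        return "print(sum(range(1, 11)) / zero)"  # undefined name on purpose
    if len(history) == 1:
        return "print(sum(range(1, 11)))"
    return None


trace = solve_with_tool(toy_policy)
for code, feedback in trace:
    print(f"{code!r} -> {feedback}")
```

The first turn returns an error message rather than a result, and the second turn's code reflects the correction. The point of the sketch is that the feedback comes from the environment, not from the model re-reading its own text.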

Overcoming Technical Barriers: Scalable Infrastructure Design

Implementing agentic RL at scale introduces significant engineering challenges. Training involves processing tens of thousands of simultaneous code execution requests per batch, which can create severe bottlenecks and reduce GPU efficiency. To address this, the research team developed two critical infrastructure innovations.

First, they engineered a distributed code execution platform capable of managing up to 45,000 concurrent tool invocations with sub-second response times. This system decouples code execution from the main training loop and balances workloads across multiple CPU workers to maintain high throughput.
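As a rough single-machine analogy for decoupling tool execution from the training loop, the sketch below fans a batch of snippets out to a worker pool and collects the results in order. It is not the team's platform: the function names are hypothetical, threads stand in for distributed CPU workers, and the snippets run unsandboxed.

```python
from concurrent.futures import ThreadPoolExecutor


def execute_snippet(code: str) -> dict:
    """Run one snippet in a fresh namespace and report `result` or the error."""
    namespace = {}
    try:
        exec(code, namespace)  # illustration only: a real service would sandbox this
        return {"ok": True, "value": namespace.get("result")}
    except Exception as exc:
        return {"ok": False, "value": f"{type(exc).__name__}: {exc}"}


def execute_batch(snippets, max_workers: int = 8):
    """Fan a batch of tool calls out to a worker pool, preserving input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(execute_snippet, snippets))


batch = ["result = 2 ** 10", "result = 1 / 0", "result = sorted([3, 1, 2])"]
outputs = execute_batch(batch)
for snippet, output in zip(batch, outputs):
    print(snippet, "->", output)
```

A failing snippet yields an error record instead of raising, so one bad tool call does not stall the rest of the batch.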

Second, the team introduced a dynamic rollout scheduler that allocates rollout requests based on the KV cache capacity currently available on each GPU rather than through fixed assignments. This adaptive scheduling prevents the GPU idle time that arises when reasoning trajectories differ widely in their computational demands.
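One way to picture capacity-aware scheduling is a greedy loop that always sends the next rollout to the GPU with the most free cache and defers rollouts that do not fit anywhere. This is a simplified sketch, not the scheduler from the research: the cost estimates, the capacity units, and the function name are all assumptions.

```python
import heapq


def schedule_rollouts(rollout_costs, free_cache):
    """Greedily assign each rollout to the GPU with the most free cache.

    rollout_costs: estimated cache units each rollout needs (hypothetical).
    free_cache:    free cache units per GPU at scheduling time (hypothetical).
    Returns (assignment, deferred): a dict mapping GPU index to rollout
    indices, plus the rollouts that fit on no GPU right now.
    """
    # heapq is a min-heap, so store negated capacity to pop the largest first.
    heap = [(-free, gpu) for gpu, free in enumerate(free_cache)]
    heapq.heapify(heap)
    assignment = {gpu: [] for gpu in range(len(free_cache))}
    deferred = []
    for idx, cost in enumerate(rollout_costs):
        neg_free, gpu = heapq.heappop(heap)
        free = -neg_free
        if cost <= free:
            assignment[gpu].append(idx)
            free -= cost
        else:
            # The roomiest GPU cannot hold it, so no GPU can: wait for space.
            deferred.append(idx)
        heapq.heappush(heap, (-free, gpu))
    return assignment, deferred


assignment, deferred = schedule_rollouts([4, 4, 2, 6, 3], free_cache=[8, 5])
print(assignment, deferred)
```

A fixed split would hand each GPU the same number of rollouts regardless of how much memory each one needs; here the assignment follows the remaining capacity instead.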

Thanks to these advancements, the entire training regimen was completed within a week using 64 AMD MI300X GPUs, illustrating that cutting-edge reasoning performance can be achieved without exorbitant computational resources when infrastructure is optimized.

GRPO-RoC: Enhancing Learning from Quality Reasoning Examples

The core algorithmic contribution is Group Relative Policy Optimization with Resample-on-Correct (GRPO-RoC). Conventional reinforcement learning methods often reward a model for a correct final answer even when the reasoning behind it contains multiple errors or inefficient tool usage, which can degrade the quality of what the model learns.

GRPO-RoC tackles this by employing an asymmetric sampling technique during training that:

- Oversamples initial reasoning attempts to generate a broad set of candidate solution paths

- Maintains diversity by preserving a variety of failed attempts, enabling the model to learn from different types of mistakes

- Filters positive samples to prioritize reasoning traces with minimal coding errors and cleaner formatting

This strategy ensures the model focuses on learning from high-quality, error-minimized reasoning while still gaining insights from diverse failure modes, resulting in more efficient tool utilization and streamlined reasoning sequences.
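The asymmetric sampling described above can be sketched as a downsampling step over an oversampled group of rollouts. The details are assumptions made for illustration: the `Rollout` fields, the penalty (tool errors plus format issues), the deterministic "keep the cleanest" rule for positives, and the choice to preserve the pool's positive/negative ratio are not taken from the source.

```python
import random
from dataclasses import dataclass


@dataclass
class Rollout:
    correct: bool       # did the final answer match the reference?
    tool_errors: int    # failed code executions in the trace
    format_issues: int  # formatting violations in the trace


def resample_on_correct(rollouts, group_size, seed=0):
    """Downsample a non-empty, oversampled pool to `group_size` rollouts."""
    rng = random.Random(seed)
    positives = [r for r in rollouts if r.correct]
    negatives = [r for r in rollouts if not r.correct]

    # Assumption: keep the positive/negative ratio of the oversampled pool.
    n_pos = min(round(group_size * len(positives) / len(rollouts)), len(positives))
    n_neg = min(group_size - n_pos, len(negatives))

    # Positives: keep the traces with the fewest tool and format problems.
    kept_pos = sorted(positives, key=lambda r: r.tool_errors + r.format_issues)[:n_pos]
    # Negatives: sample uniformly so varied failure modes survive.
    kept_neg = rng.sample(negatives, n_neg)
    return kept_pos + kept_neg


pool = [
    Rollout(True, 0, 0), Rollout(True, 3, 1), Rollout(True, 1, 0), Rollout(True, 0, 2),
    Rollout(False, 2, 0), Rollout(False, 0, 0), Rollout(False, 5, 1), Rollout(False, 1, 1),
]
group = resample_on_correct(pool, group_size=4)
for rollout in group:
    print(rollout)
```

Correct answers reached through noisy traces are dropped first, while failures are kept without regard to how messy they are, which is what makes the sampling asymmetric.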

Progressive Training: From Concise to Complex Reasoning

The training pipeline is structured into three progressive phases designed to build reasoning capabilities efficiently:

Phase 1: The model undergoes supervised fine-tuning without complex reasoning tasks, concentrating on instruction adherence and tool usage formatting. Responses are limited to 8,000 tokens, encouraging the development of succinct reasoning strategies. This phase alone boosts performance from near zero to over 70% on challenging math benchmarks.

Phase 2: The token limit is extended to 12,000, allowing the model to engage in more elaborate reasoning while retaining the efficiency gains from the initial phase.

Phase 3: Training focuses on the most difficult problems by filtering out those already mastered, ensuring continuous learning on challenging examples.

This staged approach balances the need for concise reasoning with the ability to handle complex problems, optimizing learning speed and computational cost.
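The three phases can be written down as a small configuration plus a filter for the final phase. This is only a sketch of the idea: the `Stage` fields, the per-problem pass rates, and the `mastered_at` threshold are hypothetical, and the token limit for Phase 3 is left unset because the description above does not give one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Stage:
    name: str
    max_response_tokens: Optional[int]  # None where no limit is stated
    hard_problems_only: bool


STAGES = [
    Stage("phase 1", 8_000, False),
    Stage("phase 2", 12_000, False),
    Stage("phase 3", None, True),
]


def select_problems(pass_rates, stage, mastered_at=1.0):
    """Return the sorted problem ids to train on in `stage`.

    pass_rates maps a problem id to the fraction of recent rollouts that
    solved it. `mastered_at` is a hypothetical cutoff: problems at or above
    it count as mastered and are dropped in the hard-problems-only stage.
    """
    if not stage.hard_problems_only:
        return sorted(pass_rates)
    return sorted(pid for pid, rate in pass_rates.items() if rate < mastered_at)


pass_rates = {"p1": 1.0, "p2": 0.25, "p3": 0.0, "p4": 1.0}
for stage in STAGES:
    print(stage.name, stage.max_response_tokens, select_problems(pass_rates, stage))
```

In this toy run the first two phases train on all four problems, while the last keeps only the two that the model still fails at least some of the time.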

Remarkable Performance and Efficiency Gains

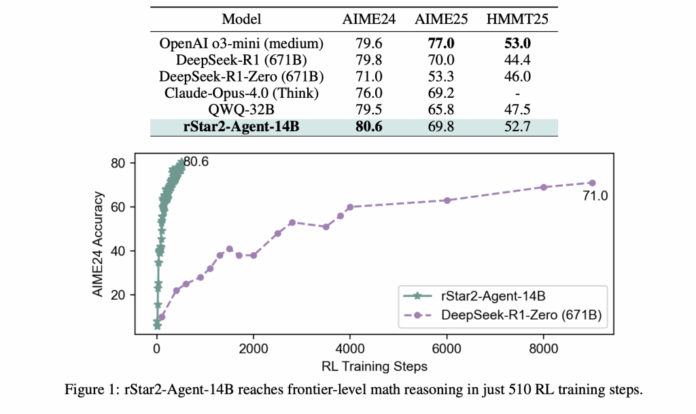

rStar2-Agent-14B reaches 80.6% accuracy on AIME 2024 and 69.8% on AIME 2025, outperforming much larger models such as the 671-billion-parameter DeepSeek-R1. Notably, it does so with significantly shorter reasoning sequences, averaging around 10,000 tokens compared with over 17,000 tokens for comparable models.

Beyond mathematics, the model exhibits strong transfer learning capabilities. Despite being trained exclusively on math problems, it surpasses specialized models on scientific reasoning benchmarks and maintains competitive results on general alignment tasks, highlighting the versatility of the agentic approach.

Decoding the Model’s Reasoning Dynamics

Detailed analysis reveals two distinct types of high-entropy tokens within the model’s reasoning traces. The first type corresponds to traditional “forking tokens,” which trigger internal exploration and self-reflection. The second type, the newly identified “reflection tokens,” arises specifically in response to feedback from code execution tools.

These reflection tokens enable environment-driven reasoning, where the model critically evaluates execution outcomes, diagnoses errors, and adapts its strategy accordingly. This mechanism fosters a level of problem-solving sophistication that surpasses conventional Chain-of-Thought methods.
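For readers unfamiliar with the term, a high-entropy token is a position where the model's next-token distribution is spread over several options rather than concentrated on one. The sketch below computes Shannon entropy per position and picks the most uncertain ones. The toy distributions and the `top_k` cutoff are made up for illustration and do not reproduce the analysis itself.

```python
import math


def token_entropy(probs):
    """Shannon entropy, in nats, of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def high_entropy_positions(distributions, top_k=2):
    """Return the indices of the `top_k` most uncertain positions, and all entropies."""
    entropies = [token_entropy(d) for d in distributions]
    ranked = sorted(range(len(entropies)), key=lambda i: entropies[i], reverse=True)
    return sorted(ranked[:top_k]), entropies


# Toy next-token distributions over a four-word vocabulary, one per position.
distributions = [
    [1.0, 0.0, 0.0, 0.0],      # fully confident
    [0.25, 0.25, 0.25, 0.25],  # maximally uncertain
    [0.97, 0.01, 0.01, 0.01],
    [0.5, 0.5, 0.0, 0.0],      # two equally likely continuations
    [0.9, 0.1, 0.0, 0.0],
]
positions, entropies = high_entropy_positions(distributions)
print(positions, [round(h, 3) for h in entropies])
```

Positions flagged this way are the ones where the trace could plausibly have gone in a different direction, which is where forking and reflection behavior would be expected to show up.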

Conclusion: A New Paradigm for Efficient AI Reasoning

rStar2-Agent exemplifies how mid-sized models can reach state-of-the-art reasoning performance through intelligent training methodologies rather than sheer scale. This approach advocates for a sustainable trajectory in AI development, emphasizing efficiency, integration with external tools, and refined training techniques over brute computational force.

The success of this agentic framework paves the way for future AI systems capable of seamlessly combining multiple tools and environments, transitioning from static text generation to dynamic, interactive problem-solving agents.