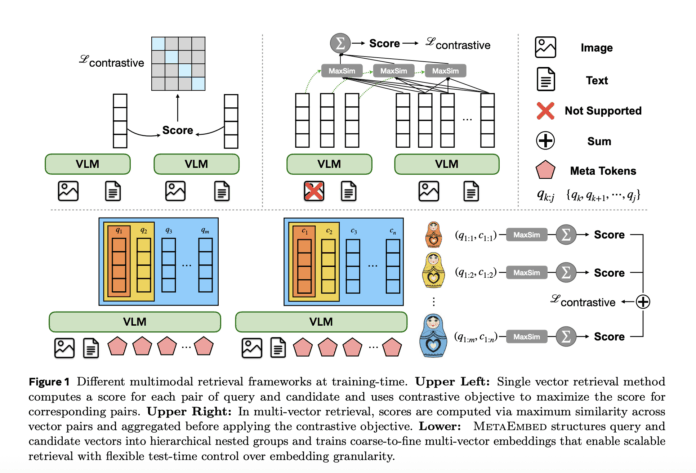

Imagine being able to tune multimodal retrieval at deployment time, trading off precision, response time, and index footprint simply by choosing how many Meta Tokens to use (from 1 to 16 for queries and from 1 to 64 for candidates). Meta Superintelligence Labs has introduced MetaEmbed, a late-interaction framework for multimodal retrieval that exposes a single control at inference: the number of compact, learnable “Meta Tokens” used on the query and candidate sides. Unlike methods that either compress each item into a single vector (CLIP-style) or expand it into hundreds of patch- or token-level vectors (ColBERT-style), MetaEmbed appends a fixed set of learnable Meta Tokens during training and uses their final hidden states as multi-vector embeddings at inference. This enables test-time scaling: operators can trade retrieval accuracy against latency and index size without retraining.

Understanding MetaEmbed’s Mechanism

MetaEmbed is trained with an approach called Matryoshka Multi-Vector Retrieval (MMR). Meta Tokens are organized into nested prefix groups so that each prefix remains discriminative on its own. At inference, the retrieval budget is a tuple (r_q, r_c) that specifies how many Meta Tokens to use on the query and candidate sides respectively, for example (1,1), (2,4), (4,8), (8,16), or (16,64). Scoring uses a ColBERT-style MaxSim late interaction over L2-normalized Meta Token embeddings, which preserves fine-grained cross-modal matching while keeping the vector set small.
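To make the scoring step concrete, here is a minimal NumPy sketch of MaxSim over prefix-truncated, L2-normalized vectors. The function names, the random stand-in embeddings, and the 32-dimensional width are illustrative assumptions, not MetaEmbed's released code.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale each vector (last axis) to unit L2 norm."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def maxsim_score(query_vecs, cand_vecs, r_q, r_c):
    """MaxSim score using only the first r_q query and first r_c candidate vectors."""
    q = l2_normalize(query_vecs[:r_q])   # (r_q, d)
    c = l2_normalize(cand_vecs[:r_c])    # (r_c, d)
    sim = q @ c.T                        # (r_q, r_c) cosine similarities
    # For each query vector keep its best-matching candidate vector, then sum.
    return float(sim.max(axis=1).sum())

# Random stand-ins for Meta Token embeddings (hypothetical width d = 32).
rng = np.random.default_rng(0)
query = rng.standard_normal((16, 32))
cand = rng.standard_normal((64, 32))
for r_q, r_c in [(1, 1), (2, 4), (4, 8), (8, 16), (16, 64)]:
    print((r_q, r_c), round(maxsim_score(query, cand, r_q, r_c), 4))
```

Because the budget is applied by slicing a prefix, one set of stored embeddings can serve every budget up to the maximum.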

Performance Benchmarks and Evaluation

MetaEmbed is evaluated on the Massive Multimodal Embedding Benchmark (MMEB) and ViDoRe v2 (Visual Document Retrieval), which test retrieval robustness across diverse modalities and realistic document queries. With Qwen2.5-VL backbones, MetaEmbed reaches the following overall MMEB scores at the largest budget, (16,64): 69.1 for the 3B model, 76.6 for 7B, and 78.7 for 32B. Performance improves steadily as the retrieval budget increases, and larger models benefit more. On ViDoRe v2, MetaEmbed surpasses both single-vector and naive fixed-length multi-vector baselines in average nDCG@5, with the margin widening at higher budgets.

Impact of Matryoshka Multi-Vector Retrieval

Ablation studies highlight the critical role of MMR in enabling test-time scaling without compromising full-budget accuracy. Disabling MMR (NoMMR) causes a sharp decline in performance at lower budgets, whereas enabling MMR allows MetaEmbed to match or outperform single-vector baselines consistently across different budget levels and model sizes.
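The article does not spell out the MMR objective. One plausible reading, sketched below purely as an assumption, is to sum an in-batch contrastive (InfoNCE) loss over every nested budget so that each prefix is trained to rank well on its own. The temperature value, function names, and the forward-only NumPy formulation are all hypothetical.

```python
import numpy as np

BUDGETS = [(1, 1), (2, 4), (4, 8), (8, 16), (16, 64)]  # budgets quoted in the article

def normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def maxsim_matrix(Q, C, r_q, r_c):
    """Pairwise MaxSim scores at one budget. Q: (B, Rq, d), C: (B, Rc, d) -> (B, B)."""
    q = normalize(Q[:, :r_q])                 # (B, r_q, d)
    c = normalize(C[:, :r_c])                 # (B, r_c, d)
    sim = np.einsum("iqd,jcd->ijqc", q, c)    # (B, B, r_q, r_c)
    return sim.max(axis=3).sum(axis=2)

def info_nce(scores, temperature):
    """Cross-entropy where candidate i is the positive for query i."""
    logits = scores / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))

def nested_contrastive_loss(Q, C, budgets=BUDGETS, temperature=0.05):
    """Assumed MMR-style objective: one contrastive term per nested prefix budget."""
    return sum(info_nce(maxsim_matrix(Q, C, r_q, r_c), temperature)
               for r_q, r_c in budgets)

rng = np.random.default_rng(0)
Q = rng.standard_normal((4, 16, 8))   # 4 queries, 16 Meta Tokens each
C = rng.standard_normal((4, 64, 8))   # 4 candidates, 64 Meta Tokens each
print(nested_contrastive_loss(Q, C))
```

Under this reading, the NoMMR ablation would correspond to keeping only the full-budget term, which leaves the shorter prefixes without any direct training signal.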

Resource Efficiency and Memory Considerations

With 100,000 candidates per query and a scoring batch size of 1,000 on an NVIDIA A100 GPU, compute and memory costs vary widely with the retrieval budget. As the budget grows from (1,1) to (16,64), scoring FLOPs increase from 0.71 GFLOPs to 733.89 GFLOPs, scoring latency rises modestly from 1.67 ms to 6.25 ms, and bfloat16 index memory expands from 0.68 GiB to 42.72 GiB. The dominant factor in end-to-end latency, however, is query encoding: processing an image query with 1,024 tokens requires approximately 42.72 TFLOPs and takes around 788 ms, far more than scoring for typical candidate sets. Practical deployments should therefore prioritize encoder throughput and manage index size, for example by offloading the index to CPU or choosing a smaller budget.
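These figures can be roughly reproduced with a back-of-the-envelope cost model: index memory is candidates × r_c × dimension × 2 bytes for bfloat16, and scoring needs about 2 × candidates × r_q × r_c × dimension FLOPs. The embedding width of 3,584 used below is an assumption (the article does not state it); with that value the model lands close to the quoted numbers, which suggests but does not confirm this scaling.

```python
GIB = 2**30

def index_memory_gib(num_candidates, r_c, dim, bytes_per_value=2):
    """Index size when each candidate stores r_c vectors of `dim` values (bfloat16 = 2 bytes)."""
    return num_candidates * r_c * dim * bytes_per_value / GIB

def scoring_gflops(num_candidates, r_q, r_c, dim):
    """FLOPs for the r_q x r_c similarity matrix of every candidate (multiply + add per term)."""
    return 2 * num_candidates * r_q * r_c * dim / 1e9

N = 100_000   # candidates per query, as in the article
DIM = 3584    # ASSUMPTION: embedding width, not stated in the article

for r_q, r_c in [(1, 1), (2, 4), (4, 8), (8, 16), (16, 64)]:
    mem = index_memory_gib(N, r_c, DIM)
    flops = scoring_gflops(N, r_q, r_c, DIM)
    print(f"({r_q:>2},{r_c:>2})  index = {mem:6.2f} GiB   scoring = {flops:7.2f} GFLOPs")
```

Note that index memory depends only on the candidate-side budget r_c, while scoring cost grows with the product r_q × r_c.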

Comparative Analysis with Existing Methods

- Single-vector approaches (e.g., CLIP-style): These methods offer minimal index size and rapid dot-product scoring but lack sensitivity to fine-grained compositional details. MetaEmbed enhances precision by employing a small, context-aware multi-vector set while maintaining independent encoding of inputs.

- Naive multi-vector methods (e.g., ColBERT-style) applied to multimodal data: While rich in token-level detail, these approaches suffer from excessive index sizes and computational demands, especially when both queries and candidates include images. MetaEmbed’s use of a limited number of Meta Tokens drastically reduces vector counts and enables efficient, budgeted MaxSim scoring.

Key Insights and Practical Recommendations

- Unified model with flexible budgets: Train a single MetaEmbed model and select the retrieval budget (r_q, r_c) at serving time to balance recall and computational cost. Lower budgets are ideal for initial retrieval stages, while higher budgets can be reserved for precise re-ranking.

- Encoder throughput is critical: Since query encoding dominates latency, focus on optimizing image tokenization and visual-language model (VLM) efficiency. Scoring remains lightweight for typical candidate volumes.

- Index memory grows roughly linearly with the candidate-side budget: Plan index storage and sharding (e.g., GPU vs. CPU placement) around the chosen r_c, since the index stores r_c vectors per candidate.
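The first recommendation, a cheap budget for recall followed by a larger budget for re-ranking, can be sketched as a two-stage search over one set of stored embeddings. This is a minimal illustration under stated assumptions: `two_stage_search`, the shortlist size, and the random stand-in data are hypothetical and not part of MetaEmbed.

```python
import numpy as np

def normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def maxsim_scores(query, cands, r_q, r_c):
    """MaxSim of one query against all candidates. query: (Rq, d), cands: (N, Rc, d) -> (N,)."""
    q = normalize(query[:r_q])                # (r_q, d)
    c = normalize(cands[:, :r_c])             # (N, r_c, d)
    sim = np.einsum("qd,ncd->nqc", q, c)      # (N, r_q, r_c)
    return sim.max(axis=2).sum(axis=1)

def two_stage_search(query, cands, coarse=(1, 1), fine=(16, 64), shortlist=100, top_k=10):
    """Shortlist with a small budget, then re-rank the shortlist with a large one."""
    coarse_scores = maxsim_scores(query, cands, *coarse)
    shortlist_ids = np.argsort(-coarse_scores)[:shortlist]
    fine_scores = maxsim_scores(query, cands[shortlist_ids], *fine)
    order = np.argsort(-fine_scores)[:top_k]
    return shortlist_ids[order], fine_scores[order]

# Stand-in data: the query copies the first 16 vectors of candidate 7.
rng = np.random.default_rng(0)
cands = rng.standard_normal((500, 64, 32))
query = cands[7, :16].copy()
ids, scores = two_stage_search(query, cands)
print(ids[0], scores[0])
```

Only the shortlist is scored at the full budget, so the expensive (16,64) comparison touches 100 candidates here instead of all 500.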

Summary

MetaEmbed introduces a control for multimodal retrieval that can be adjusted at serving time. By training nested, coarse-to-fine Meta Tokens with Matryoshka Multi-Vector Retrieval, it produces compact multi-vector embeddings whose granularity can be tuned after training. The approach outperforms single-vector and naive multi-vector baselines on MMEB and ViDoRe v2, and the reported costs give a clear picture of deployment trade-offs: encoder-bound latency, budget-dependent index size, and millisecond-scale scoring on standard accelerators. For teams building retrieval pipelines that combine fast recall with accurate re-ranking across image-text and visual-document tasks, MetaEmbed offers a straightforward, architecture-compatible option.