How can a single speech recognition system comprehend thousands of languages, including many without prior automatic speech recognition (ASR) models? Meta AI introduces Omnilingual ASR, an open-source multilingual speech recognition platform that supports over 1,600 languages. Remarkably, it can also extend to previously unseen languages using just a handful of speech-text examples, all without the need for retraining.

Comprehensive Data Collection and Language Diversity

The foundation of Omnilingual ASR’s supervised training lies in the extensive AllASR dataset, which aggregates 120,710 hours of annotated speech paired with transcripts spanning 1,690 languages. This vast corpus integrates multiple data sources, including publicly available datasets, proprietary and licensed collections, partner contributions, and a specially commissioned dataset known as the Omnilingual ASR Corpus.

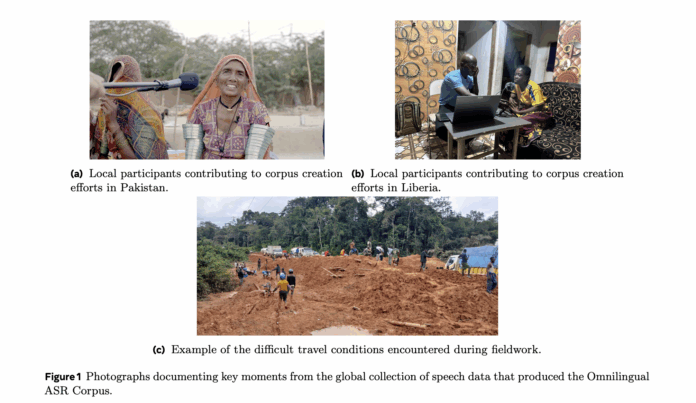

The Omnilingual ASR Corpus itself adds 3,350 hours of speech data covering 348 languages. This data was gathered through collaborative fieldwork with local communities and native speakers in regions such as Africa and South Asia. Instead of reading scripted text, speakers produce spontaneous monologues in their native languages, which yields acoustic and lexical diversity that better reflects real-world speech.

For self-supervised pretraining, the system uses wav2vec 2.0 encoders trained on a large collection of untranscribed speech. This pretraining dataset includes approximately 3.84 million hours of speech with language labels across 1,239 languages, plus an additional 460,000 hours of audio without language labels, totaling around 4.3 million hours. That is roughly a third of the 12 million hours used by some competing models such as USM, so the reported results point to good data efficiency.
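As a quick check of the figures above, the arithmetic can be written out directly. The variable names are illustrative only; the hour counts are the approximate values quoted in the text.

```python
# Approximate pretraining hours quoted in the article.
language_labeled_hours = 3_840_000   # speech with language labels, 1,239 languages
no_language_label_hours = 460_000    # additional audio without language labels
usm_hours = 12_000_000               # figure quoted for USM

total_hours = language_labeled_hours + no_language_label_hours
share_of_usm = total_hours / usm_hours

print(f"total: {total_hours / 1e6:.2f}M hours")
print(f"share of USM pretraining data: {share_of_usm:.0%}")
```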

Architecture and Model Variants

Omnilingual ASR offers three primary model categories, all built upon the wav2vec 2.0 speech encoder backbone:

- Self-Supervised Learning (SSL) Encoders (OmniASR W2V): These wav2vec 2.0 encoders are trained with a contrastive learning objective and come in four sizes:

  - omniASR_W2V_300M with ~317 million parameters
  - omniASR_W2V_1B with ~966 million parameters
  - omniASR_W2V_3B with ~3 billion parameters
  - omniASR_W2V_7B with ~6.5 billion parameters

  After training, the quantizer is removed, and the encoder serves as a speech feature extractor.

- Connectionist Temporal Classification (CTC) ASR Models: These models add a linear classification layer atop the encoder and are trained end-to-end with a character-level CTC loss. Parameter counts range from approximately 325 million to 6.5 billion. The smallest model reaches a real-time factor of about 0.001 on an NVIDIA A100 GPU for 30-second audio clips, that is, roughly 0.03 seconds of compute per clip.

- Large Language Model (LLM) ASR Variants: These models integrate a Transformer-based decoder on top of the wav2vec 2.0 encoder. The decoder functions as a character-level language model, processing tokens including special markers like <BOS> (beginning of sequence) and <EOS> (end of sequence). Training optimizes next-token prediction over sequences combining speech and text embeddings. Model sizes range from 1.63 billion parameters (omniASR_LLM_300M) up to 7.8 billion parameters (omniASR_LLM_7B). A specialized zero-shot checkpoint (omniASR_LLM_7B_ZS) enables transcription of unseen languages without retraining.
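To make the character-level CTC output concrete, here is a minimal sketch of standard greedy CTC decoding: take the best label per encoder frame, collapse consecutive repeats, then drop blanks. The blank symbol `_` and the function name are assumptions for illustration; this is not taken from the released code, which may decode differently.

```python
from itertools import groupby
from typing import Iterable

BLANK = "_"  # hypothetical blank symbol, chosen only for this example


def ctc_greedy_decode(frame_labels: Iterable[str], blank: str = BLANK) -> str:
    """Collapse repeated frame labels, then remove blanks."""
    # groupby merges runs of identical consecutive labels into one entry.
    collapsed = [label for label, _ in groupby(frame_labels)]
    return "".join(label for label in collapsed if label != blank)


# Per-frame argmax labels for a short utterance (made-up data).
frames = list("hh_e_ll_lo__")
print(ctc_greedy_decode(frames))  # hello
```

The blank between the two runs of `l` is what lets CTC emit a doubled letter; without it the repeats would collapse into one.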

All LLM ASR models support optional language conditioning, where languages are encoded as {language_code}_{script} (e.g., eng_Latn for English in Latin script or cmn_Hans for Mandarin in Simplified Chinese). A learned embedding for the script is injected into the decoder input. During training, language tags are occasionally omitted, allowing the model to function without explicit language identifiers during inference.
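The tag format and the occasional omission of tags during training can be sketched as follows. The helper names and the `drop_prob` parameter are hypothetical; the article only says tags are "occasionally" omitted and gives no rate.

```python
import random
from typing import NamedTuple, Optional


class LangTag(NamedTuple):
    language: str  # e.g. "eng"
    script: str    # e.g. "Latn"


def parse_lang_tag(tag: str) -> LangTag:
    """Split a '{language_code}_{script}' tag into its two parts."""
    language, sep, script = tag.partition("_")
    if not sep or not language or not script:
        raise ValueError(f"expected '<language>_<script>', got {tag!r}")
    return LangTag(language, script)


def maybe_drop_tag(tag: str, drop_prob: float, rng: random.Random) -> Optional[str]:
    """Return None with probability drop_prob (assumed value), else the tag.

    Training on a mix of tagged and untagged examples is what lets a model
    run without a language identifier at inference time.
    """
    return None if rng.random() < drop_prob else tag


print(parse_lang_tag("eng_Latn"))
print(parse_lang_tag("cmn_Hans"))
```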

Zero-Shot Speech Recognition with Contextual Examples and SONAR Retrieval

While Omnilingual ASR covers over 1,600 languages with supervised data, many languages lack transcribed speech corpora. To address this, the system’s zero-shot LLM ASR model leverages in-context learning by conditioning on a few speech-text pairs from the target language.

During zero-shot training, the decoder receives N + 1 speech-text pairs: the first N serve as contextual examples, and the last is the transcription target. These pairs are embedded and concatenated into a single input sequence for the decoder, which learns to map speech to text based on limited examples.
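The N + 1 layout described above can be illustrated with a small sketch. Everything here is an assumption made for illustration: speech is represented by a placeholder list of floats rather than real encoder output, text is split into characters, and the exact placement of `<BOS>` and `<EOS>` in the real model may differ.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Segment = Tuple[str, list]  # ("speech" | "text", payload)


@dataclass
class Pair:
    speech: List[float]  # stand-in for a sequence of speech embeddings
    text: str            # transcript of that utterance


def build_decoder_input(context: Sequence[Pair], target_speech: List[float]) -> List[Segment]:
    """Concatenate N context pairs followed by the target utterance."""
    sequence: List[Segment] = []
    for pair in context:
        sequence.append(("speech", pair.speech))
        sequence.append(("text", ["<BOS>", *pair.text, "<EOS>"]))
    # The target speech comes last; the decoder continues after <BOS>.
    sequence.append(("speech", target_speech))
    sequence.append(("text", ["<BOS>"]))
    return sequence


context = [Pair([0.1, 0.2], "ab"), Pair([0.3], "c")]
for kind, payload in build_decoder_input(context, [0.5, 0.6]):
    print(kind, payload)
```

With N context pairs the sequence has 2N + 2 segments: each pair contributes a speech segment and a text segment, and the target contributes its speech plus the opening of its transcript.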

At inference, the omniASR_LLM_7B_ZS model can transcribe new utterances in any language, seen or unseen, by conditioning on a small set of example pairs, without any model updates. This approach mirrors few-shot learning techniques popular in natural language processing.

To select the most relevant context examples, Omnilingual ASR employs SONAR, a multilingual multimodal encoder that projects both audio and text into a shared embedding space. By embedding the target audio once, the system performs nearest neighbor searches over a database of speech-text pairs to retrieve the best contextual matches. This SONAR-based retrieval significantly enhances zero-shot transcription accuracy compared to random or text-only similarity-based selection.
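The retrieval step amounts to a nearest neighbor search in an embedding space. The sketch below uses cosine similarity over toy two-dimensional vectors; it does not call SONAR, and the function names, the similarity measure, and the data are assumptions chosen to show the idea.

```python
import math
from typing import List, Sequence, Tuple

Entry = Tuple[Sequence[float], str]  # (embedding of a stored example, its transcript)


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_context(query: Sequence[float], database: List[Entry], k: int) -> List[str]:
    """Return transcripts of the k entries most similar to the query embedding."""
    ranked = sorted(database, key=lambda entry: cosine(query, entry[0]), reverse=True)
    return [transcript for _, transcript in ranked[:k]]


# Toy database; real embeddings would come from a multimodal encoder.
database = [([0.9, 0.1], "a"), ([0.0, 1.0], "b"), ([0.7, 0.7], "c")]
print(retrieve_context([1.0, 0.0], database, k=2))  # ['a', 'c']
```

A linear scan like this is fine for a sketch; a large example database would normally use an approximate nearest neighbor index instead.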

Performance Highlights and Benchmark Results

The flagship omniASR_LLM_7B model achieves a character error rate (CER) below 10% on 78% of the 1,600+ supported languages, demonstrating robust accuracy across diverse linguistic contexts.
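For reference, CER is conventionally the character-level edit distance between hypothesis and reference divided by the reference length. The sketch below implements that standard definition and a helper for the "share of languages under a threshold" statistic; the per-language values are made up, and the benchmark's exact text normalization is not reproduced here.

```python
from typing import Sequence


def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(prev[j] + 1,              # delete a reference character
                            curr[j - 1] + 1,          # insert a hypothesis character
                            prev[j - 1] + (r != h)))  # substitute or match
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    if not ref:
        raise ValueError("reference must be non-empty")
    return edit_distance(ref, hyp) / len(ref)


def share_below(cers: Sequence[float], threshold: float) -> float:
    """Fraction of languages whose CER is strictly below the threshold."""
    return sum(c < threshold for c in cers) / len(cers)


print(cer("hello", "hallo"))                          # 0.2
print(share_below([0.05, 0.08, 0.12, 0.30], 0.10))    # 0.5
```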

On multilingual evaluation sets such as FLEURS 102, this 7-billion parameter LLM ASR model outperforms both its CTC counterparts and Google’s USM models in average CER, despite using less unlabeled data (4.3 million hours versus 12 million) and a simpler pretraining pipeline. These results underscore the effectiveness of scaling wav2vec 2.0 encoders combined with Transformer decoders for broad-coverage multilingual ASR.

Summary of Key Innovations

- Omnilingual ASR offers open-source speech recognition for over 1,600 languages and can generalize to more than 5,400 languages through zero-shot in-context learning.

- The models build on large-scale wav2vec 2.0 encoders pretrained on approximately 4.3 million hours of untranscribed audio: about 3.84 million hours with language labels across 1,239 languages, plus about 460,000 hours without language labels.

- The suite includes SSL encoders, CTC-based ASR, LLM-based ASR, and a dedicated zero-shot LLM ASR model, with encoder sizes ranging from roughly 300 million to 7 billion parameters and LLM ASR models of up to 7.8 billion parameters in total.

- The 7B LLM ASR model achieves CER below 10% on the majority of supported languages, rivaling or surpassing previous multilingual ASR systems, especially in low-resource scenarios.

Final Thoughts

Omnilingual ASR treats multilingual speech recognition as an extensible framework rather than a system tied to a fixed set of languages. It combines wav2vec 2.0 encoders of up to 7 billion parameters with both CTC and LLM decoders, plus a zero-shot LLM model that adapts to new languages from a few examples, and it reaches a CER below 10% on 78% of its supported languages. Released under Apache 2.0 and CC BY 4.0 licenses, it is among the most flexible and extensible open-source speech recognition systems currently available.