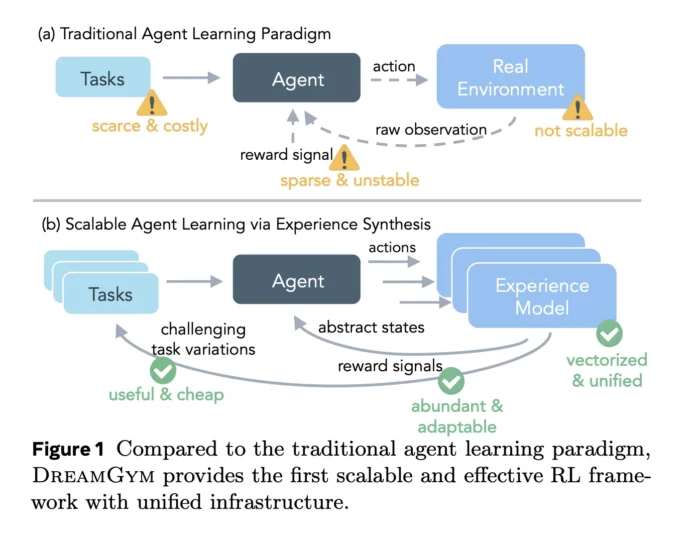

Reinforcement learning (RL) for large language model (LLM) agents is promising in theory, but in practice it is held back by high costs, complex infrastructure, and noisy reward signals. Training agents to navigate web pages or carry out multi-step tool interactions often demands tens of thousands of real-world interactions, each of which is slow, fragile, and difficult to reset. Meta’s DreamGym framework turns this bottleneck into a modeling problem. Instead of running RL directly in environments like WebShop, ALFWorld, or WebArena Lite, it trains a reasoning-driven experience model that simulates these environments entirely in text.

Challenges in Scaling RL with Real-World Environments

Current RL workflows for agents face four intertwined obstacles: expensive real-environment rollouts, limited task variety, unstable reward feedback, and complicated infrastructure. Web-based environments change frequently, rewards depend on brittle scrapers, and many agent actions are irreversible. Reset mechanisms and episode control are also hard to implement, which makes long-horizon tasks noisy and sample-inefficient.

Existing benchmarks fall into two categories. WebShop and ALFWorld are compatible with RL but require approximately 80,000 real environment transitions to achieve competitive baselines using algorithms like PPO or GRPO, making them costly. Conversely, WebArena Lite lacks reliable reset and automatic reward verification, rendering online RL training in the real environment practically impossible.

DreamGym: A Textual Simulator Powered by Reasoning

DreamGym introduces a novel approach centered on three integral components: a reasoning-based experience model, an experience replay buffer, and an adaptive curriculum task generator. Together, these elements create a synthetic Markov decision process (MDP) where the environment exists entirely as text.

The reasoning-based experience model (denoted as Mexp) operates in an abstract textual state space. Instead of raw HTML, states are concise summaries of task-relevant information, such as cleaned page elements. At each step, the model takes as input the current state, the agent's chosen action, the task instruction, and the interaction history. It retrieves the top-k most similar past transitions from the replay buffer and uses chain-of-thought reasoning to generate a reasoning trace, the next state, and the reward.

In effect, Mexp is an LLM-based world model for web and tool-based tasks that operates purely on text. It is trained via supervised fine-tuning on offline trajectories, with a joint objective over the reasoning trace and the next state conditioned on that trace. This training encourages the model to capture causal relationships rather than merely local text patterns.
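
The prediction step described above can be sketched as a single function call. This is a minimal illustration, not the authors' implementation: the `Transition` and `Prediction` types, the prompt layout, and the `generate` callable (standing in for a call to the fine-tuned experience model) are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Transition:
    state: str
    action: str
    next_state: str
    reward: float


@dataclass
class Prediction:
    reasoning: str
    next_state: str
    reward: float


# Hypothetical signature: prompt -> (reasoning trace, next state, reward).
GenerateFn = Callable[[str], Tuple[str, str, float]]


def build_prompt(task: str, history: List[Tuple[str, str]], state: str,
                 action: str, retrieved: List[Transition]) -> str:
    """Assemble the text inputs the article lists: task, retrieved
    transitions, interaction history, current state, and chosen action."""
    lines = [f"Task: {task}", "Similar past transitions:"]
    for t in retrieved:
        lines.append(
            f"- state={t.state!r} action={t.action!r} "
            f"-> next={t.next_state!r} reward={t.reward}"
        )
    lines.append("Interaction history:")
    for past_state, past_action in history:
        lines.append(f"- state={past_state!r} action={past_action!r}")
    lines.append(f"Current state: {state}")
    lines.append(f"Action: {action}")
    lines.append("Reason step by step, then give the next state and the reward.")
    return "\n".join(lines)


def experience_model_step(generate: GenerateFn, task: str,
                          history: List[Tuple[str, str]], state: str,
                          action: str, retrieved: List[Transition]) -> Prediction:
    """One synthetic transition: build the prompt, call the model, wrap the output."""
    prompt = build_prompt(task, history, state, action, retrieved)
    reasoning, next_state, reward = generate(prompt)
    return Prediction(reasoning=reasoning, next_state=next_state, reward=reward)
```

Keeping the model behind a plain callable makes it easy to swap in a stub for testing before a real model is wired in.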

Experience Replay Buffer: Anchoring Synthetic Experience

The experience replay buffer is initially populated with offline data collected from real environments such as WebShop, ALFWorld, and WebArena Lite. As DreamGym trains policies within the synthetic environment, it continuously adds new trajectories to this buffer. During each prediction step, Mexp uses an encoder to retrieve a small set of similar transitions from this memory, conditioning its reasoning and state generation on these examples.

This retrieval mechanism serves as a grounding tool, ensuring that synthetic transitions remain close to the real data distribution and minimizing hallucinations during extended rollouts. Experiments demonstrate that removing either the history or retrieval components degrades the consistency, informativeness, and factual accuracy of generated states, as evaluated by external metrics, and reduces downstream success rates on WebShop and WebArena Lite.
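
Top-k retrieval from the buffer can be illustrated with a small sketch. The article does not specify the encoder, so the bag-of-words `encode` function and cosine similarity below are stand-in assumptions, and `ReplayBuffer` is a hypothetical class written for this example.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def encode(text: str) -> Counter:
    """Toy stand-in for a learned encoder: bag-of-words token counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[tok] * b[tok] for tok in a if tok in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class ReplayBuffer:
    """Stores transitions and returns the k most similar to a query."""

    def __init__(self) -> None:
        self._items: List[Tuple[Counter, Dict]] = []

    def add(self, state: str, action: str, next_state: str, reward: float) -> None:
        # Index each transition by its (state, action) text.
        key = encode(f"{state} {action}")
        record = {"state": state, "action": action,
                  "next_state": next_state, "reward": reward}
        self._items.append((key, record))

    def retrieve(self, state: str, action: str, k: int = 3) -> List[Dict]:
        query = encode(f"{state} {action}")
        ranked = sorted(self._items,
                        key=lambda item: cosine(query, item[0]),
                        reverse=True)
        return [record for _, record in ranked[:k]]

    def __len__(self) -> int:
        return len(self._items)
```

A linear scan is enough for a sketch; a buffer that grows during training would more plausibly use an approximate nearest-neighbor index over dense embeddings.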

Adaptive Curriculum Driven by Reward Variability

The curriculum task generator leverages the same architecture as the experience model to select tasks for training. It prioritizes seed tasks exhibiting high reward variance under the current policy, indicating intermediate difficulty where the agent sometimes succeeds and sometimes fails. For each selected task, the model creates variations that maintain the same action types but alter constraints, goals, or context.

This selection strategy is based on reward entropy calculated over batches of rollouts per task. Tasks with balanced success and failure rates and non-zero variance are favored. Ablation studies reveal that disabling this adaptive curriculum results in a roughly 6% drop in performance on WebShop and WebArena Lite, with training plateauing early due to the replay buffer filling with easy, low-entropy trajectories.
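
The selection heuristic can be made concrete with a small sketch. It assumes binary success/failure outcomes per rollout and uses the binary entropy of the empirical success rate as the score; the function names and the exact scoring rule are illustrative assumptions, not the paper's definition.

```python
import math
from typing import Dict, List


def success_entropy(outcomes: List[int]) -> float:
    """Binary entropy (in bits) of the empirical success rate.

    Peaks at 1.0 when half the rollouts succeed and is 0.0 when the
    policy always succeeds or always fails.
    """
    if not outcomes:
        return 0.0
    p = sum(outcomes) / len(outcomes)
    if p in (0.0, 1.0):
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def select_seed_tasks(rollouts: Dict[str, List[int]], n: int) -> List[str]:
    """Return up to n task ids with the highest non-zero outcome entropy."""
    scored = {task: success_entropy(o) for task, o in rollouts.items()}
    candidates = [task for task, h in scored.items() if h > 0.0]
    candidates.sort(key=lambda task: scored[task], reverse=True)
    return candidates[:n]
```

With outcomes such as `{"easy": [1, 1, 1, 1], "hard": [0, 0, 0, 0], "medium": [1, 0, 1, 0]}`, only `"medium"` has non-zero entropy, so it is the only task that would be selected as a seed for generating variations.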

Integrating RL Algorithms Within DreamGym and Theoretical Insights

Within DreamGym, policies are trained using standard RL algorithms such as Proximal Policy Optimization (PPO) and Group Relative Policy Optimization (GRPO). The training process alternates between the policy selecting actions and the experience model generating the next states and rewards. From the perspective of the RL algorithm, DreamGym functions as a conventional environment interface.
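
A minimal sketch of that interface follows, assuming a hypothetical `model` callable that maps (task, history, state, action) to (next state, reward, done). `SyntheticEnv`, `rollout`, and `group_relative_advantages` are names introduced for this example. The advantage function shows one common form of the group-relative normalization used by GRPO, not necessarily the exact variant used in the paper.

```python
from statistics import mean, pstdev
from typing import Callable, List, Tuple

# Hypothetical signature: (task, history, state, action) -> (next_state, reward, done).
ExperienceModel = Callable[[str, List[Tuple[str, str]], str, str],
                           Tuple[str, float, bool]]


class SyntheticEnv:
    """Exposes an experience model through a reset/step interface."""

    def __init__(self, model: ExperienceModel, task: str,
                 initial_state: str, max_steps: int = 10) -> None:
        self.model = model
        self.task = task
        self.initial_state = initial_state
        self.max_steps = max_steps
        self.history: List[Tuple[str, str]] = []
        self.state = initial_state

    def reset(self) -> str:
        self.history = []
        self.state = self.initial_state
        return self.state

    def step(self, action: str) -> Tuple[str, float, bool]:
        # The model, not a real browser or simulator, produces the transition.
        next_state, reward, done = self.model(
            self.task, list(self.history), self.state, action)
        self.history.append((self.state, action))
        self.state = next_state
        # Truncate the episode once the step budget is used up.
        done = done or len(self.history) >= self.max_steps
        return next_state, reward, done


def rollout(env: SyntheticEnv, policy: Callable[[str], str]) -> float:
    """Run one episode and return the undiscounted sum of rewards."""
    state = env.reset()
    total, done = 0.0, False
    while not done:
        state, reward, done = env.step(policy(state))
        total += reward
    return total


def group_relative_advantages(returns: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize each return by the mean and standard deviation of its group."""
    mu = mean(returns)
    sigma = pstdev(returns)
    return [(r - mu) / (sigma + eps) for r in returns]
```

Because the policy only ever sees `reset` and `step`, the same training loop could be pointed at a real environment wrapper without changes, which is what makes a sim-to-real handoff straightforward.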

The framework also provides a theoretical trust-region style improvement bound linking policy performance in the synthetic MDP to that in the real environment. This bound incorporates error terms related to reward prediction inaccuracies and the divergence between real and synthetic transition distributions. As these errors diminish, improvements in DreamGym translate to enhanced performance in the actual task.
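
The paper's exact statement is not reproduced here. As a generic illustration of how those two error terms can enter such a bound, the standard simulation-lemma argument gives the following, under assumptions introduced for this example: rewards lie in $[0, R_{\max}]$, the discount factor is $\gamma < 1$, reward error is at most $\epsilon_R$, and the total variation distance between real and synthetic transitions is at most $\epsilon_P$ for every state-action pair. Here $J$ and $\hat{J}$ denote expected discounted return in the real and synthetic MDPs.

```latex
% Assumptions (for illustration only):
%   |R(s,a) - \hat{R}(s,a)| \le \epsilon_R,
%   D_{\mathrm{TV}}\big(P(\cdot \mid s,a),\, \hat{P}(\cdot \mid s,a)\big) \le \epsilon_P
%   for all (s,a).

% Value gap for any fixed policy \pi:
\left| J(\pi) - \hat{J}(\pi) \right|
  \;\le\; \Delta
  \;:=\; \frac{\epsilon_R}{1-\gamma}
  \;+\; \frac{2\gamma\,\epsilon_P\,R_{\max}}{(1-\gamma)^2}

% Consequence for an update from \pi to \pi' (triangle inequality):
J(\pi') - J(\pi) \;\ge\; \hat{J}(\pi') - \hat{J}(\pi) \;-\; 2\Delta
```

Read this way, an improvement measured in the synthetic MDP carries over to the real environment whenever it exceeds $2\Delta$, and $\Delta$ shrinks as the reward and transition errors shrink.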

Performance Evaluation Across Diverse Benchmarks

DreamGym has been evaluated using agents based on Llama and Qwen architectures across WebShop, ALFWorld, and WebArena Lite, revealing three distinct performance patterns.

- In RL-ready but resource-intensive environments like WebShop and ALFWorld, agents trained solely within DreamGym using synthetic transitions achieve performance on par with PPO and GRPO baselines that require approximately 80,000 real environment interactions. This demonstrates that reasoning-based synthetic experience can effectively guide stable policy improvement.

- In environments not suited to RL, such as WebArena Lite, DreamGym makes RL training practical, boosting success rates by over 30% compared to all non-RL baselines, including supervised fine-tuning and behavior cloning.

- For sim-to-real transfer, the DreamGym-S2R approach pretrains policies entirely in the synthetic environment before fine-tuning with a limited number of real rollouts. This method yields more than a 40% improvement over training from scratch in the real environment, while using less than 10% of the real data and reducing total training costs to roughly one-third to one-fifth of traditional RL approaches.

Summary of Core Contributions

- DreamGym replaces fragile real-world environment interactions with a reasoning-driven experience model operating in an abstract textual state space, predicting next states and rewards based on history, task context, and retrieved similar transitions.

- The framework integrates three key components: a reasoning experience model, an experience replay buffer seeded with real trajectories, and a curriculum task generator that selects and diversifies tasks using a reward entropy heuristic, collectively stabilizing and enriching RL training.

- In costly but RL-compatible environments like WebShop and ALFWorld, agents trained entirely within DreamGym using synthetic data match the performance of baselines relying on extensive real environment interactions.

- In environments unsuitable for direct RL, such as WebArena Lite, DreamGym facilitates online RL training, achieving over 30% higher success rates than all non-RL methods.

- Sim-to-real training with DreamGym-pretrained policies fine-tuned on limited real data delivers over 40% performance gains while drastically reducing data requirements and training expenses.

Final Thoughts

DreamGym is a step toward scalable, practical reinforcement learning for LLM agents. Rather than relying on fragile browser-based stacks, it recasts the environment as a reasoning-based experience model, anchored by a replay buffer and guided by a reward-entropy-driven curriculum. The improvements reported across WebArena Lite, WebShop, and ALFWorld with PPO and GRPO suggest that combining synthetic experience generation with sim-to-real adaptation could become a foundational paradigm for training agents at scale. Ultimately, DreamGym shifts the focus from policy optimization alone to improving the experience model as the primary lever for scaling RL agents.