
The Need for a Next-Generation Multilingual Encoder

For over half a decade, XLM-RoBERTa (XLM-R) has been the cornerstone of multilingual natural language processing (NLP), an unusually extended period of dominance in the rapidly evolving AI landscape. While early breakthroughs centered on encoder-only architectures like BERT and RoBERTa, the research community’s focus gradually shifted toward decoder-based generative models. Despite this trend, encoder models continue to offer superior efficiency and often excel in tasks such as embedding generation, information retrieval, and classification. However, innovation in multilingual encoders has largely plateaued.

Addressing this stagnation, a research team from Johns Hopkins University has introduced mmBERT, a state-of-the-art encoder that not only surpasses XLM-R but, on some low-resource language benchmarks, also outperforms large models such as OpenAI’s o3 and Google’s Gemini 2.5 Pro. This marks a significant step forward in multilingual encoding.

Dissecting mmBERT’s Design and Capabilities

mmBERT is available in two primary variants:

- Base version: Comprises 22 transformer layers, a hidden size of 768 (with a 1152-dimensional feed-forward intermediate layer), and approximately 307 million parameters, of which 110 million are non-embedding parameters.

- Compact version: Contains around 140 million parameters, including 42 million non-embedding parameters.
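You can check these figures for consistency. The gap between total and non-embedding parameters should be roughly the token-embedding matrix, which has one row per vocabulary entry. The sketch below divides that gap by the Gemma 2 tokenizer's 256,000-token vocabulary. It assumes that a single (tied) embedding matrix accounts for the whole gap, which is an approximation.

```python
# Consistency check: embedding width implied by the reported parameter counts.
# Assumption: the whole gap between total and non-embedding parameters is one
# token-embedding matrix of shape (vocab_size, width), e.g. tied with the MLM head.

VOCAB_SIZE = 256_000  # Gemma 2 tokenizer vocabulary


def implied_embedding_width(total_params: float, non_embedding_params: float,
                            vocab_size: int = VOCAB_SIZE) -> float:
    """Return (total - non-embedding) / vocab_size."""
    embedding_params = total_params - non_embedding_params
    return embedding_params / vocab_size


# Reported (rounded) parameter counts: (total, non-embedding)
VARIANTS = {"base": (307e6, 110e6), "small": (140e6, 42e6)}

for name, (total, non_emb) in VARIANTS.items():
    width = implied_embedding_width(total, non_emb)
    print(f"{name}: about {width:.0f} dimensions per embedding")
```

The results are roughly 770 for the base model and 383 for the compact one. These values are consistent with embedding widths of 768 and 384; the small residue comes from the rounded parameter counts.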

The model uses the Gemma 2 tokenizer with a 256,000-token vocabulary, incorporates rotary position embeddings (RoPE), and leverages FlashAttention2 for computational efficiency. A standout feature is its support for sequences of up to 8192 tokens, a substantial increase over XLM-R’s 512-token limit. Unpadding (skipping computation on padding tokens) and sliding-window attention keep these long inputs efficient. As a result, mmBERT can process contexts 16 times longer than XLM-R while still offering faster inference.
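To make the sliding-window idea concrete, here is a minimal sketch of a local attention mask in plain Python. The symmetric window and the 128-token width are illustrative assumptions, not confirmed mmBERT settings. Real implementations also compute this inside fused kernels such as FlashAttention2 rather than materializing a mask.

```python
from typing import List, Optional


def sliding_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """mask[i][j] is True when query i may attend to key j (|i - j| <= window // 2)."""
    half = window // 2
    return [[abs(i - j) <= half for j in range(seq_len)] for i in range(seq_len)]


def attended_pairs(seq_len: int, window: Optional[int] = None) -> int:
    """Number of query-key scores computed; window=None means full (global) attention."""
    if window is None:
        return seq_len * seq_len
    half = window // 2
    # Each query sees itself plus up to `half` keys on each side, clipped at the edges.
    return sum(min(i + half, seq_len - 1) - max(i - half, 0) + 1 for i in range(seq_len))


# Visualize a tiny mask: 'x' = allowed, '.' = blocked.
for row in sliding_window_mask(seq_len=6, window=2):
    print("".join("x" if allowed else "." for allowed in row))

# Compare scored pairs for full attention and a hypothetical 128-token window.
for n in (512, 8192):
    print(n, "full:", attended_pairs(n), "local:", attended_pairs(n, 128))
```

Counting the scored pairs shows the key property. Full attention grows quadratically with sequence length, while local attention grows roughly linearly, at about window + 1 pairs per token. ModernBERT-style encoders interleave such local layers with periodic full-attention layers, which this sketch does not model.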

Comprehensive Training Regimen and Data Sources

mmBERT’s training corpus is vast and diverse, encompassing 3 trillion tokens across an impressive 1,833 languages. The dataset integrates multiple sources such as FineWeb2, Dolma, MegaWika v2, ProLong, and StarCoder, ensuring broad linguistic coverage. English content constitutes roughly 10% to 34% of the data, varying by training phase to balance language representation.

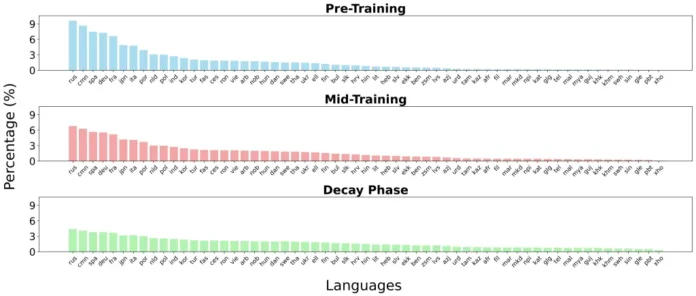

The training process unfolds in three distinct stages:

- Initial pre-training: Exposure to 2.3 trillion tokens spanning 60 languages plus programming code.

- Intermediate training: Focused on 600 billion tokens from 110 languages, emphasizing higher-quality datasets.

- Final decay phase: Incorporates 100 billion tokens covering all 1,833 languages, prioritizing adaptation to low-resource languages.
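The three stage budgets add up to the 3-trillion-token total. A short calculation shows how unevenly the budget is spread across stages:

```python
# Token budget per training stage, as reported: (stage, tokens, languages).
STAGES = [
    ("pre-training", 2_300_000_000_000, 60),   # plus programming code
    ("mid-training", 600_000_000_000, 110),
    ("decay", 100_000_000_000, 1_833),
]

total_tokens = sum(tokens for _, tokens, _ in STAGES)
print(f"total: {total_tokens / 1e12:.1f}T tokens")

for stage, tokens, n_langs in STAGES:
    share = tokens / total_tokens
    print(f"{stage:<13} {tokens / 1e9:>6,.0f}B  {share:6.1%}  {n_langs:,} languages")

# Languages that first appear in the decay stage.
new_in_decay = STAGES[2][2] - STAGES[1][2]
print(f"languages first seen in decay: {new_in_decay:,}")
```

About 77% of the tokens are consumed in the 60-language stage. The remaining 1,723 languages enter only in the final 100 billion tokens, roughly 3% of the budget, which is why the sampling and masking choices below matter so much for them.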

Innovative Training Techniques Enhancing mmBERT

mmBERT’s superior performance is driven by three key methodological advancements:

- Annealed Language Learning (ALL): Languages are introduced progressively (from 60 to 110, then to 1,833), while sampling shifts from favoring high-resource languages toward a more uniform distribution. This strategy prevents overfitting on abundant languages and boosts the representation of low-resource ones in later training stages.

- Inverse Masking Schedule: The masking ratio begins at 30%, promoting broad, coarse learning, and gradually decreases to 5%, allowing the model to refine its understanding with finer details as training progresses.

- Model Fusion via Decay Variants: Several models are trained during the decay phase, each emphasizing a different language subset (English-heavy, 110-language, and full 1,833-language). They are then merged using TIES merging, which harnesses their complementary strengths without retraining from scratch.
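One common way to move from size-proportional sampling toward a uniform mix is exponent smoothing, p_i ∝ n_i^α, a technique XLM-R also used. The sketch below assumes mmBERT's annealing works in this spirit. The corpus sizes and α values are hypothetical. The language set is also held fixed for clarity, even though mmBERT additionally grows it at each stage.

```python
from typing import Dict


def sampling_probs(token_counts: Dict[str, float], alpha: float) -> Dict[str, float]:
    """Exponent-smoothed sampling: p_i is proportional to n_i ** alpha.

    alpha = 1 reproduces the raw data proportions; alpha -> 0 approaches uniform.
    """
    weights = {lang: n ** alpha for lang, n in token_counts.items()}
    z = sum(weights.values())
    return {lang: w / z for lang, w in weights.items()}


# Hypothetical corpus sizes (tokens): high-, mid- and low-resource languages.
counts = {"eng": 1e12, "swa": 1e9, "fao": 1e7}

# Hypothetical annealing: a lower alpha at each successive stage.
for alpha in (0.7, 0.5, 0.3):
    probs = sampling_probs(counts, alpha)
    print(alpha, {lang: round(p, 4) for lang, p in probs.items()})
```

As α drops, the low-resource language's share rises by roughly two orders of magnitude while English's share shrinks. This is the rebalancing the bullet describes.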
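The inverse masking schedule can be sketched as a masking ratio that decays between the two reported endpoints, 30% and 5%. The source gives only the endpoints, so the linear decay here is a hypothetical shape. The masking function also omits BERT's usual 80/10/10 replacement rule for brevity.

```python
import random
from typing import List, Tuple

START_RATIO, END_RATIO = 0.30, 0.05  # reported start and end masking ratios
IGNORE_INDEX = -100  # label value skipped by PyTorch's cross-entropy loss by default


def mask_ratio(step: int, total_steps: int) -> float:
    """Hypothetical linear decay of the masking ratio from START_RATIO to END_RATIO."""
    frac = min(max(step / total_steps, 0.0), 1.0)
    return START_RATIO + (END_RATIO - START_RATIO) * frac


def mask_tokens(token_ids: List[int], ratio: float, mask_id: int,
                rng: random.Random) -> Tuple[List[int], List[int]]:
    """Mask round(ratio * len) random positions; labels keep the originals only there."""
    n_mask = round(len(token_ids) * ratio)
    positions = set(rng.sample(range(len(token_ids)), n_mask))
    inputs = [mask_id if i in positions else t for i, t in enumerate(token_ids)]
    labels = [t if i in positions else IGNORE_INDEX for i, t in enumerate(token_ids)]
    return inputs, labels


rng = random.Random(0)
ids = list(range(1000, 1100))  # 100 dummy token ids
for step in (0, 5_000, 10_000):
    ratio = mask_ratio(step, total_steps=10_000)
    _, labels = mask_tokens(ids, ratio, mask_id=4, rng=rng)
    print(f"step {step}: ratio {ratio:.3f}, masked {sum(l != IGNORE_INDEX for l in labels)}")
```

Early in training, masking many tokens forces the model to reconstruct from sparse context. Late in training, masking few tokens gives it nearly full context, which suits refining finer distinctions.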
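TIES merging (Yadav et al., 2023) combines fine-tuned checkpoints in three steps. First, it trims each task vector (model minus base) to its largest-magnitude entries. Second, it elects a sign for each parameter. Third, it averages only the entries that agree with that sign. Below is a minimal pure-Python sketch over flat parameter lists. Treating the checkpoint that the decay runs started from as the shared base is an assumption, and the `density` and `lam` values are illustrative. Production merges operate on full tensors.

```python
from typing import List


def ties_merge(base: List[float], finetuned: List[List[float]],
               density: float = 0.2, lam: float = 1.0) -> List[float]:
    """TIES merging over flat parameter lists: trim, elect sign, disjoint mean."""
    # Task vectors: how each fine-tuned model moved away from the shared base.
    task_vectors = [[f - b for f, b in zip(model, base)] for model in finetuned]

    # 1. Trim: keep roughly the top `density` fraction of entries by magnitude
    #    (ties at the threshold are all kept), zeroing the rest.
    trimmed = []
    for tv in task_vectors:
        k = max(1, round(density * len(tv)))
        threshold = sorted((abs(x) for x in tv), reverse=True)[k - 1]
        trimmed.append([x if abs(x) >= threshold else 0.0 for x in tv])

    merged = []
    for i, b in enumerate(base):
        values = [tv[i] for tv in trimmed]
        # 2. Elect sign: the direction with the larger total magnitude wins.
        total = sum(values)
        sign = (total > 0) - (total < 0)
        # 3. Disjoint mean: average only non-zero entries agreeing with the elected sign.
        agreeing = [v for v in values if v * sign > 0]
        delta = sum(agreeing) / len(agreeing) if agreeing else 0.0
        merged.append(b + lam * delta)
    return merged


# Toy example: three hypothetical "decay variants" fine-tuned from an all-zero base.
base = [0.0, 0.0, 0.0, 0.0]
variants = [
    [1.0, -2.0, 0.1, 0.0],
    [0.5, 1.0, 0.0, -0.3],
    [0.8, -1.5, -0.2, 0.4],
]
print(ties_merge(base, variants, density=0.5))
```

In the toy example, the second parameter's updates disagree in sign. A plain average would give about -0.83, whereas TIES drops the dissenting +1.0 and keeps -1.75. This reduction of interference between models is what the method is designed for.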

Benchmark Performance Highlights

- English Natural Language Understanding (GLUE): The mmBERT base model achieves a score of 86.3, outperforming XLM-R’s 83.3 and closely approaching ModernBERT’s 87.4, despite dedicating over 75% of its training to non-English data.

- Multilingual NLU (XTREME): mmBERT attains 72.8, surpassing XLM-R’s 70.4, with notable improvements in classification and question-answering tasks.

- Embedding Evaluations (MTEB v2): mmBERT ties with ModernBERT in English tasks (53.9 vs. 53.8) and leads in multilingual benchmarks (54.1 vs. XLM-R’s 52.4).

- Code Retrieval (CoIR): Demonstrates a roughly 9-point advantage over XLM-R, although EuroBERT still holds an edge on proprietary datasets.

Robustness in Low-Resource Language Scenarios

The annealed learning framework ensures that languages with limited data receive increased focus during the final training phase. On specialized benchmarks such as Faroese FoQA and Tigrinya TiQuAD, mmBERT significantly outperforms both OpenAI’s o3 and Google’s Gemini 2.5 Pro models. These results underscore the potential of carefully trained encoder architectures to generalize effectively even in extremely low-resource contexts.

Efficiency and Speed Advantages

mmBERT is also highly efficient, running 2 to 4 times faster than predecessors such as XLM-R and MiniLM while accepting inputs of up to 8192 tokens. It even processes 8192-token sequences faster than older encoders process 512-token ones. This speedup stems from its ModernBERT-based architecture and training recipe, notably FlashAttention2, sliding-window attention, and unpadding.

Concluding Insights

mmBERT emerges as a much-needed successor to XLM-R, redefining the capabilities of multilingual encoders. It combines speed, scalability, and superior performance across both high-resource and low-resource languages. Its innovative training approach, which pairs 3 trillion tokens with annealed language learning, inverse masking, and model merging, demonstrates how thoughtful design can achieve broad generalization without excessive computational overhead. As an open, efficient, and scalable solution, mmBERT sets a new benchmark and lays a solid foundation for future advancements in multilingual NLP.