A team from MBZUAI’s Institute of Foundation Models and G42 has released K2 Think, an open-source reasoning system with 32 billion parameters. It combines long chain-of-thought supervised fine-tuning with reinforcement learning from verifiable rewards, agentic planning, test-time scaling, and inference optimizations such as speculative decoding on wafer-scale hardware. The result is state-of-the-art mathematical reasoning among open models, along with competitive coding and science performance, at a much smaller parameter count than comparable systems. K2 Think is released fully open, including its weights, datasets, and source code.

Architecture and Design Principles of K2 Think

K2 Think is built by post-training the open-weight Qwen2.5-32B base model and adding a lightweight test-time computation scaffold that runs during inference. The design prioritizes parameter efficiency: a 32-billion-parameter backbone is small enough for fast experimentation and deployment while retaining enough capacity to benefit from post-training. The method rests on six components:

- Extended chain-of-thought (CoT) supervised fine-tuning to encourage detailed reasoning steps.

- Reinforcement Learning with Verifiable Rewards (RLVR) to optimize correctness across multiple domains.

- Agentic planning (“plan-before-you-think”), which outlines an approach before solving the problem.

- Test-time scaling through best-of-N sampling combined with verification mechanisms.

- Speculative decoding to accelerate token generation.

- Inference execution on Cerebras’ wafer-scale engine for high throughput.

The main goals are to raise pass@1 accuracy on hard math competitions, keep coding and scientific reasoning strong, and control response length and latency through plan-first prompting and hardware-aware inference.

Extended Chain-of-Thought Supervised Fine-Tuning

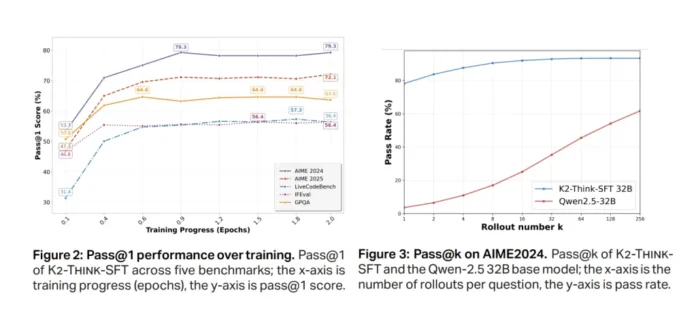

The first training phase fine-tunes the model on AM-Thinking-v1-Distilled, a curated dataset of long chain-of-thought reasoning traces and instruction/response pairs spanning mathematics, programming, science, instruction following, and conversation. This teaches the model to write out intermediate reasoning steps explicitly and to produce structured outputs. Pass@1 accuracy rises quickly and plateaus after roughly half an epoch, at about 79% on AIME 2024 and 72% on AIME 2025, indicating that supervised fine-tuning has largely converged before reinforcement learning begins.
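The source does not describe K2 Think’s SFT loss in detail. A common recipe for long chain-of-thought SFT computes the loss only on the response (reasoning trace plus answer) and masks the prompt. The sketch below assumes that recipe and pre-tokenized integer ids; `build_sft_example` and `IGNORE_INDEX` are hypothetical names, and the value -100 follows the default `ignore_index` of PyTorch’s `CrossEntropyLoss`.

```python
# Hypothetical sketch: one SFT example where only response tokens contribute to the loss.
IGNORE_INDEX = -100  # positions with this label are skipped by the cross-entropy loss


def build_sft_example(prompt_ids: list[int], response_ids: list[int], max_len: int) -> dict:
    """Concatenate prompt and response ids; mask prompt positions in the labels."""
    input_ids = (prompt_ids + response_ids)[:max_len]
    labels = ([IGNORE_INDEX] * len(prompt_ids) + response_ids)[:max_len]
    return {"input_ids": input_ids, "labels": labels}


example = build_sft_example([101, 102, 103], [7, 8, 9, 10], max_len=16)
print(example["labels"])  # [-100, -100, -100, 7, 8, 9, 10]
```

With long reasoning traces, `max_len` matters: anything past it is cut from both inputs and labels, so the cap should be large enough to hold complete traces.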

Reinforcement Learning with Verifiable Rewards: Enhancing Accuracy

After supervised fine-tuning, K2 Think is trained with reinforcement learning on the Guru dataset: roughly 92,000 prompts across six domains (mathematics, coding, science, logic, simulation, and tabular data), built so that the correctness of each final answer can be checked automatically. Training uses the verl library with a GRPO-style policy-gradient algorithm. A notable finding is that starting RL from a strong SFT checkpoint yields only modest gains and can plateau or even degrade, whereas running RL directly on the base model produces much larger relative improvements (up to 40% on AIME 2024). This points to a trade-off: the stronger the SFT starting point, the less headroom remains for RL.
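RLVR replaces a learned reward model with a programmatic check of the final answer. The sketch below shows the core of a GRPO-style learning signal under that assumption: several responses are sampled for one prompt, each receives a 0/1 reward from an exact-match verifier, and each response’s advantage is its reward normalized by the group’s mean and standard deviation. The function names are hypothetical and are not verl’s API; a real verifier would parse math expressions or run unit tests rather than compare strings.

```python
import statistics


def verifiable_reward(predicted: str, reference: str) -> float:
    """Binary reward: 1.0 if the extracted answer matches the reference exactly."""
    return 1.0 if predicted.strip() == reference.strip() else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantages: (reward - group mean) / (group std + eps).

    Uses the population std; implementations differ on this detail. When every
    reward in the group is equal, all advantages are 0 and the prompt adds no signal.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Four sampled answers to one prompt whose reference answer is "42".
samples = ["42", "41", "42", "7"]
rewards = [verifiable_reward(s, "42") for s in samples]
advantages = group_relative_advantages(rewards)
print(rewards)                            # [1.0, 0.0, 1.0, 0.0]
print([round(a, 3) for a in advantages])  # [1.0, -1.0, 1.0, -1.0]
```

Because advantages are relative to the group, a prompt the model already solves every time contributes nothing. This is one way to read the reported observation that RL from a strong SFT checkpoint leaves less room for improvement.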

Further experiments show that multi-stage RL starting from a reduced context window (for example, training at 16k tokens before expanding to 32k) underperforms and fails even to recover the supervised fine-tuning baseline. This suggests that cutting the maximum sequence length below the length used during fine-tuning disrupts the reasoning patterns the model has already learned.

Agentic Planning and Dynamic Test-Time Scaling

At inference, K2 Think first generates a concise plan of its problem-solving approach and then writes the full solution. The solution is sampled several times (best-of-N, e.g., N=3), and verifiers select the response most likely to be correct. The combination has two benefits: consistent gains in output quality and, despite the extra planning step, shorter final responses. Token counts fall by up to 11.7% (on Omni-HARD), and overall response lengths are comparable to those of much larger open models. Shorter responses directly reduce latency and compute cost.
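The plan-then-sample loop can be sketched as follows. This is a minimal illustration, assuming the plan is generated once and shared by all N candidates; `plan`, `solve`, and `score` are hypothetical stand-ins for model and verifier calls, and how K2 Think’s verifiers actually score candidates is not specified here.

```python
from typing import Callable

# Hypothetical stand-ins for model and verifier calls (not a real K2 Think API):
#   plan(problem) -> short plan text
#   solve(problem, plan, seed) -> one sampled candidate solution
#   score(problem, candidate) -> verifier score, higher is better
PlanFn = Callable[[str], str]
SolveFn = Callable[[str, str, int], str]
ScoreFn = Callable[[str, str], float]


def plan_then_best_of_n(problem: str, plan: PlanFn, solve: SolveFn,
                        score: ScoreFn, n: int = 3) -> str:
    """Plan once, sample n plan-conditioned candidates, return the best-scoring one."""
    outline = plan(problem)
    candidates = [solve(problem, outline, seed) for seed in range(n)]
    # max() keeps the first candidate among ties.
    return max(candidates, key=lambda c: score(problem, c))


# Toy usage: the three "samples" answer 3, 4 and 5; the verifier accepts only 4.
def toy_plan(problem: str) -> str:
    return "Add the two numbers."


def toy_solve(problem: str, outline: str, seed: int) -> str:
    return f"answer={3 + seed}"


def toy_score(problem: str, candidate: str) -> float:
    return 1.0 if candidate == "answer=4" else 0.0


print(plan_then_best_of_n("2 + 2 = ?", toy_plan, toy_solve, toy_score))  # answer=4
```

Generating the plan once amortizes its cost over all N candidates. Only the selected candidate is returned, which is why the final response length, not the total sampled tokens, is what the reported reductions describe.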

Comparative analysis shows that K2 Think’s response lengths are shorter than those of Qwen3-235B-A22B and align closely with GPT-OSS-120B on mathematical tasks. After integrating the plan-before-you-think and verifier mechanisms, average token usage decreases relative to the post-training checkpoint across multiple benchmarks, including AIME 2024 (-6.7%), AIME 2025 (-3.9%), HMMT 2025 (-7.2%), Omni-HARD (-11.7%), LCBv5 (-10.5%), and GPQA-D (-2.1%).

Speculative Decoding and High-Performance Hardware Inference

K2 Think is optimized for deployment on the Cerebras Wafer-Scale Engine, where speculative decoding delivers throughput exceeding 2,000 tokens per second. At that speed, the test-time scaffold of planning plus best-of-N sampling becomes practical for both production use and iterative research. This hardware-aware approach reflects the system’s “small but fast” philosophy: a modest model size paired with very fast inference.
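Speculative decoding uses a small draft model to propose several tokens and the large target model to verify them, so one expensive target pass can emit multiple tokens. The sketch below is the simplified greedy variant; the sampling variant instead accepts each draft token with probability min(1, p_target / p_draft) and resamples on rejection. The toy models are illustrative and are not K2 Think’s models or Cerebras’ implementation. In the greedy variant, the output is identical to decoding with the target model alone.

```python
from typing import Callable, Sequence

# A "model" here is just a greedy next-token function: context -> next token id.
NextToken = Callable[[Sequence[int]], int]


def greedy_decode(prefix: list[int], model: NextToken, max_new: int) -> list[int]:
    """Baseline: one model call per generated token."""
    out = list(prefix)
    for _ in range(max_new):
        out.append(model(out))
    return out


def speculative_greedy(prefix: list[int], target: NextToken, draft: NextToken,
                       k: int, max_new: int) -> list[int]:
    """Greedy speculative decoding with a draft model proposing k tokens per step."""
    out = list(prefix)
    while len(out) - len(prefix) < max_new:
        # 1) The cheap draft model proposes k tokens autoregressively.
        proposal, ctx = [], list(out)
        for _ in range(k):
            token = draft(ctx)
            proposal.append(token)
            ctx.append(token)
        # 2) The target verifies the proposal. Real systems score all k positions in
        #    one batched forward pass; this loop evaluates them one by one for clarity.
        accepted: list[int] = []
        for token in proposal:
            expected = target(out + accepted)
            if token != expected:
                accepted.append(expected)  # first mismatch: keep the target's token, drop the rest
                break
            accepted.append(token)
        else:
            accepted.append(target(out + accepted))  # all k accepted: one bonus target token
        out.extend(accepted)
    return out[: len(prefix) + max_new]


# Toy models over token ids 0-9: the draft agrees with the target except after token 5.
def toy_target(ctx: Sequence[int]) -> int:
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: Sequence[int]) -> int:
    return 0 if ctx[-1] == 5 else (ctx[-1] + 1) % 10


spec = speculative_greedy([0], toy_target, toy_draft, k=4, max_new=12)
print(spec == greedy_decode([0], toy_target, max_new=12))  # True
```

The speedup depends on how often the draft agrees with the target: each verification round emits between 1 and k + 1 tokens.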

Comprehensive Evaluation Methodology

Performance is evaluated on a suite of demanding benchmarks: competition mathematics (AIME 2024, AIME 2025, HMMT 2025, Omni-MATH-HARD), coding (LiveCodeBench v5, and SciCode reported separately for sub-problems and main problems), and scientific knowledge and reasoning (GPQA-Diamond, HLE). All evaluations use the same settings: a maximum generation length of 64,000 tokens, temperature 1.0, top-p 0.95, and the stop token </answer>. Each reported score is the mean of 16 independent pass@1 runs, which reduces run-to-run variance.
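The averaging protocol can be made concrete with a short sketch. The decoding values below are the ones reported above, but the dictionary key names are illustrative rather than any specific inference library’s parameters, and the run data is a toy example.

```python
import statistics

# Reported decoding settings; the key names are illustrative, not a specific library's API.
EVAL_CONFIG = {
    "max_new_tokens": 64_000,
    "temperature": 1.0,
    "top_p": 0.95,
    "stop": ["</answer>"],
    "num_runs": 16,
}


def pass_at_1(correct: list[bool]) -> float:
    """Percent of problems solved in one run with a single sample per problem."""
    return 100.0 * sum(correct) / len(correct)


def averaged_pass_at_1(runs: list[list[bool]]) -> float:
    """Mean of per-run pass@1 scores across independent runs."""
    return statistics.fmean([pass_at_1(run) for run in runs])


# Toy data: 4 problems, 16 runs; half the runs solve 3/4 problems, half solve 2/4.
toy_runs = [[True, True, False, True]] * 8 + [[True, False, False, True]] * 8
print(len(toy_runs) == EVAL_CONFIG["num_runs"])  # True
print(averaged_pass_at_1(toy_runs))              # 62.5
```

At temperature 1.0 individual runs can differ noticeably, as in the toy data (75% versus 50%), which is why averaging over 16 runs gives a more stable estimate.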

Performance Highlights Across Domains

Mathematics: K2 Think achieves a micro-average score of 67.99 across AIME 2024, AIME 2025, HMMT 2025, and Omni-HARD, the best among open-weight models and competitive with much larger systems. Individual scores are 90.83 on AIME 2024, 81.24 on AIME 2025, 73.75 on HMMT 2025, and 60.73 on the challenging Omni-HARD subset. These results highlight its parameter efficiency relative to models such as DeepSeek V3.1 (671B parameters) and GPT-OSS-120B.
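The reported micro-average (67.99) is well below the plain mean of the four scores (about 76.6), because a micro-average pools all problems, so larger benchmarks carry more weight. Since every other score is at least 73.75, Omni-HARD must account for at least about 44% of the pooled problems (from (73.75 − 67.99) / (73.75 − 60.73) ≈ 0.44). The per-benchmark problem counts are not given here, so the sketch below only contrasts the two kinds of average and does not try to reproduce 67.99. The function names are hypothetical.

```python
def macro_average(scores: dict[str, float]) -> float:
    """Unweighted mean: every benchmark counts equally."""
    return sum(scores.values()) / len(scores)


def micro_average(scores: dict[str, float], counts: dict[str, int]) -> float:
    """Problem-weighted mean: the same as pooling all problems into one benchmark."""
    total = sum(counts[name] for name in scores)
    return sum(scores[name] * counts[name] for name in scores) / total


k2_math = {"AIME 2024": 90.83, "AIME 2025": 81.24, "HMMT 2025": 73.75, "Omni-HARD": 60.73}
print(f"{macro_average(k2_math):.1f}")  # 76.6

# With equal (illustrative) problem counts, micro and macro averages coincide.
equal_counts = {name: 30 for name in k2_math}
print(abs(micro_average(k2_math, equal_counts) - macro_average(k2_math)) < 1e-9)  # True
```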

Coding: On LiveCodeBench v5, K2 Think scores 63.97, outperforming similarly sized and even larger open models such as Qwen3-235B-A22B, which scores 56.64. In SciCode, it attains 39.2 on sub-problems and 12.0 on main problems, closely matching the top open-source systems.

Scientific Reasoning: The model scores 71.08 on GPQA-Diamond and 9.95 on HLE, demonstrating versatility beyond mathematics and coding by maintaining competitive performance on knowledge-intensive scientific tasks.

Essential Specifications and Metrics

- Base Model: Qwen2.5-32B with open weights, enhanced through long chain-of-thought supervised fine-tuning and reinforcement learning with verifiable rewards (GRPO via verl).

- Reinforcement Learning Dataset: Guru, comprising approximately 92,000 prompts across six domains: Math, Code, Science, Logic, Simulation, and Tabular data.

- Inference Framework: Plan-before-you-think combined with best-of-N sampling and verifiers, resulting in shorter outputs (up to 11.7% fewer tokens on Omni-HARD) and improved accuracy.

- Inference Throughput: Approximately 2,000 tokens per second on Cerebras Wafer-Scale Engine using speculative decoding.

- Mathematics Micro-Average Score: 67.99 (AIME 2024: 90.83, AIME 2025: 81.24, HMMT 2025: 73.75, Omni-HARD: 60.73).

- Coding and Science Scores: LiveCodeBench v5: 63.97; SciCode: 39.2/12.0 (sub/main); GPQA-Diamond: 71.08; HLE: 9.95.

- Safety Metrics (macro-average): 0.75, the mean of four category scores: high-risk content refusal (0.83), conversational robustness (0.89), cybersecurity and data protection (0.56), and jailbreak resistance (0.72).
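The safety macro-average is the unweighted mean of the four category scores, which can be checked directly. The dictionary below simply restates the reported values.

```python
# Reported safety category scores; a macro-average weights each category equally.
safety = {
    "Refusal": 0.83,
    "Conversational robustness": 0.89,
    "Cybersecurity": 0.56,
    "Jailbreak resistance": 0.72,
}
macro = sum(safety.values()) / len(safety)
print(f"{macro:.2f}")  # 0.75
```

The equal weighting means the weakest category (cybersecurity, 0.56) pulls the headline number down as much as the strongest one lifts it.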

Conclusion: A New Benchmark in Efficient AI Reasoning

K2 Think exemplifies how a synergistic approach combining post-training refinement, dynamic test-time computation, and hardware-optimized inference can significantly narrow the performance gap with much larger, proprietary reasoning models. Its 32-billion-parameter scale strikes a balance between fine-tuning feasibility and deployment efficiency. The integration of plan-before-you-think prompting and best-of-N sampling with verifiers effectively manages token usage, while speculative decoding on wafer-scale hardware delivers throughput around 2,000 tokens per second. K2 Think is fully open-source, providing unrestricted access to its model weights, training datasets, deployment scripts, and inference optimization tools, fostering transparency and community-driven advancement in AI reasoning.