Text-to-video models typically generate a single clip from a prompt and then stop. They keep no persistent internal representation of the world that evolves as actions are taken. PAN, a model developed by MBZUAI’s Institute of Foundation Models, addresses this limitation by acting as a general world model that forecasts future world states as video. It conditions each prediction on both the history so far and natural language actions, which allows continuous interaction over long time horizons.

Transforming Video Generation into Dynamic World Simulation

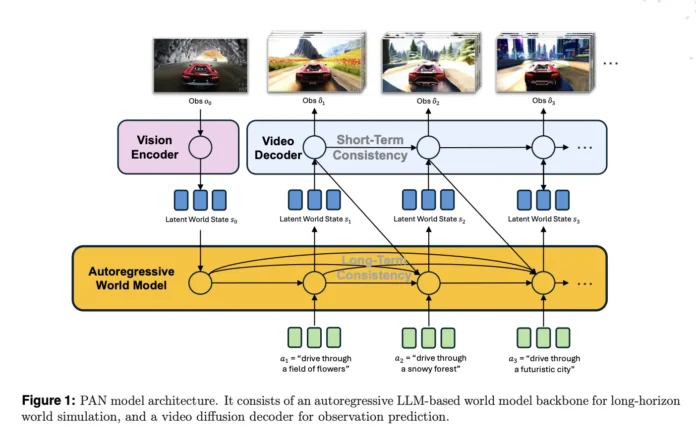

PAN is designed as a general, interactive world model that maintains a latent internal state reflecting the current environment. When it receives a natural language instruction, such as “turn left and accelerate” or “move the robotic arm toward the blue cube”, it updates this latent state accordingly. The updated state is then decoded into a short video segment showing the outcome of the action. Repeating this cycle lets the model simulate a continuously evolving world across multiple steps, rather than generating isolated clips.
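The update-then-decode cycle described above can be sketched as a small loop. This is a toy illustration under stated assumptions, not PAN's implementation: `WorldSimulator`, `toy_update`, and `toy_decode` are hypothetical names, the latent state is a plain list of floats, and a "clip" is just a string.

```python
from dataclasses import dataclass, field
from typing import Callable, List

State = List[float]  # hypothetical stand-in for a latent world state


@dataclass
class WorldSimulator:
    update: Callable[[State, str], State]  # assumed latent-dynamics interface
    decode: Callable[[State, str], str]    # assumed decoder interface
    state: State
    history: List[str] = field(default_factory=list)

    def step(self, action: str) -> str:
        """Advance the persistent state by one action and return the decoded clip."""
        self.state = self.update(self.state, action)
        clip = self.decode(self.state, action)
        self.history.append(clip)
        return clip


def toy_update(state: State, action: str) -> State:
    # Toy dynamics: shift every coordinate by the length of the command.
    return [s + len(action) for s in state]


def toy_decode(state: State, action: str) -> str:
    # Toy decoder: describe the clip instead of rendering video.
    return f"clip(state={state}, action={action!r})"


sim = WorldSimulator(update=toy_update, decode=toy_decode, state=[0.0])
for command in ["turn left and accelerate", "brake"]:
    sim.step(command)
```

The point of the sketch is that `state` persists between calls to `step`, so each new clip depends on everything that happened before it, not only on the latest prompt.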

This architecture empowers PAN to perform open-domain, action-conditioned simulations, enabling it to project alternative future scenarios based on different sequences of actions. External agents can leverage PAN as a simulator to evaluate potential outcomes, compare predicted futures, and make informed decisions grounded in these simulations.

Decoupling Dynamics from Visuals: The GLP Framework

At the core of PAN lies the Generative Latent Prediction (GLP) architecture, which separates the modeling of world dynamics from visual rendering. First, a vision encoder transforms input images or video frames into a latent representation of the world state. Next, an autoregressive latent dynamics module, built on a large language model, predicts the next latent state from prior states and the current action. Finally, a video diffusion decoder reconstructs the corresponding video segment from this predicted latent state.
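The three stages can be read as one compact chain. The symbols below are notation assumed for this sketch rather than taken from the article: $o_t$ is the observation at step $t$, $a_t$ the language action, $\hat{s}_t$ the latent state, $h$ the vision encoder, $f$ the latent dynamics module, and $g$ the video diffusion decoder.

$$
\hat{s}_t = h(o_t), \qquad
\hat{s}_{t+1} \sim f\left(\,\cdot \mid \hat{s}_{\le t},\, a_t\right), \qquad
\hat{o}_{t+1} \sim g\left(\,\cdot \mid \hat{s}_{t+1},\, a_t\right)
$$

Written this way, the separation is visible: only $g$ touches pixels, while $f$ operates purely in latent space.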

Specifically, PAN utilizes the Qwen2.5-VL-7B-Instruct model for both the vision encoder and the latent dynamics backbone. The vision encoder tokenizes frames into patches, generating structured embeddings, while the language model processes sequences of world states and actions alongside learned query tokens to output the next latent state. These latent representations reside within a shared multimodal space, integrating textual and visual information to ground the dynamics effectively.

The video diffusion decoder is adapted from Wan2.1-T2V-14B, a diffusion transformer designed for high-quality video synthesis. Training employs a flow matching objective with 1,000 denoising steps under a Rectified Flow framework. The decoder conditions on both the predicted latent state and the current natural language command, utilizing separate cross-attention streams for the world state and action text to enhance fidelity.
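For readers unfamiliar with flow matching, here is a minimal sketch of one common rectified-flow formulation. The interpolation direction (noise at t = 0, data at t = 1), the function names, and the toy vectors are assumptions for illustration. The article does not spell out PAN's exact parameterization.

```python
import random


def interpolate(noise, data, t):
    """Point on the straight line from noise (t=0) to data (t=1)."""
    return [(1.0 - t) * n + t * d for n, d in zip(noise, data)]


def target_velocity(noise, data):
    """Along a straight path the velocity is constant: data minus noise."""
    return [d - n for n, d in zip(noise, data)]


def flow_matching_loss(predict_velocity, noise, data, t):
    """Mean squared error between predicted and target velocity at time t."""
    x_t = interpolate(noise, data, t)
    v_pred = predict_velocity(x_t, t)
    v_true = target_velocity(noise, data)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_true)) / len(v_true)


rng = random.Random(0)
data = [1.0, -2.0, 0.5]                      # toy "clean" sample
noise = [rng.gauss(0.0, 1.0) for _ in data]  # Gaussian noise sample
t = rng.random()                             # random time in [0, 1)


def oracle(x_t, t):
    # A perfect predictor returns the true velocity, so its loss is zero.
    return target_velocity(noise, data)


def zero_predictor(x_t, t):
    # A predictor that always outputs zero incurs a positive loss.
    return [0.0] * len(x_t)


oracle_loss = flow_matching_loss(oracle, noise, data, t)
zero_loss = flow_matching_loss(zero_predictor, noise, data, t)
```

In a real decoder, `predict_velocity` would be the diffusion transformer, additionally conditioned on the predicted latent state and the action text.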

Enhancing Temporal Coherence with Causal Swin DPM and Sliding Window Diffusion

Naively chaining single-shot video models, with each clip conditioned only on the last frame of the previous one, often produces abrupt transitions and rapid quality degradation over long sequences. PAN addresses this with Causal Swin DPM, which extends the Shift-Window Denoising Process Model (Swin-DPM) with chunk-wise causal attention.

The decoder operates over a sliding temporal window containing two chunks of video frames at different noise levels. During denoising, the earlier chunk goes from noisy to clean and then leaves the window, while a new noisy chunk enters. The chunk-wise causal attention mechanism ensures that the later chunk attends only to the earlier chunk, preventing leakage of information from future unseen actions. This design smooths transitions between chunks and mitigates error accumulation over long horizons.
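The two ideas in this paragraph, a chunk-wise causal mask and a sliding two-chunk window, can be sketched in a few lines. Several details here are assumptions made for illustration: frames within a chunk are allowed to attend to each other, and the noise levels (0.5 and 1.0) are placeholder values, not PAN's schedule.

```python
def chunk_causal_mask(num_chunks, chunk_len):
    """mask[i][j] is True when frame i may attend to frame j.

    A frame sees its own chunk and all earlier chunks, never later ones.
    """
    n = num_chunks * chunk_len
    return [[(j // chunk_len) <= (i // chunk_len) for j in range(n)]
            for i in range(n)]


def slide_window(window, next_chunk_id, max_noise=1.0):
    """Finish the earlier chunk, shift the later one forward, admit a new one.

    window is [(chunk_id, noise_level), (chunk_id, noise_level)], earlier first.
    Both chunks are denoised by the amount that makes the earlier chunk clean.
    """
    (early_id, early_noise), (late_id, late_noise) = window
    new_window = [(late_id, late_noise - early_noise),
                  (next_chunk_id, max_noise)]
    return early_id, new_window


mask = chunk_causal_mask(num_chunks=2, chunk_len=2)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# prints:
# xx..
# xx..
# xxxx
# xxxx

finished, window = slide_window([(0, 0.5), (1, 1.0)], next_chunk_id=2)
```

After one slide, chunk 0 has left the window as a clean clip, chunk 1 is half denoised, and chunk 2 enters at full noise, so every chunk is generated with an already partially denoised predecessor as context.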

Additionally, PAN introduces controlled noise to the conditioning frames instead of relying on perfectly sharp inputs. This approach suppresses irrelevant pixel-level details, encouraging the model to focus on stable structural elements such as objects and spatial layout, which are crucial for accurate dynamic modeling.
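A minimal sketch of noising a conditioning frame follows. The linear blend with Gaussian noise and the `level` parameter are assumptions chosen for simplicity. The article does not state which noise schedule or level PAN uses.

```python
import random


def noise_conditioning_frame(frame, level, rng):
    """Blend each pixel with Gaussian noise; level=0 leaves the frame untouched.

    frame is a list of rows of floats; level is in [0, 1] (hypothetical knob).
    """
    if not 0.0 <= level <= 1.0:
        raise ValueError("level must be in [0, 1]")
    return [[(1.0 - level) * px + level * rng.gauss(0.0, 1.0) for px in row]
            for row in frame]


rng = random.Random(0)
frame = [[0.0, 0.5], [1.0, 0.25]]  # toy 2x2 grayscale frame

clean = noise_conditioning_frame(frame, 0.0, rng)   # identical to the input
noisy = noise_conditioning_frame(frame, 0.2, rng)   # fine detail is perturbed
```

With a small `level`, coarse structure (which pixels are bright or dark) survives while exact pixel values do not, which is the property the paragraph above relies on.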

Robust Training Pipeline and Diverse Data Sources

PAN is trained in two stages. In the first, the Wan2.1-T2V-14B decoder is adapted to the Causal Swin DPM architecture and trained in BFloat16 precision with the AdamW optimizer, a cosine learning rate schedule, and gradient clipping, using efficient attention implementations such as FlashAttention-3 and FlexAttention. This stage uses hybrid sharded data parallelism across 960 NVIDIA H200 GPUs to handle the computational demands.
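Two of the optimization ingredients named above, cosine learning rate scheduling and gradient clipping, are easy to show in isolation. The sketch below uses plain Python. The step counts, base learning rate, and clipping threshold are placeholder values, not PAN's hyperparameters.

```python
import math


def cosine_lr(step, total_steps, base_lr, min_lr=0.0):
    """Cosine decay from base_lr at step 0 to min_lr at total_steps."""
    progress = min(1.0, step / max(1, total_steps))
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


def clip_by_global_norm(grads, max_norm):
    """Rescale the gradient vector so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


# Placeholder values for illustration only.
schedule = [cosine_lr(s, total_steps=100, base_lr=1e-4) for s in (0, 50, 100)]
clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
```

The schedule starts at the base rate, passes through half of it at the midpoint, and decays to the minimum at the end. Clipping leaves small gradients untouched and rescales large ones to the threshold norm.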

In the subsequent phase, the frozen Qwen2.5-VL-7B-Instruct backbone is integrated with the video diffusion decoder under the GLP objective. While the vision-language model remains fixed, the system learns query embeddings and decoder parameters to ensure consistency between predicted latent states and reconstructed videos. This joint training employs sequence parallelism and Ulysses-style attention sharding to manage long context sequences efficiently. Training halts early after one epoch once validation metrics stabilize, despite a schedule allowing up to five epochs.

The training dataset comprises publicly available videos spanning everyday activities, human-object interactions, natural scenes, and multi-agent environments. Long videos are segmented into coherent clips using shot boundary detection, followed by rigorous filtering to exclude static or excessively dynamic scenes, low-quality footage, text overlays, and screen recordings. This filtering utilizes rule-based heuristics, pretrained detectors, and a custom vision-language model filter. Clips are then re-captioned with dense, temporally grounded descriptions emphasizing motion and causal events, enriching the dataset for action-conditioned learning.
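As an illustration of the rule-based part of such a filter, the sketch below rejects clips that are static or excessively dynamic using mean absolute frame difference. The metric, the thresholds, and the flattened-frame representation are all assumptions. The article does not describe PAN's actual heuristics.

```python
def motion_score(frames):
    """Mean absolute per-pixel change between consecutive frames.

    frames is a list of flattened frames with pixel values in [0, 1].
    """
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = [
        sum(abs(a - b) for a, b in zip(prev, cur)) / len(cur)
        for prev, cur in zip(frames, frames[1:])
    ]
    return sum(diffs) / len(diffs)


def keep_clip(frames, low=0.01, high=0.5):
    """Keep clips whose motion falls between two hypothetical thresholds."""
    return low <= motion_score(frames) <= high


static = [[0.2] * 4, [0.2] * 4, [0.2] * 4]    # nothing moves
moderate = [[0.0] * 4, [0.1] * 4, [0.2] * 4]  # gradual change
chaotic = [[0.0] * 4, [1.0] * 4, [0.0] * 4]   # full-frame flicker

decisions = [keep_clip(c) for c in (static, moderate, chaotic)]
```

Only the moderate clip passes. A production pipeline would combine several such signals with the pretrained detectors and the vision-language model filter mentioned above.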

Comprehensive Evaluation: Fidelity, Stability, and Planning

PAN is evaluated along three dimensions: action simulation fidelity, long-horizon prediction stability, and simulative reasoning for planning. The evaluation compares PAN with both open-source and commercial video generation and world modeling systems, including Wan 2.1/2.2, Cosmos 1/2, V-JEPA 2, Kling, MiniMax Hailuo, and Gen-3.

For action fidelity, a vision-language model-based evaluator measures how accurately PAN executes language-specified actions while preserving a stable environment. PAN achieves 70.3% accuracy in agent simulation and 47% in environment simulation, culminating in an overall score of 58.6%. This performance leads among open-source models and surpasses most commercial counterparts.

Long-horizon forecasting is quantified with two metrics. Transition Smoothness uses optical flow acceleration to gauge motion continuity across action boundaries. Simulation Consistency, inspired by WorldScore metrics, tracks degradation over extended sequences. PAN attains 53.6% in Transition Smoothness and 64.1% in Simulation Consistency, outperforming all baselines, including top commercial models.
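To make "optical flow acceleration" concrete, here is a toy sketch that treats the per-frame mean flow vector as velocity and its frame-to-frame change as acceleration. The mapping from acceleration to a score in (0, 1] is an assumption for illustration, not the formula used in PAN's evaluation.

```python
import math


def flow_acceleration(flows):
    """Magnitude of change between consecutive mean optical-flow vectors.

    flows is a list of (dx, dy) tuples, one per pair of adjacent frames.
    """
    return [math.hypot(x2 - x1, y2 - y1)
            for (x1, y1), (x2, y2) in zip(flows, flows[1:])]


def transition_smoothness(flows):
    """Hypothetical score: 1.0 for constant motion, lower for jerky motion."""
    acc = flow_acceleration(flows)
    return 1.0 / (1.0 + sum(acc) / len(acc))


steady = [(1.0, 0.0)] * 4                                   # constant motion
jerky = [(1.0, 0.0), (1.0, 0.0), (-1.0, 0.0), (1.0, 0.0)]   # reversal at a boundary

steady_score = transition_smoothness(steady)
jerky_score = transition_smoothness(jerky)
```

A sudden reversal of motion at an action boundary shows up as a spike in acceleration and pulls the score down, which is the kind of discontinuity the metric is meant to catch.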

In simulative reasoning and planning tasks, PAN is embedded within an OpenAI-o3 agent loop for stepwise simulation, achieving 56.1% accuracy, the highest among open-source world models evaluated.
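A stepwise simulate-then-choose loop of this kind can be sketched as follows. Everything here is hypothetical scaffolding: in the evaluated setup an agent would propose actions and PAN would do the simulating, whereas this toy uses a 1-D position, two fixed actions, and distance to the goal as the score.

```python
def plan_greedy(state, goal, propose, simulate, score, max_steps=10):
    """At each step, simulate every candidate action and keep the best one.

    Lower score is better. Planning stops once the score reaches zero.
    """
    plan = []
    for _ in range(max_steps):
        if score(state, goal) == 0:
            break
        candidates = propose(state)
        best = min(candidates, key=lambda a: score(simulate(state, a), goal))
        state = simulate(state, best)
        plan.append(best)
    return plan, state


def propose(state):
    # Stand-in for the agent: always offers the same two actions.
    return ["left", "right"]


def simulate(state, action):
    # Stand-in for the world model: move one unit along a line.
    return state + (1 if action == "right" else -1)


def score(state, goal):
    # Distance to the goal position.
    return abs(goal - state)


plan, final_state = plan_greedy(state=0, goal=3, propose=propose,
                                simulate=simulate, score=score)
```

The agent never acts in the real environment while planning. It only compares simulated futures and commits to the action whose predicted outcome scores best.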

Summary of Innovations and Achievements

- PAN realizes the Generative Latent Prediction framework by integrating a Qwen2.5-VL-7B-based latent dynamics backbone with a Wan2.1-T2V-14B video diffusion decoder, effectively uniting latent world reasoning and realistic video synthesis.

- The introduction of Causal Swin DPM, featuring a sliding window and chunk-wise causal denoising conditioned on partially noised past frames, significantly enhances long-term video stability and reduces temporal drift compared to conventional last-frame conditioning.

- Training is conducted in two stages: first adapting the Wan2.1 decoder to the Causal Swin DPM architecture on a large-scale GPU cluster, then jointly training the GLP stack with a frozen vision-language backbone and learned query embeddings.

- The training dataset encompasses extensive video-action pairs from diverse physical and embodied domains, processed through segmentation, filtering, and dense temporal recaptioning, enabling PAN to learn long-range, action-conditioned dynamics rather than isolated short clips.

- PAN sets new benchmarks in open-source action simulation fidelity, long horizon forecasting, and simulative planning, with notable scores such as 70.3% agent simulation accuracy, 47% environment simulation accuracy, 53.6% transition smoothness, and 64.1% simulation consistency, while remaining competitive with leading commercial systems.

Comparative Overview of Leading World Models

| Aspect | PAN | Cosmos Video2World | Wan2.1-T2V-14B | V-JEPA 2 |
|---|---|---|---|---|
| Developed by | MBZUAI Institute of Foundation Models | NVIDIA Research | Wan AI and Open Laboratory | Meta AI |
| Main Purpose | Interactive, long-horizon world simulation with natural language action conditioning | World foundation model for Physical AI, focusing on control and navigation via video-to-world generation | High-quality text-to-video and image-to-video generation for content creation and editing | Self-supervised video model for understanding, prediction, and planning |
| World Model Approach | Explicit GLP framework with latent state, action, and next observation, emphasizing simulative reasoning and planning | Generates future video worlds from past video and control prompts, targeting robotics and navigation | Primarily a video generation model without persistent internal world state | Predictive latent representation learning without explicit generative supervision in observation space |
| Core Architecture | GLP stack with Qwen2.5-VL-7B vision encoder, LLM-based latent dynamics, and video diffusion decoder with Causal Swin DPM | Diffusion and autoregressive world models with video2world generation and language model-based decoders | Spatiotemporal VAE and diffusion transformer with 14B parameters for versatile generative tasks | JEPA-style encoder-predictor architecture focusing on latent video representation prediction |
| Latent Space | Multimodal latent space integrating text and vision for encoding and autoregressive prediction | Token-based video2world latent space conditioned on text prompts and optional camera poses | VAE-derived latent space driven by text/image prompts without explicit action interface | Self-supervised latent space optimized for predictive representation learning |
| Action Input | Natural language commands applied at each simulation step | Text prompts and optional camera poses for control tasks | Text and image prompts without multi-step action conditioning | Not focused on natural language actions; used within larger planning systems |
| Long Horizon Strategy | Causal Swin DPM with sliding window and chunk-wise causal attention to reduce drift | Generates future video from past context; no explicit causal denoising mechanism described | Generates several seconds of video with focus on quality; lacks explicit world state mechanism | Relies on predictive latent modeling; no generative video rollout with diffusion windows |
| Training Data | Large-scale, diverse video-action pairs with segmentation, filtering, and dense recaptioning | Proprietary and public videos focused on Physical AI domains with curated pipelines | Open-domain video and image corpora for general visual generation | Large-scale unlabeled video for self-supervised learning |

Final Insights

PAN is a significant step because it puts the Generative Latent Prediction paradigm into practice with scalable, openly released components such as Qwen2.5-VL-7B and Wan2.1-T2V-14B. Its documented training and evaluation setup, together with quantitative metrics, positions PAN as a practical world model rather than a mere generative demonstration. The work highlights the potential of combining vision-language backbones with diffusion-based video decoders to build interactive, long-horizon simulators that can support complex reasoning and planning tasks.