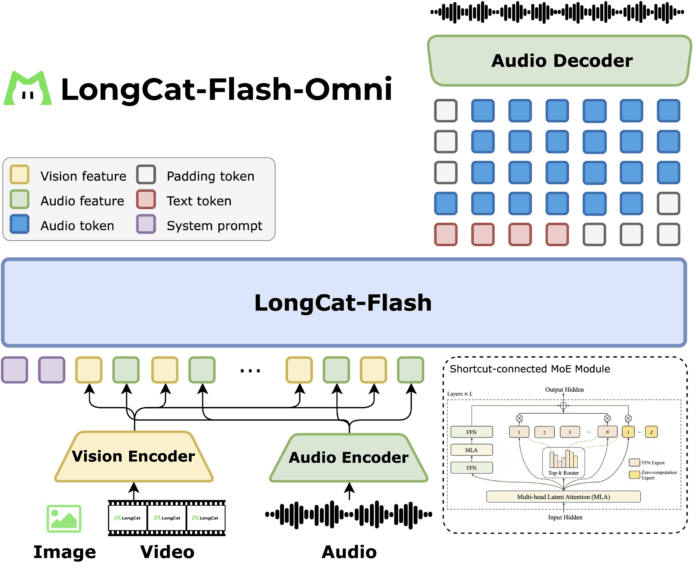

How can a single AI model process and respond to text, images, video, and audio in real time without sacrificing performance? Meituan’s LongCat team has released LongCat Flash Omni, an open-source omni-modal model with 560 billion total parameters, of which roughly 27 billion are activated per token. It builds on the shortcut-connected Mixture of Experts (MoE) architecture introduced in LongCat Flash, which lets the model grow its capacity without a proportional increase in per-token compute. By extending that text backbone to vision, video, and audio, LongCat Flash Omni supports a 128K-token context window, enabling long conversations and document-level understanding within one unified model.

Unified Architecture for Multimodal Perception

LongCat Flash Omni keeps the core language model unchanged and adds dedicated perception modules. A single LongCat Vision Transformer (ViT) encoder handles both still images and video frames, so no separate video-specific network is needed. On the audio side, an audio encoder works together with the LongCat Audio Codec, which converts speech into discrete tokens; the decoder can then produce speech from the same large language model (LLM) output stream, enabling real-time audio-visual interaction.
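One way to picture a single encoder serving both images and video is to treat a still image as a one-frame clip, so both inputs travel the same code path. The sketch below only counts patch tokens per frame; the `Frame` type, the helper names, and the 14-pixel patch size are hypothetical choices for illustration, not details from the LongCat release.

```python
from dataclasses import dataclass
from typing import List, Union

PATCH_SIZE = 14  # hypothetical patch size, not taken from the LongCat release


@dataclass(frozen=True)
class Frame:
    """A single image or video frame, described only by its pixel size."""
    height: int
    width: int


Visual = Union[Frame, List[Frame]]


def as_clip(x: Visual) -> List[Frame]:
    """Wrap a still image as a one-frame clip so images and videos share one path."""
    return [x] if isinstance(x, Frame) else list(x)


def patches_per_frame(frame: Frame, patch: int = PATCH_SIZE) -> int:
    """Number of non-overlapping patch tokens a ViT would cut from one frame."""
    return (frame.height // patch) * (frame.width // patch)


def encode_visual(x: Visual) -> List[int]:
    """Stand-in for the shared encoder: one patch-token count per frame."""
    return [patches_per_frame(f) for f in as_clip(x)]


print(encode_visual(Frame(224, 224)))        # still image -> [256]
print(encode_visual([Frame(448, 448)] * 3))  # 3-frame clip -> [1024, 1024, 1024]
```

A real encoder may also pool or merge patches across neighbouring frames; this sketch deliberately leaves that out and only shows the shared entry point.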

Efficient Streaming via Feature Interleaving

The model uses a chunk-wise interleaving strategy for audio-visual features, packing audio features, video frames, and timestamps into one-second segments. Video is sampled at 2 frames per second (fps) by default, and the rate is adjusted according to the video’s length, a scheme called duration-conditioned sampling. This keeps latency low while preserving the spatial context needed for tasks such as graphical user interface (GUI) understanding, optical character recognition (OCR), and video question answering (Video QA).
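The sampling and chunking steps can be sketched as follows. The 2 fps default and the one-second segments come from the description above; the exact duration rule is not given, so this sketch assumes a hypothetical frame budget (`MAX_FRAMES`) and spreads that budget evenly once 2 fps would exceed it. The `Chunk` layout and the function names are likewise illustrative assumptions.

```python
import math
from dataclasses import dataclass, field
from typing import List

DEFAULT_FPS = 2.0  # default sampling rate stated in the article
MAX_FRAMES = 128   # hypothetical frame budget; the real duration rule is not specified


def frame_times(duration_s: float) -> List[float]:
    """Duration-conditioned sampling (assumed rule): sample at 2 fps, but if that
    would exceed MAX_FRAMES, spread MAX_FRAMES evenly over the whole video."""
    n = min(int(duration_s * DEFAULT_FPS), MAX_FRAMES)
    if n <= 0:
        return []
    step = duration_s / n
    return [i * step for i in range(n)]


@dataclass
class Chunk:
    start_s: int                                        # timestamp of this 1-second segment
    frames: List[float] = field(default_factory=list)  # sampled frame times in the segment
    audio: List[int] = field(default_factory=list)     # audio tokens in the segment


def interleave(duration_s: float, audio_tokens: List[int], tokens_per_s: int) -> List[Chunk]:
    """Bundle the timestamp, sampled frames and audio tokens of each second."""
    chunks = [Chunk(start_s=i) for i in range(math.ceil(duration_s))]
    for t in frame_times(duration_s):
        chunks[int(t)].frames.append(t)
    for i, chunk in enumerate(chunks):
        chunk.audio = audio_tokens[i * tokens_per_s:(i + 1) * tokens_per_s]
    return chunks


print(len(frame_times(10.0)))   # 20 frames: a short clip keeps 2 fps
print(len(frame_times(600.0)))  # 128 frames: the budget caps a 10-minute video
```

Because each chunk carries its own timestamp, frames and audio from the same second stay adjacent in the token stream, which is what allows the model to respond while the input is still arriving.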

Progressive Training Strategy from Text to Multimodal

Training follows a staged curriculum. It starts from the LongCat Flash text backbone, which activates between 18.6 billion and 31.3 billion parameters per token, about 27 billion on average. Training then moves through continued pretraining on text and speech, multimodal pretraining with images and videos, context extension to 128K tokens, and finally alignment of the audio encoder with the model.
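The activation figures above translate into a small share of the full model per token. The arithmetic below uses only the numbers quoted in the article; the variable and function names are illustrative.

```python
TOTAL_PARAMS_B = 560.0  # total parameters, in billions (from the article)
ACTIVE_PARAMS_B = {"min": 18.6, "avg": 27.0, "max": 31.3}  # per-token activation, billions


def active_fraction(active_b: float, total_b: float = TOTAL_PARAMS_B) -> float:
    """Share of all parameters that take part in computing one token."""
    return active_b / total_b


for label, active in ACTIVE_PARAMS_B.items():
    print(f"{label}: {active_fraction(active):.1%}")
# min: 3.3%
# avg: 4.8%
# max: 5.6%
```

In other words, on average only about one parameter in twenty is used for any given token, which is how a 560B-parameter model can stay practical for real-time use.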

Advanced Systems Design with Modality-Decoupled Parallelism

Because the encoders and the LLM have very different compute profiles, Meituan uses modality-decoupled parallelism. The vision and audio encoders run with hybrid sharding and activation recomputation, while the LLM uses pipeline, context, and expert parallelism. A dedicated ModalityBridge component aligns embeddings and gradients between the encoders and the LLM. With this setup, multimodal supervised fine-tuning retains more than 90% of the throughput of text-only training, a notable systems engineering result.
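The split can be written down as a per-component plan, together with the throughput check behind the 90% figure. This is a descriptive sketch only: the strategy labels are hypothetical strings rather than flags of any real training framework, the ModalityBridge itself is not modeled, and the throughput numbers in the usage line are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ParallelPlan:
    techniques: Tuple[str, ...]  # hypothetical labels for the strategies named in the article


PLAN: Dict[str, ParallelPlan] = {
    # Encoders: hybrid sharding plus activation recomputation to save memory.
    "vision_encoder": ParallelPlan(("hybrid_sharding", "activation_recompute")),
    "audio_encoder": ParallelPlan(("hybrid_sharding", "activation_recompute")),
    # LLM: pipeline, context and expert parallelism.
    "llm": ParallelPlan(("pipeline_parallel", "context_parallel", "expert_parallel")),
}


def relative_throughput(multimodal_tps: float, text_only_tps: float) -> float:
    """Multimodal SFT throughput as a fraction of text-only training throughput."""
    return multimodal_tps / text_only_tps


def meets_target(multimodal_tps: float, text_only_tps: float, target: float = 0.9) -> bool:
    """True if the multimodal run keeps more than `target` of text-only throughput."""
    return relative_throughput(multimodal_tps, text_only_tps) > target


# Hypothetical tokens-per-second figures, for illustration only.
print(meets_target(9_200.0, 10_000.0))  # True: 92% of text-only throughput
```

Keeping the plans separate per component is the point of the design: the encoders and the LLM can each be parallelized in the way that suits their size and memory profile, instead of forcing one scheme on both.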

Performance Benchmarks and Comparative Analysis

LongCat Flash Omni scores 61.4 on OmniBench, ahead of Qwen 3 Omni Instruct (58.5) and Qwen 2.5 Omni (55.0) but behind Gemini 2.5 Pro (66.8). On VideoMME it reaches 78.2, close to GPT-4o and Gemini 2.5 Flash. On the VoiceBench audio benchmark it scores 88.7, slightly above GPT-4o Audio.
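The OmniBench margins are easy to make explicit. The scores below are the ones reported in the article; the helper name is illustrative, and the results are rounded to one decimal to avoid floating-point noise.

```python
from typing import Dict

OMNIBENCH: Dict[str, float] = {  # scores reported in the article
    "LongCat Flash Omni": 61.4,
    "Gemini 2.5 Pro": 66.8,
    "Qwen 3 Omni Instruct": 58.5,
    "Qwen 2.5 Omni": 55.0,
}


def margins(scores: Dict[str, float], ref: str) -> Dict[str, float]:
    """Points by which `ref` leads (+) or trails (-) every other model."""
    base = scores[ref]
    return {m: round(base - s, 1) for m, s in scores.items() if m != ref}


print(margins(OMNIBENCH, "LongCat Flash Omni"))
# {'Gemini 2.5 Pro': -5.4, 'Qwen 3 Omni Instruct': 2.9, 'Qwen 2.5 Omni': 6.4}
```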

Summary of Innovations and Impact

- LongCat Flash Omni is an open-source multimodal model built on Meituan’s 560B-parameter MoE backbone, activating roughly 27B parameters per token through a shortcut-connected MoE design that balances large capacity with inference efficiency.

- The model integrates unified vision and video encoding alongside a streaming audio pathway, employing a default 2 fps video sampling rate with duration-conditioned adjustments. Audio-visual features are synchronized in one-second chunks, enabling real-time, cross-modal interactions.

- It scores 61.4 on OmniBench, ahead of Qwen 3 Omni Instruct and Qwen 2.5 Omni, though still behind Gemini 2.5 Pro.

- Meituan’s modality-decoupled parallelism strategy allows vision and audio encoders to run with hybrid sharding, while the LLM leverages pipeline, context, and expert parallelism, maintaining over 90% of text-only training throughput during multimodal fine-tuning.

Insights and Future Directions

This release reflects Meituan’s push to make multimodal AI interaction practical and scalable rather than purely experimental. Because it retains the 560B-parameter shortcut-connected MoE with about 27B activated parameters, the language backbone stays compatible with earlier LongCat releases. Streaming audio-visual perception with duration-conditioned video sampling keeps latency low without sacrificing spatial awareness, and modality-decoupled parallelism keeps multimodal supervised fine-tuning above 90% of text-only training throughput, an efficiency level that matters for building real-time multimodal systems.