Understanding the Legal and Ethical Challenges of AI-Generated Video Content

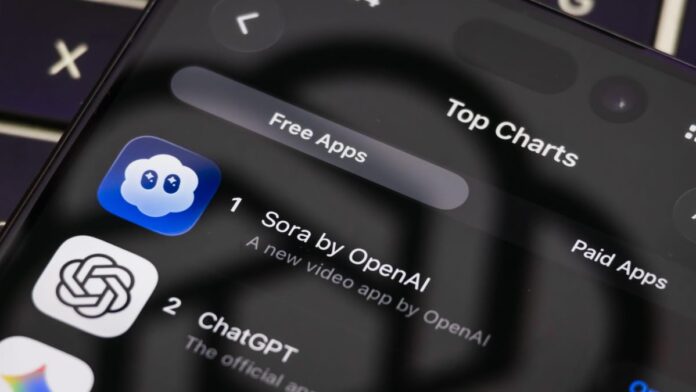

Generative AI video tools, such as OpenAI’s recently launched Sora 2, are rapidly transforming the creative landscape. While these platforms promise to democratize content creation by enabling users to produce videos with minimal effort, they also raise significant legal, ethical, and cultural questions. This article explores the multifaceted impact of AI video generation, focusing on intellectual property concerns, the evolving nature of creativity, and the challenges posed by deepfake technology.

Legal Complexities Surrounding AI Video Creation

When Sora 2 debuted, it offered users unrestricted freedom to generate any video content, leading to a surge in downloads and widespread use. However, this openness quickly resulted in unauthorized depictions of copyrighted characters and brands, sparking controversy. In response, OpenAI initiated communications with major intellectual property (IP) holders in Hollywood, offering them the option to exclude their content from AI-generated videos. Despite these efforts, many rights holders remain dissatisfied, emphasizing that the responsibility to prevent infringement lies primarily with the AI service provider.

Charles Rivkin, CEO of the Motion Picture Association (MPA), highlighted the proliferation of unauthorized videos featuring protected characters and stressed the urgency for OpenAI to implement robust safeguards. By mid-October, Sora 2 had introduced content filters that block requests involving protected likenesses, such as “Patrick Stewart fighting Darth Vader,” demonstrating progress toward compliance.
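How Sora 2's filters actually work has not been described here, so the following is only a minimal sketch of the general idea of blocking prompts that name protected likenesses. The blocklist entries come from the example above; the function names (`normalize`, `blocked_terms_in`, `is_allowed`) and the simple phrase-matching approach are assumptions for illustration, not OpenAI's method.

```python
import re

# Illustrative blocklist only; a real service would maintain a far larger,
# rights-holder-supplied list and likely use more than string matching.
BLOCKED_TERMS = {"patrick stewart", "darth vader"}


def normalize(text: str) -> str:
    """Lowercase the text and collapse punctuation/whitespace to single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def blocked_terms_in(prompt: str, blocked=BLOCKED_TERMS) -> list[str]:
    """Return the blocked phrases that appear as whole words in the prompt."""
    padded = f" {normalize(prompt)} "
    return sorted(term for term in blocked if f" {normalize(term)} " in padded)


def is_allowed(prompt: str) -> bool:
    """A prompt is allowed only if it names no blocked likeness."""
    return not blocked_terms_in(prompt)


if __name__ == "__main__":
    for prompt in ("Patrick Stewart fighting Darth Vader", "A dog surfing at sunset"):
        verdict = "allowed" if is_allowed(prompt) else "blocked"
        print(f"{prompt!r}: {verdict}")
```

A phrase list like this is easy to evade with misspellings or descriptions that avoid the name, which is one reason workarounds persist even after filters are introduced.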

Legal experts, including Sean O’Brien from Yale Law School, clarify that liability for AI-generated content typically falls on the human operator rather than the AI itself. This principle was reinforced in recent litigation involving AI training on copyrighted materials without authorization, underscoring the legal risks of unlicensed data use. The emerging U.S. legal framework currently holds that:

- Only human-created works qualify for copyright protection.

- AI-generated outputs are generally considered public domain by default.

- Users of AI systems bear responsibility for any infringement in the content they produce.

- Training AI models on copyrighted data without permission constitutes infringement.

AI’s Influence on Creativity and Artistic Expression

Creativity has traditionally been defined as the act of bringing something new into existence, whether through imagination, skill, or deliberate action. The rise of AI-generated art challenges conventional notions of authorship and originality. For instance, the U.S. Copyright Office recently reaffirmed that only works created by humans are eligible for copyright protection, raising questions about the status of AI-assisted creations.

Consider the example of digital artist Bert Monroy, who meticulously crafts photorealistic images pixel by pixel using Photoshop. His work exemplifies human creativity enhanced by digital tools, contrasting with AI-generated content that can be produced instantly by anyone with a prompt. This democratization of creative tools threatens traditional artists and professionals who have honed their skills over decades.

Maly Ly, CEO of the AI startup Wondr and a veteran marketing executive, offers a nuanced perspective: AI video generation compels us to rethink ownership and creativity. She argues that AI does not steal creativity but multiplies it by remixing existing works. Ly advocates for a future copyright system that is dynamic and transparent, rewarding original creators through traceable value flows rather than relying on outdated paperwork-based frameworks.

While AI tools like Sora 2 can empower new storytellers, they also risk undermining the livelihoods of skilled creators if not managed responsibly. The challenge lies in balancing innovation with respect for the craft and rights of original artists.

Distinguishing Reality from Fabrication in the Age of Deepfakes

The proliferation of AI-generated videos and deepfakes complicates our ability to discern truth from fiction. Historical precedents, such as the 1938 radio broadcast of “War of the Worlds” that caused widespread panic, illustrate how media can distort reality. Today, deepfakes (highly realistic synthetic videos) pose similar risks by enabling the manipulation of images and audio for misinformation or malicious purposes.

Families of deceased celebrities have expressed distress over unauthorized AI-generated content that misrepresents their loved ones, highlighting the emotional and ethical implications. Although many AI platforms implement restrictions to prevent misuse of real individuals’ likenesses, workarounds exist, and the technology continues to evolve rapidly.

To counter these risks, the Coalition for Content Provenance and Authenticity (C2PA) has defined a standard for embedding provenance metadata in media so that its origin can be verified. Additionally, services such as Loti AI offer free deepfake detection tools to help users identify manipulated content. However, experts agree that technological solutions alone are insufficient; critical thinking and media literacy remain essential defenses against deception.
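The idea behind provenance metadata can be sketched in a few lines: a claim about where the media came from is bound to a hash of the content and then signed, so any later change to the content or the claim is detectable. The example below is a toy illustration under stated assumptions, not the C2PA manifest format. The record layout, the function names, and the shared `DEMO_KEY` are hypothetical, and an HMAC stands in for the certificate-based signatures that real provenance systems use.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret for the demo; real systems sign with
# certificate-backed private keys rather than a shared key.
DEMO_KEY = b"demo-signing-key"


def make_manifest(media: bytes, generator: str, key: bytes = DEMO_KEY) -> dict:
    """Build a toy provenance record bound to the exact media bytes."""
    claim = {
        "generator": generator,
        "sha256": hashlib.sha256(media).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(media: bytes, manifest: dict, key: bytes = DEMO_KEY) -> bool:
    """Check that the claim is untampered and still matches the media."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was altered
    return manifest["claim"]["sha256"] == hashlib.sha256(media).hexdigest()


if __name__ == "__main__":
    video = b"placeholder bytes standing in for a video file"
    manifest = make_manifest(video, "example-video-model")
    print(verify_manifest(video, manifest))            # original content
    print(verify_manifest(video + b"edit", manifest))  # modified content
```

Note the limit of this approach: a valid record shows that labeled content is unmodified, but stripping the metadata entirely leaves nothing to check, which is why detection tools and media literacy still matter.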

The Road Ahead: Balancing Innovation, Responsibility, and Creativity

Legal professionals like Richard Santalesa emphasize the tension between fostering innovation and protecting existing IP rights. OpenAI’s approach, including opt-in and opt-out mechanisms for content inclusion, reflects an attempt to navigate this complex terrain. However, the potential for extensive litigation looms as courts grapple with applying traditional copyright laws to AI-generated works.

Ultimately, AI video tools are designed to augment human creativity rather than replace it. They offer unprecedented opportunities for expression and storytelling but require careful governance to prevent abuse and ensure fair compensation for original creators.

As AI-generated video technology continues to advance, the conversation around ownership, authenticity, and creative value will intensify. Stakeholders, including creators, users, companies, and policymakers, must collaborate to establish frameworks that encourage innovation while safeguarding rights and maintaining trust.
