Inside OpenAI’s Empire: A Conversation with Karen Hao

Niall Firth: Hi everyone, and welcome back to this special edition of Roundtables. These are subscriber-only events where you can listen in on conversations between editors and journalists. I’m thrilled to say we have a fantastic event planned for today: I’m delighted to have Karen Hao with us to discuss her new book. She is a fantastic AI journalist. How are you, Karen?

Karen Hao: Good. Niall, thank you so much for welcoming me back.

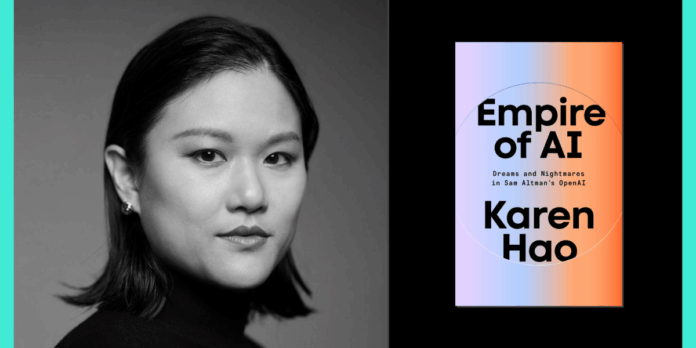

Niall Firth: Nice to see you. I’m sure you all know Karen, and that’s why you’re here. Karen holds a mechanical engineering degree from MIT. She has been cited by Congress, has written for The Wall Street Journal and The Atlantic, and set up a series of classes at the Pulitzer Center to teach journalists how to cover AI.

Most importantly, she is here to discuss her new book, Empire of AI. I have a copy of it here. In the UK, the subtitle is “Inside the reckless race for total domination,” and in the US, it’s “Dreams and nightmares in Sam Altman’s OpenAI.” It’s a remarkable feat of reporting, based on 300 interviews, including with 90 people at OpenAI. It’s a brilliant look not only at OpenAI’s growth and at the character of Sam Altman, which is interesting in its own right, but also an astute look at what kind of AI we’re building and who holds the key.

Karen, at the core of the book is the rise and rise of OpenAI, which was the subject of one of your very first big features for MIT Technology Review. It was a brilliant piece of journalism that revealed for the first time what was happening inside OpenAI… and they hated it!

Karen Hao: Yes, and thank you all for being here. It’s great to be back home; I still consider MIT Tech Review my journalistic home. That story only happened because Niall assigned it to me after I said, “Hey, OpenAI seems like an interesting thing,” and he said I should profile them. Niall thought I could do it, even though I had never written about a company before and didn’t think I was up to it. It really wouldn’t have been possible without you.

I wanted to understand what OpenAI is. I went in with an open mind and took their words at face value. They were founded as a nonprofit, with a mission to ensure that artificial general intelligence benefits all of humanity. What do they mean by that? What are they trying to accomplish? How do they balance being mission-driven with the need to raise capital?

After spending three days inside the company and interviewing dozens of people outside it, I realized there was a fundamental gap between what the company publicly proclaimed and how it actually operated. That gap became the focus of my profile, and that is why they weren’t very happy.

Niall Firth: How has OpenAI changed since you wrote that profile? That misalignment seems to have only gotten more confusing and messy in the years since.

Karen Hao: Absolutely. OpenAI is now arguably one of the most capitalistic companies in Silicon Valley. They raised $40 billion in the largest private funding round in the history of the tech industry, and they are valued at $300 billion. And yet they still claim to be a nonprofit.

This really gets at the heart of how hard OpenAI has tried to position and reposition itself throughout its decade-long existence, ultimately playing to whichever narratives it thinks will work best with the public and with policymakers, regardless of what it may actually be doing to develop and commercialize its technologies.

Niall Firth: You quote Sam Altman in the book, and the race to AGI is what motivates a lot of these things; I’ll come back to that before the end. He compares the work to a Manhattan Project for AI, and you quote him quoting Oppenheimer (of course there’s no self-aggrandizing here): “Technology happens because it is possible.”

I feel like a theme of the book is the idea that technology doesn’t just happen; it is the result of choices people make. Things are not inevitably the way they are. What people believe matters, and it shapes the direction we travel in. If that’s true, what does it mean in practice?

Karen Hao: OpenAI in particular made a key decision very early in its history that led to the AI technologies we now see dominating the marketplace and the headlines: the decision to advance AI by scaling existing techniques. When OpenAI was founded, at the end of 2015, and when it made this decision, around 2017, that was a very unpopular view in the broader AI field.

There was a spectrum of ideas on how to advance AI, with two extremes. At one extreme was the view that we already have all the necessary techniques and should scale them aggressively. At the other was the view that we don’t yet have the necessary techniques, and that to get more breakthroughs we need to keep innovating and doing fundamental AI research. The field assumed this side [focusing on fundamental AI research] was the most likely to lead to advances. But OpenAI was adamantly committed to the first extreme: the idea that we could just take neural networks, pump in ever more data, and train them on supercomputers larger than any ever built.

They made this decision because they were competing with Google, which held a monopoly on AI talent. OpenAI knew it couldn’t beat Google by focusing on research breakthroughs alone. That is a very difficult path: you never know when a breakthrough will come in fundamental research. Progress there isn’t linear, but scaling can be. You can keep making gains as long as you keep pumping in more data and computing power, and they thought they could do that faster than anyone else: “We’re going to be able to leapfrog Google by doing this.” It also suited Sam Altman’s skill set, because he’s a once-in-a-generation fundraiser, and capital is the main bottleneck when you want to scale up AI models.

This is how you can ultimately see that technology is the product of human choices and perspectives. The specific skills and talents the team had at the time determined how they chose to proceed.

Niall Firth: To be fair, it worked, right? It was amazing. The breakthroughs, GPT-2 and GPT-3, were just mind-blowing when we look back.

Karen Hao: Yes, it’s remarkable how well it worked, because there was a lot of skepticism that scale would lead to the kind of technical progress we’ve seen. But one of my biggest criticisms of this approach is the cost. There are many ways to advance AI. We could have gotten all these benefits, and could continue to reap more in the future, without such a highly consumptive and expensive approach to development.

Niall Firth: On the consumptive point, we’ve recently covered that here at MIT Technology Review, looking at the energy costs of AI. The costs of data centers are absolutely incredible, right? The numbers behind them are astonishing. And it’s only going to get worse over the next few decades if we continue on this path, right?

Karen Hao: Yes, everyone should read the series Tech Review published on the energy issue, if they haven’t already. It breaks down everything, from the energy consumed by a single interaction with a model up to the level of the whole industry.

There’s one number I’ve seen a lot and repeated often. According to a McKinsey study, if data centers and supercomputers continue to be built and scaled up at the current pace, in five years we will have to add between two and six times California’s energy consumption to the grid. Most of that will be supplied by fossil fuels, because these data centers and supercomputers must run 24/7, and we don’t have enough nuclear capacity to power such massive infrastructure. So we are accelerating climate change.

We’re also accelerating a public-health crisis. Thousands of tons of air pollution are being pumped out by coal plants whose lives are being extended, and by methane gas turbines being built, to power these data centers. We’re also intensifying the freshwater crisis, because these facilities have to be cooled with fresh water; anything else would corrode the equipment and cause bacterial growth.

Bloomberg recently published a story showing that two-thirds or more of these data centers are going to be built in water-scarce areas, where communities already lack enough fresh water. These are just some of the dimensions I mean when I talk about the extraordinary costs of this particular path of AI development.

Niall Firth: On the costs and extractive processes of making AI, I wanted to give you a chance to talk about another theme of the book apart from OpenAI’s explosive growth: a colonial lens on the way AI is created. The empire. This one is close to home for us, because the idea came from your reporting at MIT Technology Review, which you continued in the book. Tell us how this framing helps us understand how AI is created today.

Karen Hao: Yes, this was a frame I began thinking about a lot when I worked on the AI Colonialism series for Tech Review. Those stories examined how, even before ChatGPT, the commercialization and deployment of AI was already entrenching historical inequities.

One example was a story about how facial recognition companies were flooding into South Africa, trying to harvest more data at a time when their technology was being criticized for failing to accurately recognize Black faces. The deployment of these facial recognition technologies on the streets of Johannesburg was leading to what South African scholars called a digital apartheid: a recreation of the control of Black bodies and the movement of Black people.

This idea stayed with me for a long time. In my reporting for that series, I came across so many examples supporting the thesis that the AI industry was perpetuating these inequities that it felt like the industry was becoming a neocolonial power. Then ChatGPT was released, and it became apparent that this was only accelerating.

Once you scale these technologies up, training them on the entire internet and using supercomputers the size of dozens, if not hundreds, of football fields, you start to see an extraordinary level of extraction and exploitation around the world in order to produce them. The historical power imbalances become even more apparent.

I draw four parallels in my book between what I now call empires of AI and the empires of the past. The first is that empires claim resources that aren’t their own. These companies scrape data that isn’t theirs and take intellectual property they don’t own.

The second is that empires exploit a large amount of labor. We see these companies go to countries in the Global South, or other economically vulnerable areas, to contract workers for some of the worst jobs in the pipeline for developing these technologies. The technologies they produce are also labor-automating by nature, so they perpetuate labor exploitation in their own right.

The third is that empires monopolize knowledge production. In the last decade, the AI industry has absorbed more and more of the world’s AI researchers, who increasingly are no longer contributing to open science or working in universities and independent institutes. The effect on research is similar to what would happen if most climate scientists were funded by oil and gas companies: you would not get a clear picture of the climate crisis. Likewise, we don’t have a clear understanding of this technology’s limitations, or of whether it is possible to overcome them.

The fourth and final feature is that empires always engage in aggressive race rhetoric, in which there are evil empires and good empires. The good empire must be strong enough to defeat the evil empire, and so it should be allowed to consume and exploit all these resources. If the evil empire gets the technology first, humanity goes to hell. If the good empire gets it first, it will civilize the world and humanity will go to heaven. On many levels, the empire metaphor was the best way to describe how these companies operate and their impact on the world.

Niall Firth: Oh, brilliant. You talk about the evil empire: what happens if the evil empire gets it first? That brings me to AGI, which I mentioned earlier. It’s like an extra character in your book, like a ghost at the dinner table, looming over everything and saying, “This is what drives everything at OpenAI. This is what we have to get to before anyone else.”

There’s a section in the book about how OpenAI talks internally about needing to make sure AGI stays in US hands, where it’s secure, rather than anywhere else. And some of the international staff openly say: isn’t that a strange way to frame it? Why is the US version better than other versions?

Tell us how it drives their work. And AGI isn’t a given that will definitely happen in the future, is it? It’s still not a thing.

Karen Hao: It’s not clear whether it’s possible, or even what it is. Cade Metz wrote a New York Times article citing a survey of longtime AI researchers in the field: 75% of respondents believe we still don’t have the techniques to reach AGI. AGI can be defined as recreating human intelligence in software, but we don’t even agree on what human intelligence is. In the book, I discuss how there is no consensus on what human intelligence is, what it looks like, or which capabilities we should use to evaluate these systems to determine whether we have reached that point. So OpenAI can define it however they want.

It’s this ever-present, shifting goalpost. The company has used a wide range of definitions over the years. They even joke about it: if you asked 13 OpenAI researchers to define AGI, you would get 15 different definitions. They are aware that it isn’t a well-defined term and doesn’t mean much.

It does serve the purpose of creating a quasi-religious fervor about what they’re trying to do, where people believe that they must keep driving towards this distant horizon and that when they arrive, it will have a civilizationally transformational impact. What else could you do in your life but work on this? Who else but you should be working on this?

They use it to justify continuing to push, scale, and consume all of these resources, because none of that consumption and none of the harm matters if you reach the destination. They also use it to justify developing their technology in a deeply anti-democratic way, claiming that they alone have the expertise and the right to control its development: “We can’t allow anyone else to participate, because this technology is just too powerful.”

Niall Firth: I’m interested in the religious framing, and you mention the factions. AGI used to be a niche concept that was fun to talk about, but it has now become mainstream. There’s the boomers-and-doomers dichotomy. Where do you fall on that spectrum?

Karen Hao: The boomers think AGI will bring us to utopia, and the doomers believe AGI will destroy all of humanity. To me, these are really two sides of the same coin. Both believe AGI is possible, that it is imminent, and that it will change everything.

I’m not on that spectrum. I’m in a third space: the AI accountability space. It is based on the observation that these companies, to return to the empire analogy, have accumulated a tremendous amount of economic and political power.

To avoid a return to the age of empire, we must hold these companies accountable using all the tools available to us. We also need to recognize the harms they are already causing through their misguided AI development.

Niall Firth: We have a few questions from readers, and I’m going to try to pull them together. Abbas asked what post-imperial AI would look like, and Liam asked a similar question: How can you create a more ethical AI that doesn’t fit into this framework?

Karen Hao: We’ve touched on this a bit already. There are many ways to develop AI, and many different techniques have been used over AI’s decades-long history. Techniques have risen and fallen for various reasons, and not always because of their technical or scientific merit. Sometimes certain techniques are popularized for business reasons or because of the ideologies of whoever is funding them. We’re seeing this today, with AI development indexing entirely on large models.

We talked about the remarkable technical leaps these large-scale models have made, but in terms of social progress or economic advancement, their benefits have been middling. I think we can shift to AI that is A) more beneficial and B) less imperial by refocusing on task-specific AI systems that tackle well-defined challenges: computational optimization problems that play to the strengths of AI systems.

I’m referring to things like using AI to integrate renewable energy into the grid. We need this. We need to accelerate electrification, and one of the challenges is the unpredictable nature of renewable energy. This is where AI technologies are strong: predicting and optimizing energy production from different renewable sources to match it with the demand of the people drawing energy from the grid.

Niall Firth: Many people in the chat have asked versions of this question: What can an early-career AI scientist, or anyone involved with AI, do to help bring about more ethical AI? Is it too late, or do they still have any power?

Karen Hao: I don’t think it’s too late at all. I’ve talked to a lot of people in the general public, and one of their biggest challenges is that they don’t know of any alternatives. They want AI’s benefits, but they don’t want to be part of a harmful supply chain. The first question they always ask is: Is there an alternative? Which tools should I use? Unfortunately, there aren’t many options available right now.

So I would tell early-career AI entrepreneurs and researchers to create these alternatives, because many people are excited about switching to ethical options. One analogy I use is that the AI industry needs to go through what the fashion industry went through. Fashion also involved a great deal of environmental and labor exploitation, and there was enough consumer demand to create new markets for ethically and sustainably sourced clothing. We need more options like that in AI.

Niall Firth: Are you optimistic about the future? Where do you sit? Things aren’t great right now, as you describe them. Where is the hope for us?

Karen Hao: I am. I’m super optimistic. Do you have enough material to write about?

Everyone is talking about AI now, and that’s a good thing; it’s a great way to start. It’s important that we pay attention to how these technologies are used and developed. We need to have this public debate, and having it at all is a major change from the past.

The next step is to turn awareness into action and have people take an active part. That was a major reason I wrote this book. Think about the many ways you interact with the AI supply chain, such as when you give or withhold your data.

There’s probably a data center being built near you right now. If you are a parent, some sort of AI policy is being crafted at your kid’s school. Some kind of AI policy is being crafted in your workplace. All of these are what I call sites of democratic contestation, where you can express your opinion about how AI should be developed and deployed. If you don’t want these companies to take certain types of data, you can push back.

I didn’t like that they were scraping photos from my social media accounts to train generative AI models, so I closed all my personal accounts. I’ve seen students, parents, and teachers form committees in schools to discuss and draft their AI policies, and businesses are doing the same.

I’m super optimistic that things will improve if we all step up and take an active role.

Niall Firth: Mark mentioned in the chat the Māori story from New Zealand at the end of your book. Isn’t that an example of community-led AI?

Karen Hao: Yeah. A community in New Zealand wanted to revitalize the Māori language by building a speech-recognition tool that could recognize Māori and transcribe a rich archive of audio recordings of their ancestors speaking it. When they started the project, they asked the community: “Do you want this AI tool?”

Niall Firth: Imagine that.

Karen Hao: Yes, I know! This idea of consent at every stage is a radical one. They asked the community for consent, and the community said yes. They then launched a public awareness campaign to explain what it takes to develop an AI tool: “We’re going to need some data. We will need audio-transcription pairs to train this AI model.” Then they ran a contest to encourage dozens, even hundreds, of community members to donate data. They made sure to actively explain to the community, at every stage, how their data would be used and stored, and how it would remain protected. Any other project that wants to use the data must first get the community’s permission and consent.

It was a democratic process at every step: deciding whether the community wanted the tool, developing it, determining how it would be used, and determining how their data would be used in the future.

Niall Firth: Excellent. I know we’ve gone a little over time, but I have two more questions for you. I’m going to combine all the questions people asked in the chat about what role regulation should play. What’s your view?

Karen Hao: Yeah. In an ideal world, where we had a functioning federal government, regulation would absolutely play a major role. And it shouldn’t be only about how to regulate an AI model once it is built; it should cover the entire AI supply chain. That means regulating data and what can be used to train these models, and regulating land use: What land can be used for data centers? How much water and energy can data centers consume? It also means regulating transparency. We don’t even know what data is in these training data sets, what the environmental costs of training these models are, or how much water these data centers use. These companies are actively withholding this information, which prevents democratic processes from taking place. If regulators were to make one major intervention, it would be to increase transparency across the supply chain.

Niall Firth: Okay. Let’s finish by going back to OpenAI and Sam Altman. He sent a famous email after your original Tech Review story, didn’t he, saying essentially that this wasn’t great and they didn’t like it. And he didn’t want to talk to you for your book either?

Karen Hao: No, he did not.

Niall Firth: He did not. Imagine Sam Altman is in this chat. He subscribes to Technology Review and is watching this Roundtable to see what you have to say about him. What would you ask him if you could speak to him directly?

Karen Hao: How much harm must you see before you realize you should choose a different path?

Niall Firth: Nicely blunt and to the point. Karen, I appreciate your time.

Karen Hao: Thank you so much, everyone.

MIT Technology Review Roundtables are an online event series for subscribers only where experts discuss what’s new in emerging technologies. Sign up to be notified about upcoming sessions.