Introducing IBM’s Granite 4.0: A Breakthrough in Efficient Large Language Models

IBM has released Granite 4.0, an open-source family of large language models (LLMs) that replaces the monolithic Transformer stack with a hybrid design combining Mamba-2 state-space layers and Transformer blocks. According to IBM, this design significantly reduces memory consumption during inference while maintaining output quality. The lineup includes four model variants, ranging from a compact 3-billion parameter dense model to a 32-billion parameter hybrid mixture-of-experts (MoE) model with approximately 9 billion active parameters.

Revolutionizing Model Architecture: The Hybrid Mamba-2/Transformer Approach

The hybrid (H) models in Granite 4.0 interleave Mamba-2 state-space layers with self-attention Transformer blocks in a 9:1 ratio, so roughly nine of every ten layers are Mamba-2. IBM reports that this structure cuts RAM usage by more than 70% during long-context and multi-session inference compared with conventional Transformer-only LLMs. A large part of the saving comes from the fact that Mamba-2 layers keep a fixed-size state, whereas each attention layer keeps a key-value cache that grows with context length and with the number of concurrent sessions. Lower memory use translates into lower GPU costs at a given throughput and latency target. IBM’s internal benchmarks indicate that even the smallest Granite 4.0 models outperform predecessors such as Granite 3.3-8B despite having fewer parameters.
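To make the memory argument concrete, here is a minimal sketch that builds a 9:1 layer schedule and compares key-value cache size for a Transformer-only stack against a hybrid one. The layer count, KV head count, head dimension, and the exact placement of attention blocks are hypothetical values chosen for illustration, not Granite 4.0's published configuration, and the fixed-size Mamba-2 state is ignored.

```python
from typing import List


def hybrid_layer_schedule(num_layers: int, mamba_per_attention: int = 9) -> List[str]:
    """Return a layer-type list with one attention block after every
    `mamba_per_attention` Mamba-2 layers.

    The placement (attention last in each group) is an assumption made
    for illustration; only the 9:1 ratio comes from the article.
    """
    period = mamba_per_attention + 1
    return [
        "attention" if (i + 1) % period == 0 else "mamba2"
        for i in range(num_layers)
    ]


def kv_cache_bytes(
    num_attention_layers: int,
    context_tokens: int,
    num_kv_heads: int,
    head_dim: int,
    bytes_per_value: int = 2,  # 2 bytes per value for BF16
    sessions: int = 1,
) -> int:
    """Size of the key-value cache: one K and one V tensor per attention
    layer, each holding context_tokens * num_kv_heads * head_dim values."""
    return (
        2
        * num_attention_layers
        * context_tokens
        * num_kv_heads
        * head_dim
        * bytes_per_value
        * sessions
    )


# Hypothetical model shape, NOT Granite 4.0's real configuration.
NUM_LAYERS = 40
KV_HEADS = 8
HEAD_DIM = 128
CONTEXT_TOKENS = 128_000

schedule = hybrid_layer_schedule(NUM_LAYERS)
hybrid_attention_layers = schedule.count("attention")

dense_cache = kv_cache_bytes(NUM_LAYERS, CONTEXT_TOKENS, KV_HEADS, HEAD_DIM)
hybrid_cache = kv_cache_bytes(hybrid_attention_layers, CONTEXT_TOKENS, KV_HEADS, HEAD_DIM)

print(f"Attention layers in hybrid stack: {hybrid_attention_layers} of {NUM_LAYERS}")
print(f"Transformer-only KV cache: {dense_cache / 1e9:.2f} GB")
print(f"Hybrid KV cache:           {hybrid_cache / 1e9:.2f} GB")
```

In this toy configuration the cache shrinks from about 21 GB to about 2 GB per 128,000-token session. That tenfold drop applies only to the KV cache under these assumed dimensions; it is not IBM's measured figure, which covers total RAM including weights and the Mamba-2 state.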

Granite 4.0 Model Variants: Tailored for Diverse Use Cases

IBM offers both Base and Instruct versions across four initial Granite 4.0 models:

- Granite-4.0-H-Small: A 32-billion parameter hybrid MoE model with around 9 billion active parameters.

- Granite-4.0-H-Tiny: A 7-billion parameter hybrid MoE model with approximately 1 billion active parameters.

- Granite-4.0-H-Micro: A 3-billion parameter hybrid dense model.

- Granite-4.0-Micro: A 3-billion parameter dense Transformer model designed for environments that do not yet support hybrid architectures.
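As a rough illustration of what "active parameters" means for the variants listed above, the sketch below computes the fraction of each model's parameters that is used per token. The parameter counts are the approximate figures from the list; the `GraniteVariant` class and `active_fraction` helper are hypothetical names introduced here, and dense models are assumed to activate all of their parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GraniteVariant:
    name: str
    total_params_b: float   # total parameters, in billions (approximate)
    active_params_b: float  # parameters used per token, in billions (approximate)
    architecture: str


VARIANTS = [
    GraniteVariant("Granite-4.0-H-Small", 32, 9, "hybrid MoE"),
    GraniteVariant("Granite-4.0-H-Tiny", 7, 1, "hybrid MoE"),
    # Dense models use every parameter for every token.
    GraniteVariant("Granite-4.0-H-Micro", 3, 3, "hybrid dense"),
    GraniteVariant("Granite-4.0-Micro", 3, 3, "dense Transformer"),
]


def active_fraction(variant: GraniteVariant) -> float:
    """Share of the model's parameters that participates in each forward pass."""
    return variant.active_params_b / variant.total_params_b


for v in VARIANTS:
    print(f"{v.name:22s} {v.architecture:18s} active share: {active_fraction(v):.0%}")
```

The active share mainly affects compute per token. All parameters of an MoE model still have to be stored, so the total count is what drives weight memory.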

All models are released under the Apache-2.0 license and are cryptographically signed so that users can verify their integrity. IBM also describes Granite 4.0 as the first open-source LLM family certified under the ISO/IEC 42001:2023 AI management system standard, which it presents as evidence of its commitment to trustworthy AI. Reasoning-optimized “Thinking” variants are slated for release in 2025.

Training Regimen, Context Length, and Data Types

Granite 4.0 models were trained on data containing sequences of up to 512,000 tokens and have been evaluated on contexts as long as 128,000 tokens, which makes them suitable for long documents and extended sessions. The public checkpoints on Hugging Face use BF16 precision, and quantized and GGUF conversions are also provided to simplify deployment. FP8 is supported as an execution precision on compatible hardware, but it is not the format of the released weights.
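The effect of weight precision on memory can be estimated with simple arithmetic. The sketch below assumes idealized storage costs of 2 bytes per parameter for BF16 and, for hypothetical 8-bit and 4-bit quantizations, 1 and 0.5 bytes per parameter. The article does not say which quantization levels are published, and real quantized files carry extra overhead (scales, metadata), so treat the results as lower bounds for weights only.

```python
# Idealized bytes per parameter. The "8-bit" and "4-bit" entries are
# hypothetical quantization levels used for illustration only.
BYTES_PER_PARAM = {"bf16": 2.0, "8-bit": 1.0, "4-bit": 0.5}

# Approximate total parameter counts, in billions, from the variant list.
TOTAL_PARAMS_B = {
    "Granite-4.0-H-Small": 32,
    "Granite-4.0-H-Tiny": 7,
    "Granite-4.0-H-Micro": 3,
    "Granite-4.0-Micro": 3,
}


def weight_memory_gb(total_params_billions: float, precision: str) -> float:
    """Estimate weight storage in decimal gigabytes.

    billions of parameters * bytes per parameter = gigabytes, since the
    factors of 1e9 cancel. Activations and caches are not included.
    """
    return total_params_billions * BYTES_PER_PARAM[precision]


for name, params_b in TOTAL_PARAMS_B.items():
    estimates = ", ".join(
        f"{precision}: {weight_memory_gb(params_b, precision):.1f} GB"
        for precision in BYTES_PER_PARAM
    )
    print(f"{name:22s} {estimates}")
```

Under these assumptions the 32-billion parameter model needs about 64 GB for BF16 weights, while the 3-billion parameter models need about 6 GB.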

Enterprise-Grade Performance: Benchmark Highlights

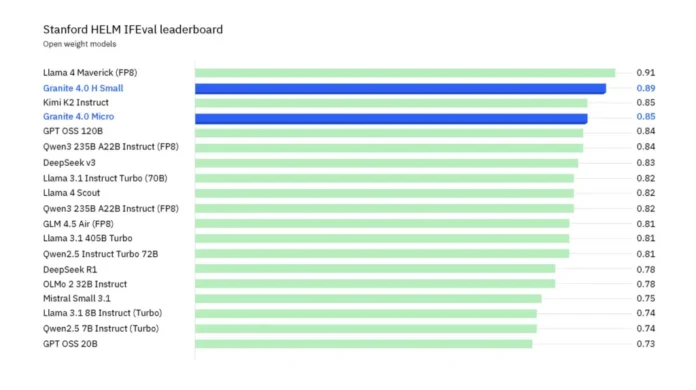

IBM emphasizes Granite 4.0’s strong performance in instruction-following and tool-use scenarios, validated through several key benchmarks:

- IFEval (HELM): Granite-4.0-H-Small ranks among the top open-weight models, surpassed only by much larger models such as Llama 4 Maverick.

- BFCLv3 (Function Calling): The H-Small model competes effectively with larger proprietary and open models, offering a cost-efficient alternative.

- MTRAG (Multi-turn Retrieval-Augmented Generation): Granite 4.0 shows improved reliability in complex, multi-turn retrieval workflows, which matters for enterprise applications.

Where to Access Granite 4.0

Granite 4.0 is available through IBM’s watsonx.ai platform and is distributed through multiple channels, including Dell Pro AI Studio/Enterprise Hub, Docker Hub, Hugging Face, Kaggle, LM Studio, NVIDIA NIM, Ollama, OPAQUE, and Replicate. IBM is also working to enable support for the hybrid models in serving frameworks such as vLLM, llama.cpp, NexaML, and MLX to facilitate broader adoption.

Analysis and Implications for Enterprise AI Deployment

The hybrid Mamba-2/Transformer architecture, combined with an MoE design that activates only a subset of parameters per token, offers a pragmatic way to reduce the total cost of ownership (TCO) of large language models. The memory savings and improved long-context throughput that IBM reports would allow organizations to run smaller GPU clusters without sacrificing accuracy in instruction-following or tool-use tasks. BF16 checkpoints with GGUF conversions simplify local testing and deployment pipelines. The ISO/IEC 42001 certification and cryptographically signed model artifacts address provenance and compliance concerns that often slow enterprise AI adoption. Overall, Granite 4.0 is positioned as an auditable, production-ready model family with active parameter counts ranging from 1 billion to 9 billion, which should make it easier to deploy and operate than earlier 8-billion parameter Transformer models.