IBM has steadily expanded its presence in open-source AI, and its latest model releases show why the company remains a key player. IBM recently released two embedding models, granite-embedding-english-r2 and granite-embedding-small-english-r2, built for efficient retrieval and retrieval-augmented generation (RAG) applications. Both models are compact and resource-conscious, and both ship under the Apache 2.0 license, which permits use in commercial environments.

Introducing IBM’s Latest Embedding Models

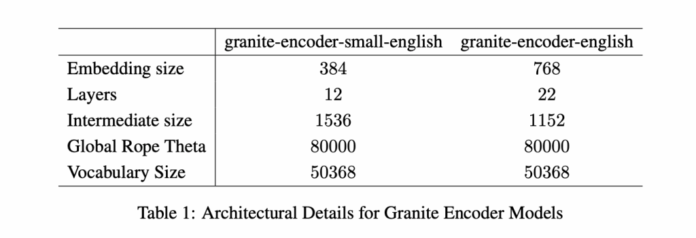

IBM’s two-model release targets different compute budgets. The larger granite-embedding-english-r2 has 149 million parameters and produces 768-dimensional embeddings using a 22-layer ModernBERT architecture. Its smaller sibling, granite-embedding-small-english-r2, has 47 million parameters and an embedding dimension of 384, built on a 12-layer ModernBERT encoder.
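
The embedding dimension directly determines how much space a vector index needs. As a back-of-the-envelope sketch, assuming uncompressed float32 storage (4 bytes per value) and a hypothetical corpus of one million vectors, neither of which comes from IBM's release:

```python
BYTES_PER_FLOAT32 = 4  # assumption: vectors are stored uncompressed as float32


def index_size_bytes(num_vectors: int, dim: int) -> int:
    """Raw size of a flat vector index, ignoring metadata and index overhead."""
    return num_vectors * dim * BYTES_PER_FLOAT32


NUM_VECTORS = 1_000_000  # hypothetical corpus size, chosen for illustration

for name, dim in [
    ("granite-embedding-english-r2", 768),
    ("granite-embedding-small-english-r2", 384),
]:
    gib = index_size_bytes(NUM_VECTORS, dim) / 2**30
    print(f"{name}: {gib:.2f} GiB")
```

Under these assumptions the larger model needs roughly 2.9 GiB of raw vector storage and the smaller one about half that, which is one reason the 384-dimensional model can be attractive for memory-constrained deployments.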

Both models support a maximum context length of 8192 tokens, up from the 512-token limit of the first-generation Granite embedding models. The longer context window is particularly useful in enterprise settings that involve long documents and complex retrieval tasks.
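
Documents longer than the context window still have to be split before embedding. A minimal sketch of overlapping windows follows, assuming the text has already been tokenized into a list of token IDs. The function name and the 256-token overlap are illustrative choices, not part of IBM's tooling, and the sketch ignores any special tokens a real tokenizer adds.

```python
from typing import List

MAX_TOKENS = 8192  # maximum context length stated for both models


def split_into_windows(
    token_ids: List[int],
    max_tokens: int = MAX_TOKENS,
    overlap: int = 256,  # hypothetical overlap between neighboring windows
) -> List[List[int]]:
    """Split a token sequence into windows of at most max_tokens tokens."""
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")
    if len(token_ids) <= max_tokens:
        return [list(token_ids)]

    stride = max_tokens - overlap
    windows = []
    for start in range(0, len(token_ids), stride):
        windows.append(token_ids[start:start + max_tokens])
        if start + max_tokens >= len(token_ids):
            break  # this window already reaches the end of the document
    return windows


# Example: a 20,000-token document (token IDs are placeholders).
doc_windows = split_into_windows(list(range(20_000)))
print(len(doc_windows), [len(w) for w in doc_windows])
```

With an 8192-token window, many documents fit in a single pass and never reach the splitting branch at all.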

Architectural Innovations Behind the Granite R2 Models

At the core of both models is the ModernBERT architecture, which brings several enhancements over earlier BERT-style encoders:

- Alternating global and local attention mechanisms to optimize the balance between computational efficiency and capturing long-range dependencies.

- Rotary positional embeddings (RoPE) fine-tuned for positional interpolation, enabling the models to handle extended context lengths effectively.

- FlashAttention 2, which improves memory efficiency and speeds up inference.
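
The alternating attention pattern in the first bullet can be illustrated with a small mask builder. This is a sketch under stated assumptions: the ratio of one global layer in every three and the 128-token local window are not given in this article and are treated here as illustrative defaults, and the function names are hypothetical.

```python
from typing import List

GLOBAL_EVERY = 3    # assumption: one global-attention layer every three layers
LOCAL_WINDOW = 128  # assumption: total width of the local sliding window


def is_global_layer(layer_idx: int, global_every: int = GLOBAL_EVERY) -> bool:
    """Layers 0, 3, 6, ... attend globally; the rest attend locally."""
    return layer_idx % global_every == 0


def attention_mask(
    seq_len: int, layer_idx: int, window: int = LOCAL_WINDOW
) -> List[List[bool]]:
    """mask[i][j] is True when token i may attend to token j (bidirectional)."""
    if is_global_layer(layer_idx):
        return [[True] * seq_len for _ in range(seq_len)]
    half = window // 2
    return [[abs(i - j) <= half for j in range(seq_len)] for i in range(seq_len)]


def attended_pairs(seq_len: int, layer_idx: int, window: int = LOCAL_WINDOW) -> int:
    """Number of (query, key) pairs a layer actually computes attention for."""
    return sum(sum(row) for row in attention_mask(seq_len, layer_idx, window))


print("global layer pairs:", attended_pairs(512, layer_idx=0))
print("local layer pairs: ", attended_pairs(512, layer_idx=1))
```

In a local layer the number of attended pairs grows linearly with sequence length, while in a global layer it grows quadratically. Interleaving the two keeps long-range information flowing without paying the quadratic cost at every layer.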

IBM trained the models with a multi-stage pipeline. The models were first pretrained with masked language modeling on a dataset of more than two trillion tokens drawn from web content, Wikipedia, PubMed, BookCorpus, and internal IBM technical documents. Later stages extended the context window from 1k to 8k tokens, applied contrastive learning with knowledge distillation from Mistral-7B, and fine-tuned the models for specialized domains such as conversational AI, tabular data retrieval, and code search.
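
The article does not spell out the loss functions used in the contrastive and distillation stages. As a generic illustration only, the sketch below shows a standard in-batch contrastive loss (InfoNCE) and a KL-divergence distillation term that pulls a student's similarity distribution toward a teacher's. The temperatures and function names are assumptions, not IBM's recipe.

```python
import math
from typing import List


def softmax(scores: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def info_nce(sim_row: List[float], positive_idx: int, temperature: float = 0.05) -> float:
    """Contrastive loss for one query: negative log-probability of its positive passage.

    sim_row holds the query's similarity to every passage in the batch;
    all passages other than positive_idx act as negatives.
    """
    probs = softmax(sim_row, temperature)
    return -math.log(probs[positive_idx])


def distillation_kl(
    teacher_scores: List[float], student_scores: List[float], temperature: float = 1.0
) -> float:
    """KL(teacher || student) over the same set of candidate passages."""
    p = softmax(teacher_scores, temperature)
    q = softmax(student_scores, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Toy similarities between one query and three passages (passage 0 is the positive).
student = [0.9, 0.1, 0.0]
teacher = [0.8, 0.3, 0.1]
print("contrastive loss:", info_nce(student, positive_idx=0))
print("distillation loss:", distillation_kl(teacher, student))
```

The contrastive term rewards ranking the positive passage above in-batch negatives, while the distillation term transfers the teacher's softer judgments about how relevant each negative is.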

Benchmark Performance and Domain-Specific Strengths

On widely used retrieval benchmarks such as MTEB-v2 and BEIR, the larger granite-embedding-english-r2 consistently outperforms similarly sized models such as BGE Base, E5, and Arctic Embed. The smaller granite-embedding-small-english-r2 delivers accuracy close to that of models two to three times its size, which makes it a strong choice for latency-sensitive applications.
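
Retrieval benchmarks of this kind are usually scored with ranking metrics; BEIR, for example, reports nDCG@10 as its headline number. The sketch below shows how that metric is computed for a single query, assuming linear gain and a hypothetical `qrels` mapping from document ID to relevance grade.

```python
import math
from typing import Dict, List


def dcg(relevances: List[float]) -> float:
    """Discounted cumulative gain with linear gain and a log2 rank discount."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances))


def ndcg_at_k(ranked_doc_ids: List[str], qrels: Dict[str, float], k: int = 10) -> float:
    """nDCG@k for one query; documents missing from qrels count as non-relevant."""
    gains = [qrels.get(doc_id, 0.0) for doc_id in ranked_doc_ids[:k]]
    ideal = sorted(qrels.values(), reverse=True)[:k]
    ideal_dcg = dcg(ideal)
    return dcg(gains) / ideal_dcg if ideal_dcg > 0 else 0.0


# Hypothetical judgments: documents "a" and "b" are relevant to the query.
qrels = {"a": 1.0, "b": 1.0}
print(ndcg_at_k(["a", "b", "c"], qrels))  # relevant documents ranked first
print(ndcg_at_k(["c", "a", "b"], qrels))  # a non-relevant document ranked first
```

A benchmark score is the mean of this value over all queries, so small differences between models reflect how often relevant passages land near the top of the ranking.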

These models also excel in niche retrieval tasks, including:

- Long-document retrieval (e.g., MLDR, LongEmbed), where the extended 8k token context is crucial.

- Table-based retrieval challenges (such as OTT-QA, FinQA, OpenWikiTables) that demand structured reasoning capabilities.

- Code retrieval scenarios (CoIR), effectively managing both text-to-code and code-to-text queries.

Efficiency and Scalability for Real-World Applications

One of the standout features of the Granite R2 models is their efficiency. On an NVIDIA H100 GPU, granite-embedding-small-english-r2 processes nearly 200 documents per second, significantly faster than competitors such as BGE Small and E5 Small. The larger granite-embedding-english-r2 reaches 144 documents per second, outperforming many other ModernBERT-based models.
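
To put these throughput figures in perspective, the arithmetic below estimates how long it would take to embed a corpus on a single GPU. It assumes the quoted rates hold steadily, treats "nearly 200" as 200, and uses a hypothetical corpus of one million documents. Real throughput varies with document length and batch size.

```python
def embedding_time_minutes(num_docs: int, docs_per_second: float) -> float:
    """Wall-clock minutes to embed num_docs at a constant throughput."""
    return num_docs / docs_per_second / 60


CORPUS_SIZE = 1_000_000  # hypothetical corpus size

for name, rate in [
    ("granite-embedding-small-english-r2", 200),  # "nearly 200" docs/s, rounded up
    ("granite-embedding-english-r2", 144),
]:
    minutes = embedding_time_minutes(CORPUS_SIZE, rate)
    print(f"{name}: about {minutes:.0f} minutes")
```

Under these assumptions the small model finishes in roughly 83 minutes and the larger one in roughly 116 minutes, a gap that grows proportionally with corpus size and matters for workloads that re-index frequently.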

These models also remain practical on CPU-only setups, so organizations can deploy them without relying heavily on GPUs. This combination of speed, compactness, and retrieval accuracy makes them suitable for a wide range of production environments.

Implications for Retrieval Systems and Knowledge Management

IBM’s Granite Embedding R2 series illustrates that high-quality embedding models do not necessarily require enormous parameter counts to deliver exceptional results. By integrating extended context handling, top-tier benchmark accuracy, and rapid processing within a compact design, these models offer a compelling solution for enterprises developing retrieval pipelines, knowledge bases, or RAG workflows. Granite R2 stands out as a robust, commercially viable alternative to existing open-source embedding models.
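
The core step of such a retrieval pipeline is ranking documents by the cosine similarity between their embeddings and the query embedding. The sketch below shows that step in isolation. Note that `toy_embed` is a hypothetical stand-in (a hashed bag of words), not a Granite model; in a real pipeline it would be replaced by a call to the embedding model.

```python
import math
import zlib
from typing import List, Tuple

DIM = 384  # matches the small model's embedding size; the embedder below is NOT Granite


def toy_embed(text: str, dim: int = DIM) -> List[float]:
    """Hypothetical stand-in embedder: hashed bag of words, L2-normalized."""
    vec = [0.0] * dim
    for token in text.lower().split():
        vec[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def top_k(query: str, docs: List[str], k: int = 3) -> List[Tuple[float, str]]:
    """Return the k documents with the highest cosine similarity to the query."""
    q = toy_embed(query)
    # Vectors are unit length, so the dot product equals the cosine similarity.
    scored = [(sum(a * b for a, b in zip(q, toy_embed(d))), d) for d in docs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


docs = [
    "granite embedding models support long documents",
    "the weather in paris is mild in spring",
    "retrieval augmented generation combines search and generation",
]
results = top_k("long documents embedding", docs, k=2)
for score, doc in results:
    print(f"{score:.3f}  {doc}")
```

In a RAG workflow, the top-ranked passages would then be passed to a generator model as context. At production scale the linear scan would typically be replaced by an approximate nearest-neighbor index.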

Conclusion: A Balanced Solution for Modern Retrieval Needs

In summary, IBM’s Granite Embedding R2 models combine a compact architecture, long-context support, and strong retrieval performance. They are optimized for both GPU and CPU environments and distributed under the Apache 2.0 license, which permits commercial use and simplifies integration. For organizations seeking efficient, scalable, production-ready embedding models for RAG, search, or large-scale knowledge management, Granite R2 is an attractive option.